Thank you for posting your question on the Microsoft Q&A platform.

As I understand it, you are trying to write data from a dataframe to a lake database other than the default one.

You can specify the target database explicitly by passing the fully qualified table name (database name, a dot, then the table name) to the saveAsTable function.

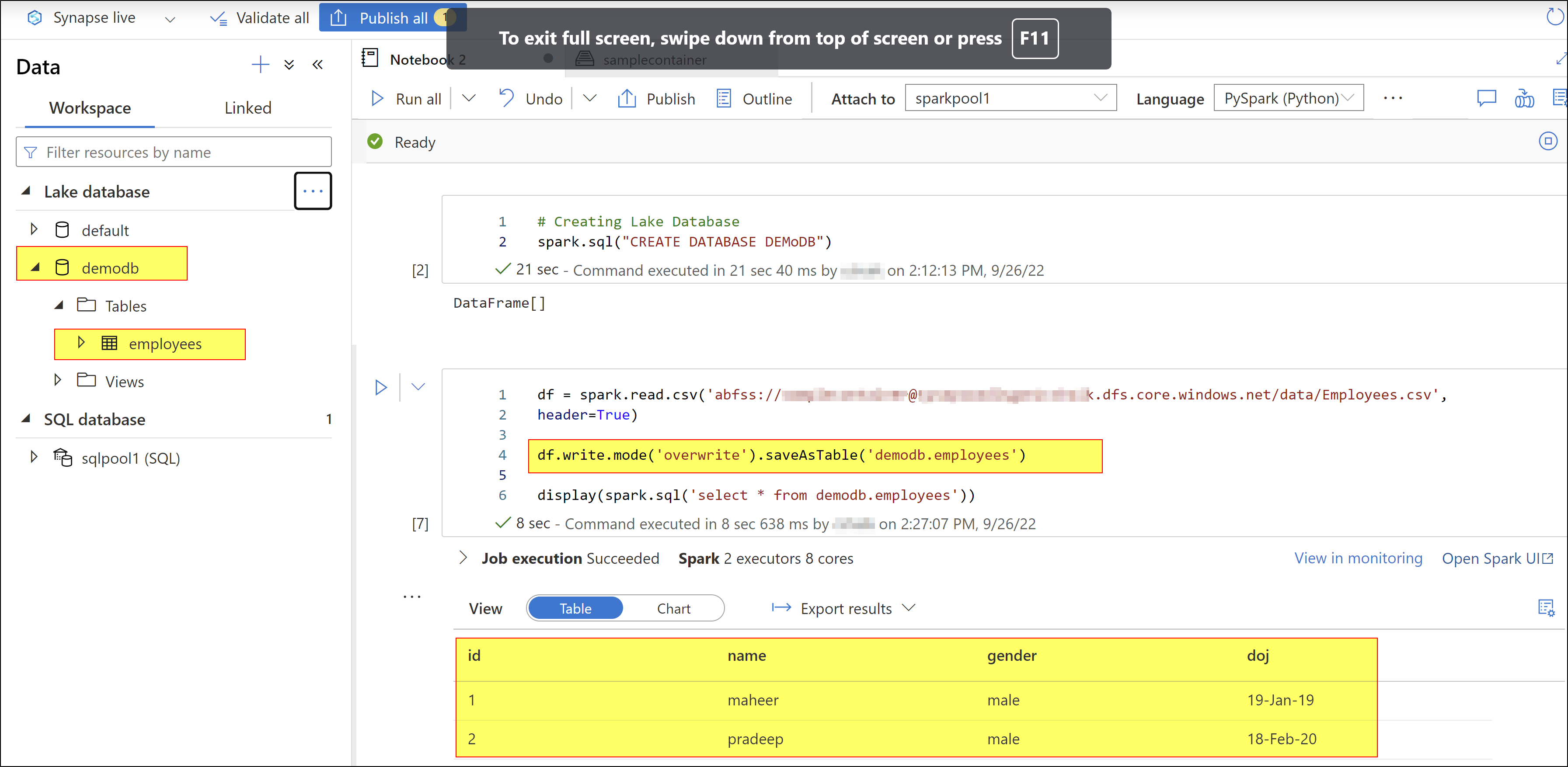

I tried to reproduce your requirement. First, I created a new database named 'DemoDB'.

Next, I read a .csv file from my ADLS account into a dataframe using the code df = spark.read.csv('<filepath>')

Then, I loaded the dataframe content into a table in the DemoDB database using the following code:

df.write.mode('overwrite').saveAsTable('demoDB.employees')
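
The same call can be wrapped in a small helper if you write to several databases. This is only a sketch: the helper names `qualified_table_name` and `write_to_lake_database` are hypothetical (they are not part of Spark or Synapse), and it assumes `df` is a Spark dataframe and that the target database already exists.

```python
def qualified_table_name(database: str, table: str) -> str:
    """Build the '<database>.<table>' name passed to saveAsTable."""
    return f"{database}.{table}"


def write_to_lake_database(df, database: str, table: str, mode: str = "overwrite") -> str:
    """Save df as a table in the given database and return the name used.

    Hypothetical helper: it only wraps df.write.mode(...).saveAsTable(...).
    """
    full_name = qualified_table_name(database, table)
    # Equivalent to: df.write.mode('overwrite').saveAsTable('DemoDB.employees')
    df.write.mode(mode).saveAsTable(full_name)
    return full_name
```

In a Synapse notebook, where the `spark` session is already available, it could be used as `write_to_lake_database(df, "DemoDB", "employees")` after loading `df` with `spark.read.csv('<filepath>')`.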

For more details, see the following video: Analyze data with Serverless Spark Pool in Azure Synapse Analytics

I hope this helps. Please let us know if you have any further queries.
