API Limits and Performance of the Language Service

I'm trying to use Conversational Language Understanding (CLU) from the Cognitive Language Service in a high-performance use case that requires about 50 calls per second (TPS) to this service.

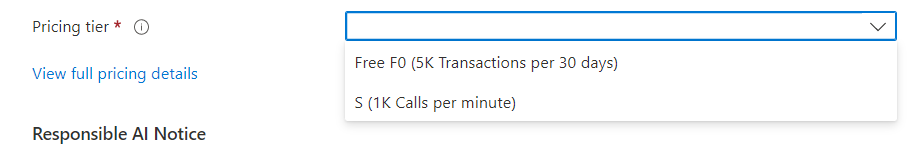

The documentation lists a limit of 1000 transactions per minute (TPM) for the prediction service. That works out to about 16 TPS, far below what I need. With LUIS, I could pair multiple prediction resources with one authoring resource to scale further. LUIS also supported containerized prediction deployments, which reached ~40 TPS on a machine with 1 core and 4 GB RAM.
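The arithmetic behind these numbers can be sketched in Python. The sketch assumes (not confirmed by the documentation quoted here) that the 1000 TPM quota applies per resource, so load could be split across several CLU resources the way it was with LUIS prediction resources. `tpm_to_tps` and `resources_needed` are hypothetical helpers for illustration only.

```python
import math


def tpm_to_tps(tpm: float) -> float:
    """Convert a per-minute transaction quota to transactions per second."""
    return tpm / 60.0


def resources_needed(target_tps: float, limit_tpm_per_resource: float) -> int:
    """Minimum number of resources needed to sustain target_tps.

    Assumption: the quota is enforced per resource, so traffic can be
    spread evenly across N resources.
    """
    return math.ceil(target_tps * 60.0 / limit_tpm_per_resource)


limit_tps = tpm_to_tps(1000)
print(f"{limit_tps:.2f} TPS per resource")  # prints "16.67 TPS per resource"
print(resources_needed(50, 1000), "resources for 50 TPS")  # prints "3 resources for 50 TPS"
```

Under that assumption, 50 TPS is 3000 transactions per minute, so at least three resources would be needed.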

Currently I'm seeing an average latency of ~200 ms at ~16 TPS, with the CLU resource and the consumer in the same region. How can I scale this setup?
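Latency and throughput can be related with Little's law (requests in flight = throughput × average latency). The sketch below uses a hypothetical `required_concurrency` helper to estimate how many concurrent calls a client would need to keep open. It deliberately ignores the service quota, which would still apply regardless of how many requests the client sends in parallel.

```python
import math


def required_concurrency(target_tps: float, avg_latency_s: float) -> int:
    """Estimate concurrent in-flight requests needed via Little's law (L = lambda * W).

    The product is rounded before ceil() so floating-point noise
    (e.g. 10.000000000000002) does not bump the result up by one.
    """
    return math.ceil(round(target_tps * avg_latency_s, 9))


print(required_concurrency(16, 0.2))  # current load: ~3.2 in flight, so 4
print(required_concurrency(50, 0.2))  # target load: 10
```

At ~200 ms per call, a client issuing requests one at a time tops out around 5 TPS, so reaching 50 TPS would need roughly 10 requests in flight in addition to a quota that permits that rate.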