Hello @Sergey Shabalov,

Thanks for the question and for using the MS Q&A platform.

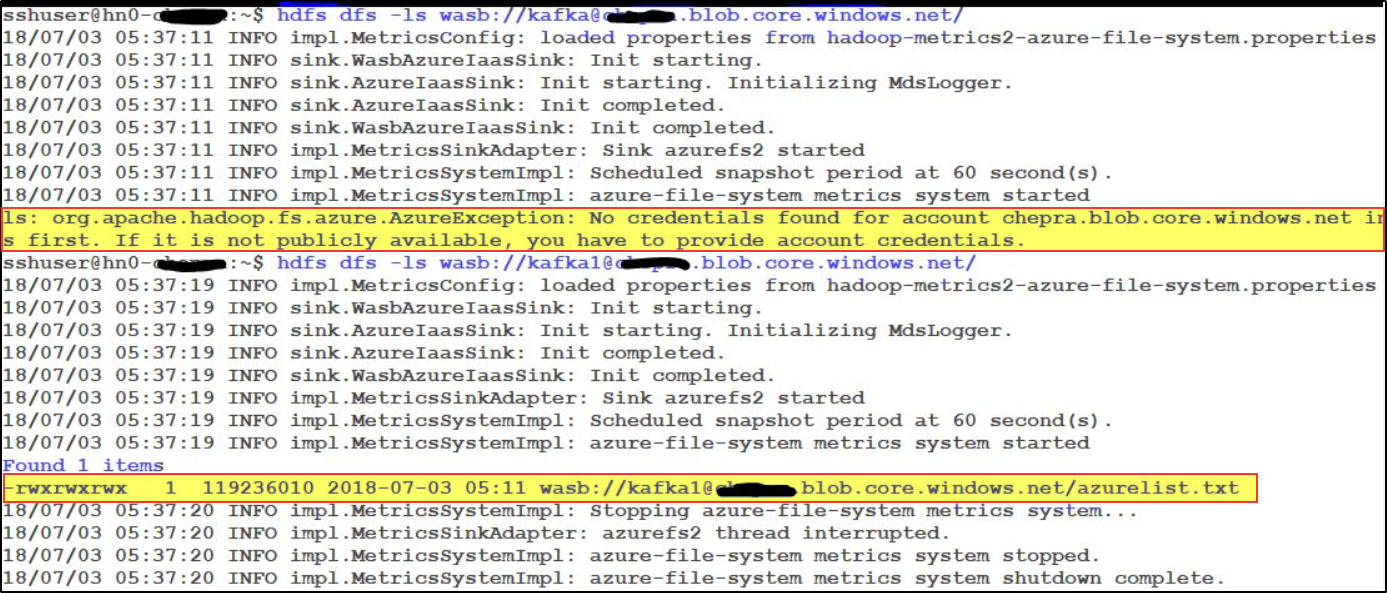

This is expected behavior when a container's access level is set to Private and you access the Azure Storage account from Hadoop.

Note: For private containers in storage accounts that are NOT connected to a cluster, you can't access the blobs in the containers unless you define the storage account in the Hadoop configuration, i.e., the core-site.xml file.
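As a rough illustration of what "defining the storage account" means, here is a minimal Python sketch that renders the kind of `<property>` entry that goes into core-site.xml, plus the URI used to address the container. The property name `fs.azure.account.key.<account>.blob.core.windows.net` follows the Hadoop Azure Support documentation; the helper names, `mystorageaccount`, `privatecontainer`, and the key value are placeholders I made up for the example, not values from your environment.

```python
import xml.etree.ElementTree as ET


def account_key_property(account: str, access_key: str) -> str:
    """Render a core-site.xml <property> element holding a storage account key."""
    prop = ET.Element("property")
    name = ET.SubElement(prop, "name")
    # Property name as described in "Hadoop Azure Support: Azure Blob Storage".
    name.text = f"fs.azure.account.key.{account}.blob.core.windows.net"
    value = ET.SubElement(prop, "value")
    value.text = access_key
    return ET.tostring(prop, encoding="unicode")


def wasbs_uri(container: str, account: str, path: str = "") -> str:
    """Build the wasbs:// URI Hadoop uses to address a path in a container."""
    return f"wasbs://{container}@{account}.blob.core.windows.net/{path.lstrip('/')}"


if __name__ == "__main__":
    # Placeholder account, container, and key: replace with your own values.
    print(account_key_property("mystorageaccount", "YOUR_ACCESS_KEY"))
    print(wasbs_uri("privatecontainer", "mystorageaccount"))
```

The printed `<property>` element would be pasted inside the `<configuration>` element of core-site.xml; in practice you may prefer to keep the key out of plain text, which this sketch does not cover.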

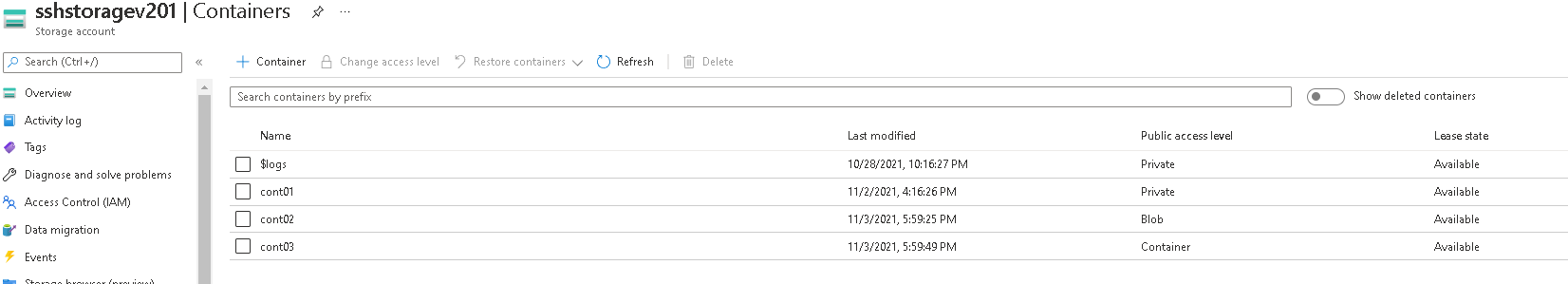

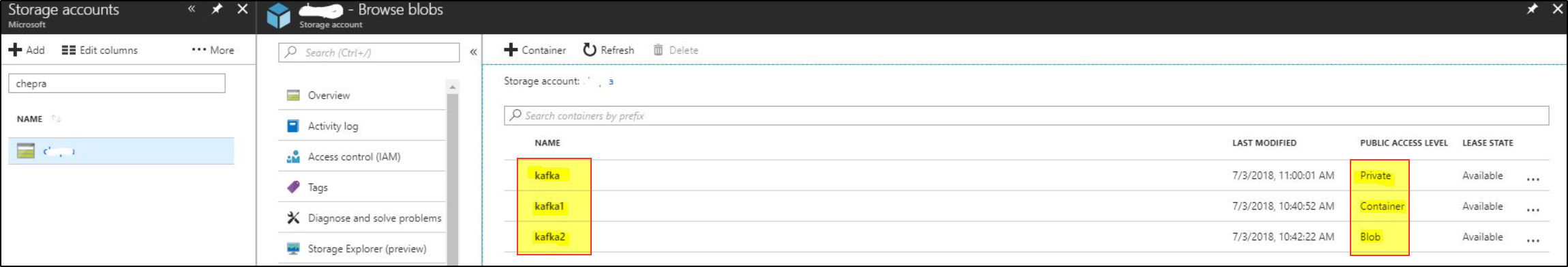

To illustrate, I created three containers, one for each public access level (Private, Blob, and Container).

If you access these containers from HDInsight, you get the same error message for the Private and Blob public access levels, and the desired output for the Container public access level.
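One way to read this result, assuming the command you ran was a listing (for example `hadoop fs -ls` on the container root), is that only the Container level permits anonymous listing. The small Python sketch below encodes the anonymous capabilities of each public access level as I understand Azure Blob Storage semantics; the table and function names are illustrative only.

```python
# Anonymous (no account key) capabilities for each public access level.
ANONYMOUS_ACCESS = {
    "private": {"read_blob": False, "list_container": False},
    "blob": {"read_blob": True, "list_container": False},
    "container": {"read_blob": True, "list_container": True},
}


def can_list_without_key(access_level: str) -> bool:
    """Return True if a container can be listed without the account key."""
    return ANONYMOUS_ACCESS[access_level.lower()]["list_container"]


if __name__ == "__main__":
    for level in ("Private", "Blob", "Container"):
        outcome = "listing works" if can_list_without_key(level) else "error"
        print(f"{level}: {outcome}")
```

Under that assumption, Private and Blob both fail on a listing because neither allows anonymous enumeration of the container, even though Blob would still allow reading an individual blob by its full name.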

For more details, refer to “HDInsight Storage architecture” and “Hadoop Azure Support: Azure Blob Storage”.

Hope this helps. Please let us know if you have any further queries.
