@Rajamannar A K Thanks for the question. Could you please add more details about the trained model and the deployment you are attempting?

Here is a link to an example of how to deploy and use an object detection model in Azure ML with the MLflow option.

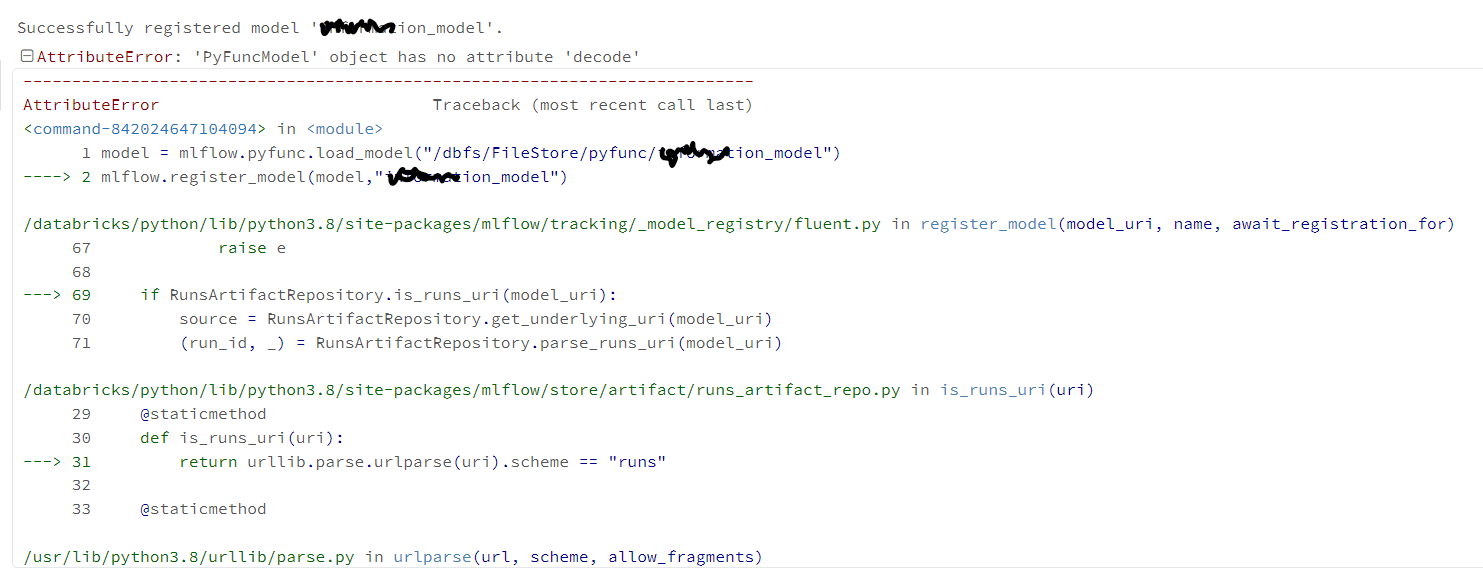

The process is to package the model with MLflow using a custom Python flavor (`mlflow.pyfunc`), then deploy it to Azure ML using the Azure version of MLflow.

A template is available here:

- Main deployment script to AML: aml_mlflow_deployment/mlflow_aml_deployment.ipynb at main · james-tn/aml_mlflow_deployment (github.com)

- Custom mlflow.pyfunc module: aml_mlflow_deployment/model_loader.py at main · james-tn/aml_mlflow_deployment (github.com)

There's also the option of using Azure DevOps to pull the model out of the MLflow model registry in Azure Databricks, containerize it, and deploy it directly to ACI/AKS for API scoring outside of Azure Databricks.