Hi @AMJ,

Thank you for posting your query on the Microsoft Q&A platform.

If possible, try applying your replace logic to the API response itself before storing it as a CSV file. That will save a lot of effort.
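As a rough illustration of "replace first, then store", here is a minimal Python sketch. The function names, the sample text, and the `"NULL"` to empty-string replacement are all hypothetical, since the actual replace rule depends on your data. It assumes the response body is already available as a string.

```python
def clean_response(raw_text: str, old: str, new: str) -> str:
    """Apply the replace logic to the raw response text (hypothetical helper)."""
    return raw_text.replace(old, new)


def save_as_csv(text: str, path: str) -> None:
    """Write the already-cleaned text to disk unchanged."""
    # newline="" stops Python from translating the line endings in the text.
    with open(path, "w", encoding="utf-8", newline="") as handle:
        handle.write(text)


if __name__ == "__main__":
    # Made-up sample standing in for the API response body.
    response_body = "id,name\n1,NULL\n2,Ann\n"
    cleaned = clean_response(response_body, "NULL", "")
    print(cleaned)
    # save_as_csv(cleaned, "output.csv")  # path is an assumption
```

Because the text is fixed before it is written, nothing downstream has to parse and repair the file again.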

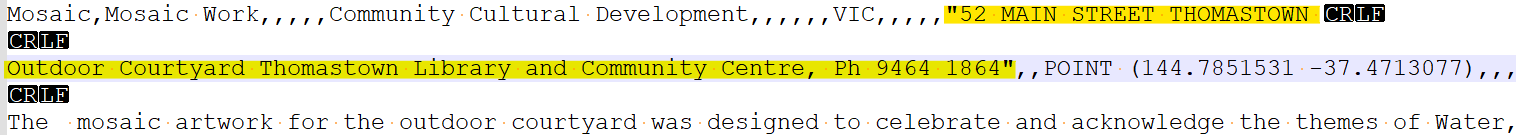

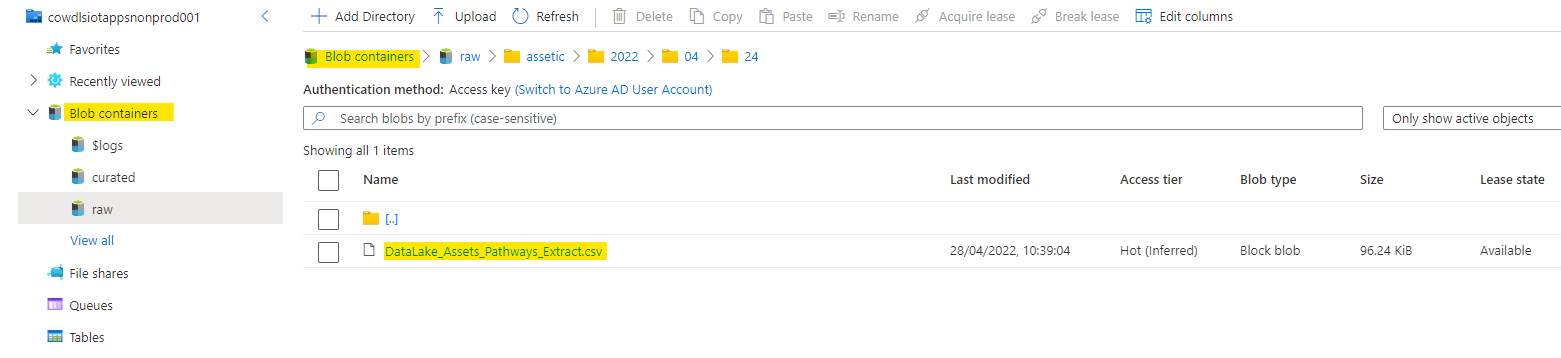

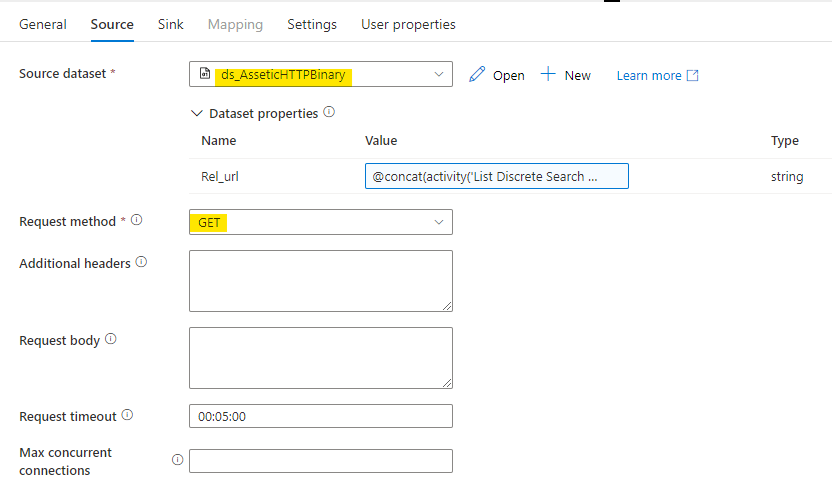

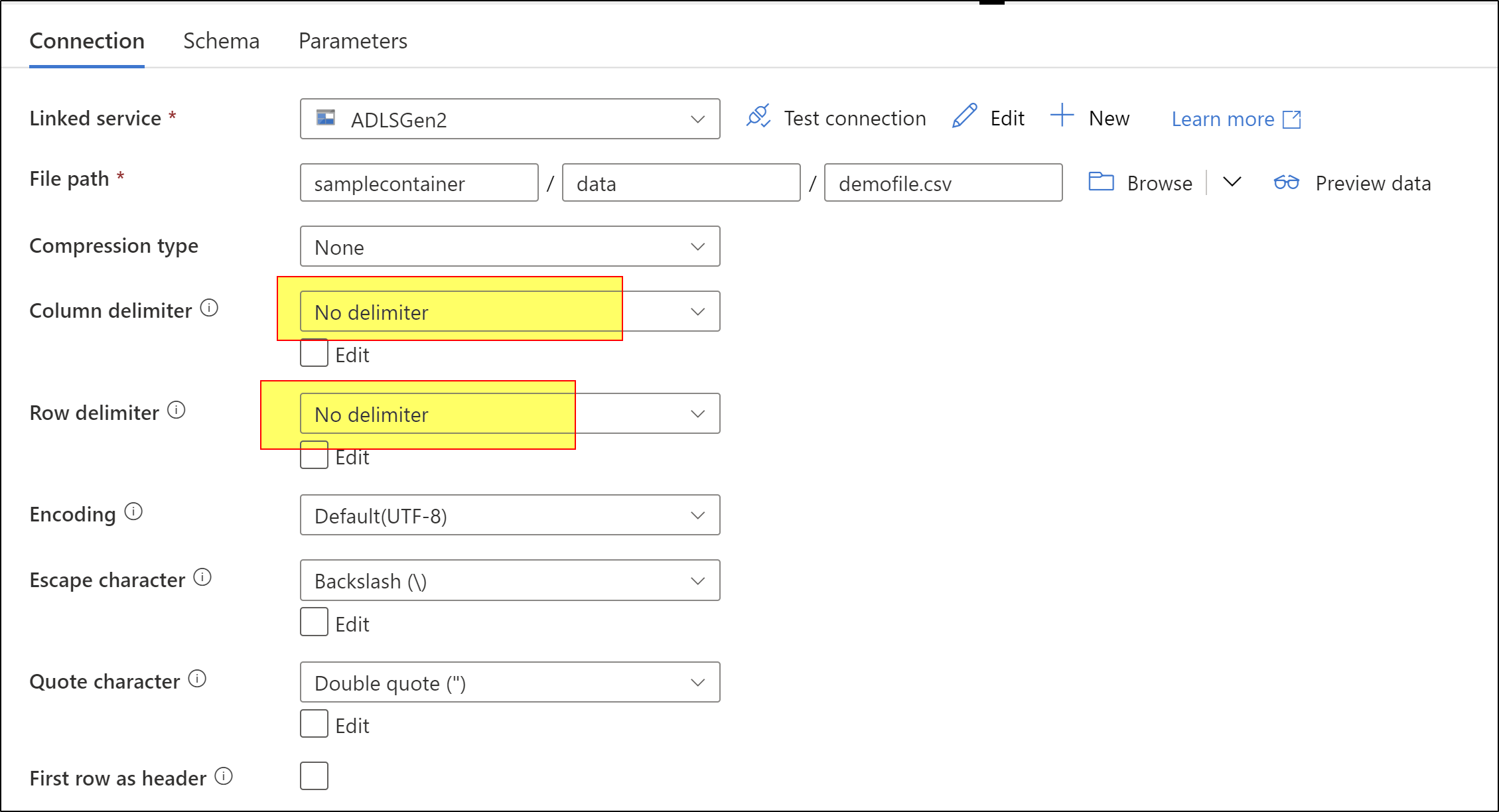

If you would rather apply the logic to the CSV file after loading the data from the API, consider using data flows. First, read the entire file as a single column. For that, configure your dataset settings as shown below.

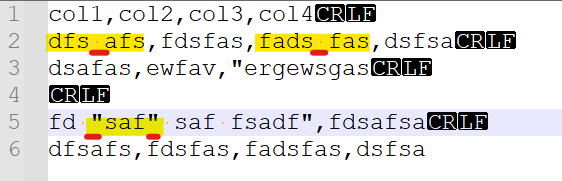

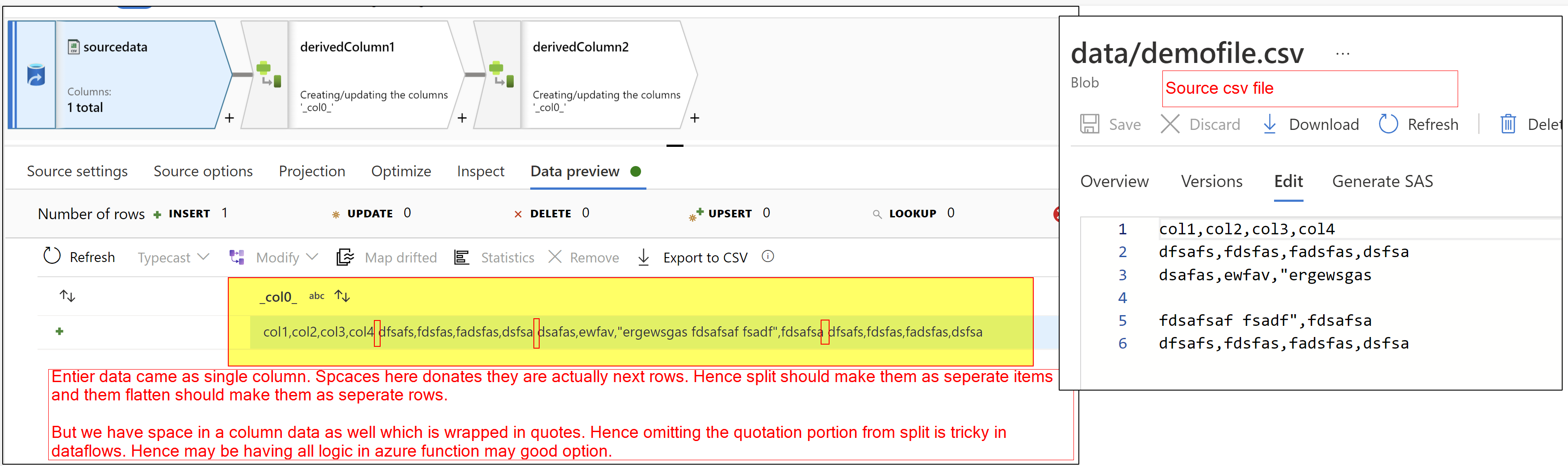

Once the entire file is in a single column, apply your replace logic using a derived column. Then break that single-column value into multiple items using the split() function on the space character. Note that if any column contains a space in its data, that value will be split as well, so the split should skip any data that is wrapped in quotes.

Once the data has been split into an array, flatten it into multiple rows and write it out as a file. Then use this newly created file as the source for further processing.
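To make the split-then-flatten idea concrete outside of data flows, here is a small Python sketch of the same steps. It is not data flow expression syntax. The function names and sample data are hypothetical, and it assumes quotes are double quotes and always balanced.

```python
import re
from typing import List

# A token is a run of non-space characters in which any "quoted section"
# is kept whole, so spaces inside quotes do not end the token.
_TOKEN = re.compile(r'(?:"[^"]*"|[^\s"])+')


def split_outside_quotes(text: str) -> List[str]:
    """Split on whitespace, ignoring whitespace inside double quotes."""
    return _TOKEN.findall(text)


def flatten_to_rows(items: List[str]) -> str:
    """Turn the array of items into one row per line."""
    return "\n".join(items) + "\n"


if __name__ == "__main__":
    # Made-up single-column value holding two space-separated records.
    single_column = '1,"John Smith",NY 2,"Ann Lee",CA'
    records = split_outside_quotes(single_column)
    print(flatten_to_rows(records))
```

A plain `str.split(" ")` on the same input would cut `"John Smith"` in two, which is the problem described above.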

All of the logic above may be difficult to implement, and data flows are sometimes not flexible enough for it. So the best approach is to write custom code in Azure Functions that reads your file data and does the replace() accordingly.
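The core of such custom code could look like the sketch below. It is plain Python only: the Azure Functions trigger and storage bindings are deliberately left out, and the function name, sample data, and `"NULL"` replacement are hypothetical. It assumes the source and destination are available as text streams and that the text to replace never spans a line break.

```python
import io
from typing import TextIO


def replace_in_stream(src: TextIO, dst: TextIO, old: str, new: str) -> int:
    """Copy src to dst line by line, replacing old with new.

    Returns the number of lines that were changed.
    """
    changed = 0
    for line in src:
        fixed = line.replace(old, new)
        if fixed != line:
            changed += 1
        dst.write(fixed)
    return changed


if __name__ == "__main__":
    # In-memory streams stand in for the real file or blob content.
    source = io.StringIO("id,name\n1,NULL\n2,Ann\n")
    target = io.StringIO()
    count = replace_in_stream(source, target, "NULL", "")
    print(count)
    print(target.getvalue())
```

Processing line by line keeps memory use low for large files. A function handler would only need to open the input and output streams and call this helper.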

Hope this helps. Please let us know how it goes. Thank you.

--------

Please consider hitting Accept Answer. Accepted answers help the community as well.