Using Microsoft Azure Video Indexer

This post walks a developer from having an Azure subscription to processing video with the Azure Video Indexer (VI) REST API. At the time of this writing, VI is in preview. Find VI by going to https://azure.com, then Products, AI + Machine Learning, Cognitive Services, Vision, and finally Video Indexer. Or just search for Azure Video Indexer on Bing. This page is a great place to start with the overview material.

Let's dive in! Follow the guidance on this page to get signed up for the preview and process your first video using the VI portal. Then continue through this page to get set up with the API. Once you have your subscription key (step 2), you're ready to go. Here's the page with a list of the REST API methods that we'll be using.

Start by uploading a video file to a publicly accessible network address.

Most use cases will start with uploading a video. To do that, first place the video file at a publicly accessible network address, such as Azure Blob Storage. My storage account has a public container in it. I upload my video files to it (as block blobs), and the Upload API can then read them as expected. There are several tools that you can use to manage (upload, download, delete, etc.) your storage account files. I like this one: Microsoft Azure Storage Explorer.
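If you'd rather script this check than eyeball it, here is a minimal Python sketch (standard library only) that builds the public address of a blob and confirms it can be read without credentials. The helper names `blob_url` and `is_publicly_readable` are my own and not part of any Azure SDK, and the check assumes the container allows anonymous read access.

```python
import urllib.error
import urllib.parse
import urllib.request


def blob_url(account: str, container: str, blob_name: str) -> str:
    """Build the public HTTPS address of a blob in Azure Blob Storage."""
    # Percent-encode the blob name but keep "/" so virtual folders survive.
    quoted = urllib.parse.quote(blob_name, safe="/")
    return f"https://{account}.blob.core.windows.net/{container}/{quoted}"


def is_publicly_readable(url: str, timeout_s: float = 10.0) -> bool:
    """Return True if an anonymous HEAD request to the URL succeeds."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout_s) as response:
            return response.status == 200
    except urllib.error.URLError:
        # Covers HTTP errors (403, 404, ...) as well as network failures.
        return False
```

For example, `is_publicly_readable(blob_url("golivearmstorage", "public", "GregFrontWithAudio.MOV"))` should come back `True` before you hand that URL to VI. If it doesn't, VI most likely won't be able to fetch the file either.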

Call the VI Upload API to process the video file.

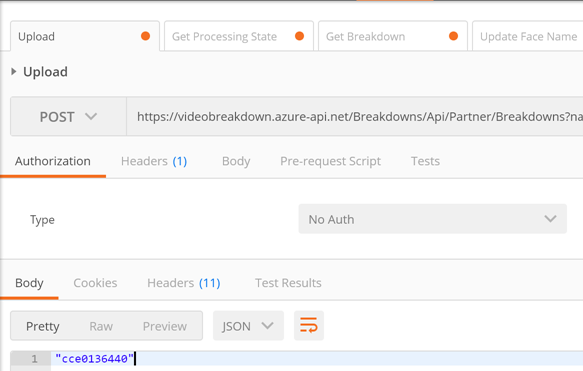

For my testing purposes I use Postman. The Upload API is a POST, and here's the address that I used to upload my video file:

POST https://videobreakdown.azure-api.net/Breakdowns/Api/Partner/Breakdowns

?name=GregFrontFacialWithAudio

&privacy=Private

&videoUrl=https%3A%2F%2Fgolivearmstorage.blob.core.windows.net%2Fpublic%2FGregFrontWithAudio.MOV

(Line breaks added for readability.)

One header key is required: Ocp-Apim-Subscription-Key. The value to provide is your subscription key noted above.

The service responds with a reference code such as "cce0136440", as depicted:

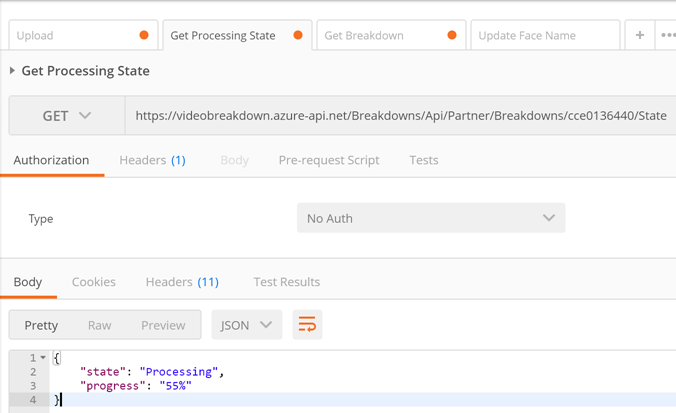
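The same call can be made from code. Below is a minimal Python sketch using only the standard library. It builds the request shown above, including the URL-encoded `videoUrl` and the `Ocp-Apim-Subscription-Key` header. The function names are my own, and I'm assuming the response body is the reference code as a JSON-encoded string; adjust the parsing if your response looks different.

```python
import json
import urllib.parse
import urllib.request

API_BASE = "https://videobreakdown.azure-api.net/Breakdowns/Api/Partner/Breakdowns"


def build_upload_request(subscription_key: str, name: str, video_url: str,
                         privacy: str = "Private") -> urllib.request.Request:
    """Build the POST request for the Upload API without sending it."""
    # urlencode percent-encodes the video URL (":" -> %3A, "/" -> %2F).
    query = urllib.parse.urlencode(
        {"name": name, "privacy": privacy, "videoUrl": video_url}
    )
    return urllib.request.Request(
        f"{API_BASE}?{query}",
        data=b"",  # empty body; all parameters travel in the query string
        method="POST",
        headers={"Ocp-Apim-Subscription-Key": subscription_key},
    )


def upload_video(subscription_key: str, name: str, video_url: str) -> str:
    """Send the upload request and return the reference code."""
    request = build_upload_request(subscription_key, name, video_url)
    with urllib.request.urlopen(request) as response:
        # Assumption: the body is a JSON string such as "cce0136440".
        return json.loads(response.read().decode("utf-8"))
```

Keeping the request construction separate from the network call makes it easy to print `build_upload_request(...).full_url` and compare it with what you typed into Postman.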

Call the VI Get Processing State API to learn the status of processing of your video.

Now you can learn the status of the VI ingestion process by calling the Get Processing State API. Use the reference code from the upload call to identify which video upload you want to learn about.

GET https://videobreakdown.azure-api.net/Breakdowns/Api/Partner/Breakdowns/cce0136440/State

As before, you'll need the Ocp-Apim-Subscription-Key header key and value. You'll get back something like this:

You'll know it's done when it says "state": "Processed".

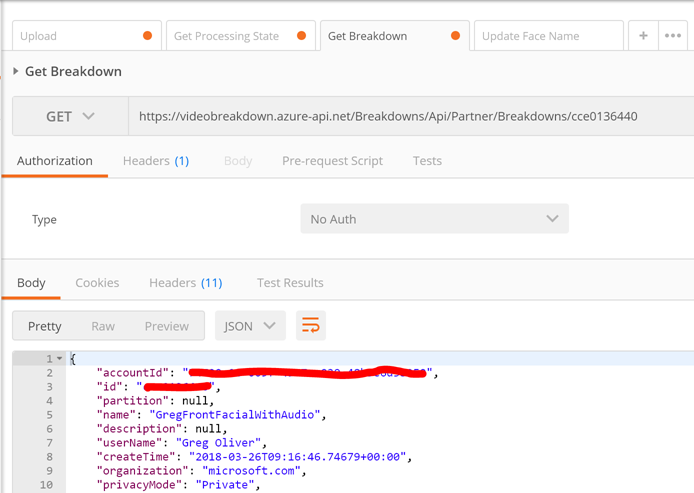
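Since processing takes a while, you'll probably want to poll rather than call once. Here's a rough Python sketch of that loop, standard library only. The polling interval, the retry limit, and the function names are my own choices. The only state value I rely on is "Processed"; any other value is simply treated as "not done yet", so failure states are not handled here.

```python
import json
import time
import urllib.request

API_BASE = "https://videobreakdown.azure-api.net/Breakdowns/Api/Partner/Breakdowns"


def state_url(breakdown_id: str) -> str:
    """Address of the Get Processing State API for one upload."""
    return f"{API_BASE}/{breakdown_id}/State"


def fetch_state(breakdown_id: str, subscription_key: str) -> dict:
    """Call Get Processing State once and return the parsed JSON body."""
    request = urllib.request.Request(
        state_url(breakdown_id),
        headers={"Ocp-Apim-Subscription-Key": subscription_key},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


def wait_until_processed(breakdown_id: str, subscription_key: str,
                         fetch=fetch_state, interval_s: float = 10.0,
                         max_polls: int = 60) -> dict:
    """Poll until the state is "Processed" or the poll limit is reached."""
    for _ in range(max_polls):
        body = fetch(breakdown_id, subscription_key)
        if body.get("state") == "Processed":
            return body
        time.sleep(interval_s)  # wait before asking again
    raise TimeoutError(f"{breakdown_id} not processed after {max_polls} polls")
```

The `fetch` parameter is there so the loop can be exercised with a stand-in function, without touching the network.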

Call the Get Breakdown API to see what VI was able to discern about your video.

To find out what VI was able to see in your video, call the Get Breakdown API. It returns a JSON object with all of the details.

There is a great deal to be discovered by reading the JSON. Many use cases can continue on from this point, such as identifying the faces that are detected by VI the first time it sees them.
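The breakdown JSON is large, so a quick way to get oriented is to list its structure before reading it in full. The Python sketch below does that. Note two assumptions: I'm guessing that the Get Breakdown address is the State address without the trailing `/State` (check the API list to confirm), and `outline` is just a helper of my own that walks any JSON value; it knows nothing about VI's actual schema.

```python
import json
import urllib.request

API_BASE = "https://videobreakdown.azure-api.net/Breakdowns/Api/Partner/Breakdowns"


def breakdown_url(breakdown_id: str) -> str:
    """Assumed address of the Get Breakdown API (not confirmed above)."""
    return f"{API_BASE}/{breakdown_id}"


def fetch_breakdown(breakdown_id: str, subscription_key: str) -> dict:
    """Download the breakdown and return it as parsed JSON."""
    request = urllib.request.Request(
        breakdown_url(breakdown_id),
        headers={"Ocp-Apim-Subscription-Key": subscription_key},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


def outline(node, path: str = "$", max_depth: int = 3) -> list:
    """Return the JSON paths found in node, down to max_depth levels."""
    if max_depth == 0:
        return [path]
    if isinstance(node, dict):
        paths = []
        for key, value in node.items():
            paths.extend(outline(value, f"{path}.{key}", max_depth - 1))
        return paths or [path]
    if isinstance(node, list) and node:
        # Only the first element is inspected; list items usually share a shape.
        return outline(node[0], f"{path}[0]", max_depth - 1)
    return [path]
```

Printing `"\n".join(outline(fetch_breakdown("cce0136440", key)))` gives a short map of the document, which makes it easier to find the section (faces, for instance) that your use case cares about.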

Please provide feedback, especially regarding usage of other VI APIs.

Cheers!