Failover groups overview & best practices - Azure SQL Managed Instance

Applies to:

Azure SQL Managed Instance

The failover groups feature allows you to manage the replication and failover of all user databases in a managed instance to another Azure region. This article provides an overview of the failover group feature with best practices and recommendations for using it with Azure SQL Managed Instance.

To get started using the feature, review Configure a failover group for Azure SQL Managed Instance.

Overview

The failover groups feature allows you to manage the replication and failover of user databases in a managed instance to a managed instance in another Azure region. Failover groups are designed to simplify deployment and management of geo-replicated databases at scale.

For more information, see High availability for Azure SQL Managed Instance. For geo-failover RPO and RTO, see overview of business continuity.

Endpoint redirection

Failover groups provide read-write and read-only listener endpoints that remain unchanged during geo-failovers. You don't have to change the connection string for your application after a geo-failover, because connections are automatically routed to the current primary. A geo-failover switches all secondary databases in the group to the primary role. After geo-failover completes, the DNS record is automatically updated to redirect the endpoints to the new region.

Offload read-only workloads

To reduce traffic to your primary databases, you can also use the secondary databases in a failover group to offload read-only workloads. Use the read-only listener to direct read-only traffic to a readable secondary database.

Recovering an application

To achieve full business continuity, adding regional database redundancy is only part of the solution. Recovering an application (service) end-to-end after a catastrophic failure requires recovery of all components that constitute the service and any dependent services. Examples of these components include the client software (for example, a browser with a custom JavaScript), web front ends, storage, and DNS. It's critical that all components are resilient to the same failures and become available within the recovery time objective (RTO) of your application. Therefore, you need to identify all dependent services and understand the guarantees and capabilities they provide. Then, you must take adequate steps to ensure that your service functions during the failover of the services on which it depends.

Failover policy

Failover groups support two failover policies:

- Customer managed (recommended) - Customers can perform a failover of a group when they notice an unexpected outage impacting one or more databases in the failover group. When using command line tools such as PowerShell, the Azure CLI, or the REST API, the failover policy value for customer managed is manual.

- Microsoft managed - In the event of a widespread outage that impacts a primary region, Microsoft initiates failover of all impacted failover groups that have their failover policy configured to be Microsoft managed. Microsoft managed failover won't be initiated for individual failover groups or a subset of failover groups in a region. When using command line tools such as PowerShell, the Azure CLI, or the REST API, the failover policy value for Microsoft managed is automatic.

Each failover policy has a unique set of use cases and corresponding expectations on the failover scope and data loss, as the following table summarizes:

| Failover policy | Failover scope | Use case | Potential data loss |
|---|---|---|---|
| Customer managed (Recommended) | Failover group(s) | One or more databases in a failover group are impacted by an outage and become unavailable. You can choose to fail over. | Yes |
| Microsoft managed | All failover groups in the region | A widespread outage in a datacenter, availability zone, or region causes unavailability of databases and the Microsoft Azure SQL service team decides to trigger a forced failover. Use this option only when you want to delegate the disaster recovery responsibility to Microsoft and the application is tolerant to an RTO (downtime) of one hour or more. | Yes |

Customer managed

On rare occasions, the built-in availability or high availability isn't enough to mitigate an outage, and your databases in a failover group might be unavailable for a duration that isn't acceptable to the service level agreement (SLA) of the applications using the databases. Databases can be unavailable due to a localized issue impacting just a few databases, or it could be at the datacenter, availability zone, or region level. In any of these cases, to restore business continuity, you can initiate a forced failover.

Setting your failover policy to customer managed is highly recommended, as it keeps you in control of when to initiate a failover and restore business continuity. You can initiate a failover when you notice an unexpected outage impacting one or more databases in the failover group.

Microsoft managed

With a Microsoft managed failover policy, disaster recovery responsibility is delegated to the Azure SQL service. For the Azure SQL service to initiate a forced failover, the following conditions must be met:

- A datacenter, availability zone, or region level outage, caused by a natural disaster event, configuration changes, software bugs, or hardware component failures, impacts many databases in the region.

- The grace period has expired. Because verifying the scale of, and mitigating, the outage depends on human actions, the grace period can't be set below one hour.

When these conditions are met, the Azure SQL service initiates forced failovers for all failover groups in the region that have the failover policy set to Microsoft managed.

Set the failover policy to Microsoft managed only when:

- You want to delegate disaster recovery responsibility to the Azure SQL service.

- The application is tolerant to your database being unavailable for one hour or more.

- It's acceptable to trigger forced failovers some time after the grace period expires as the actual time for the forced failover can vary significantly.

- It's acceptable that all databases within the failover group fail over, regardless of their zone redundancy configuration or availability status. Although databases configured for zone redundancy are resilient to zonal failures and might not be impacted by an outage, they'll still be failed over if they're part of a failover group with a Microsoft managed failover policy.

- It's acceptable to have forced failovers of databases in the failover group without taking into consideration the application's dependency on other Azure services or components used by the application, which can cause performance degradation or unavailability of the application.

- It's acceptable to incur an unknown amount of data loss, as the exact time of forced failover can't be controlled, and ignores the synchronization status of the secondary databases.

- All the primary and secondary database(s) in the failover group and any geo-replication relationships have the same service tier, compute tier (provisioned or serverless), and compute size (DTUs or vCores). If the service level objective (SLO) of all the databases doesn't match, the Azure SQL service eventually updates the failover policy from Microsoft managed to customer managed.

When a failover is triggered by Microsoft, an entry for the operation name Failover Azure SQL failover group is added to the Azure Monitor activity log. The entry includes the name of the failover group under Resource, and Event initiated by displays a single hyphen (-) to indicate the failover was initiated by Microsoft. This information can also be found on the Activity log page of the new primary server or instance in the Azure portal.

Terminology and capabilities

Failover group (FOG)

A failover group allows for all user databases within a managed instance to fail over as a unit to another Azure region in case the primary managed instance becomes unavailable due to a primary region outage. Since failover groups for SQL Managed Instance contain all user databases within the instance, only one failover group can be configured on an instance.

Important

The name of the failover group must be globally unique within the .database.windows.net domain.

Primary

The managed instance that hosts the primary databases in the failover group.

Secondary

The managed instance that hosts the secondary databases in the failover group. The secondary can't be in the same Azure region as the primary.

Important

- If a database contains in-memory OLTP objects, the primary and secondary geo-replica instance must have matching service tiers, as in-memory OLTP objects reside in memory. A lower service tier on the geo-replica instance can result in out-of-memory issues. If this occurs, the secondary replica might fail to recover the database, causing unavailability of the secondary database along with in-memory OLTP objects on the geo-secondary. This, in turn, could cause failover to be unsuccessful as well. To avoid this, ensure the service tier of the geo-secondary instance matches that of the primary database. Service tier upgrades can be size-of-data operations and can take a while to finish.

Failover (no data loss)

Failover performs full data synchronization between primary and secondary databases before the secondary switches to the primary role. This guarantees no data loss. Failover is only possible when the primary is accessible. Failover is used in the following scenarios:

- Perform disaster recovery (DR) drills in production when data loss isn't acceptable

- Relocate the workload to a different region

- Return the workload to the primary region after the outage has been mitigated (failback)

Forced failover (potential data loss)

Forced failover immediately switches the secondary to the primary role without waiting for recent changes to propagate from the primary. This operation can result in potential data loss. Forced failover is used as a recovery method during outages when the primary isn't accessible. When the outage is mitigated, the old primary will automatically reconnect and become a new secondary. A failover can be executed to fail back, returning the replicas to their original primary and secondary roles.

Grace period with data loss

Because data is replicated to the secondary using asynchronous replication, forced failover of groups with Microsoft managed failover policies can result in data loss. You can customize the failover policy to reflect your application's tolerance to data loss. By configuring GracePeriodWithDataLossHours, you can control how long the Azure SQL service waits before initiating a forced failover, which can result in data loss.

DNS zone

A unique ID that is automatically generated when a new SQL Managed Instance is created. A multi-domain (SAN) certificate for this instance is provisioned to authenticate the client connections to any instance in the same DNS zone. The two managed instances in the same failover group must share the DNS zone.

Failover group read-write listener

A DNS CNAME record that points to the current primary. It's created automatically when the failover group is created and allows the read-write workload to transparently reconnect to the primary when the primary changes after failover. When the failover group is created on a SQL Managed Instance, the DNS CNAME record for the listener URL is formed as <fog-name>.<zone_id>.database.windows.net.

Failover group read-only listener

A DNS CNAME record that points to the current secondary. It's created automatically when the failover group is created and allows the read-only SQL workload to transparently connect to the secondary when the secondary changes after failover. When the failover group is created on a SQL Managed Instance, the DNS CNAME record for the listener URL is formed as <fog-name>.secondary.<zone_id>.database.windows.net. By default, failover of the read-only listener is disabled as it ensures the performance of the primary isn't affected when the secondary is offline. However, it also means the read-only sessions won't be able to connect until the secondary is recovered. If you can't tolerate downtime for the read-only sessions and can use the primary for both read-only and read-write traffic at the expense of the potential performance degradation of the primary, you can enable failover for the read-only listener by configuring the AllowReadOnlyFailoverToPrimary property. In that case, the read-only traffic is automatically redirected to the primary if the secondary isn't available.

Note

The AllowReadOnlyFailoverToPrimary property only has effect if Microsoft managed failover policy is enabled and a forced failover has been triggered. In that case, if the property is set to True, the new primary will serve both read-write and read-only sessions.

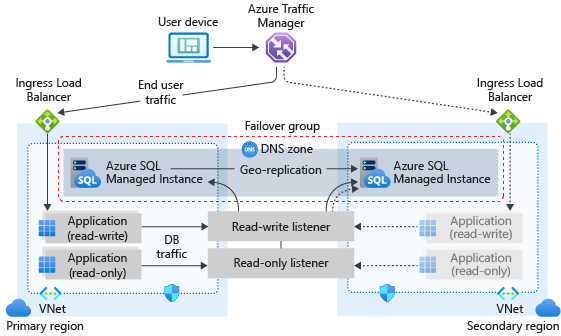

Failover group architecture

The failover group must be configured on the primary instance and will connect it to the secondary instance in a different Azure region. All user databases in the instance will be replicated to the secondary instance. System databases like master and msdb won't be replicated.

The following diagram illustrates a typical configuration of a geo-redundant cloud application using managed instance and failover group:

If your application uses SQL Managed Instance as the data tier, follow the general guidelines and best practices outlined in this article when designing for business continuity.

Create the geo-secondary instance

To ensure uninterrupted connectivity to the primary SQL Managed Instance after failover, both the primary and secondary instances must be in the same DNS zone. This guarantees that the same multi-domain (SAN) certificate can be used to authenticate client connections to either of the two instances in the failover group. When your application is ready for production deployment, create a secondary SQL Managed Instance in a different region, and make sure it shares the DNS zone with the primary SQL Managed Instance. You can do this by specifying an optional parameter during creation. If you're using PowerShell or the REST API, the name of the optional parameter is DNSZonePartner. The name of the corresponding optional field in the Azure portal is Primary Managed Instance.

Important

The first managed instance created in the subnet determines the DNS zone for all subsequent instances in the same subnet. This means that two instances from the same subnet can't belong to different DNS zones.

For more information about creating the secondary SQL Managed Instance in the same DNS zone as the primary instance, see Configure a failover group for Azure SQL Managed Instance.

Use paired regions

Deploy both managed instances to paired regions for performance reasons. SQL Managed Instance failover groups in paired regions have better performance compared to unpaired regions.

Azure SQL Managed Instance follows a safe deployment practice where Azure paired regions are generally not deployed to at the same time. However, it's not possible to predict which region will be upgraded first, so the order of deployment isn't guaranteed. Sometimes, your primary instance is upgraded first, and sometimes the secondary instance is upgraded first.

In situations where Azure SQL Managed Instance is part of a failover group, and the instances in the group aren't in Azure paired regions, select different maintenance window schedules for your primary and secondary database. For example, select a Weekday maintenance window for your geo-secondary database and a Weekend maintenance window for your geo-primary database.

Enable and optimize geo-replication traffic flow between the instances

Connectivity between the virtual network subnets hosting primary and secondary instance must be established and maintained for uninterrupted geo-replication traffic flow. There are multiple ways to provide connectivity between the instances that you can choose among based on your network topology and policies:

Global virtual network peering (VNet peering) is the recommended way to establish connectivity between two instances in a failover group. It provides a low-latency, high-bandwidth private connection between the peered virtual networks using the Microsoft backbone infrastructure. No public Internet, gateways, or additional encryption is required in the communication between the peered virtual networks.

Initial seeding

When establishing a failover group between managed instances, there's an initial seeding phase before data replication starts. The initial seeding phase is the longest and most expensive part of the operation. Once initial seeding completes, data is synchronized, and only subsequent data changes are replicated. The time it takes for the initial seeding to complete depends on the size of data, the number of replicated databases, the workload intensity on the primary databases, and the speed of the link between the virtual networks hosting the primary and secondary instances, which mostly depends on how connectivity is established. Under normal circumstances, and when connectivity is established using the recommended global virtual network peering, seeding speed is up to 360 GB an hour for SQL Managed Instance. Seeding is performed for a batch of user databases in parallel, not necessarily for all databases at the same time. Multiple batches might be needed if there are many databases hosted on the instance.

If the speed of the link between the two instances is slower than what is necessary, the time to seed is likely to be noticeably affected. You can use the stated seeding speed, number of databases, total size of data, and the link speed to estimate how long the initial seeding phase will take before data replication starts. For example, for a single 100-GB database, the initial seed phase would take about 1.2 hours if the link is capable of pushing 84 GB per hour, and if there are no other databases being seeded. If the link can only transfer 10 GB per hour, then seeding a 100-GB database can take about 10 hours. If there are multiple databases to replicate, seeding will be executed in parallel, and, when combined with a slow link speed, the initial seeding phase might take considerably longer, especially if the parallel seeding of data from all databases exceeds the available link bandwidth.
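The arithmetic in the example above can be sketched in a few lines of Python. This is only a rough estimate under stated assumptions: the function name and parameters are hypothetical, the 360 GB per hour ceiling is the figure quoted in this article, and the model ignores workload intensity on the primary and the batching of databases, so real seeding can take longer.

```python
def estimate_seeding_hours(total_data_gb: float,
                           link_gb_per_hour: float,
                           max_seeding_gb_per_hour: float = 360.0) -> float:
    """Return a rough lower bound, in hours, for the initial seeding phase.

    Assumption: throughput is limited by the slower of the network link and
    the maximum seeding speed quoted in this article (360 GB per hour).
    """
    if total_data_gb < 0:
        raise ValueError("total_data_gb must not be negative")
    if link_gb_per_hour <= 0 or max_seeding_gb_per_hour <= 0:
        raise ValueError("rates must be positive")
    effective_rate = min(link_gb_per_hour, max_seeding_gb_per_hour)
    return total_data_gb / effective_rate


# The two scenarios from the text: a single 100-GB database.
print(round(estimate_seeding_hours(100, 84), 1))  # 1.2
print(round(estimate_seeding_hours(100, 10), 1))  # 10.0
```

When several databases are seeded in parallel, pass their combined size as `total_data_gb`; the result is still an optimistic estimate because the parallel streams share the same link.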

Important

If an extremely slow or busy link causes the initial seeding phase to take days, the creation of a failover group can time out. The creation process is automatically canceled after 6 days.

Manage geo-failover to a geo-secondary instance

The failover group manages geo-failover of all databases on the primary managed instance. When a group is created, each database in the instance will be automatically geo-replicated to the geo-secondary instance. You can't use failover groups to initiate a partial failover of a subset of databases.

Important

If a database is dropped on the primary managed instance, it will also be dropped automatically on the geo-secondary managed instance.

Use the read-write listener (primary MI)

For read-write workloads, use <fog-name>.<zone_id>.database.windows.net as the server name. Connections are automatically directed to the primary. This name doesn't change after failover. The geo-failover involves updating the DNS record, so the new client connections are routed to the new primary only after the client DNS cache is refreshed. Because the secondary instance shares the DNS zone with the primary, the client application will be able to reconnect to it using the same server-side SAN certificate. The existing client connections need to be terminated and then recreated to be routed to the new primary. The read-write listener and read-only listener can't be reached via the public endpoint for managed instance.

Use the read-only listener (secondary MI)

If you have logically isolated read-only workloads that are tolerant to data latency, you can run them on the geo-secondary. To connect directly to the geo-secondary, use <fog-name>.secondary.<zone_id>.database.windows.net as the server name.

In the Business Critical tier, SQL Managed Instance supports the use of read-only replicas to offload read-only query workloads, using the ApplicationIntent=ReadOnly parameter in the connection string. When you have configured a geo-replicated secondary, you can use this capability to connect to either a read-only replica in the primary location or in the geo-replicated location:

- To connect to a read-only replica in the primary location, use ApplicationIntent=ReadOnly and <fog-name>.<zone_id>.database.windows.net.

- To connect to a read-only replica in the secondary location, use ApplicationIntent=ReadOnly and <fog-name>.secondary.<zone_id>.database.windows.net.
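As an illustration of how the listener names and the ApplicationIntent parameter combine, here is a minimal Python sketch. The helper names, the sample values `fog1`, `abc123`, and `mydb`, the port 1433, and the exact connection-string layout are assumptions for illustration only; authentication settings are omitted, and your driver's documentation defines the actual keywords it accepts.

```python
def read_write_listener(fog_name: str, zone_id: str) -> str:
    """Listener name that always resolves to the current primary."""
    return f"{fog_name}.{zone_id}.database.windows.net"


def read_only_listener(fog_name: str, zone_id: str) -> str:
    """Listener name that resolves to the current geo-secondary."""
    return f"{fog_name}.secondary.{zone_id}.database.windows.net"


def connection_string(server: str, database: str,
                      read_only_intent: bool = False) -> str:
    """Build an illustrative connection string (authentication omitted)."""
    parts = [f"Server=tcp:{server},1433", f"Database={database}"]
    if read_only_intent:
        # Routes the session to a read-only replica at the chosen location.
        parts.append("ApplicationIntent=ReadOnly")
    return ";".join(parts) + ";"


# Hypothetical failover group name and DNS zone ID.
primary_ro = connection_string(read_write_listener("fog1", "abc123"),
                               "mydb", read_only_intent=True)
secondary_ro = connection_string(read_only_listener("fog1", "abc123"),
                                 "mydb", read_only_intent=True)
print(primary_ro)
print(secondary_ro)
```

Because both names stay the same after a geo-failover, an application built this way doesn't need a configuration change when the roles switch.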

The read-write listener and read-only listener can't be reached via public endpoint for managed instance.

Potential performance degradation after failover

A typical Azure application uses multiple Azure services and consists of multiple components. Geo-failover of the group is triggered based on the state of the Azure SQL components alone. Other Azure services in the primary region might not be affected by the outage and their components might still be available in that region. Once the primary databases switch to the secondary region, the latency between the dependent components can increase. Ensure the redundancy of all the application's components in the secondary region and fail over application components together with the database so that application's performance isn't affected by higher cross-region latency.

Potential data loss after forced failover

If an outage occurs in the primary region, recent transactions might not have been replicated to the geo-secondary and there might be data loss if a forced failover is performed.

DNS update

The DNS update of the read-write listener will happen immediately after the failover is initiated. This operation won't result in data loss. However, the process of switching database roles can take up to 5 minutes under normal conditions. Until it's completed, some databases in the new primary instance will still be read-only. If a failover is initiated using PowerShell, the operation to switch the primary replica role is synchronous. If it's initiated using the Azure portal, the UI indicates completion status. If it's initiated using the REST API, use standard Azure Resource Manager's polling mechanism to monitor for completion.

Important

Use manual planned failover to move the primary back to the original location once the outage that caused the geo-failover is mitigated.

Save costs with a license-free DR replica

You can save on SQL Server license costs by configuring your secondary managed instance to be used for disaster recovery (DR) only. To set this up, see Configure a license-free standby replica for Azure SQL Managed Instance.

As long as the secondary instance isn't used for read workloads, Microsoft provides you with a free number of vCores to match the primary instance. You're still charged for compute and storage used by the secondary instance. Failover groups support only one replica, which must be either a readable replica or designated as a DR-only replica.

Enable scenarios dependent on objects from the system databases

System databases are not replicated to the secondary instance in a failover group. To enable scenarios that depend on objects from the system databases, make sure to create the same objects on the secondary instance and keep them synchronized with the primary instance.

For example, if you plan to use the same logins on the secondary instance, make sure to create them with the identical SID.

-- Code to create login on the secondary instance

CREATE LOGIN foo WITH PASSWORD = '<enterStrongPasswordHere>', SID = <login_sid>;

To learn more, see Replication of logins and agent jobs.

Synchronize instance properties and retention policies

Instances in a failover group remain separate Azure resources, and no changes made to the configuration of the primary instance are automatically replicated to the secondary instance. Make sure to perform all relevant changes on both the primary and secondary instances. For example, if you change the backup storage redundancy or long-term backup retention policy on the primary instance, make sure to change it on the secondary instance as well.

Scale instances

You can scale up or scale down the primary and secondary instance to a different compute size within the same service tier or to a different service tier. When scaling up within the same service tier, we recommend that you scale up the geo-secondary first, and then scale up the primary. When scaling down within the same service tier, reverse the order: scale down the primary first, and then scale down the secondary. When you scale an instance to a different service tier, this sequence is enforced; the same applies when scaling vCores or storage.

The sequence is recommended specifically to avoid the problem where the geo-secondary at a lower SKU gets overloaded and must be reseeded during an upgrade or downgrade process.
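The ordering rule above can be captured in a small helper, for example as a pre-check in a deployment script. This is a sketch only: the function name and the use of vCore counts as the measure of compute size are assumptions, and the code doesn't call any Azure API.

```python
from typing import List


def scaling_order(current_vcores: int, target_vcores: int) -> List[str]:
    """Return the order in which to scale the instances of a failover group.

    Scale up: geo-secondary first, then primary.
    Scale down: primary first, then geo-secondary.
    This keeps the geo-secondary from ever being the smaller instance
    while the operation is in flight.
    """
    if current_vcores <= 0 or target_vcores <= 0:
        raise ValueError("vCore counts must be positive")
    if target_vcores > current_vcores:
        return ["geo-secondary", "primary"]
    if target_vcores < current_vcores:
        return ["primary", "geo-secondary"]
    return []  # No change in compute size, nothing to order.


print(scaling_order(8, 16))  # ['geo-secondary', 'primary']
print(scaling_order(16, 8))  # ['primary', 'geo-secondary']
```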

Note

There's a known issue which can impact accessibility of the instance being scaled using the associated failover group listener.

Prevent loss of critical data

Due to the high latency of wide area networks, geo-replication uses an asynchronous replication mechanism. Asynchronous replication makes the possibility of data loss unavoidable if the primary fails. To protect critical transactions from data loss, an application developer can call the sp_wait_for_database_copy_sync stored procedure immediately after committing the transaction. Calling sp_wait_for_database_copy_sync blocks the calling thread until the last committed transaction has been transmitted and hardened in the transaction log of the secondary database. However, it doesn't wait for the transmitted transactions to be replayed (redone) on the secondary. sp_wait_for_database_copy_sync is scoped to a specific geo-replication link. Any user with the connection rights to the primary database can call this procedure.

To prevent data loss during user-initiated, planned geo-failover, replication automatically and temporarily changes to synchronous replication, then performs a failover. Replication then returns to asynchronous mode after the geo-failover is complete.

Note

sp_wait_for_database_copy_sync prevents data loss after geo-failover for specific transactions, but doesn't guarantee full synchronization for read access. The delay caused by a sp_wait_for_database_copy_sync procedure call can be significant and depends on the size of the not yet transmitted transaction log on the primary at the time of the call.

Failover group status

A failover group reports a status that describes the current state of data replication:

- Seeding - Initial seeding is taking place after creation of the failover group, until all user databases are initialized on the secondary instance. A failover can't be initiated while the failover group is in the Seeding status, because the user databases haven't yet been copied to the secondary instance.

- Synchronizing - The usual status of a failover group. It means that data changes on the primary instance are being replicated asynchronously to the secondary instance. This status doesn't guarantee that the data is fully synchronized at every moment. There can be data changes from the primary still to be replicated to the secondary due to the asynchronous nature of the replication process between instances in the failover group. Both automatic and manual failovers can be initiated while the failover group is in the Synchronizing status.

- Failover in progress - This status indicates that an automatically or manually initiated failover is in progress. No changes to the failover group or additional failovers can be initiated while the failover group is in this status.
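The status rules above amount to a simple gate: a failover can start only from the Synchronizing status. A minimal Python sketch follows; the enum and function names are hypothetical, and the string values are the display names used in this article, which might differ from the values returned by PowerShell, the Azure CLI, or the REST API.

```python
from enum import Enum


class FailoverGroupStatus(Enum):
    # Display names as used in this article (assumed, not API values).
    SEEDING = "Seeding"
    SYNCHRONIZING = "Synchronizing"
    FAILOVER_IN_PROGRESS = "Failover in progress"


def can_initiate_failover(status: FailoverGroupStatus) -> bool:
    """A failover can be initiated only while the group is Synchronizing."""
    return status is FailoverGroupStatus.SYNCHRONIZING


for status in FailoverGroupStatus:
    print(status.value, can_initiate_failover(status))
```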

Failback

When failover groups are configured with a Microsoft managed failover policy, forced failover to the geo-secondary instance is initiated during a disaster scenario according to the defined grace period. Failback to the old primary must be initiated manually.

Failover groups with transactional replication

Using transactional replication with instances that are in a failover group is supported. However, if you configure replication before adding your SQL managed instance into a failover group, replication pauses when you start to create your failover group, and replication monitor shows a status of Replicated transactions are waiting for the next log backup or for mirroring partner to catch up. Replication resumes once the failover group is created successfully.

If a publisher or distributor SQL managed instance is in a failover group, the SQL managed instance administrator must clean up all publications on the old primary and reconfigure them on the new primary after a failover occurs. Review the transactional replication guide for the steps that are needed in this scenario.

Permissions, limitations and prerequisites

Review the configure failover group guide for a list of permissions, limitations and prerequisites before proceeding to configure the failover group.

Programmatically manage failover groups

Failover groups can also be managed programmatically using Azure PowerShell, Azure CLI, and REST API. Review configure failover group to learn more.

Disaster recovery drills

The recommended way to perform a DR drill is using the manual planned failover, as per the following tutorial: Test failover.

Performing a drill using forced failover isn't recommended, because this operation doesn't provide guardrails against data loss. Nevertheless, it's possible to achieve a forced failover without data loss by ensuring the following conditions are met before initiating the forced failover:

- The workload is stopped on the primary managed instance.

- All long running transactions have completed.

- All client connections to the primary managed instance have been disconnected.

- Failover group status is 'Synchronizing'.
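The conditions above can be expressed as a checklist that a drill runbook evaluates before a forced failover. The following Python sketch is illustrative only: the class, field, and function names are hypothetical, and the values must be gathered by the operator because the code doesn't query the managed instance or Azure.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DrillPreconditions:
    workload_stopped: bool
    long_running_transactions_completed: bool
    client_connections_disconnected: bool
    failover_group_status: str  # Display name, for example "Synchronizing".


def unmet_preconditions(p: DrillPreconditions) -> List[str]:
    """Return the conditions from the list above that aren't yet satisfied."""
    problems = []
    if not p.workload_stopped:
        problems.append("workload is still running on the primary")
    if not p.long_running_transactions_completed:
        problems.append("long running transactions haven't completed")
    if not p.client_connections_disconnected:
        problems.append("client connections to the primary are still open")
    if p.failover_group_status != "Synchronizing":
        problems.append("failover group status isn't 'Synchronizing'")
    return problems


ready = DrillPreconditions(True, True, True, "Synchronizing")
print(unmet_preconditions(ready))  # []
```

An empty list means every condition is met; any other result lists what still blocks a forced failover without data loss.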

Please ensure the two managed instances have switched roles and that the failover group status has switched from 'Failover in progress' to 'Synchronizing' before optionally establishing connections to the new primary managed instance and starting read-write workload.

To perform a data lossless failback to the original managed instance roles, using manual planned failover instead of forced failover is strongly recommended. To proceed with forced failback:

- Follow the same steps as for the data lossless failover.

- Longer failback execution time is expected if the forced failback is executed shortly after the initial forced failover is completed, as it has to wait for completion of outstanding automatic backup operations on the former primary managed instance.

Related content

- Configure a failover group

- Use PowerShell to add a managed instance to a failover group

- Configure a license-free standby replica for Azure SQL Managed Instance

- Overview of business continuity with Azure SQL Managed Instance

- Automated backups in Azure SQL Managed Instance

- Restore a database from a backup in Azure SQL Managed Instance
