Language identification containers with Docker

The Speech language identification container detects the language spoken in audio files. It can process real-time speech or batch audio recordings and return intermediate results. In this article, you learn how to download, install, and run a language identification container.

Note

The Speech language identification container is available in public preview. Containers in preview are still under development and don't meet Microsoft's stability and support requirements.

For more information about prerequisites, validating that a container is running, running multiple containers on the same host, and running disconnected containers, see Install and run Speech containers with Docker.

Tip

To get the most useful results, use the Speech language identification container with the speech to text or custom speech to text containers.

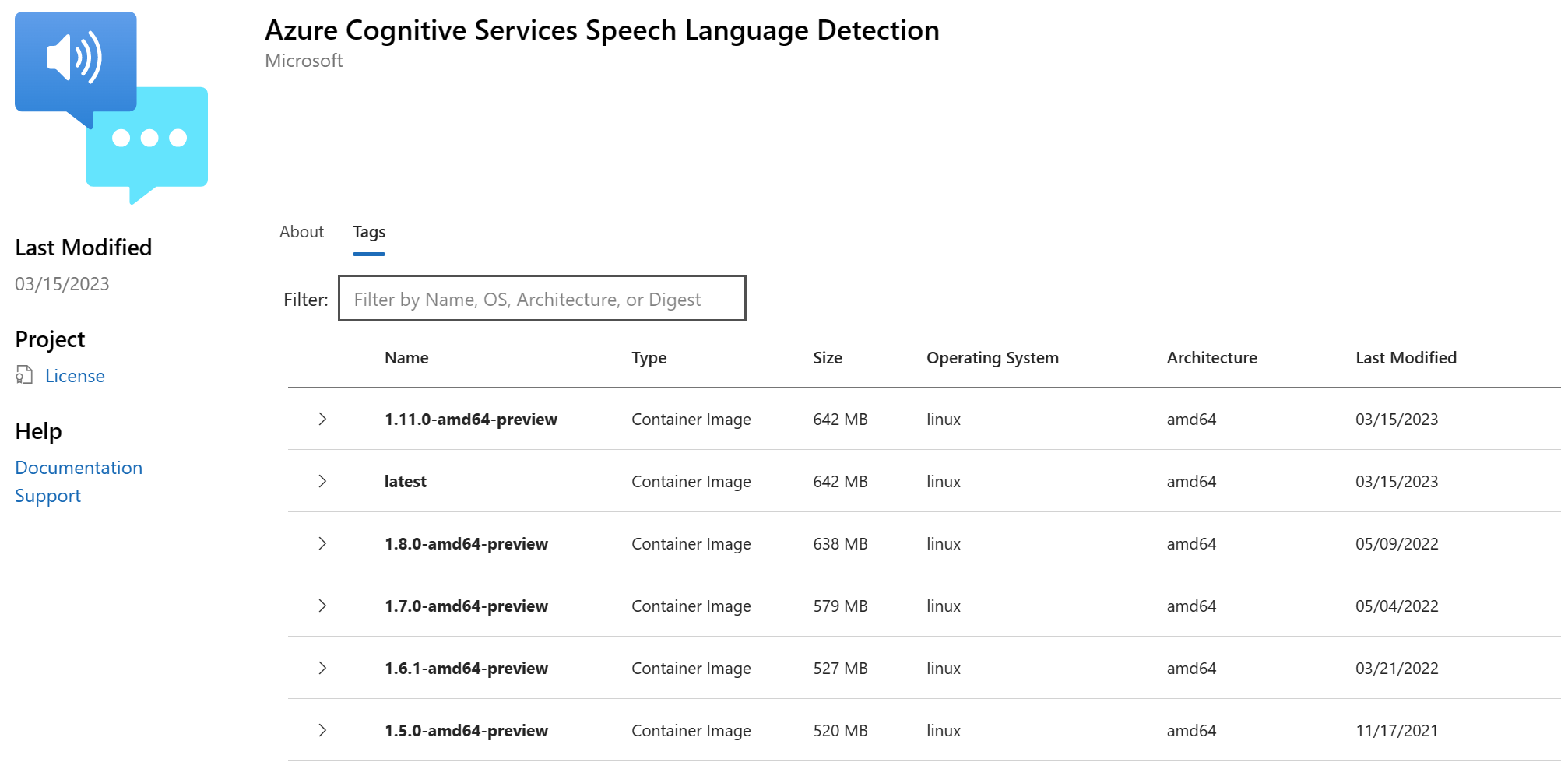

Container images

The Speech language identification container image for all supported versions and locales can be found on the Microsoft Container Registry (MCR) syndicate. It resides within the azure-cognitive-services/speechservices/ repository and is named language-detection.

The fully qualified container image name is mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection. Append either a specific version tag or :latest to get the most recent version.

| Version | Path |
|---|---|
| Latest | mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection:latest |
| 1.12.0 | mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection:1.12.0-amd64-preview |

All tags, except for latest, are in the following format and are case sensitive:

<major>.<minor>.<patch>-<platform>-<prerelease>
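As an illustration of the tag format, the following Python sketch splits a tag into its parts. The names `TAG_PATTERN`, `ImageTag`, and `parse_tag` are hypothetical, and the sketch assumes that the version parts are plain integers and that the platform part contains no hyphen; neither assumption is stated in this article.

```python
import re
from typing import NamedTuple, Optional

# Assumed shape of <major>.<minor>.<patch>-<platform>-<prerelease>.
# Matching is case sensitive, as the tags themselves are.
TAG_PATTERN = re.compile(
    r"^(?P<major>\d+)\.(?P<minor>\d+)\.(?P<patch>\d+)"
    r"-(?P<platform>[^-]+)-(?P<prerelease>.+)$"
)


class ImageTag(NamedTuple):
    major: int
    minor: int
    patch: int
    platform: str
    prerelease: str


def parse_tag(tag: str) -> Optional[ImageTag]:
    """Return the parsed tag, or None for 'latest' and anything off-format."""
    match = TAG_PATTERN.match(tag)
    if match is None:
        return None
    return ImageTag(
        int(match["major"]),
        int(match["minor"]),
        int(match["patch"]),
        match["platform"],
        match["prerelease"],
    )


print(parse_tag("1.12.0-amd64-preview"))
print(parse_tag("latest"))  # None: 'latest' does not follow the format
```

Returning `None` rather than raising keeps the floating `latest` tag easy to filter out when you walk a tag list.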

The tags are also available in JSON format for your convenience. The body includes the container path and list of tags. The tags aren't sorted by version, but "latest" is always included at the end of the list as shown in this snippet:

{
  "name": "azure-cognitive-services/speechservices/language-detection",
  "tags": [
    "1.1.0-amd64-preview",
    "1.11.0-amd64-preview",
    "1.12.0-amd64-preview",
    "1.3.0-amd64-preview",
    "1.5.0-amd64-preview",
    <--redacted for brevity-->
    "1.8.0-amd64-preview",
    "latest"
  ]
}
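Because the tags aren't sorted by version, picking the newest one takes a numeric comparison. The following Python sketch shows one way to do that, using only the tags visible in the snippet above (the redacted entries are omitted). The helper names `version_key` and `newest_versioned_tag` are hypothetical.

```python
import json

# Example body built from the tags visible in the snippet; redacted entries are left out.
body = json.loads("""
{
  "name": "azure-cognitive-services/speechservices/language-detection",
  "tags": [
    "1.1.0-amd64-preview", "1.11.0-amd64-preview", "1.12.0-amd64-preview",
    "1.3.0-amd64-preview", "1.5.0-amd64-preview", "1.8.0-amd64-preview",
    "latest"
  ]
}
""")


def version_key(tag: str) -> tuple:
    """Turn '1.12.0-amd64-preview' into (1, 12, 0) so versions compare numerically."""
    version = tag.split("-", 1)[0]
    return tuple(int(part) for part in version.split("."))


def newest_versioned_tag(tags: list) -> str:
    """Return the highest versioned tag, ignoring the floating 'latest' tag."""
    versioned = [tag for tag in tags if tag != "latest"]
    return max(versioned, key=version_key)


print(newest_versioned_tag(body["tags"]))  # 1.12.0-amd64-preview
```

Comparing integer tuples avoids the string-order trap in which "1.11.0" sorts before "1.3.0".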

Get the container image with docker pull

Make sure that you meet the prerequisites, including the required hardware. Also see the recommended allocation of resources for each Speech container.

Use the docker pull command to download a container image from Microsoft Container Registry:

docker pull mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection:latest

Run the container with docker run

Use the docker run command to run the container.

The following table represents the various docker run parameters and their corresponding descriptions:

| Parameter | Description |
|---|---|
| {ENDPOINT_URI} | The endpoint is required for metering and billing. For more information, see billing arguments. |
| {API_KEY} | The API key is required. For more information, see billing arguments. |

When you run the Speech language identification container, configure the port, memory, and CPU according to the language identification container requirements and recommendations.

Here's an example docker run command with placeholder values. You must specify the ENDPOINT_URI and API_KEY values:

docker run --rm -it -p 5000:5003 --memory 1g --cpus 1 \
mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection \
Eula=accept \
Billing={ENDPOINT_URI} \
ApiKey={API_KEY}

This command:

- Runs a Speech language identification container from the container image.

- Allocates 1 CPU core and 1 GB of memory.

- Exposes TCP port 5000 and allocates a pseudo-TTY for the container.

- Automatically removes the container after it exits. The container image is still available on the host computer.
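If you launch the container from a script, the same command can be assembled as an argument list. The following Python sketch is one way to do that; `build_docker_run_args` is a hypothetical helper, and the endpoint and key shown are placeholders, not real values.

```python
import shlex

IMAGE = "mcr.microsoft.com/azure-cognitive-services/speechservices/language-detection"


def build_docker_run_args(endpoint_uri: str, api_key: str,
                          host_port: int = 5000, memory: str = "1g",
                          cpus: int = 1) -> list:
    """Build the docker run argument list shown in this article."""
    if not endpoint_uri or not api_key:
        raise ValueError("ENDPOINT_URI and API_KEY are both required")
    return [
        "docker", "run", "--rm", "-it",
        "-p", f"{host_port}:5003",   # host port mapped to container port 5003
        "--memory", memory,
        "--cpus", str(cpus),
        IMAGE,
        "Eula=accept",
        f"Billing={endpoint_uri}",
        f"ApiKey={api_key}",
    ]


# Placeholder values for illustration only.
args = build_docker_run_args("https://example.invalid/", "placeholder-key")
print(shlex.join(args))
```

Passing the list to `subprocess.run(args)` avoids shell quoting issues with the key. Because the command includes `-it`, it expects an interactive terminal.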

For more information about docker run with Speech containers, see Install and run Speech containers with Docker.

Run with the speech to text container

If you want to run the language identification container with the speech to text container, you can use this docker image. After both containers are started, use this docker run command to execute speech-to-text-with-languagedetection-client:

docker run --rm -v ${HOME}:/root -ti antsu/on-prem-client:latest ./speech-to-text-with-languagedetection-client ./audio/LanguageDetection_en-us.wav --host localhost --lport 5003 --sport 5000

Increasing the number of concurrent calls can affect reliability and latency. For language identification, we recommend a maximum of four concurrent calls using 1 CPU with 1 GB of memory. For hosts with 2 CPUs and 2 GB of memory, we recommend a maximum of six concurrent calls.
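A client can enforce these limits by bounding its worker pool. The following Python sketch encodes only the two recommendations stated above; `recommended_max_calls` and `identify_all` are hypothetical names, and `identify` stands in for whatever function sends one audio file to the container. Treating every host smaller than 2 CPUs and 2 GB as the lower tier, and not scaling beyond six calls, are assumptions of this sketch.

```python
from concurrent.futures import ThreadPoolExecutor


def recommended_max_calls(cpus: int, memory_gb: int) -> int:
    """Map host size to the two recommendations: 1 CPU/1 GB -> 4, 2 CPUs/2 GB -> 6."""
    if cpus >= 2 and memory_gb >= 2:
        return 6
    return 4


def identify_all(files, identify, cpus: int = 1, memory_gb: int = 1) -> list:
    """Run identify(file) for each file with no more than the recommended concurrency."""
    limit = recommended_max_calls(cpus, memory_gb)
    with ThreadPoolExecutor(max_workers=limit) as pool:
        # pool.map preserves input order in the returned results.
        return list(pool.map(identify, files))


# Stand-in for a real call to the container.
print(identify_all(["a.wav", "b.wav"], lambda path: path.upper()))
```

A thread pool suits this case because each call mostly waits on the container rather than using the client's CPU.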

Use the container

Speech containers provide WebSocket-based query endpoint APIs that are accessed through the Speech SDK and Speech CLI. By default, the Speech SDK and Speech CLI use the public Speech service. To use the container, you need to change the initialization method.

Important

When you use the Speech service with containers, be sure to use host authentication. If you configure the key and region, requests will go to the public Speech service. Results from the Speech service might not be what you expect. Requests from disconnected containers will fail.

In C#, instead of using this Azure-cloud initialization config:

var config = SpeechConfig.FromSubscription(...);

Use this config with the container host:

var config = SpeechConfig.FromHost(
    new Uri("http://localhost:5000"));

In C++, instead of using this Azure-cloud initialization config:

auto speechConfig = SpeechConfig::FromSubscription(...);

Use this config with the container host:

auto speechConfig = SpeechConfig::FromHost("http://localhost:5000");

In Go, instead of using this Azure-cloud initialization config:

speechConfig, err := speech.NewSpeechConfigFromSubscription(...)

Use this config with the container host:

speechConfig, err := speech.NewSpeechConfigFromHost("http://localhost:5000")

In Java, instead of using this Azure-cloud initialization config:

SpeechConfig speechConfig = SpeechConfig.fromSubscription(...);

Use this config with the container host:

SpeechConfig speechConfig = SpeechConfig.fromHost("http://localhost:5000");

In JavaScript, instead of using this Azure-cloud initialization config:

const speechConfig = sdk.SpeechConfig.fromSubscription(...);

Use this config with the container host:

const speechConfig = sdk.SpeechConfig.fromHost("http://localhost:5000");

In Objective-C, instead of using this Azure-cloud initialization config:

SPXSpeechConfiguration *speechConfig = [[SPXSpeechConfiguration alloc] initWithSubscription:...];

Use this config with the container host:

SPXSpeechConfiguration *speechConfig = [[SPXSpeechConfiguration alloc] initWithHost:@"http://localhost:5000"];

In Swift, instead of using this Azure-cloud initialization config:

let speechConfig = SPXSpeechConfiguration(subscription: "", region: "");

Use this config with the container host:

let speechConfig = SPXSpeechConfiguration(host: "http://localhost:5000");

In Python, instead of using this Azure-cloud initialization config:

speech_config = speechsdk.SpeechConfig(
    subscription=speech_key, region=service_region)

Use this config with the container host:

speech_config = speechsdk.SpeechConfig(
    host="http://localhost:5000")

When you use the Speech CLI in a container, include the --host http://localhost:5000/ option. You must also specify --key none to ensure that the CLI doesn't try to use a Speech key for authentication. For information about how to configure the Speech CLI, see Get started with the Azure AI Speech CLI.

Try language identification using host authentication instead of key and region. When you run language ID in a container, use the SourceLanguageRecognizer object instead of SpeechRecognizer or TranslationRecognizer.
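The following Python sketch shows how host authentication and SourceLanguageRecognizer might fit together. It assumes the azure-cognitiveservices-speech package is installed and that a container is listening on http://localhost:5000. The function name `detect_language` and the candidate language list in the usage note are illustrative, not part of the SDK or this article.

```python
def detect_language(wav_path: str, candidates: list,
                    host: str = "http://localhost:5000"):
    """Return the detected language for one WAV file, or None if nothing was recognized."""
    # Imported here so the module still loads on machines without the Speech SDK.
    import azure.cognitiveservices.speech as speechsdk

    # Host authentication: no key or region, so requests go to the container.
    speech_config = speechsdk.SpeechConfig(host=host)
    language_config = speechsdk.languageconfig.AutoDetectSourceLanguageConfig(
        languages=candidates)
    audio_config = speechsdk.audio.AudioConfig(filename=wav_path)

    recognizer = speechsdk.SourceLanguageRecognizer(
        speech_config=speech_config,
        auto_detect_source_language_config=language_config,
        audio_config=audio_config,
    )

    result = recognizer.recognize_once()
    if result.reason != speechsdk.ResultReason.RecognizedSpeech:
        return None
    # The detected language is reported through the result properties.
    return result.properties.get(
        speechsdk.PropertyId.SpeechServiceConnection_AutoDetectSourceLanguageResult)
```

For example, `detect_language("./audio/LanguageDetection_en-us.wav", ["en-US", "de-DE"])` would send the sample file named earlier in this article to the container, with the two candidate languages chosen only for illustration.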

Next steps

- See the Speech containers overview

- Review configure containers for configuration settings

- Use more Azure AI containers
