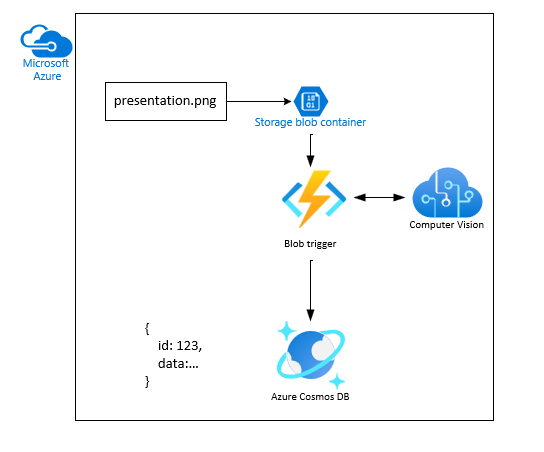

JavaScript Tutorial: Upload and analyze a file with Azure Functions and Blob Storage

In this tutorial, you'll learn how to upload an image to Azure Blob Storage and process it using Azure Functions, Computer Vision, and Cosmos DB. You'll also learn how to implement Azure Function triggers and bindings as part of this process. Together, these services analyze an uploaded image that contains text, extract the text out of it, and then store the text in a database row for later analysis or other purposes.

Azure Blob Storage is Microsoft's massively scalable object storage solution for the cloud. Blob Storage is designed for storing images and documents, streaming media files, managing backup and archive data, and much more. You can read more about Blob Storage on the overview page.

Warning

This tutorial uses publicly accessible storage to simplify the steps. Anonymous public access presents a security risk. Learn how to remediate this risk.

Azure Cosmos DB is a fully managed NoSQL and relational database for modern app development.

Azure Functions is a serverless compute solution that allows you to write and run small blocks of code as highly scalable, serverless, event-driven functions. You can read more about Azure Functions on the overview page.

In this tutorial, learn how to:

- Upload images and files to Blob Storage

- Use an Azure Function event trigger to process data uploaded to Blob Storage

- Use Azure AI services to analyze an image

- Write data to Cosmos DB using Azure Function output bindings

Prerequisites

- An Azure account with an active subscription. Create an account for free.

- Visual Studio Code installed.

- Azure Functions extension to deploy and configure the Function App.

- Azure Storage extension

- Azure Databases extension

- Azure Resources extension

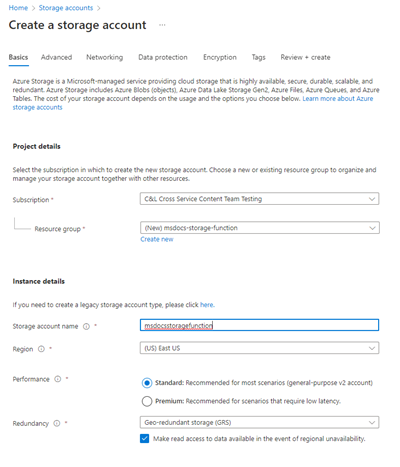

Create the storage account and container

The first step is to create the storage account that will hold the uploaded blob data, which in this scenario will be images that contain text. A storage account offers several different services, but this tutorial utilizes Blob Storage only.

In Visual Studio Code, select Ctrl + Shift + P to open the command palette.

Search for Azure Storage: Create Storage Account (Advanced).

Use the following table to create the Storage resource.

| Setting | Value |
|---|---|
| Name | Enter msdocsstoragefunction or something similar. |
| Resource Group | Create the msdocs-storage-function resource group. |
| Static web hosting | No. |

In Visual Studio Code, select Shift + Alt + A to open the Azure Explorer.

Expand the Storage section, expand your subscription node and wait for the resource to be created.

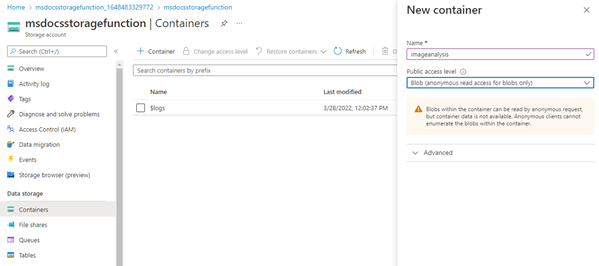

Create the container in Visual Studio Code

- Still in the Azure Explorer with your new Storage resource found, expand the resource to see the nodes.

- Right-click on Blob Containers and select Create Blob Container.

- Enter the name images. This creates a private container.

Change from private to public container in Azure portal

This procedure expects a public container. To change that configuration, make the change in the Azure portal.

- Right-click on the Storage Resource in the Azure Explorer and select Open in Portal.

- In the Data Storage section, select Containers.

- Find your container, images, and select the ... (ellipsis) at the end of the line.

- Select Change access level.

- Select Blob (anonymous read access for blobs only), then select OK.

- Return to Visual Studio Code.

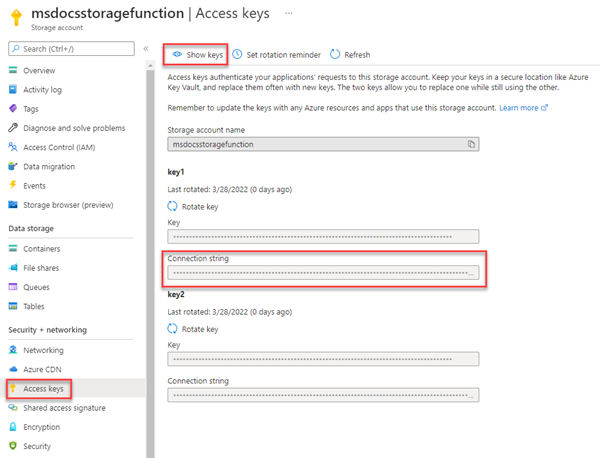

Retrieve the connection string in Visual Studio Code

- In Visual Studio Code, select Shift + Alt + A to open the Azure Explorer.

- Right-click on your storage resource and select Copy Connection String.

- Paste the connection string somewhere to use later.

- Also make note of the storage account name, msdocsstoragefunction, to use later.

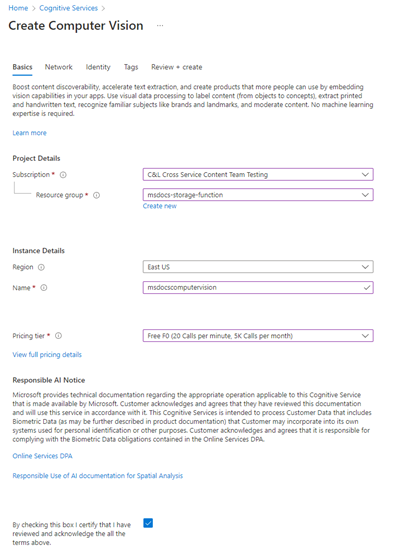

Create the Azure AI Vision service

Next, create the Azure AI Vision service account that will process our uploaded files. Vision is part of Azure AI services and offers various features for extracting data out of images. You can learn more about Azure AI Vision on the overview page.

In the search bar at the top of the portal, search for Computer and select the result labeled Computer vision.

On the Computer vision page, select + Create.

On the Create Computer Vision page, enter the following values:

- Subscription: Choose your desired Subscription.

- Resource Group: Use the msdocs-storage-function resource group you created earlier.

- Region: Select the region that is closest to you.

- Name: Enter the name msdocscomputervision.

- Pricing Tier: Choose Free if it's available, otherwise choose Standard S1.

- Check the Responsible AI Notice box if you agree to the terms.

Select Review + Create at the bottom. Azure takes a moment to validate the information you entered. Once the settings are validated, choose Create and Azure will begin provisioning the Computer Vision service, which might take a moment.

When the operation has completed, select Go to Resource.

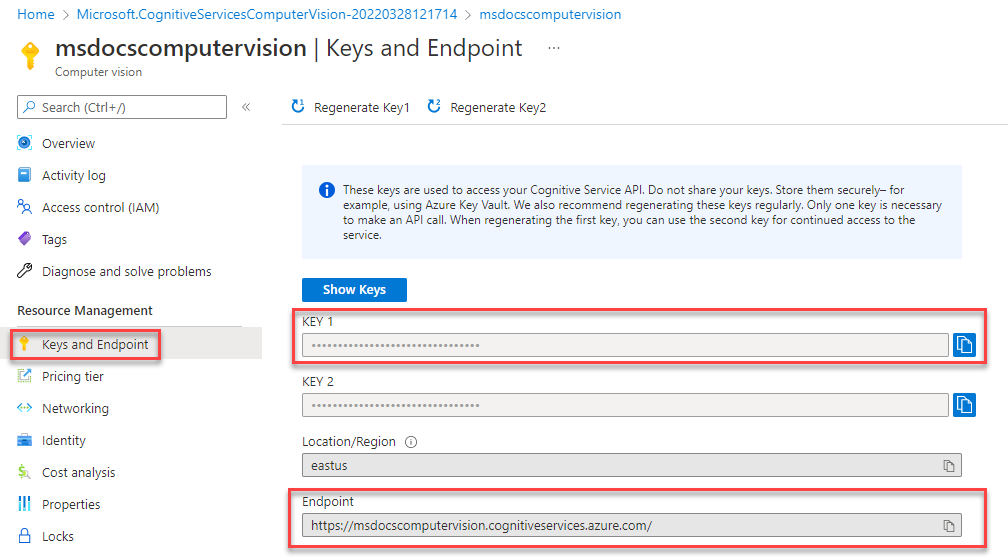

Retrieve the Computer Vision keys

Next, we need to find the secret key and endpoint URL for the Computer Vision service to use in our Azure Function app.

On the Computer Vision overview page, select Keys and Endpoint.

On the Keys and EndPoint page, copy the Key 1 value and the EndPoint values and paste them somewhere to use later. The endpoint should be in the format of

https://YOUR-RESOURCE-NAME.cognitiveservices.azure.com/
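Because a malformed endpoint tends to surface only later as a runtime error, it can help to check the value before using it. The following is a minimal sketch and not part of the sample project: the helper name isValidVisionEndpoint is hypothetical, and the check assumes only the custom-subdomain format shown above, so other endpoint formats would be rejected.

```javascript
// Hypothetical helper (not part of the sample project): checks that an
// endpoint string is an absolute HTTPS URL on the
// cognitiveservices.azure.com domain, matching the format described above.
function isValidVisionEndpoint(endpoint) {
  let url;
  try {
    // The URL constructor throws on relative or malformed values.
    url = new URL(endpoint);
  } catch {
    return false;
  }
  return (
    url.protocol === 'https:' &&
    url.hostname.endsWith('.cognitiveservices.azure.com')
  );
}

// Absolute HTTPS URL in the expected format.
console.log(isValidVisionEndpoint('https://YOUR-RESOURCE-NAME.cognitiveservices.azure.com/')); // true

// Missing scheme, so it is not an absolute URL.
console.log(isValidVisionEndpoint('YOUR-RESOURCE-NAME.cognitiveservices.azure.com')); // false
```

A check like this could run once at startup, before the Computer Vision client is created.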

Create a Cosmos DB service account

Create the Cosmos DB service account to store the analysis of files. Azure Cosmos DB is a fully managed NoSQL and relational database for modern app development. You can learn more about Cosmos DB and its supported APIs for several different industry databases.

While this tutorial specifies an API when you create your resource, the Azure Function bindings for Cosmos DB are configured in the same way for all Cosmos DB APIs.

In the search bar at the top of the portal, search for Azure Cosmos DB and select the result.

On the Azure Cosmos DB page, select + Create. Select Azure Cosmos DB for NoSQL from the list of API choices.

On the Create Cosmos DB page, enter the following values:

- Subscription: Choose your desired Subscription.

- Resource Group: Use the msdocs-storage-function resource group you created earlier.

- Region: Select the same region as your resource group.

- Name: Enter the name msdocscosmosdb.

- Pricing Tier: Choose Free if it's available, otherwise choose Standard S1.

Select Review + Create at the bottom. Azure takes a moment to validate the information you entered. Once the settings are validated, choose Create and Azure will begin provisioning the Cosmos DB service, which might take a moment.

When the operation has completed, select Go to Resource.

Select Data Explorer then select New Container.

Create a new database and container with the following settings:

- Create new database id: StorageTutorial.

- Enter the new container id: analysis.

- Enter the partition key: /type.

Leave the rest of the default settings and select OK.

Get the Cosmos DB connection string

Get the connection string for the Cosmos DB service account to use in our Azure Function app.

On the Cosmos DB overview page, select Keys.

On the Keys page, copy the Primary Connection String to use later.

Download and configure the sample project

The code for the Azure Function used in this tutorial can be found in this GitHub repository, in the JavaScript-v4 subdirectory. You can also clone the project using the command below.

git clone https://github.com/Azure-Samples/msdocs-storage-bind-function-service.git

cd msdocs-storage-bind-function-service/javascript-v4

code .

The sample project accomplishes the following tasks:

- Retrieves environment variables to connect to the storage account, Computer Vision, and Cosmos DB service

- Accepts the uploaded file as a blob parameter

- Analyzes the blob using the Computer Vision service

- Inserts the analyzed image text, as a JSON object, into Cosmos DB using output bindings

Once you've downloaded and opened the project, there are a few essential concepts to understand:

| Concept | Purpose |
|---|---|
| Function | The Azure Function is defined by both the function code and the bindings. These are in ./src/functions/process-blobs.js. |
| Triggers and bindings | The triggers and bindings indicate what data is expected into or out of the function and which service sends or receives that data. |

Triggers and bindings are used in this tutorial to expedite the development process by removing the need to write code to connect to services.

Input Storage Blob trigger

The following code specifies that the function is triggered when a blob is uploaded to the images container. The function is triggered on any blob name, including hierarchical folders.

// ...preceding code removed for brevity

app.storageBlob('process-blob-image', {

path: 'images/{name}', // Storage container name: images, Blob name: {name}

connection: 'StorageConnection', // Storage account connection string

handler: async (blob, context) => {

// ... function code removed for brevity

- app.storageBlob - The Storage Blob input trigger is used to bind the function to the upload event in Blob Storage. The trigger has two required parameters:

  - path: The path the trigger watches for events. The path includes the container name, images, and the variable substitution for the blob name. This blob name is retrieved from the name property.

  - {name}: The name of the blob uploaded. The handler's blob parameter receives the blob coming into the function. Don't change the value blob.

  - connection: The connection string of the storage account. The value StorageConnection matches the name in the local.settings.json file when developing locally.
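To illustrate how a path such as images/{name} relates to blob names, including names that contain folders, the following sketch mimics the matching in plain JavaScript. It is an illustration only and not how the Functions runtime is implemented; matchBlobPath is a hypothetical helper that handles only the single {name} placeholder used in this tutorial.

```javascript
// Illustration only: mimics how a trigger path like 'images/{name}' maps a
// blob path to the {name} value. The real matching happens inside the
// Azure Functions runtime; matchBlobPath is a hypothetical helper.
function matchBlobPath(pattern, blobPath) {
  const [container, placeholder] = pattern.split('/');
  const prefix = `${container}/`;
  if (placeholder !== '{name}' || !blobPath.startsWith(prefix)) {
    return null;
  }
  const name = blobPath.slice(prefix.length);
  // An empty name means the path points at the container, not a blob.
  return name.length > 0 ? { name } : null;
}

// A blob at the container root.
console.log(matchBlobPath('images/{name}', 'images/presentation.png'));
// → { name: 'presentation.png' }

// A blob inside virtual folders: the whole remainder becomes the name.
console.log(matchBlobPath('images/{name}', 'images/2023/07/scan.jpg'));
// → { name: '2023/07/scan.jpg' }

// A blob in a different container does not match.
console.log(matchBlobPath('images/{name}', 'documents/report.pdf'));
// → null
```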

Output Cosmos DB binding

When the function finishes, the function uses the returned object as the data to insert into Cosmos DB.

// ... function definition object

app.storageBlob('process-blob-image', {

// path and connection removed for brevity

handler: async (blob, context) => {

// ... preceding handler code removed for brevity

// Data to insert into Cosmos DB

const id = uuidv4().toString();

const analysis = await analyzeImage(blobUrl);

// `type` is the partition key

const dataToInsertToDatabase = {

id,

type: 'image',

blobUrl,

blobSize: blob.length,

analysis,

trigger: context.triggerMetadata

}

return dataToInsertToDatabase;

},

// Output binding for Cosmos DB

return: output.cosmosDB({

connection: 'CosmosDBConnection',

databaseName:'StorageTutorial',

containerName:'analysis'

})

});

For the container in this article, the following properties are required:

- id: The ID required for Cosmos DB to create a new row.

- /type: The partition key specified when the container was created.

The output.cosmosDB output binding is used to insert the result of the function into Cosmos DB. It takes the following parameters:

- connection: The connection string of the Cosmos DB account. The value CosmosDBConnection matches the name in the local.settings.json file.

- databaseName: The Cosmos DB database to connect to.

- containerName: The name of the container to write the value returned by the function. The container must already exist.
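As a rough sketch of how the id and type properties described above could be checked before the handler returns an object to the output binding, consider the following. It is not part of the sample project: findMissingProperties is a hypothetical helper, and Node's built-in crypto.randomUUID stands in for the uuid package so the snippet runs without dependencies.

```javascript
const { randomUUID } = require('crypto');

// Properties this tutorial's container expects on every item:
// `id` identifies the item and `type` is the partition key (/type).
const REQUIRED_PROPERTIES = ['id', 'type'];

// Hypothetical helper: returns the names of required properties that are
// missing or empty, so a problem is caught before the function returns.
function findMissingProperties(doc) {
  return REQUIRED_PROPERTIES.filter(
    (key) => typeof doc[key] !== 'string' || doc[key].length === 0
  );
}

const validDoc = { id: randomUUID(), type: 'image', blobSize: 1383614 };
const invalidDoc = { id: randomUUID(), blobSize: 1383614 };

console.log(findMissingProperties(validDoc));   // []
console.log(findMissingProperties(invalidDoc)); // [ 'type' ]
```

In the handler, a non-empty result could be logged with context.log so that a malformed object is easier to trace.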

Azure Function code

The following is the full function code.

const { app, input, output } = require('@azure/functions');

const { v4: uuidv4 } = require('uuid');

const { ApiKeyCredentials } = require('@azure/ms-rest-js');

const { ComputerVisionClient } = require('@azure/cognitiveservices-computervision');

const sleep = require('util').promisify(setTimeout);

const STATUS_SUCCEEDED = "succeeded";

const STATUS_FAILED = "failed";

const imageExtensions = ["jpg", "jpeg", "png", "bmp", "gif", "tiff"];

async function analyzeImage(url) {

try {

const computerVision_ResourceKey = process.env.ComputerVisionKey;

const computerVision_Endpoint = process.env.ComputerVisionEndPoint;

const computerVisionClient = new ComputerVisionClient(

new ApiKeyCredentials({ inHeader: { 'Ocp-Apim-Subscription-Key': computerVision_ResourceKey } }), computerVision_Endpoint);

const contents = await computerVisionClient.analyzeImage(url, {

visualFeatures: ['ImageType', 'Categories', 'Tags', 'Description', 'Objects', 'Adult', 'Faces']

});

return contents;

} catch (err) {

console.log(err);

}

}

app.storageBlob('process-blob-image', {

path: 'images/{name}',

connection: 'StorageConnection',

handler: async (blob, context) => {

context.log(`Storage blob 'process-blob-image' url:${context.triggerMetadata.uri}, size:${blob.length} bytes`);

const blobUrl = context.triggerMetadata.uri;

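// Only parse the extension when a URL is present, so a missing URL doesn't throw

const extension = blobUrl ? blobUrl.split('.').pop() : undefined;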

if(!blobUrl) {

// url is empty

return;

} else if (!extension || !imageExtensions.includes(extension.toLowerCase())){

// not processing file because it isn't a valid and accepted image extension

return;

} else {

//url is image

const id = uuidv4().toString();

const analysis = await analyzeImage(blobUrl);

// `type` is the partition key

const dataToInsertToDatabase = {

id,

type: 'image',

blobUrl,

blobSize: blob.length,

analysis,

trigger: context.triggerMetadata

}

return dataToInsertToDatabase;

}

},

return: output.cosmosDB({

connection: 'CosmosDBConnection',

databaseName:'StorageTutorial',

containerName:'analysis'

})

});

This code also retrieves essential configuration values from environment variables, such as the Blob Storage connection string and Computer Vision key. These environment variables are added to the Azure Function environment after it's deployed.

The function also calls a helper function named analyzeImage. This code uses the endpoint URL and key of the Computer Vision account to make a request to Computer Vision to process the image. The request returns the visual features requested in the code, such as tags, categories, and a description of the image. This analysis is written to Cosmos DB using the output binding.
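The handler above decides whether to process a blob by taking the text after the last period in its URL. The following sketch shows one possible variant that parses the URL first, so that a query string (for example, a SAS token) does not end up in the extension. It is not part of the sample project; isSupportedImage is a hypothetical helper, and the extension list is copied from the code above.

```javascript
const path = require('path');

// Same list of accepted extensions as the sample code.
const imageExtensions = ['jpg', 'jpeg', 'png', 'bmp', 'gif', 'tiff'];

// Hypothetical variant of the sample's extension check: looks only at the
// path portion of the URL and ignores any query string.
function isSupportedImage(blobUrl) {
  if (!blobUrl) {
    return false;
  }
  let pathname;
  try {
    pathname = new URL(blobUrl).pathname;
  } catch {
    return false; // not an absolute URL
  }
  // extname returns '.png' for '/images/a.png' and '' when there is none.
  const extension = path.posix.extname(pathname).slice(1).toLowerCase();
  return imageExtensions.includes(extension);
}

const base = 'https://msdocsstoragefunction.blob.core.windows.net/images';
console.log(isSupportedImage(`${base}/presentation.PNG?sv=2022`)); // true
console.log(isSupportedImage(`${base}/notes.txt`));                // false
console.log(isSupportedImage(undefined));                          // false
```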

Configure local settings

To run the project locally, enter the environment variables in the ./local.settings.json file. Fill in the placeholder values with the values you saved earlier when creating the Azure resources.

Although the Azure Function code runs locally, it connects to the cloud-based services for Storage, rather than using any local emulators.

{

"IsEncrypted": false,

"Values": {

"FUNCTIONS_WORKER_RUNTIME": "node",

"AzureWebJobsStorage": "",

"StorageConnection": "STORAGE-CONNECTION-STRING",

"StorageAccountName": "STORAGE-ACCOUNT-NAME",

"StorageContainerName": "STORAGE-CONTAINER-NAME",

"ComputerVisionKey": "COMPUTER-VISION-KEY",

"ComputerVisionEndPoint": "COMPUTER-VISION-ENDPOINT",

"CosmosDBConnection": "COSMOS-DB-CONNECTION-STRING"

}

}
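Since the function code reads these values from environment variables, a quick check for settings that are missing or still hold a placeholder can catch configuration mistakes early. The following is a minimal sketch under stated assumptions: findUnconfiguredSettings is a hypothetical helper, the list covers only the settings that the code shown above reads or references, and placeholders are assumed to look like the upper-case, hyphenated values in the file above.

```javascript
// Setting names taken from the local.settings.json file above.
const REQUIRED_SETTINGS = [
  'StorageConnection',
  'ComputerVisionKey',
  'ComputerVisionEndPoint',
  'CosmosDBConnection',
];

// Hypothetical helper: lists required settings that are unset, empty, or
// still hold an upper-case placeholder such as 'COMPUTER-VISION-KEY'.
function findUnconfiguredSettings(env) {
  return REQUIRED_SETTINGS.filter((name) => {
    const value = env[name];
    return !value || /^[A-Z]+(-[A-Z]+)+$/.test(value);
  });
}

// Warn once at startup if anything still needs a real value.
const unconfigured = findUnconfiguredSettings(process.env);
if (unconfigured.length > 0) {
  console.warn(`Settings not configured: ${unconfigured.join(', ')}`);
}
```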

Create Azure Functions app

You're now ready to deploy the application to Azure using a Visual Studio Code extension.

In Visual Studio Code, select Shift + Alt + A to open the Azure explorer.

In the Functions section, find and right-click the subscription, and select Create Function App in Azure (Advanced).

Use the following table to create the Function resource.

| Setting | Value |
|---|---|
| Name | Enter msdocsprocessimage or something similar. |
| Runtime stack | Select a Node.js LTS version. |
| Programming model | Select v4. |
| OS | Select Linux. |
| Resource Group | Choose the msdocs-storage-function resource group you created earlier. |
| Location | Select the same region as your resource group. |
| Plan Type | Select Consumption. |
| Azure Storage | Select the storage account you created earlier. |
| Application Insights | Skip for now. |

Azure provisions the requested resources, which will take a few moments to complete.

Deploy Azure Functions app

- When the previous resource creation process finishes, right-click the new resource in the Functions section of the Azure explorer, and select Deploy to Function App.

- If asked Are you sure you want to deploy..., select Deploy.

- When the process completes, a notification appears with choices that include Upload settings. Select that option. This copies the values from your local.settings.json file into your Azure Function app. If the notification disappeared before you could select it, continue to the next section.

Add app settings for Storage and Computer Vision

If you selected Upload settings in the notification, skip this section.

The Azure Function was deployed successfully, but it can't connect to our Storage account and Computer Vision services yet. The correct keys and connection strings must first be added to the configuration settings of the Azure Functions app.

Find your resource in the Functions section of the Azure explorer, right-click Application Settings, and select Add New Setting.

Enter a new app setting for each of the following secrets. Copy and paste your secret values from the local.settings.json file in your local project.

| Setting |
|---|
| StorageConnection |
| StorageAccountName |
| StorageContainerName |
| ComputerVisionKey |
| ComputerVisionEndPoint |
| CosmosDBConnection |

All of the required environment variables to connect our Azure function to different services are now in place.

Upload an image to Blob Storage

You're now ready to test out our application! You can upload a blob to the container, and then verify that the text in the image was saved to Cosmos DB.

- In the Azure explorer in Visual Studio Code, find and expand your Storage resource in the Storage section.

- Expand Blob Containers and right-click your container name, images, then select Upload files.

- You can find a few sample images included in the images folder at the root of the downloadable sample project, or you can use one of your own.

- For the Destination directory, accept the default value, /.

- Wait until the files are uploaded and listed in the container.

View text analysis of image

Next, you can verify that the upload triggered the Azure Function, and that the text in the image was analyzed and saved to Cosmos DB properly.

In Visual Studio Code, in the Azure Explorer, under the Azure Cosmos DB node, select your resource, and expand it to find your database, StorageTutorial.

Expand the database node.

An analysis container should now be available. Select the container's Documents node to preview the data inside. You should see an entry for the processed image text of an uploaded file.

{
  "id": "3cf7d6f0-a362-421e-9482-3020d7d1e689",
  "type": "image",
  "blobUrl": "https://msdocsstoragefunction.blob.core.windows.net/images/presentation.png",
  "blobSize": 1383614,
  "analysis": {
    ... details removed for brevity ...
    "categories": [],
    "adult": {},
    "imageType": {},
    "tags": [],
    "description": {},
    "faces": [],
    "objects": [],
    "requestId": "eead3d60-9905-499c-99c5-23d084d9cac2",
    "metadata": {},
    "modelVersion": "2021-05-01"
  },
  "trigger": {
    "blobTrigger": "images/presentation.png",
    "uri": "https://msdocsstorageaccount.blob.core.windows.net/images/presentation.png",
    "properties": {
      "lastModified": "2023-07-07T15:32:38+00:00",
      "createdOn": "2023-07-07T15:32:38+00:00",
      "metadata": {},
      ... removed for brevity ...
      "contentLength": 1383614,
      "contentType": "image/png",
      "accessTier": "Hot",
      "accessTierInferred": true
    },
    "metadata": {},
    "name": "presentation.png"
  },
  "_rid": "YN1FAKcZojEFAAAAAAAAAA==",
  "_self": "dbs/YN1FAA==/colls/YN1FAKcZojE=/docs/YN1FAKcZojEFAAAAAAAAAA==/",
  "_etag": "\"7d00f2d3-0000-0700-0000-64a830210000\"",
  "_attachments": "attachments/",
  "_ts": 1688743969
}

Congratulations! You succeeded in processing an image that was uploaded to Blob Storage using Azure Functions and Computer Vision.
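As a sketch of how a stored item might be consumed later, the following reduces an item to a few fields of interest. It is not part of the sample project: summarizeItem is a hypothetical helper, and it assumes the item shape shown above, with analysis.tags (when present) being an array of objects that each have a name property.

```javascript
// Hypothetical helper: reduces a stored item to a few fields of interest.
// Assumes the item shape shown above, where `analysis.tags` (when present)
// is an array of objects with a `name` property.
function summarizeItem(item) {
  const tags = (item.analysis && item.analysis.tags) || [];
  return {
    blobName: item.trigger ? item.trigger.name : undefined,
    sizeInKilobytes: Math.round(item.blobSize / 1024),
    tagNames: tags.map((tag) => tag.name),
  };
}

// Trimmed-down version of the item shown above.
const summary = summarizeItem({
  blobSize: 1383614,
  analysis: { tags: [] },
  trigger: { name: 'presentation.png' },
});

console.log(summary);
// → { blobName: 'presentation.png', sizeInKilobytes: 1351, tagNames: [] }
```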

Troubleshooting

Use the following table to help troubleshoot issues during this procedure.

| Issue | Resolution |
|---|---|
| await computerVisionClient.read(url); errors with Only absolute URLs are supported | Make sure your ComputerVisionEndPoint endpoint is in the format of https://YOUR-RESOURCE-NAME.cognitiveservices.azure.com/. |

Clean up resources

If you're not going to continue to use this application, you can delete the resources you created by removing the resource group.

- Select Resource groups from the Azure explorer.

- Find and right-click the msdocs-storage-function resource group from the list.

- Select Delete. The process to delete the resource group may take a few minutes to complete.

