What is your table definition?

It is almost certainly due to updating indexes in batches of 10,000. As your table/indexes grow, it takes longer to update.

10,000 is a very small batch. Try 100,000 to 500,000 row batch size.

This browser is no longer supported.

Upgrade to Microsoft Edge to take advantage of the latest features, security updates, and technical support.

We are doing a basic ETL from Oracle to SQL Server using SSIS (Using a script component).

It is a basic read and bulk insert.

The insert is done in batches of 10,000.

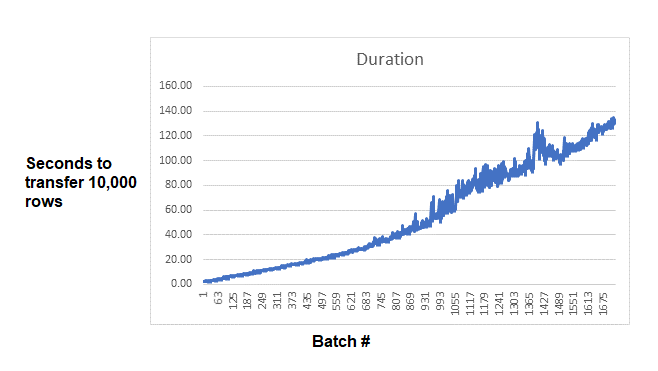

When doing an ETL of 17million rows, we observe a steady decline in performance.

First batch taking 2 seconds but the last taking 132.

Any areas to look for solving?

Thanks

What is your table definition?

It is almost certainly due to updating indexes in batches of 10,000. As your table/indexes grow, it takes longer to update.

10,000 is a very small batch. Try 100,000 to 500,000 row batch size.

Tom mentioned indexes. Having the clustered index in place can help - at least if the data loaded is being aligned to it. But it is much better to create non-clustered indexes until after the load has completed.

Hi @Gayan Dasanayake ,

It could be many problems. See here for MSDN recommendations on optimizing bulk inserts. Try limiting the batches to say 10,000 or 100,000 rows and see how that goes. If it helps a lot then you should spend some time to figure out your best batch size.

From SSIS side, here is a similar issue you may refer this to see if it will be helpful.

Regards,

Zoe

If the answer is helpful, please click "Accept Answer" and upvote it.

Note: Please follow the steps in our documentation to enable e-mail notifications if you want to receive the related email notification for this thread.

Hot issues October

We have found that when the Oracle oledb driver was changed from version 19.1 to 12.1 the issue was fixed.

However, the batch size and index-related optimizations would still improve I assume, which we are investigating further.

Thanks to all who helped.