Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

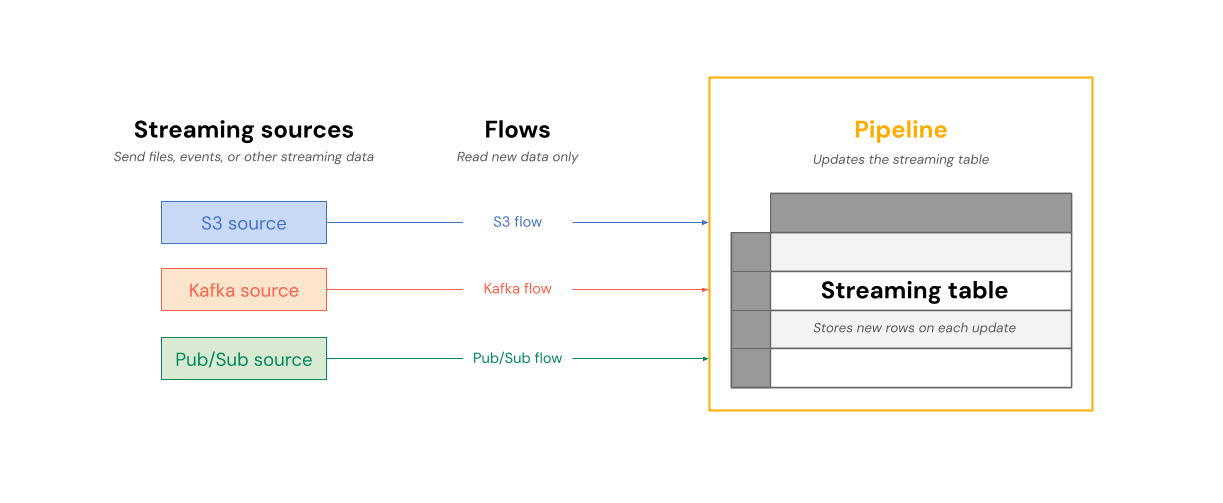

A Streaming table is a Delta table with additional support for streaming or incremental data processing. A Streaming table can be targeted by one or more flows in a pipeline.

Streaming tables are a good choice for data ingestion for the following reasons:

- Each input row is handled only once, which models the vast majority of ingestion workloads (that is, by appending or upserting rows into a table).

- They can handle large volumes of append-only data.

Streaming tables are also a good choice for low-latency streaming transformations because they can reason over rows and windows of time, handle high volumes of data, and provide low-latency processing.

The following diagram shows how flows read from streaming sources and write incrementally to a Streaming table within a pipeline.

On each update, the flows associated with a Streaming table read the changed information in a streaming source, and append new information to that table.

Streaming tables are owned and updated by a single pipeline. You explicitly define streaming tables in the source code of the pipeline. Tables defined by a pipeline can't be changed or updated by any other pipeline. You can define multiple flows to append to a single streaming table.

Azure Databricks creates internal tables to support Streaming table processing. These tables appear in system.information_schema.tables but are not visible in Catalog Explorer or other workspace UI pages.

Note

When you create a streaming table outside a pipeline using Databricks SQL, Azure Databricks creates a pipeline that is used to update the table. You can see the pipeline by selecting Jobs & Pipelines from the left navigation in your workspace. You can add the Pipeline type column to your view. Streaming tables defined in a pipeline have a type of ETL. Streaming tables created in Databricks SQL have a type of MV/ST.

For more information about flows, see Load and process data incrementally with Lakeflow Spark Declarative Pipelines flows.

Streaming tables for ingestion

Streaming tables are designed for append-only data sources and process inputs only once. This makes them well-suited for ingestion workloads where data arrives continuously and must be reliably captured without reprocessing existing records. Azure Databricks supports ingesting data from cloud storage and streaming message buses.

Ingest files from cloud storage

You can use a Streaming table to ingest new files from cloud storage. These examples use Auto Loader to incrementally process new files as they arrive.

Python

from pyspark import pipelines as dp

# Create a streaming table

@dp.table

def customers_bronze():

return (

spark.readStream.format("cloudFiles")

.option("cloudFiles.format", "json")

.option("cloudFiles.inferColumnTypes", "true")

.load("/Volumes/path/to/files")

)

To create a Streaming table, the data set definition must be a stream type. When you use the spark.readStream function in a data set definition, it returns a streaming dataset.

SQL

-- Create a streaming table

CREATE OR REFRESH STREAMING TABLE customers_bronze

AS SELECT * FROM STREAM read_files(

"/volumes/path/to/files",

format => "json"

);

Streaming tables require streaming datasets. The STREAM keyword before read_files tells the query to treat the data set as a stream.

Ingest streaming messages

You can also use streaming tables to ingest data from message buses. The following example demonstrates how to create a Streaming table that reads from a Pub/Sub topic.

Python

@dp.table

def pubsub_raw():

auth_options = {

"clientId": client_id,

"clientEmail": client_email,

"privateKey": private_key,

"privateKeyId": private_key_id

}

return (

spark.readStream

.format("pubsub")

.option("subscriptionId", "my-subscription")

.option("topicId", "my-topic")

.option("projectId", "my-project")

.options(auth_options)

.load()

)

SQL

CREATE OR REFRESH STREAMING TABLE pubsub_raw

AS SELECT * FROM STREAM read_pubsub(

subscriptionId => 'my-subscription',

projectId => 'my-project',

topicId => 'my-topic',

clientEmail => secret('pubsub-scope', 'clientEmail'),

clientId => secret('pubsub-scope', 'clientId'),

privateKeyId => secret('pubsub-scope', 'privateKeyId'),

privateKey => secret('pubsub-scope', 'privateKey')

);

Databricks recommends using secrets when providing authorization options. See Configure access to Pub/Sub for all authentication options.

For more details on loading data into streaming table, see Load data in pipelines.

The following diagram illustrates how append-only streaming tables work.

A row that has already been appended to a Streaming table will not be re-queried with later updates to the pipeline. If you modify the query (for example, from SELECT LOWER (name) to SELECT UPPER (name)), existing rows will not update to be uppercase, but new rows will be uppercase. You can trigger a full refresh to requery all previous data from the source table to update all rows in the Streaming table.

Streaming tables and low-latency streaming

Streaming tables are designed for low-latency streaming over bounded state. Streaming tables use checkpoint management, which makes them well-suited for low-latency streaming. However, they expect streams that are naturally bounded or bounded with a watermark.

A naturally bounded stream is produced by a streaming data source that has a well-defined start and end. An example of a naturally bounded stream is reading data from a directory of files where no new files are being added after an initial batch of files is placed. The stream is considered bounded because the number of files is finite, and the stream ends after all of the files have been processed.

You can also use a watermark to bound a stream. A watermark in Structured Streaming is a mechanism that helps handle late data by specifying how long the system should wait for delayed events before considering the window of time as complete. An unbounded stream that does not have a watermark can cause a pipeline to fail due to memory pressure.

For more information about stateful stream processing, see Optimize stateful processing with watermarks.

Stream-snapshot joins

Stream-snapshot joins connect a streaming dataset to a dimension table that is snapshotted at stream start. Because the dimension table is treated as fixed at that point in time, any changes made to it after the stream starts are not reflected in the join. This is acceptable when small discrepancies don't matter — for example, when the number of transactions is many orders of magnitude larger than the number of customers.

The following code sample joins a dimension table with two rows called customers with an ever-increasing data set, transactions. It materializes a join between these two datasets in a table called sales_report. If an outside process updates the customers table by adding a new row (customer_id=3, name=Zoya), this new row will not be present in the join because the static dimension table was snapshotted when streams were started.

from pyspark import pipelines as dp

@dp.temporary_view

# assume this table contains an append-only stream of rows about transactions

# (customer_id=1, value=100)

# (customer_id=2, value=150)

# (customer_id=3, value=299)

# ... <and so on> ...

def v_transactions():

return spark.readStream.table("transactions")

# assume this table contains only these two rows about customers

# (customer_id=1, name=Bilal)

# (customer_id=2, name=Olga)

@dp.temporary_view

def v_customers():

return spark.read.table("customers")

@dp.table

def sales_report():

facts = spark.readStream.table("v_transactions")

dims = spark.read.table("v_customers")

return facts.join(dims, on="customer_id", how="inner")

Streaming table limitations

Streaming tables have the following limitations:

- Limited evolution: You can change the query without recomputing the entire data set. Without a full refresh, a Streaming table only sees each row once, so different queries will have processed different rows. For example, if you add

UPPER()to a field in the query, only rows processed after the change will be in uppercase. This means you must be aware of all previous versions of the query that are running on your data set. To reprocess existing rows that were processed prior to the change, a full refresh is required. - State management: Streaming tables are low-latency and require streams that are naturally bounded or bounded with a watermark. For more information, see Optimize stateful processing with watermarks.

- Joins don't recompute: Joins in streaming tables do not recompute when dimensions change. This characteristic can be good for “fast-but-wrong” scenarios. If you want your view to always be correct, you might want to use a Materialized view. Materialized views are always correct because they automatically recompute joins when dimensions change. For more information, see Materialized views.