Tutorial: Detect liveness in faces

Face Liveness detection is used to determine if a face in an input video stream is real (live) or fake (spoofed). It's an important building block in a biometric authentication system to prevent imposters from gaining access to the system using a photograph, video, mask, or other means to impersonate another person.

The goal of liveness detection is to ensure that the system is interacting with a physically present, live person at the time of authentication. These systems are increasingly important with the rise of digital finance, remote access control, and online identity verification processes.

The Azure AI Face liveness detection solution successfully defends against a variety of spoof types, including paper printouts, 2D/3D masks, and spoof presentations on phones and laptops. Liveness detection is an active area of research, and improvements are rolled out to the client and the service components over time as the overall solution becomes more robust to new types of attacks.

Important

The Face client SDKs for liveness are a gated feature. You must request access to the liveness feature by filling out the Face Recognition intake form. When your Azure subscription is granted access, you can download the Face liveness SDK.

Introduction

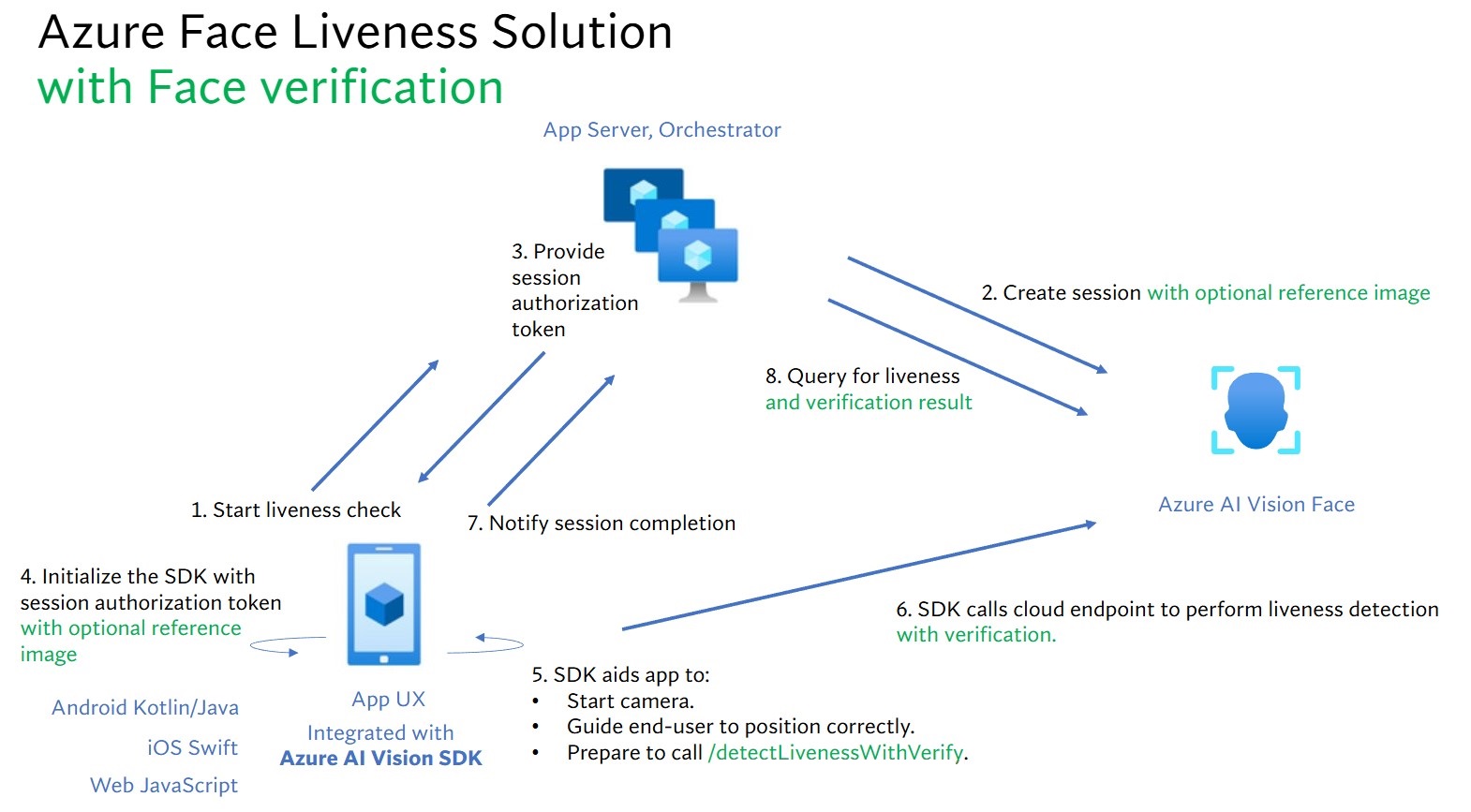

The liveness solution integration involves two distinct components: a frontend mobile/web application and an app server/orchestrator.

- Frontend application: The frontend application receives authorization from the app server to initiate liveness detection. Its primary objective is to activate the camera and guide end-users accurately through the liveness detection process.

- App server: The app server serves as a backend server to create liveness detection sessions and obtain an authorization token from the Face service for a particular session. This token authorizes the frontend application to perform liveness detection. The app server's objectives are to manage the sessions, to grant authorization to the frontend application, and to view the results of the liveness detection process.

Additionally, we combine face verification with liveness detection to verify whether the person is the specific person you designated. The following table describes details of the liveness detection features:

| Feature | Description |
|---|---|
| Liveness detection | Determine whether an input is real or fake. Only the app server has the authority to start the liveness check and query the result. |
| Liveness detection with face verification | Determine whether an input is real or fake and verify the identity of the person based on a reference image you provide. Either the app server or the frontend application can provide a reference image. Only the app server has the authority to initiate the liveness check and query the result. |

This tutorial demonstrates how to operate a frontend application and an app server to perform liveness detection and liveness detection with face verification across various language SDKs.

Prerequisites

- Azure subscription - Create one for free

- Your Azure account must have a Cognitive Services Contributor role assigned in order for you to agree to the responsible AI terms and create a resource. To get this role assigned to your account, follow the steps in the Assign roles documentation, or contact your administrator.

- Once you have your Azure subscription, create a Face resource in the Azure portal to get your key and endpoint. After it deploys, select Go to resource.

- You need the key and endpoint from the resource you create to connect your application to the Face service.

- You can use the free pricing tier (`F0`) to try the service, and upgrade later to a paid tier for production.

- Access to the Azure AI Vision Face Client SDK for mobile (iOS and Android) and web. To get started, you need to apply for the Face Recognition Limited Access features to get access to the SDK. For more information, see the Face Limited Access page.

Set up frontend applications and app servers to perform liveness detection

We provide SDKs in different languages for frontend applications and app servers. See the following instructions to set up your frontend applications and app servers.

Download SDK for frontend application

Once you have access to the SDK, follow instructions in the azure-ai-vision-sdk GitHub repository to integrate the UI and the code into your native mobile application. The liveness SDK supports Java/Kotlin for Android mobile applications, Swift for iOS mobile applications and JavaScript for web applications:

- For Swift iOS, follow the instructions in the iOS sample

- For Kotlin/Java Android, follow the instructions in the Android sample

- For JavaScript Web, follow the instructions in the Web sample

Once you've added the code into your application, the SDK handles starting the camera, guiding the end-user in adjusting their position, composing the liveness payload, and calling the Azure AI Face cloud service to process the liveness payload.

Download Azure AI Face client library for app server

The app server/orchestrator is responsible for controlling the lifecycle of a liveness session. The app server has to create a session before performing liveness detection, and then it can query the result and delete the session when the liveness check is finished. We offer a library in various languages for easily implementing your app server. Follow these steps to install the package you want:

- For C#, follow the instructions in the dotnet readme

- For Java, follow the instructions in the Java readme

- For Python, follow the instructions in the Python readme

- For JavaScript, follow the instructions in the JavaScript readme
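The create, query, delete lifecycle described above can be sketched independently of any particular client library. The following is a minimal illustration, not the Face client library's API: `SessionBackend`, `InMemoryBackend`, and `liveness_session` are hypothetical names introduced here, and the in-memory backend exists only so the sketch runs without network access. It shows one way to guarantee that a session is deleted even if querying the result fails.

```python
from contextlib import contextmanager
from typing import Dict, Iterator, Protocol


class SessionBackend(Protocol):
    """Hypothetical interface; a real app server would wrap the Face client library."""

    def create_session(self) -> str: ...
    def get_result(self, session_id: str) -> Dict: ...
    def delete_session(self, session_id: str) -> None: ...


@contextmanager
def liveness_session(backend: SessionBackend) -> Iterator[str]:
    """Create a session, hand its id to the caller, and always delete it afterwards."""
    session_id = backend.create_session()
    try:
        yield session_id
    finally:
        backend.delete_session(session_id)


class InMemoryBackend:
    """Stand-in backend (an assumption for this sketch), not a real service client."""

    def __init__(self) -> None:
        self.sessions: Dict[str, Dict] = {}
        self._counter = 0

    def create_session(self) -> str:
        self._counter += 1
        session_id = f"session-{self._counter}"
        self.sessions[session_id] = {"status": "ResultAvailable"}
        return session_id

    def get_result(self, session_id: str) -> Dict:
        return self.sessions[session_id]

    def delete_session(self, session_id: str) -> None:
        self.sessions.pop(session_id, None)


backend = InMemoryBackend()
with liveness_session(backend) as session_id:
    status = backend.get_result(session_id)["status"]
print(status, len(backend.sessions))  # ResultAvailable 0
```

Using a context manager keeps the cleanup next to the creation, so an exception raised while handling the result can't leave a session behind.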

Create environment variables

In this example, write your credentials to environment variables on the local machine that runs the application.

Go to the Azure portal. If the resource you created in the Prerequisites section deployed successfully, select Go to resource under Next Steps. You can find your key and endpoint under Resource Management in the Keys and Endpoint page. Your resource key isn't the same as your Azure subscription ID.

To set the environment variable for your key and endpoint, open a console window and follow the instructions for your operating system and development environment.

- To set the `FACE_APIKEY` environment variable, replace `<your_key>` with one of the keys for your resource.

- To set the `FACE_ENDPOINT` environment variable, replace `<your_endpoint>` with the endpoint for your resource.

Important

If you use an API key, store it securely somewhere else, such as in Azure Key Vault. Don't include the API key directly in your code, and never post it publicly.

For more information about AI services security, see Authenticate requests to Azure AI services.

```console
setx FACE_APIKEY <your_key>
setx FACE_ENDPOINT <your_endpoint>
```

After you add the environment variables, you may need to restart any running programs that will read the environment variables, including the console window.
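Before the app server makes any call, it can help to check that both variables are actually visible to the process. The following is a small sketch, assuming the variable names `FACE_ENDPOINT` and `FACE_APIKEY` used above. The `FaceConfig` type and the `load_face_config` function are illustrative names, not part of any SDK.

```python
import os
from typing import Mapping, NamedTuple


class FaceConfig(NamedTuple):
    endpoint: str
    api_key: str


def load_face_config(env: Mapping[str, str] = os.environ) -> FaceConfig:
    """Read FACE_ENDPOINT and FACE_APIKEY, failing fast if either is missing or blank."""
    names = ("FACE_ENDPOINT", "FACE_APIKEY")
    missing = [name for name in names if not env.get(name, "").strip()]
    if missing:
        raise RuntimeError("Missing environment variable(s): " + ", ".join(missing))
    # Drop a trailing slash so later URL joins don't produce "//".
    endpoint = env["FACE_ENDPOINT"].strip().rstrip("/")
    return FaceConfig(endpoint, env["FACE_APIKEY"].strip())
```

Passing a plain dictionary instead of `os.environ` makes the function easy to exercise in tests without touching the real environment.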

Perform liveness detection

The high-level steps involved in liveness orchestration are illustrated below:

1. The frontend application starts the liveness check and notifies the app server.

2. The app server creates a new liveness session with Azure AI Face Service. The service creates a liveness-session and responds back with a session-authorization-token. More information regarding each request parameter involved in creating a liveness session is referenced in Liveness Create Session Operation.

    ```csharp
    using System;
    using Azure;
    using Azure.AI.Vision.Face;

    // Read the endpoint and key from the environment variables set earlier.
    var endpoint = new Uri(System.Environment.GetEnvironmentVariable("FACE_ENDPOINT"));
    var credential = new AzureKeyCredential(System.Environment.GetEnvironmentVariable("FACE_APIKEY"));

    var sessionClient = new FaceSessionClient(endpoint, credential);

    // Describe the session: passive mode, tied to one device, results kept server-side.
    var createContent = new CreateLivenessSessionContent(LivenessOperationMode.Passive)
    {
        DeviceCorrelationId = "723d6d03-ef33-40a8-9682-23a1feb7bccd",
        SendResultsToClient = false,
    };

    var createResponse = await sessionClient.CreateLivenessSessionAsync(createContent);
    var sessionId = createResponse.Value.SessionId;
    Console.WriteLine("Session created.");
    Console.WriteLine($"Session id: {sessionId}");
    Console.WriteLine($"Auth token: {createResponse.Value.AuthToken}");
    ```

    An example of the response body:

    ```json
    {
      "sessionId": "a6e7193e-b638-42e9-903f-eaf60d2b40a5",
      "authToken": "<session-authorization-token>"
    }
    ```

3. The app server provides the session-authorization-token back to the frontend application.

4. The frontend application provides the session-authorization-token during the Azure AI Vision SDK’s initialization.

5. The SDK then starts the camera, guides the user to position correctly, and then prepares the payload to call the liveness detection service endpoint.

6. The SDK calls the Azure AI Vision Face service to perform the liveness detection. Once the service responds, the SDK notifies the frontend application that the liveness check has been completed.

7. The frontend application relays the liveness check completion to the app server.

8. The app server can now query for the liveness detection result from the Azure AI Vision Face service.

    ```csharp
    var getResultResponse = await sessionClient.GetLivenessSessionResultAsync(sessionId);
    var sessionResult = getResultResponse.Value;

    Console.WriteLine($"Session id: {sessionResult.Id}");
    Console.WriteLine($"Session status: {sessionResult.Status}");
    // Result is null until the liveness check has been processed.
    Console.WriteLine($"Liveness detection request id: {sessionResult.Result?.RequestId}");
    Console.WriteLine($"Liveness detection received datetime: {sessionResult.Result?.ReceivedDateTime}");
    Console.WriteLine($"Liveness detection decision: {sessionResult.Result?.Response.Body.LivenessDecision}");
    Console.WriteLine($"Session created datetime: {sessionResult.CreatedDateTime}");
    Console.WriteLine($"Auth token TTL (seconds): {sessionResult.AuthTokenTimeToLiveInSeconds}");
    Console.WriteLine($"Session expired: {sessionResult.SessionExpired}");
    Console.WriteLine($"Device correlation id: {sessionResult.DeviceCorrelationId}");
    ```

    An example of the response body:

    ```json
    {
      "status": "ResultAvailable",
      "result": {
        "id": 1,
        "sessionId": "a3dc62a3-49d5-45a1-886c-36e7df97499a",
        "requestId": "cb2b47dc-b2dd-49e8-bdf9-9b854c7ba843",
        "receivedDateTime": "2023-10-31T16:50:15.6311565+00:00",
        "request": {
          "url": "/face/v1.1-preview.1/detectliveness/singlemodal",
          "method": "POST",
          "contentLength": 352568,
          "contentType": "multipart/form-data; boundary=--------------------------482763481579020783621915",
          "userAgent": ""
        },
        "response": {
          "body": {
            "livenessDecision": "realface",
            "target": {
              "faceRectangle": {
                "top": 59,
                "left": 121,
                "width": 409,
                "height": 395
              },
              "fileName": "content.bin",
              "timeOffsetWithinFile": 0,
              "imageType": "Color"
            },
            "modelVersionUsed": "2022-10-15-preview.04"
          },
          "statusCode": 200,
          "latencyInMilliseconds": 1098
        },
        "digest": "537F5CFCD8D0A7C7C909C1E0F0906BF27375C8E1B5B58A6914991C101E0B6BFC"
      },
      "id": "a3dc62a3-49d5-45a1-886c-36e7df97499a",
      "createdDateTime": "2023-10-31T16:49:33.6534925+00:00",
      "authTokenTimeToLiveInSeconds": 600,
      "deviceCorrelationId": "723d6d03-ef33-40a8-9682-23a1feb7bccd",
      "sessionExpired": false
    }
    ```

9. The app server can delete the session once it no longer needs to query the result.

    ```csharp
    await sessionClient.DeleteLivenessSessionAsync(sessionId);
    Console.WriteLine($"The session {sessionId} is deleted.");
    ```
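After querying the result, the app server still has to turn the response body into an accept or reject decision. The sketch below works on the JSON shape shown in the example response above, trimmed to the fields it reads. The function names `liveness_decision` and `is_live` are illustrative. Treating any status other than `"ResultAvailable"`, or any decision other than `"realface"`, as a failure is an assumption made here so that the check fails closed.

```python
import json
from typing import Any, Dict, Optional


def liveness_decision(session: Dict[str, Any]) -> Optional[str]:
    """Return the livenessDecision string, or None if no usable result is present."""
    if session.get("status") != "ResultAvailable":
        return None
    response = (session.get("result") or {}).get("response") or {}
    if response.get("statusCode") != 200:
        return None
    return (response.get("body") or {}).get("livenessDecision")


def is_live(session: Dict[str, Any]) -> bool:
    """Accept only an explicit "realface" decision; anything else is rejected."""
    return liveness_decision(session) == "realface"


# Trimmed copy of the example response body shown in this tutorial.
sample = json.loads("""
{
  "status": "ResultAvailable",
  "result": {
    "response": {
      "body": {"livenessDecision": "realface"},
      "statusCode": 200
    }
  },
  "id": "a3dc62a3-49d5-45a1-886c-36e7df97499a",
  "sessionExpired": false
}
""")
print(is_live(sample))  # True
```

Keeping the decision logic in a pure function makes it easy to test against recorded response bodies without calling the service.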

Perform liveness detection with face verification

Combining face verification with liveness detection enables biometric verification of a particular person of interest with an added guarantee that the person is physically present in the system. There are two parts to integrating liveness with verification:

- Select a good reference image.

- Set up the orchestration of liveness with verification.

Select a reference image

Use the following tips to ensure that your input images give the most accurate recognition results.

Technical requirements

- The supported input image formats are JPEG, PNG, GIF (the first frame), BMP.

- The image file size should be no larger than 6 MB.

- You can utilize the `qualityForRecognition` attribute in the face detection operation when using applicable detection models as a general guideline of whether the image is likely of sufficient quality to attempt face recognition on. Only `"high"` quality images are recommended for person enrollment, and quality at or above `"medium"` is recommended for identification scenarios.
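The technical requirements above can be pre-checked on the app server before a reference image is submitted. The following sketch is illustrative only. It identifies the format from the file's leading bytes, enforces the 6 MB limit (interpreted here as 6 × 1024 × 1024 bytes, which is an assumption), and compares a `qualityForRecognition` value against the recommended minimum. The function names, the `"low"` quality level, and the `"enrollment"` and `"identification"` purpose labels are assumptions introduced for this example.

```python
from typing import List, Optional

# Assumption: "6 MB" is read as 6 MiB; adjust if the service counts differently.
MAX_IMAGE_BYTES = 6 * 1024 * 1024

# Leading "magic" bytes of the supported formats listed above.
_SIGNATURES = {
    "JPEG": (b"\xff\xd8\xff",),
    "PNG": (b"\x89PNG\r\n\x1a\n",),
    "GIF": (b"GIF87a", b"GIF89a"),
    "BMP": (b"BM",),
}

# Assumption: quality levels are ordered low < medium < high.
_QUALITY_RANK = {"low": 0, "medium": 1, "high": 2}


def sniff_format(data: bytes) -> Optional[str]:
    """Return the detected format name, or None if the header is not recognized."""
    for name, prefixes in _SIGNATURES.items():
        if data.startswith(prefixes):
            return name
    return None


def check_reference_image(data: bytes) -> List[str]:
    """Return a list of problems; an empty list means the basic checks passed."""
    problems = []
    if sniff_format(data) is None:
        problems.append("unsupported format (expected JPEG, PNG, GIF, or BMP)")
    if len(data) > MAX_IMAGE_BYTES:
        problems.append("file is larger than 6 MB")
    return problems


def quality_ok(quality: str, purpose: str) -> bool:
    """purpose is "enrollment" (needs high) or "identification" (needs medium or better)."""
    required = {"enrollment": "high", "identification": "medium"}[purpose]
    return _QUALITY_RANK[quality.lower()] >= _QUALITY_RANK[required]
```

These checks only cover file-level properties. Composition issues such as occlusions, pose, or lighting still have to be judged from the image content.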

Composition requirements

- Photo is clear and sharp, not blurry, pixelated, distorted, or damaged.

- Photo is not altered to remove face blemishes or change face appearance.

- Photo must be in an RGB color supported format (JPEG, PNG, WEBP, BMP). Recommended face size is 200 pixels x 200 pixels. Face sizes larger than 200 pixels x 200 pixels will not result in better AI quality. The image should be no larger than 6 MB in size.

- User is not wearing glasses, masks, hats, headphones, head coverings, or face coverings. Face should be free of any obstructions.

- Facial jewelry is allowed provided it does not hide the face.

- Only one face should be visible in the photo.

- Face should be in neutral front-facing pose with both eyes open, mouth closed, with no extreme facial expressions or head tilt.

- Face should be free of any shadows or red eyes. Retake the photo if either of these occurs.

- Background should be uniform and plain, free of any shadows.

- Face should be centered within the image and fill at least 50% of the image.

Set up the orchestration of liveness with verification

The high-level steps involved in liveness with verification orchestration are illustrated below:

1. Provide the verification reference image by either of the following two methods:

    - The app server provides the reference image when creating the liveness session. More information regarding each request parameter involved in creating a liveness session with verification is referenced in Liveness With Verify Create Session Operation.

      ```csharp
      using System;
      using System.IO;
      using Azure;
      using Azure.AI.Vision.Face;

      // Read the endpoint and key from the environment variables set earlier.
      var endpoint = new Uri(System.Environment.GetEnvironmentVariable("FACE_ENDPOINT"));
      var credential = new AzureKeyCredential(System.Environment.GetEnvironmentVariable("FACE_APIKEY"));

      var sessionClient = new FaceSessionClient(endpoint, credential);

      var createContent = new CreateLivenessWithVerifySessionContent(LivenessOperationMode.Passive)
      {
          DeviceCorrelationId = "723d6d03-ef33-40a8-9682-23a1feb7bccd"
      };

      // Send the reference image together with the session request.
      using var fileStream = new FileStream("test.png", FileMode.Open, FileAccess.Read);
      var createResponse = await sessionClient.CreateLivenessWithVerifySessionAsync(createContent, fileStream);
      var sessionId = createResponse.Value.SessionId;
      var verifyImage = createResponse.Value.VerifyImage;

      Console.WriteLine("Session created.");
      Console.WriteLine($"Session id: {sessionId}");
      Console.WriteLine($"Auth token: {createResponse.Value.AuthToken}");
      Console.WriteLine("The reference image:");
      Console.WriteLine($" Face rectangle: {verifyImage.FaceRectangle.Top}, {verifyImage.FaceRectangle.Left}, {verifyImage.FaceRectangle.Width}, {verifyImage.FaceRectangle.Height}");
      Console.WriteLine($" The quality for recognition: {verifyImage.QualityForRecognition}");
      ```

      An example of the response body:

      ```json
      {
        "verifyImage": {
          "faceRectangle": {
            "top": 506,
            "left": 51,
            "width": 680,
            "height": 475
          },
          "qualityForRecognition": "high"
        },
        "sessionId": "3847ffd3-4657-4e6c-870c-8e20de52f567",
        "authToken": "<session-authorization-token>"
      }
      ```

    - The frontend application provides the reference image when initializing the SDK. This scenario is not supported in the web solution.

2. The app server can now query for the verification result in addition to the liveness result.

    ```csharp
    var getResultResponse = await sessionClient.GetLivenessWithVerifySessionResultAsync(sessionId);
    var sessionResult = getResultResponse.Value;

    Console.WriteLine($"Session id: {sessionResult.Id}");
    Console.WriteLine($"Session status: {sessionResult.Status}");
    // Result is null until the liveness check has been processed.
    Console.WriteLine($"Liveness detection request id: {sessionResult.Result?.RequestId}");
    Console.WriteLine($"Liveness detection received datetime: {sessionResult.Result?.ReceivedDateTime}");
    Console.WriteLine($"Liveness detection decision: {sessionResult.Result?.Response.Body.LivenessDecision}");
    Console.WriteLine($"Verification result: {sessionResult.Result?.Response.Body.VerifyResult.IsIdentical}");
    Console.WriteLine($"Verification confidence: {sessionResult.Result?.Response.Body.VerifyResult.MatchConfidence}");
    Console.WriteLine($"Session created datetime: {sessionResult.CreatedDateTime}");
    Console.WriteLine($"Auth token TTL (seconds): {sessionResult.AuthTokenTimeToLiveInSeconds}");
    Console.WriteLine($"Session expired: {sessionResult.SessionExpired}");
    Console.WriteLine($"Device correlation id: {sessionResult.DeviceCorrelationId}");
    ```

    An example of the response body:

    ```json
    {
      "status": "ResultAvailable",
      "result": {
        "id": 1,
        "sessionId": "3847ffd3-4657-4e6c-870c-8e20de52f567",
        "requestId": "f71b855f-5bba-48f3-a441-5dbce35df291",
        "receivedDateTime": "2023-10-31T17:03:51.5859307+00:00",
        "request": {
          "url": "/face/v1.1-preview.1/detectlivenesswithverify/singlemodal",
          "method": "POST",
          "contentLength": 352568,
          "contentType": "multipart/form-data; boundary=--------------------------590588908656854647226496",
          "userAgent": ""
        },
        "response": {
          "body": {
            "livenessDecision": "realface",
            "target": {
              "faceRectangle": {
                "top": 59,
                "left": 121,
                "width": 409,
                "height": 395
              },
              "fileName": "content.bin",
              "timeOffsetWithinFile": 0,
              "imageType": "Color"
            },
            "modelVersionUsed": "2022-10-15-preview.04",
            "verifyResult": {
              "matchConfidence": 0.9304124,
              "isIdentical": true
            }
          },
          "statusCode": 200,
          "latencyInMilliseconds": 1306
        },
        "digest": "2B39F2E0EFDFDBFB9B079908498A583545EBED38D8ACA800FF0B8E770799F3BF"
      },
      "id": "3847ffd3-4657-4e6c-870c-8e20de52f567",
      "createdDateTime": "2023-10-31T16:58:19.8942961+00:00",
      "authTokenTimeToLiveInSeconds": 600,
      "deviceCorrelationId": "723d6d03-ef33-40a8-9682-23a1feb7bccd",
      "sessionExpired": true
    }
    ```

3. The app server can delete the session once it no longer needs to query the result.

    ```csharp
    await sessionClient.DeleteLivenessWithVerifySessionAsync(sessionId);
    Console.WriteLine($"The session {sessionId} is deleted.");
    ```
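With verification enabled, the app server has two signals to combine: the liveness decision and the `verifyResult` block. The sketch below reads both from the response shape shown above and accepts a user only when both pass. `VerifyOutcome` and `evaluate_verify_session` are illustrative names. The optional `min_confidence` threshold is an assumption added here for applications that want a stricter bar than `isIdentical` alone; it is not a service parameter.

```python
from typing import Any, Dict, NamedTuple


class VerifyOutcome(NamedTuple):
    live: bool
    identical: bool
    confidence: float

    @property
    def accepted(self) -> bool:
        # Both checks must pass: a live face AND a match with the reference image.
        return self.live and self.identical


def evaluate_verify_session(session: Dict[str, Any], min_confidence: float = 0.0) -> VerifyOutcome:
    """Combine liveness and verification results from a session result body."""
    body = ((session.get("result") or {}).get("response") or {}).get("body") or {}
    verify = body.get("verifyResult") or {}
    confidence = float(verify.get("matchConfidence", 0.0))
    live = session.get("status") == "ResultAvailable" and body.get("livenessDecision") == "realface"
    identical = bool(verify.get("isIdentical", False)) and confidence >= min_confidence
    return VerifyOutcome(live, identical, confidence)


# Trimmed copy of the example response body shown in this tutorial.
session = {
    "status": "ResultAvailable",
    "result": {
        "response": {
            "body": {
                "livenessDecision": "realface",
                "verifyResult": {"matchConfidence": 0.9304124, "isIdentical": True},
            },
            "statusCode": 200,
        }
    },
}
outcome = evaluate_verify_session(session, min_confidence=0.9)
print(outcome.accepted)  # True
```

Returning the individual signals alongside the combined verdict lets the caller log why a request was rejected.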

Clean up resources

If you want to clean up and remove an Azure AI services subscription, you can delete the resource or resource group. Deleting the resource group also deletes any other resources associated with it.

Related content

To learn about other options in the liveness APIs, see the Azure AI Vision SDK reference.

To learn more about the features available to orchestrate the liveness solution, see the Session REST API reference.