HB-series virtual machine sizes

Applies to: ✔️ Linux VMs ✔️ Windows VMs ✔️ Flexible scale sets ✔️ Uniform scale sets

The following table summarizes results from performance tests run on HB-series sizes.

| Workload | HB |
|---|---|
| STREAM Triad | 260 GB/s (32-33 GB/s per CCX) |
| High-Performance Linpack (HPL) | 1,000 GigaFLOPS (Rpeak), 860 GigaFLOPS (Rmax) |
| RDMA latency & bandwidth | 1.27 microseconds, 99.1 Gb/s |
| FIO on local NVMe SSD | 1.7 GB/s reads, 1.0 GB/s writes |
| IOR on 4 x Azure Premium SSD (P30 Managed Disks, RAID0) | 725 MB/s reads, 780 MB/s writes |
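
Two derived figures can make the table easier to compare against other systems: the HPL efficiency (Rmax divided by Rpeak) and the RDMA bandwidth expressed in gigabytes per second. The following is a minimal sketch of that arithmetic using only the values listed in the table. The function names `hpl_efficiency` and `gbit_to_gbyte` are illustrative helpers, not part of any benchmark suite.

```shell
#!/usr/bin/env bash
# Derived figures from the table values; awk handles the floating-point math.

# HPL efficiency = Rmax / Rpeak, printed as a whole percentage.
hpl_efficiency() {
  awk -v rmax="$1" -v rpeak="$2" 'BEGIN { printf "%.0f%%\n", rmax / rpeak * 100 }'
}

# Convert gigabits per second to gigabytes per second (8 bits per byte).
gbit_to_gbyte() {
  awk -v gbit="$1" 'BEGIN { printf "%.1f\n", gbit / 8 }'
}

hpl_efficiency 860 1000   # Rmax and Rpeak in GigaFLOPS, from the table
gbit_to_gbyte 99.1        # RDMA bandwidth in Gb/s, from the table
```

With the table values, this works out to an HPL efficiency of 86% and an RDMA bandwidth of roughly 12.4 GB/s.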

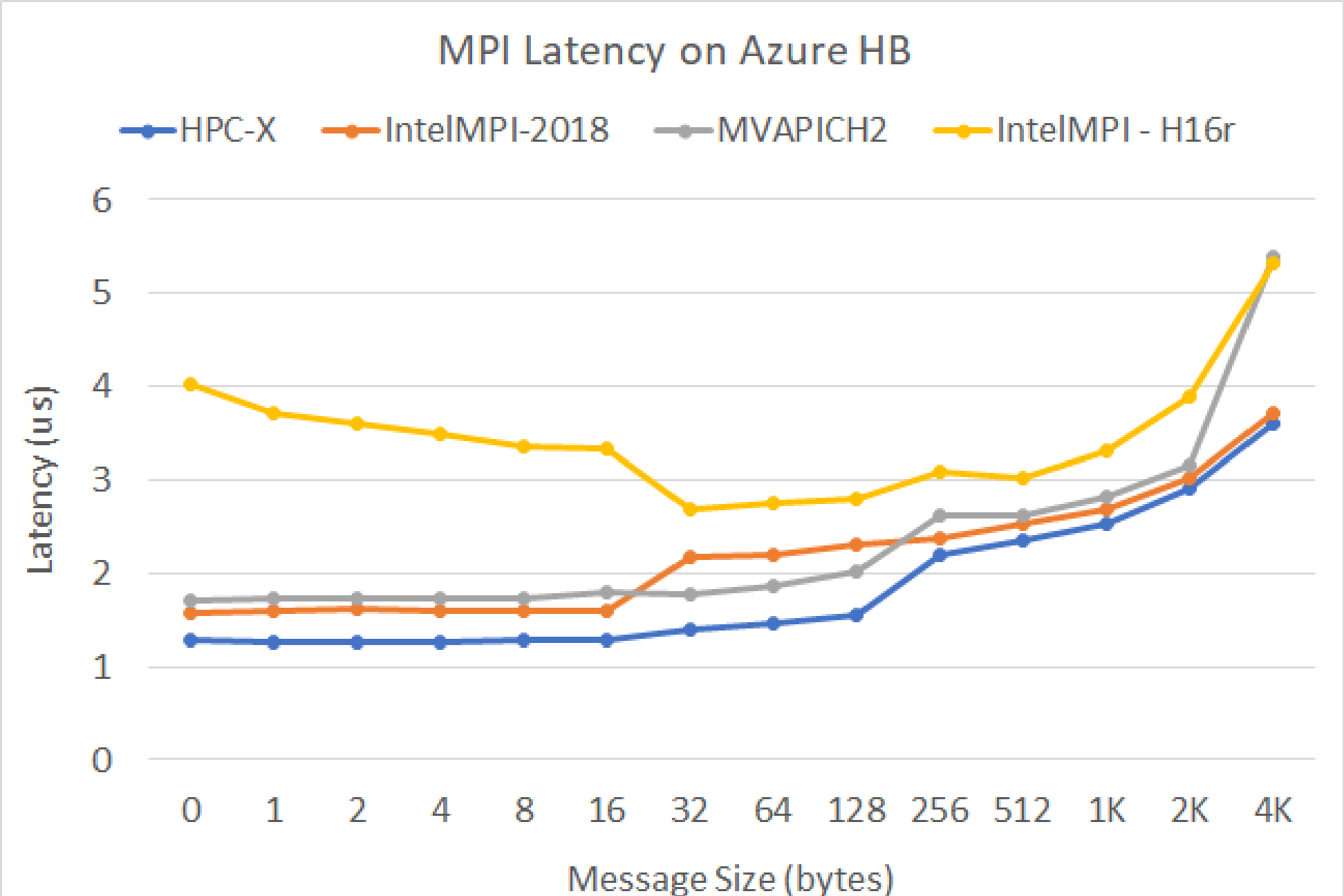

MPI latency

The MPI latency test from the OSU microbenchmark suite is run. Sample scripts are on GitHub.

./bin/mpirun_rsh -np 2 -hostfile ~/hostfile MV2_CPU_MAPPING=[INSERT CORE #] ./osu_latency
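
Because the command takes a core number in place of `[INSERT CORE #]`, it can be useful to repeat the test for several cores. The following dry-run sketch only prints one command per core so that each can be reviewed before it is run. The core list `0 4 8` and the helper name `build_latency_cmd` are assumptions for illustration; choose cores that match the VM's topology.

```shell
#!/usr/bin/env bash
# Dry-run sketch: print one osu_latency command per core to pin to.
# CORES is a hypothetical list; adjust it to the cores you want to test.
CORES="0 4 8"

build_latency_cmd() {
  # $1 = core number substituted for [INSERT CORE #]
  printf './bin/mpirun_rsh -np 2 -hostfile ~/hostfile MV2_CPU_MAPPING=%s ./osu_latency\n' "$1"
}

for core in $CORES; do
  build_latency_cmd "$core"   # prints the command; it is not executed here
done
```

To execute the printed commands instead of only listing them, the script's output could be piped to `sh` from the directory that contains `bin/mpirun_rsh` and `osu_latency`.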

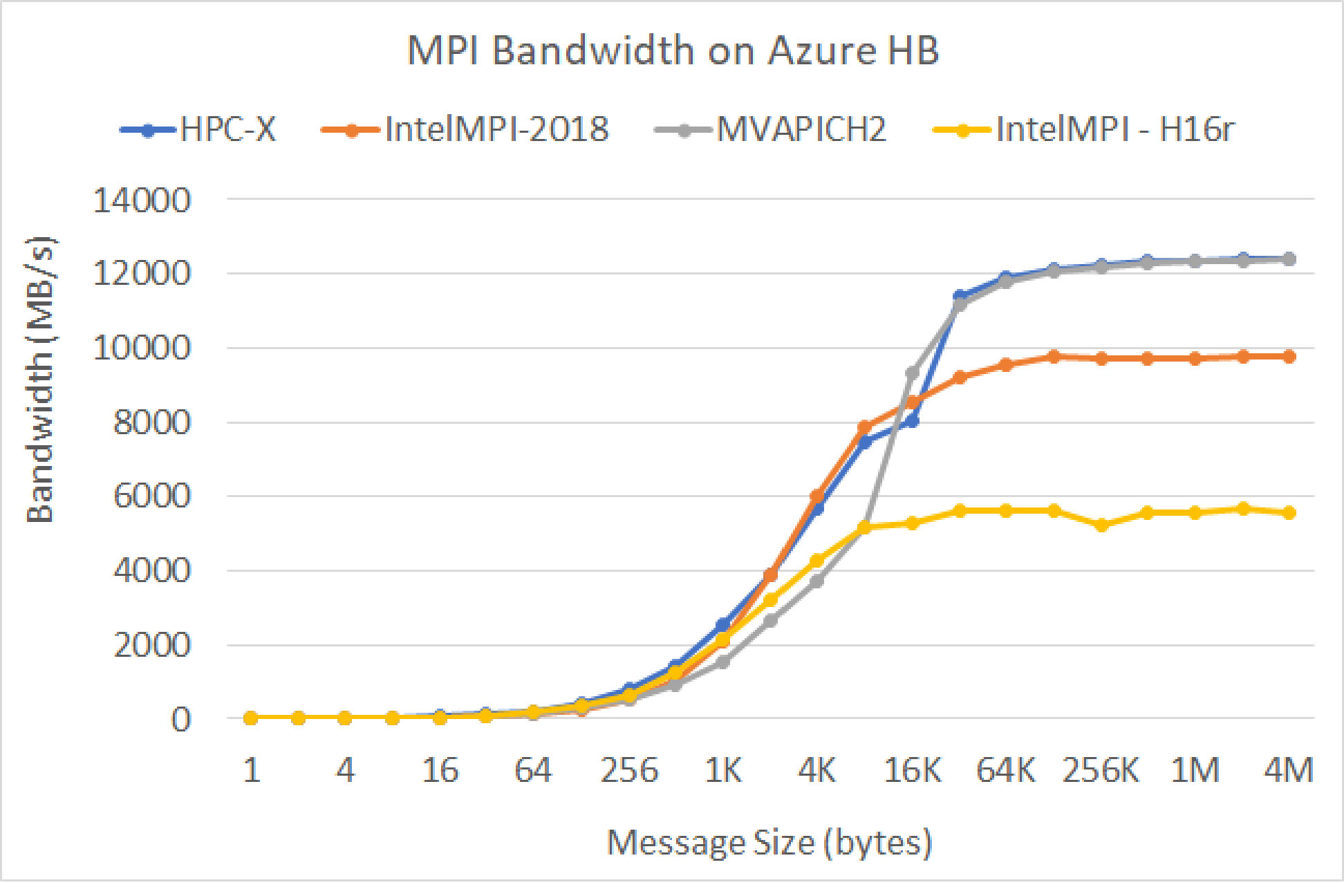

MPI bandwidth

The MPI bandwidth test from the OSU microbenchmark suite is run. Sample scripts are on GitHub.

./mvapich2-2.3.install/bin/mpirun_rsh -np 2 -hostfile ~/hostfile MV2_CPU_MAPPING=[INSERT CORE #] ./mvapich2-2.3/osu_benchmarks/mpi/pt2pt/osu_bw
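
To compare a bandwidth run against the Gb/s figure in the results table, the peak value can be extracted from the test output and converted. The sketch below assumes the output consists of header lines starting with `#` followed by two-column rows of message size and bandwidth in MB/s; verify that assumption against the output of your build. The helper name `peak_bandwidth` is hypothetical, and the sample rows are placeholders, not measured results.

```shell
#!/usr/bin/env bash
# Report the peak bandwidth from osu_bw-style output read on stdin.
# Assumed format: '#' header lines, then rows of "<size> <bandwidth in MB/s>".
peak_bandwidth() {
  awk '!/^#/ && NF == 2 { if ($2 + 0 > max) max = $2 + 0 }
       END { printf "%.1f MB/s = %.1f Gb/s\n", max, max * 8 / 1000 }'
}

# Placeholder rows for illustration only, not measured results.
peak_bandwidth <<'EOF'
# Size      Bandwidth (MB/s)
1           2.0
1024        1000.0
4194304     12000.0
EOF
```

In practice, the output of the `osu_bw` command above would be piped into `peak_bandwidth` in place of the placeholder rows.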

Mellanox Perftest

The Mellanox Perftest package has many InfiniBand tests, such as latency (ib_send_lat) and bandwidth (ib_send_bw). The following example command runs the latency test pinned to a chosen core.

numactl --physcpubind=[INSERT CORE #] ib_send_lat -a
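
Perftest tools run as a server and client pair: the server side is started without a peer argument, and the client side is given the server's hostname. The following sketch prints both command lines for a chosen core and test. The hostname `server-vm`, the core number `0`, and the helper name `perftest_cmd` are placeholders for illustration.

```shell
#!/usr/bin/env bash
# Print the server-side and client-side Perftest commands for one core.
perftest_cmd() {
  # $1 = core number, $2 = test binary, $3 = server hostname (client side only)
  printf 'numactl --physcpubind=%s %s -a%s\n' "$1" "$2" "${3:+ $3}"
}

perftest_cmd 0 ib_send_lat             # run this on the server VM first
perftest_cmd 0 ib_send_lat server-vm   # then run this on the client VM
```

Passing `ib_send_bw` as the second argument produces the equivalent pair of commands for the bandwidth test.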

Next steps

- Read about the latest announcements, HPC workload examples, and performance results at the Azure Compute Tech Community Blogs.

- For a higher-level architectural view of running HPC workloads, see High Performance Computing (HPC) on Azure.