The Four Stages of NTFS File Growth, Part 2

A few years ago I wrote a blog entry entitled, “The Four Stages of NTFS File Growth”.

This attempted to explain what happens to a file as it gains complexity, complexity being akin to fragmentation.

If you have not read the above-mentioned blog entry, please do so now. This information will not make the slightest bit of sense unless you read my earlier post. I’ll wait.

https://blogs.technet.com/b/askcore/archive/2009/10/16/the-four-stages-of-ntfs-file-growth.aspx

Welcome back.

Since its posting, I have answered a number of questions, mostly about the structure called the attribute list. So today I want to cover it in a little more depth and hopefully address some of those questions.

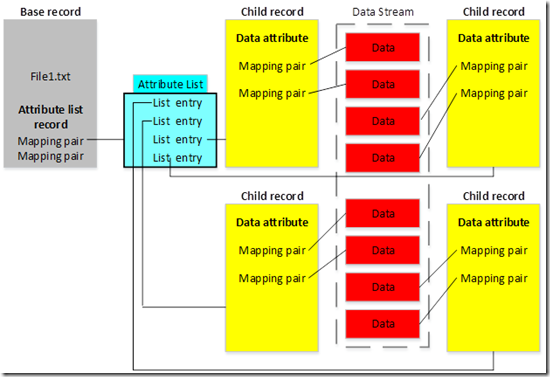

In the previous blog entry, I explained how very complex files had the potential of creating an attribute list (shown below).

The base record and all the child records are each 1kb in size. Each child record keeps track of a portion of the file’s data stream. The more fragmented the data stream, the more mapping pairs are required to track the fragments, and thus the more child records will be created. Each child record must be tracked in the attribute list.

Keep in mind that the child records can hold much more than just two mapping pairs. This is just simplified to keep the diagram from being completely unreadable.
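For readers who want to see what a mapping pair looks like in practice, here is a minimal Python sketch of a decoder. It assumes the commonly documented NTFS run-list layout, which this post does not describe: a header byte whose low nibble gives the size of the run length field and whose high nibble gives the size of a signed cluster offset relative to the previous run, with a zero byte ending the list. The function name and the sample bytes are illustrative only.

```python
def decode_mapping_pairs(data: bytes):
    """Decode a run list into (start_cluster, length_in_clusters) tuples.

    Assumed layout (not taken from this post): each pair starts with a
    header byte; low nibble = size of the length field, high nibble = size
    of the offset field. A run with no offset field is a sparse run and is
    returned with start_cluster set to None.
    """
    runs = []
    pos = 0
    current_lcn = 0  # offsets are deltas from the previous run's start
    while pos < len(data) and data[pos] != 0x00:
        header = data[pos]
        len_size = header & 0x0F
        off_size = header >> 4
        pos += 1
        length = int.from_bytes(data[pos:pos + len_size], "little")
        pos += len_size
        if off_size == 0:
            runs.append((None, length))  # sparse run: no clusters allocated
        else:
            delta = int.from_bytes(data[pos:pos + off_size], "little", signed=True)
            pos += off_size
            current_lcn += delta
            runs.append((current_lcn, length))
    return runs


# Made-up sample: two allocated runs followed by one sparse run.
sample = bytes.fromhex("21 18 34 56 11 08 F0 01 10 00")
print(decode_mapping_pairs(sample))
```

Every tuple in the result is one fragment that a record has to track. Under this layout a sparse hole still costs a mapping pair, which is consistent with sparse files using up record space quickly.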

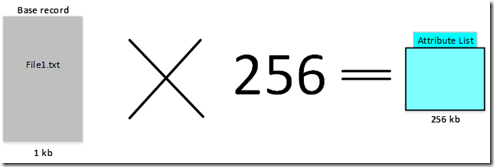

The problem with this is the attribute list itself. It is NOT a child record; it is created using free space outside the Master File Table (MFT). A file’s attribute list has a hard limit on how large it can grow. This cannot be changed. If it were, it would break backwards compatibility with older versions of NTFS that wouldn’t know how to deal with a larger attribute list.

NOTE: The diagram shows the attribute list as being smaller than the 1kb file record. And while it is true that it starts out that way, the upper limitation of the attribute list is 256kb.

So it is possible to hit a point where a file cannot add on any additional fragments. This is often the case when the following error messages are encountered.

- Insufficient system resources exist to complete the requested service

- The requested operation could not be completed due to a file system limitation

What these messages are trying to tell us is that the attribute list has grown to its maximum size and additional file fragments cannot be created.
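To get a rough feel for that ceiling, the following Python sketch estimates how many entries fit in a full attribute list. The 256kb limit comes from the note above. The 32-byte entry size is an assumption on my part (a fixed-size entry for an unnamed data stream, padded to an 8-byte boundary), and real attribute lists also hold entries for other attributes, so treat the result as an upper bound rather than an exact figure.

```python
ATTRIBUTE_LIST_LIMIT = 256 * 1024  # bytes, the upper limit stated above

# Assumption: each entry that points at one child record's piece of the
# data stream occupies 32 bytes. This figure is not stated in the post.
ASSUMED_ENTRY_SIZE = 32


def max_attribute_list_entries(limit=ATTRIBUTE_LIST_LIMIT,
                               entry_size=ASSUMED_ENTRY_SIZE):
    """Return how many fixed-size entries fit in a full attribute list."""
    return limit // entry_size


print(max_attribute_list_entries())  # 8192
```

Once every one of those entries points at a child record that is itself full of mapping pairs, there is nowhere left to record another fragment.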

To put this into perspective, this isn’t simply about file SIZE. It has to do with how fragmented the file is. In fact, it is very hard to MAKE this happen. There are really only two scenarios where it is somewhat common.

- Compressing very large files, like virtual hard disks (VHD)

- Very large SQL snapshots, which are sparse

Both compressed and sparse files introduce high levels of fragmentation because of how they are stored. So very large files that are also sparse or compressed run the risk of hitting this limitation. To add to the problem, you cannot clear this up by running defragmentation/optimization. Sparse and compressed files are going to be fragmented.

The good news is that we figured out a way around this. The bad news is that it isn’t really well understood.

It really starts with this hotfix.

Installing the hotfix doesn’t resolve the issue by itself. What it does is give us the ability to create instances of NTFS that use file records that are 4kb in size, rather than the 1kb that NTFS has used for the longest time.

How is this possible? If we can’t change the size of the attribute list, how can we change the size of file records?

The attribute list size is a hard-coded limitation. Microsoft made the decision, for performance reasons, to keep a lid on how big the attribute list can grow. On the other hand, file record size is self-defined. By default, the size is defined as 1kb, but records could be other sizes, as long as all the records in a volume are the same size.

This was put to the test when 4kb sector hard drives started to become popular. Since you wouldn’t want a file record to be smaller than a sector, these 4kb sector drives were formatted to utilize a file record size of 4kb. That’s where the hotfix comes into the picture. In addition to being able to use 4kb file records on 4kb sector hard drives, an option was added to the FORMAT.EXE command to force it to create an instance of NTFS with 4kb file records, regardless of sector size.

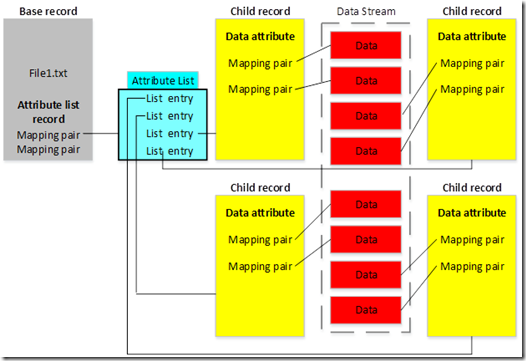

So why should we care about the size of the file records? Look at the diagram again.

If the records are bigger, they can store more mapping pairs, and thus track more fragments. In theory, a file could have FOUR TIMES the number of fragments before running into the same issue.
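The arithmetic behind that claim can be sketched in a few lines of Python. Both the per-record overhead (128 bytes for headers) and the average mapping pair size (6 bytes) are hypothetical round numbers chosen for illustration; real values vary with the file and the volume.

```python
def pairs_per_record(record_size, overhead=128, pair_bytes=6):
    """Estimate how many mapping pairs fit in one file record.

    overhead and pair_bytes are illustrative assumptions, not NTFS constants.
    """
    return (record_size - overhead) // pair_bytes


small = pairs_per_record(1024)  # 1kb file records
large = pairs_per_record(4096)  # 4kb file records
print(small, large, round(large / small, 2))  # 149 661 4.44
```

Under these assumptions the gain is slightly better than four times, because the fixed overhead in each record does not grow with the record size.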

The catch is that the size of file records is set at the time of formatting. So if you have a volume that is running into this issue, you will need to do the following.

- Copy off your files

- Reformat the drive using the /L switch (Format /L)

- Copy the files back
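Before and after the reformat, it can be useful to confirm which file record size a volume is actually using. The Python sketch below parses the text produced by `fsutil fsinfo ntfsinfo` and pulls out the "Bytes Per FileRecord Segment" value. The sample text is illustrative, the exact spacing of the real output differs between Windows versions, and the helper name is hypothetical.

```python
import re


def file_record_size(ntfsinfo_text):
    """Return the file record size in bytes, or None if the line is missing."""
    match = re.search(r"Bytes Per FileRecord Segment\s*:\s*([\d,]+)",
                      ntfsinfo_text)
    if match is None:
        return None
    return int(match.group(1).replace(",", ""))


# Illustrative sample only. On a real system the text would come from
# running "fsutil fsinfo ntfsinfo D:" in an elevated prompt (D: is a
# hypothetical drive letter).
sample_output = """\
Bytes Per Sector               : 512
Bytes Per Cluster              : 4096
Bytes Per FileRecord Segment   : 4096
"""

print(file_record_size(sample_output))  # 4096
```

A value of 1024 means the volume uses the default 1kb records, while 4096 means it was formatted with large records.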

You can’t change the size of file records after the fact. It has to be set when formatting. But without an understanding of just what it is that we are changing.

This solves the problem in the short term. For the long term, other solutions were implemented to prevent fragmentation past a certain point. In the newer versions of Windows, NTFS will stop fragmenting compressed and sparse files before the attribute list reaches 100% of its maximum size.

This should put the issue to rest once and for all. However, until everyone gets to Windows 8.1 or Windows Server 2012 R2, we will still run into this issue from time to time.

For more information about 4kb sector drives, check out my article on Windows IT Pro.

https://windowsitpro.com/windows/promise-advanced-format-hard-drives

Robert Mitchell

Senior Support Escalation Engineer

Microsoft Enterprise Platforms Support

Comments

- Anonymous

January 01, 2003

Daniel....recent changes that were part of Windows 8.1 and Windows Server 2012 R2 help to prevent us from hitting the limitation at all. When the attribute list reaches about 75% of its upper limit, Windows no longer adds additional fragmentation from runs of zeros for empty compression units. The file can still get fragmented from other sources, but it's not as likely to hit the limit from that alone.

In fact, I've noticed a sharp decline in these issues being reported to us. So yes, this should put the entire issue to rest. :)

- Anonymous

January 01, 2003

This should put the issue to rest once and for all. However, until everyone gets to Windows 8.1 or Windows Server 2012 R2, we will still run into this issue from time to time.

What do you mean by not running into the issue on Windows 8.1?

- Anonymous

January 01, 2003

Great work Robtm ... your ex-colleague Jasc :)

- Anonymous

May 16, 2015

Robert:

5 years later you went up to bat and took a good shot at it.

We are benefiting from your dexterity, mind, and most importantly your heart which is translating to generosity in sharing hard earned knowledge.

Daniel Adeniji

- Anonymous

May 28, 2015

Thank You for the Education

- Anonymous

May 31, 2015

ok

- Anonymous

July 20, 2015

When will you add support for Windows 10? Right now "Boot Defragmentation" is not working, and while the computer is running PD cannot defrag "Pages & Boot files".

Windows 10 also doesn't allow the last update to install, and I have full Administrator rights.

- Anonymous

August 06, 2015

Hello Kosjan,

I'm afraid that your questions are outside the scope of this blog post. If you have questions about Windows 10, please post them to the Windows 10 forums. Thanks for your interest.

- Anonymous

September 16, 2015

Ok - very useful - now an interesting question - does defragmentation reduce or consolidate the records in the child records?

Say there was a sparse file and it had segments that indicated free space between two blocks of data (data1-space-data2), but then data was added over time and now there is no free space any more (data1-data2). The space has gone away, so the new record could just show that there is one block [data12], and one fewer entry is needed in the child record (this could happen many times). So now, in theory, all the entries in the child record (beyond data12) could move up, making slot(s) available at the end.

- This could even happen a lot, allowing child records to be retired and entries from later child records to be copied down into earlier ones.

Does NTFS or PerfectDisk do this?

- Anonymous

August 30, 2016

Yes and no. We don't consolidate the records. We will remove them from the file, but the discarded records and child records will simply be marked as unused in the MFT. Then when new files are created, the records will be repurposed to the new files. We don't shrink the MFT. Yes, they are removed from the file, but no, they aren't completely deleted.

- Anonymous

January 03, 2016

Great article! :)