Target-based scaling provides a fast and intuitive scaling model for customers and is currently supported for these binding extensions:

- Apache Kafka

- Azure Cosmos DB

- Azure Event Hubs

- Azure Queue Storage

- Azure Service Bus (queue and topics)

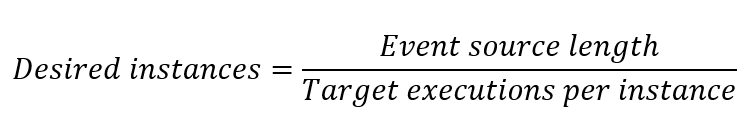

Target-based scaling replaces the previous Azure Functions incremental scaling model as the default for these extension types. Incremental scaling added or removed a maximum of one worker at each new instance rate, with complex decisions for when to scale. In contrast, target-based scaling allows scale up of four instances at a time, and the scaling decision is based on a simple target-based equation:

desired instances = event source length / target executions per instance

In this equation, event source length refers to the number of events that must be processed. The default target executions per instance values come from the Software Development Kits (SDKs) used by the Azure Functions extensions. You don't need to make any changes for target-based scaling to work.
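As a rough worked illustration with hypothetical numbers (not measurements), suppose a queue holds 1,000 messages and the target executions per instance is 16. Assuming the result is rounded up to a whole instance, which the equation itself doesn't spell out, the calculation looks like this:

$$
\text{desired instances} = \left\lceil \frac{\text{event source length}}{\text{target executions per instance}} \right\rceil = \left\lceil \frac{1000}{16} \right\rceil = \lceil 62.5 \rceil = 63
$$

The actual instance count can still be lower than this figure, because scale limits continue to apply.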

Considerations

The following considerations apply when using target-based scaling:

- Target-based scaling is enabled by default for function apps on the Consumption plan, Flex Consumption plan, and Elastic Premium plans. Event-driven scaling isn't supported when running on Dedicated (App Service) plans.

- Target-based scaling is enabled by default starting with version 4.19.0 of the Functions runtime.

- When you use target-based scaling, scale limits are still honored. For more information, see Limit scale out.

- To achieve the most accurate scaling based on metrics, use only one target-based triggered function per function app. You should also consider running in a Flex Consumption plan, which offers per-function scaling.

- When multiple functions in the same function app are all requesting to scale out at the same time, a sum across those functions is used to determine the change in desired instances. Functions requesting to scale out override functions requesting to scale in.

- When there are scale-in requests without any scale-out requests, the max scale in value is used.
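The combination rules in the last two items can be illustrated with hypothetical per-function requests. The notation is an assumption made for illustration only: $\Delta_A$ and $\Delta_B$ are the instance changes requested by two functions in the same app, and "max scale in value" is read here as the largest requested reduction, which the text doesn't define precisely.

$$
\begin{aligned}
\Delta_A = +2,\ \Delta_B = +1 &\;\Rightarrow\; \Delta_{\text{app}} = 2 + 1 = +3 && \text{(scale-out requests are summed)} \\
\Delta_A = +2,\ \Delta_B = -1 &\;\Rightarrow\; \Delta_{\text{app}} = +2 && \text{(scale out overrides scale in)} \\
\Delta_A = -1,\ \Delta_B = -3 &\;\Rightarrow\; \Delta_{\text{app}} = -3 && \text{(only scale-in requests)}
\end{aligned}
$$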

Opting out

Target-based scaling is enabled by default for function apps hosted on a Consumption plan or a Premium plan. To disable target-based scaling and fall back to incremental scaling, add the following app setting to your function app:

| App Setting | Value |
|---|---|
| TARGET_BASED_SCALING_ENABLED | 0 |
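If you manage app settings through an Azure Resource Manager (ARM) template rather than the portal, the setting might be expressed as in the following fragment. This is a minimal sketch that assumes the standard `siteConfig.appSettings` name/value array of a `Microsoft.Web/sites` resource; the rest of the resource definition and your other app settings are omitted here.

```json
{
  "siteConfig": {
    "appSettings": [
      {
        "name": "TARGET_BASED_SCALING_ENABLED",
        "value": "0"
      }
    ]
  }
}
```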

Customizing target-based scaling

You can make the scaling behavior more or less aggressive based on your app's workload by adjusting target executions per instance. Each extension has different settings that you can use to set target executions per instance.

This table summarizes the host.json values that are used for the target executions per instance values and the defaults:

| Extension | host.json values | Default Value |

|---|---|---|

| Event Hubs (Extension v5.x+) | extensions.eventHubs.maxEventBatchSize | 100* |

| Event Hubs (Extension v3.x+) | extensions.eventHubs.eventProcessorOptions.maxBatchSize | 10 |

| Event Hubs (if defined) | extensions.eventHubs.targetUnprocessedEventThreshold | n/a |

| Service Bus (Extension v5.x+, Single Dispatch) | extensions.serviceBus.maxConcurrentCalls | 16 |

| Service Bus (Extension v5.x+, Single Dispatch Sessions Based) | extensions.serviceBus.maxConcurrentSessions | 8 |

| Service Bus (Extension v5.x+, Batch Processing) | extensions.serviceBus.maxMessageBatchSize | 1000 |

| Service Bus (Functions v2.x+, Single Dispatch) | extensions.serviceBus.messageHandlerOptions.maxConcurrentCalls | 16 |

| Service Bus (Functions v2.x+, Single Dispatch Sessions Based) | extensions.serviceBus.sessionHandlerOptions.maxConcurrentSessions | 2000 |

| Service Bus (Functions v2.x+, Batch Processing) | extensions.serviceBus.batchOptions.maxMessageCount | 1000 |

| Storage Queue | extensions.queues.batchSize | 16 |

* The default maxEventBatchSize changed in v6.0.0 of the Microsoft.Azure.WebJobs.Extensions.EventHubs package. In earlier versions, this value was 10.

For some binding extensions, the target executions per instance configuration is set using a function attribute:

| Extension | Function trigger setting | Default Value |

|---|---|---|

| Apache Kafka | lagThreshold | 1000 |

| Azure Cosmos DB | maxItemsPerInvocation | 100 |

To learn more, see the example configurations for the supported extensions.

Premium plan with runtime scale monitoring enabled

When runtime scale monitoring is enabled, the extensions themselves handle dynamic scaling because the scale controller doesn't have access to services secured by a virtual network. After you enable runtime scale monitoring, you need to upgrade your extension packages to these minimum versions to unlock the extra target-based scaling functionality:

| Extension Name | Minimum Version Needed |

|---|---|

| Apache Kafka | 3.9.0 |

| Azure Cosmos DB | 4.1.0 |

| Event Hubs | 5.2.0 |

| Service Bus | 5.9.0 |

| Storage Queue | 5.1.0 |

Dynamic concurrency support

Target-based scaling introduces faster scaling, and uses defaults for target executions per instance. When using Service Bus, Storage queues, or Kafka, you can also enable dynamic concurrency. In this configuration, the target executions per instance value is determined automatically by the dynamic concurrency feature. It starts with limited concurrency and identifies the best setting over time.
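As a sketch of what enabling dynamic concurrency can look like, the following host.json fragment uses the `concurrency` section. The `dynamicConcurrencyEnabled` and `snapshotPersistenceEnabled` names aren't defined in this article; they come from the host.json concurrency settings, so confirm them against the dynamic concurrency documentation for your runtime version.

```json
{
  "version": "2.0",
  "concurrency": {
    "dynamicConcurrencyEnabled": true,
    "snapshotPersistenceEnabled": true
  }
}
```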

Supported extensions

The way in which you configure target-based scaling in your host.json file depends on the specific extension type. This section provides the configuration details for the extensions that currently support target-based scaling.

Service Bus queues and topics

The Service Bus extension supports three execution models, determined by the IsBatched and IsSessionsEnabled attributes of your Service Bus trigger. The default value for both IsBatched and IsSessionsEnabled is false.

| Execution Model | IsBatched | IsSessionsEnabled | Setting Used for target executions per instance |

|---|---|---|---|

| Single dispatch processing | false | false | maxConcurrentCalls |

| Single dispatch processing (session-based) | false | true | maxConcurrentSessions |

| Batch processing | true | false | maxMessageBatchSize or maxMessageCount |

Note

Scale efficiency: For the Service Bus extension, use Manage rights on resources for the most efficient scaling. With Listen rights, scaling reverts to incremental scale because the queue or topic length can't be used to inform scaling decisions. To learn more about setting rights in Service Bus access policies, see Shared Access Authorization Policy.

Single dispatch processing

In this model, each invocation of your function processes a single message. The maxConcurrentCalls setting governs target executions per instance. The specific setting depends on the version of the Service Bus extension.

Modify the host.json setting maxConcurrentCalls, as in the following example:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "maxConcurrentCalls": 16
    }
  }
}
```
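For the earlier extension versions (Functions v2.x+), the summary table earlier in this article lists the setting path as `extensions.serviceBus.messageHandlerOptions.maxConcurrentCalls`. A host.json sketch that follows that path, with the default value from the table, might look like this:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "messageHandlerOptions": {
        "maxConcurrentCalls": 16
      }
    }
  }
}
```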

Single dispatch processing (session-based)

In this model, each invocation of your function processes a single message. However, depending on the number of active sessions for your Service Bus topic or queue, each instance leases one or more sessions. The specific setting depends on the version of the Service Bus extension.

Modify the host.json setting maxConcurrentSessions to set target executions per instance, as in the following example:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "maxConcurrentSessions": 8
    }
  }
}
```
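For the earlier extension versions (Functions v2.x+), the summary table earlier in this article lists the setting path as `extensions.serviceBus.sessionHandlerOptions.maxConcurrentSessions`, with a default of 2000. A host.json sketch that follows that path might look like this:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "sessionHandlerOptions": {
        "maxConcurrentSessions": 2000
      }
    }
  }
}
```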

Batch processing

In this model, each invocation of your function processes a batch of messages. The specific setting depends on the version of the Service Bus extension.

Modify the host.json setting maxMessageBatchSize to set target executions per instance, as in the following example:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "maxMessageBatchSize": 1000
    }
  }
}
```
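For the earlier extension versions (Functions v2.x+), the summary table earlier in this article lists the setting path as `extensions.serviceBus.batchOptions.maxMessageCount`. A host.json sketch that follows that path, with the default value from the table, might look like this:

```json
{
  "version": "2.0",
  "extensions": {
    "serviceBus": {
      "batchOptions": {
        "maxMessageCount": 1000
      }
    }
  }
}
```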

Event Hubs

For Azure Event Hubs, Azure Functions scales based on the number of unprocessed events distributed across all the partitions in the event hub, within a list of valid instance counts. By default, the host.json attributes used for target executions per instance are maxEventBatchSize and maxBatchSize. However, to fine-tune target-based scaling, you can define a separate parameter, targetUnprocessedEventThreshold, that overrides these defaults and sets target executions per instance without changing the batch settings. If targetUnprocessedEventThreshold is set, the total unprocessed event count is divided by this value to determine the number of instances, which is then rounded up to a worker instance count that creates a balanced partition distribution.

Warning

Setting batchCheckpointFrequency above 1 for hosting plans supported by target-based scaling can cause incorrect scaling behavior. The platform calculates unprocessed events as "current position - checkpointed position", which can incorrectly indicate unprocessed messages when batches have been processed but not yet checkpointed. This prevents proper scale-in when no messages remain.
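A minimal sketch of a host.json that keeps checkpointing at every batch follows. It assumes that batchCheckpointFrequency sits directly under `extensions.eventHubs`, which isn't shown elsewhere in this article, so verify the location for your extension version.

```json
{
  "version": "2.0",
  "extensions": {
    "eventHubs": {
      "batchCheckpointFrequency": 1
    }
  }
}
```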

Scaling Behavior and Stability

For Event Hubs, frequent scale-in and scale-out operations can trigger partition rebalancing, which leads to processing delays and increased latency. To mitigate this:

- The platform uses a predefined list of valid worker counts to guide scaling decisions.

- The platform ensures that scaling is stable and deliberate, avoiding disruptive changes to partition assignments.

- If the desired worker count isn't in the valid list (for example, 17), the system automatically selects the next larger valid count, which in this case is 32.

Additionally, to prevent rapid repeated scaling, scale-in requests are throttled for 3 minutes after the last scale-up. This delay helps reduce unnecessary rebalancing and contributes to maintaining throughput efficiency.

Valid Instance Counts for Event Hubs

For each Event Hubs partition count, we calculate a corresponding list of valid instance counts to ensure optimal distribution and efficient scaling. These counts are chosen to align well with partitioning and concurrency requirements:

| Partition Count | Valid Instance Counts |

|---|---|

| 1 | [1] |

| 2 | [1, 2] |

| 4 | [1, 2, 4] |

| 8 | [1, 2, 3, 4, 8] |

| 10 | [1, 2, 3, 4, 5, 10] |

| 16 | [1, 2, 3, 4, 5, 6, 8, 16] |

| 32 | [1, 2, 3, 4, 5, 6, 7, 8, 9, 11, 16, 32] |

These predefined counts help ensure that instances are distributed as evenly as possible across partitions, minimizing idle or overloaded workers.
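To make the rounding concrete, here is a worked example with hypothetical numbers: an event hub with 32 partitions, 2,500 unprocessed events, and targetUnprocessedEventThreshold set to 153.

$$
\left\lceil \frac{2500}{153} \right\rceil = \lceil 16.34 \rceil = 17 \;\longrightarrow\; 32
$$

Because 17 isn't in the valid list for 32 partitions, the next larger valid count, 32, is selected. With 2,000 unprocessed events instead, the quotient rounds up to 14, and the next valid count in the same list is 16.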

Note

For Premium and Dedicated Event Hubs tiers, the partition count can exceed 32, allowing for larger valid instance count sets. These tiers support higher throughput and scalability, and the valid worker count list is extended accordingly to evenly distribute event hub partitions across instances. Also, because Event Hubs is a partitioned workload, the number of partitions in your event hub is the limit for the maximum target instance count.

Event Hubs settings

The specific setting depends on the version of the Event Hubs extension.

Modify the host.json setting maxEventBatchSize to set target executions per instance, as in the following example:

```json
{
  "version": "2.0",
  "extensions": {
    "eventHubs": {
      "maxEventBatchSize": 100
    }
  }
}
```
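For the earlier Event Hubs extension (v3.x+), the summary table earlier in this article lists the setting path as `extensions.eventHubs.eventProcessorOptions.maxBatchSize`, with a default of 10. A host.json sketch that follows that path might look like this:

```json
{
  "version": "2.0",
  "extensions": {
    "eventHubs": {
      "eventProcessorOptions": {
        "maxBatchSize": 10
      }
    }
  }
}
```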

When defined in host.json, targetUnprocessedEventThreshold is used as target executions per instance instead of maxEventBatchSize, as in the following example:

```json
{
  "version": "2.0",
  "extensions": {
    "eventHubs": {
      "targetUnprocessedEventThreshold": 153
    }
  }
}
```

Storage Queues

For v2.x+ of the Storage extension, modify the host.json setting batchSize to set target executions per instance:

```json
{
  "version": "2.0",
  "extensions": {
    "queues": {
      "batchSize": 16
    }
  }
}
```

Note

Scale efficiency: For the storage queue extension, messages with visibilityTimeout are still counted in event source length by the Storage Queue APIs. This can cause overscaling of your function app. Consider using Service Bus queues for scheduled messages, limiting scale out, or not using visibilityTimeout for your solution.
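A hypothetical illustration of the overscaling effect: suppose 160 messages are ready to process and another 640 are hidden by visibilityTimeout, with batchSize at its default of 16. Assuming the quotient is rounded up to whole instances:

$$
\underbrace{\left\lceil \frac{160 + 640}{16} \right\rceil = 50}_{\text{counted by the Storage Queue APIs}} \quad \text{versus} \quad \underbrace{\left\lceil \frac{160}{16} \right\rceil = 10}_{\text{messages actually ready}}
$$

In this sketch, the app would target five times as many instances as the ready messages justify.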

Azure Cosmos DB

Azure Cosmos DB uses a function-level attribute, MaxItemsPerInvocation. The way you set this function-level attribute depends on your function language.

For a compiled C# function, set MaxItemsPerInvocation in your trigger definition, as shown in the following example for an in-process C# function:

```csharp
using System.Collections.Generic;
using Microsoft.Azure.Documents;
using Microsoft.Azure.WebJobs;
using Microsoft.Extensions.Logging;

namespace CosmosDBSamplesV2
{
    public static class CosmosTrigger
    {
        [FunctionName("CosmosTrigger")]
        public static void Run([CosmosDBTrigger(
            databaseName: "ToDoItems",
            collectionName: "Items",
            // Sets target executions per instance for target-based scaling.
            MaxItemsPerInvocation = 100,
            ConnectionStringSetting = "CosmosDBConnection",
            LeaseCollectionName = "leases",
            CreateLeaseCollectionIfNotExists = true)]IReadOnlyList<Document> documents,
            ILogger log)
        {
            if (documents != null && documents.Count > 0)
            {
                log.LogInformation($"Documents modified: {documents.Count}");
                log.LogInformation($"First document Id: {documents[0].Id}");
            }
        }
    }
}
```

Note

Since Azure Cosmos DB is a partitioned workload, the number of physical partitions in your container is the limit for the target instance count. To learn more about Azure Cosmos DB scaling, see physical partitions and lease ownership.

Apache Kafka

The Apache Kafka extension uses a function-level attribute, LagThreshold. For Kafka, the number of desired instances is calculated based on the total consumer lag divided by the LagThreshold setting. For a given lag, reducing the lag threshold increases the number of desired instances.
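For example, with a hypothetical total consumer lag of 10,000 messages, and assuming the quotient is rounded up to whole instances, the two thresholds compare as follows:

$$
\text{LagThreshold} = 1000:\ \left\lceil \frac{10000}{1000} \right\rceil = 10 \qquad\qquad \text{LagThreshold} = 100:\ \left\lceil \frac{10000}{100} \right\rceil = 100
$$

The computed value is a target only; scale limits still apply.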

The way you set this function-level attribute depends on your function language. This example sets the threshold to 100.

For a compiled C# function, set LagThreshold in your trigger definition, as shown in the following example for an in-process C# function for a Kafka Event Hubs trigger:

```csharp
[FunctionName("KafkaTrigger")]
public static void Run(
    [KafkaTrigger("BrokerList",
                  "topic",
                  Username = "$ConnectionString",
                  Password = "%EventHubConnectionString%",
                  Protocol = BrokerProtocol.SaslSsl,
                  AuthenticationMode = BrokerAuthenticationMode.Plain,
                  ConsumerGroup = "$Default",
                  LagThreshold = 100)] KafkaEventData<string> kevent, ILogger log)
{
    log.LogInformation($"C# Kafka trigger function processed a message: {kevent.Value}");
}
```

Next steps

To learn more, see the following articles: