Inquiries

- How can I prevent the initial movement of an object right after grabbing it with MRTK3?

- How can I set a smaller threshold for detecting grab actions?

- How are pinching points calculated?

Problem statement

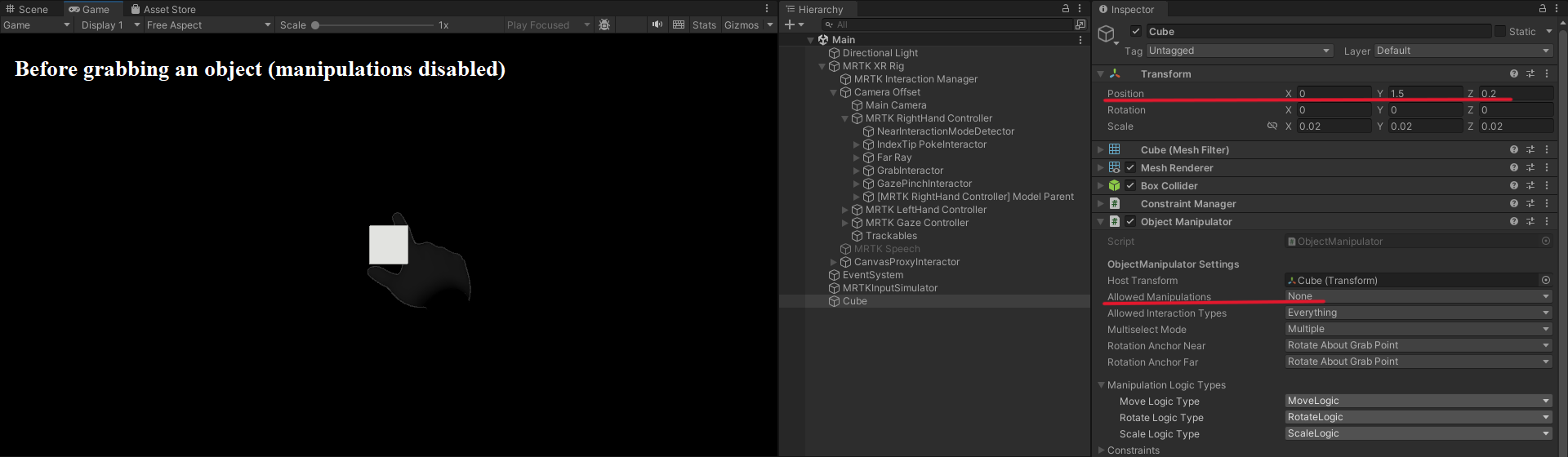

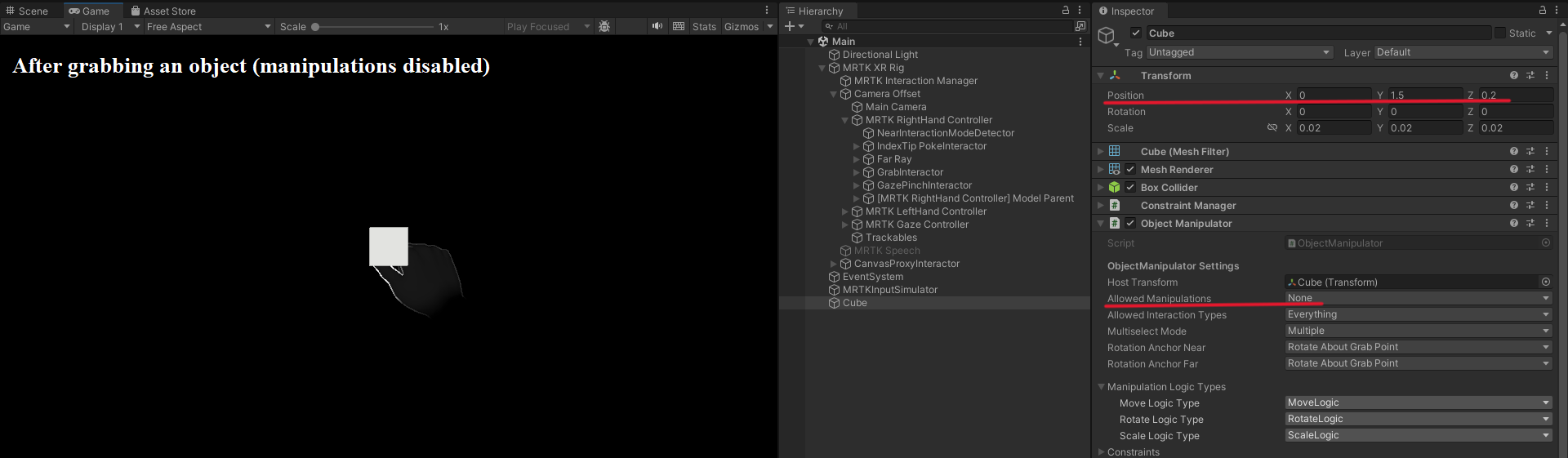

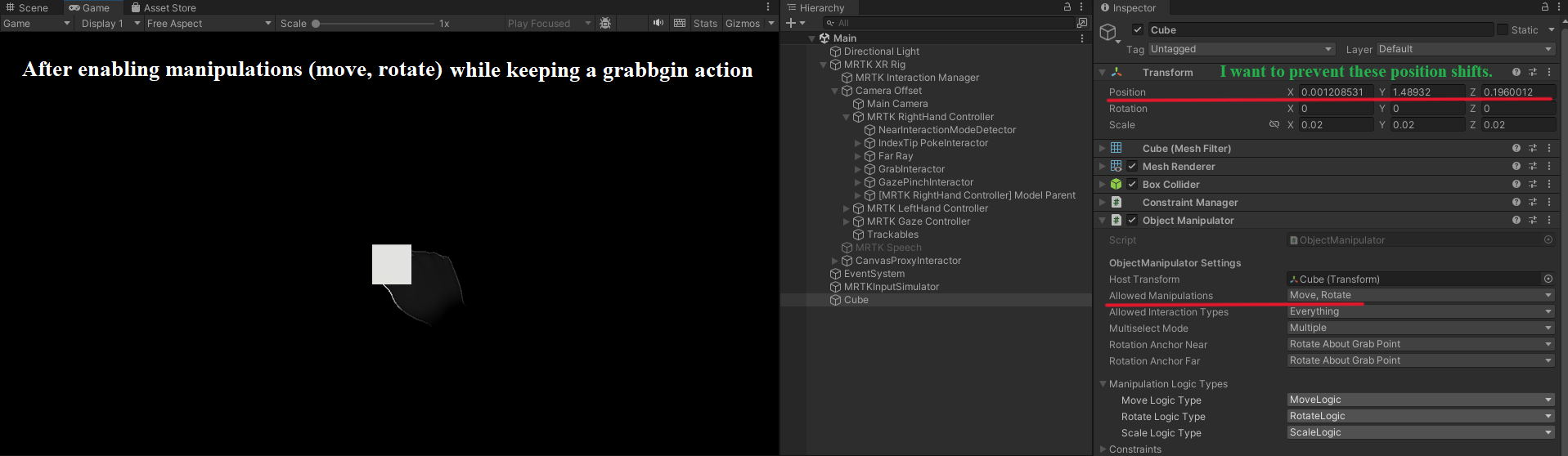

(1) An object's position shifts right after it is grabbed, or even right after manipulation (move, rotate) is switched on from the disabled state (none). The latter case is shown in the screenshots above.

(2) With the headset on, a "little bit" gesture (the index finger and thumb held apart with a small gap between them) already triggers a grab action.

(3) This is related to item (2). The pinching point may be ambiguous.

Background

(1) With the current settings, an object seems to shift its position right after being grabbed. This is unlike what happens when grabbing an object in the real world. I would like to recreate a situation in which simply grabbing an object leaves it static, and movement (move, rotate) begins only once the fingers move. I have looked through the Inspector settings of the Object Manipulator script and its source code but have not found a solution yet.
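To make the behavior I am after in item (1) concrete, here is a minimal, engine-independent sketch in Python. It is not MRTK3 code, and the names `begin_grab` and `update_object` are hypothetical. The idea is to record the offset between the object and the grab point at the moment of the grab, so that the object stays exactly where it was until the hand moves.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class GrabSession:
    # Object position minus grab point, captured once when the grab starts.
    offset: Vec3


def begin_grab(object_pos: Vec3, grab_point: Vec3) -> GrabSession:
    """Start a grab without moving the object: only remember the offset."""
    offset = tuple(o - g for o, g in zip(object_pos, grab_point))
    return GrabSession(offset=offset)


def update_object(session: GrabSession, grab_point: Vec3) -> Vec3:
    """Return the new object position for the current grab point."""
    return tuple(g + d for g, d in zip(grab_point, session.offset))


if __name__ == "__main__":
    session = begin_grab((1.0, 2.0, 3.0), (1.5, 2.0, 2.5))
    # Same grab point as at grab time: the object has not moved.
    print(update_object(session, (1.5, 2.0, 2.5)))
    # Hand moved 1 m along x: the object follows by the same amount.
    print(update_object(session, (2.5, 2.0, 2.5)))
```

With this scheme the object only moves by as much as the grab point moves after the grab starts, which is the behavior I would like to get from the Object Manipulator.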

(2) While I am wearing a HoloLens 2 headset, a grab action is triggered even before the index finger touches the thumb. I would like the grab action to be triggered when these two fingers actually touch, or at least to use a smaller threshold so that the grab is detected only when the gap between the two fingers is narrower. I assumed that a collider radius might be involved, thinking that a collision between an index fingertip collider and a thumb collider may trigger the action. However, adjusting the sphere collider of the NearInteractionModeDetector has not led to a solution.

(3) The present program gets the pinching position by calling TryGetPinchingPoint (https://learn.microsoft.com/en-us/dotnet/api/mixedreality.toolkit.subsystems.handsaggregatorsubsystem.trygetpinchingpoint?view=mrtkcore-3.1). However, I am not sure how this point is calculated, even conceptually. This is related to item (2). I am assuming that the pinching point is the midpoint between the index fingertip and the thumb tip (the average of the two 3D position vectors). Knowing this would help me write a program that lets users place their index finger and thumb precisely on a target grab point, pinch it, and then rotate around that point rather than around some other point, such as one on the perimeter of the target grab point.
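To illustrate my assumptions in items (2) and (3), here is a small engine-independent sketch in Python. It is only my mental model, not how MRTK3 actually implements TryGetPinchingPoint or its pinch detection. The function names and the 1 cm default threshold are hypothetical values chosen for illustration.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def pinch_midpoint(index_tip: Vec3, thumb_tip: Vec3) -> Vec3:
    """Assumed pinching point: the average of the two fingertip positions."""
    return tuple((a + b) / 2 for a, b in zip(index_tip, thumb_tip))


def is_pinching(index_tip: Vec3, thumb_tip: Vec3,
                threshold_m: float = 0.01) -> bool:
    """Assumed grab test: the fingertips are closer than a distance threshold.

    A smaller threshold_m means the fingers must be nearly touching
    before a grab is detected.
    """
    return math.dist(index_tip, thumb_tip) <= threshold_m


if __name__ == "__main__":
    index_tip = (0.0, 0.0, 0.0)
    thumb_tip = (0.02, 0.0, 0.0)  # fingertips 2 cm apart
    print(pinch_midpoint(index_tip, thumb_tip))
    print(is_pinching(index_tip, thumb_tip))                    # 1 cm threshold
    print(is_pinching(index_tip, thumb_tip, threshold_m=0.03))  # 3 cm threshold
```

If the real detection works roughly like this, what I am asking for in item (2) is a way to lower the equivalent of `threshold_m`, and in item (3) a confirmation of whether the pinching point is indeed this midpoint.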

Environments

HoloLens 2 (hand tracking)

Windows 10, Visual Studio 2022 with workloads (https://learn.microsoft.com/en-us/windows/mixed-reality/develop/install-the-tools)

Unity 2022.3.19f1 with MRTK3 (https://learn.microsoft.com/en-us/training/modules/learn-mrtk-tutorials/)