Create a copy of an existing table on Azure Databricks at a specific version using the clone command. Clones can be either deep or shallow.

Azure Databricks also supports cloning Parquet and Apache Iceberg tables. See Incrementally clone Parquet and Apache Iceberg tables to Delta Lake.

For details on using clone with Unity Catalog, see Shallow clone for Unity Catalog tables.

Note

Databricks recommends using Delta Sharing to provide read-only access to tables across different organizations. See What is Delta Sharing?.

Clone types

- A deep clone copies the source table data to the clone target in addition to the metadata of the existing table. Stream metadata is also cloned, so a stream that writes to the Delta table can be stopped on the source table and continued on the clone target from where it left off.

- A shallow clone does not copy the data files to the clone target. The table metadata is equivalent to the source. These clones are cheaper to create.

Cloned metadata includes the schema, partitioning information, invariants, nullability, and TBLPROPERTIES. For deep clones only, stream and COPY INTO metadata are also cloned. The table description and user-defined commit metadata are not cloned.

Important

Delta Lake table history is not copied when cloning a table. Unity Catalog properties, such as tags, are not copied either. See Delta Lake table history and tags.

What are the semantics of Delta clone operations?

If you are working with a Delta table registered to the Hive metastore or a collection of files not registered as a table, clone has the following semantics:

Important

In Databricks Runtime 13.3 LTS and above, Unity Catalog managed tables have support for shallow clones. Clone semantics for Unity Catalog tables differ from clone semantics in other environments. See Shallow clone for Unity Catalog tables.

- Any changes made to either deep or shallow clones affect only the clones themselves and not the source table.

- Shallow clones reference data files in the source directory. If you run vacuum on the source table, clients can no longer read the referenced data files and a FileNotFoundException is thrown. In this case, running clone with replace over the shallow clone repairs the clone. If this occurs often, consider using a deep clone instead, which does not depend on the source table.

- Deep clones do not depend on the source from which they were cloned, but are expensive to create because a deep clone copies the data as well as the metadata.

- Cloning with replace to a target that already has a table at that path creates a Delta log if one does not exist at that path. You can clean up any existing data by running vacuum.

- For existing Delta tables, a new commit is created that includes the new metadata and new data from the source table. This new commit is incremental, meaning that only new changes since the last clone are committed to the table.

- Cloning a table is not the same as Create Table As Select or CTAS. A clone copies the metadata of the source table in addition to the data. Cloning also has simpler syntax: you don't need to specify partitioning, format, invariants, nullability, and so on, as they are taken from the source table.

- A cloned table has an independent history from its source table. Time travel queries on a cloned table do not work with the same inputs as they work on its source table.
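The repair step described above (running clone with replace over a broken shallow clone) can be sketched as a small statement builder. This is a minimal illustration, not part of the Databricks API: `build_clone_sql` is a hypothetical helper, the table names are placeholders, and the resulting string is assumed to be passed to `spark.sql(...)` in a session where both tables exist.

```python
from typing import Optional


def build_clone_sql(
    target: str,
    source: str,
    shallow: bool = False,
    replace: bool = False,
    version: Optional[int] = None,
) -> str:
    """Build a CREATE TABLE ... CLONE statement (hypothetical helper).

    Identifiers are interpolated as-is, so pass only trusted table names.
    """
    create = "CREATE OR REPLACE TABLE" if replace else "CREATE TABLE"
    kind = "SHALLOW CLONE" if shallow else "DEEP CLONE"
    sql = f"{create} {target} {kind} {source}"
    if version is not None:
        # Pin the clone to a specific version of the source table.
        sql += f" VERSION AS OF {int(version)}"
    return sql


# Re-create a shallow clone whose referenced files were vacuumed from the source.
repair_sql = build_clone_sql("my_test", "my_prod_table", shallow=True, replace=True)

# Alternative if this happens often: a deep clone that does not depend on the source.
deep_sql = build_clone_sql("my_test", "my_prod_table", replace=True)
```

In a notebook, the statement would then be executed with something like `spark.sql(repair_sql)`.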

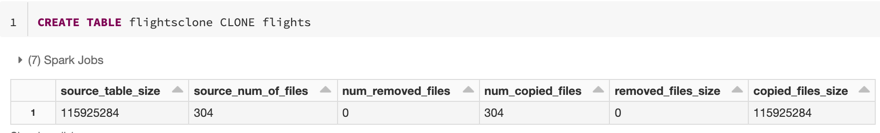

Clone metrics

CLONE reports the following metrics as a single row DataFrame once the operation is complete:

- source_table_size: Size in bytes of the source table that is being cloned.

- source_num_of_files: The number of files in the source table.

- num_removed_files: If the table is being replaced, how many files are removed from the current table.

- num_copied_files: Number of files that were copied from the source (0 for shallow clones).

- removed_files_size: Size in bytes of the files that are being removed from the current table.

- copied_files_size: Size in bytes of the files copied to the table.
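As an illustration of how these metrics might be consumed, the following sketch derives a few summary figures from one metrics row. It assumes the row has already been converted to a plain dictionary (for example with `Row.asDict()` on the single row returned by the clone statement). `summarize_clone_metrics` is a hypothetical helper and the numbers are made up for the example.

```python
from typing import Mapping


def summarize_clone_metrics(metrics: Mapping[str, int]) -> dict:
    """Derive summary figures from one row of CLONE metrics (hypothetical helper)."""
    source_files = metrics["source_num_of_files"]
    copied_files = metrics["num_copied_files"]
    return {
        # True when no data files were copied, as is always the case for shallow clones.
        "copied_no_files": copied_files == 0,
        # Bytes copied into the target minus bytes removed from it.
        "net_bytes_change": metrics["copied_files_size"] - metrics["removed_files_size"],
        # Share of the source table's files that were copied in this run.
        "fraction_of_source_files_copied": (
            copied_files / source_files if source_files else 0.0
        ),
    }


# Made-up values, for illustration only.
example_metrics = {
    "source_table_size": 1000,
    "source_num_of_files": 4,
    "num_removed_files": 1,
    "num_copied_files": 2,
    "removed_files_size": 100,
    "copied_files_size": 600,
}
summary = summarize_clone_metrics(example_metrics)
```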

Permissions

You must configure permissions for Azure Databricks table access control and your cloud provider.

Table access control

The following permissions are required for both deep and shallow clones:

- SELECT permission on the source table.

- If you are using CLONE to create a new table, CREATE permission on the database in which you are creating the table.

- If you are using CLONE to replace a table, you must have MODIFY permission on the table.

Cloud provider permissions

If you have created a deep clone, any user that reads the deep clone must have read access to the clone's directory. To make changes to the clone, users must have write access to the clone's directory.

If you have created a shallow clone, any user that reads the shallow clone needs permission to read both the clone's directory and the files in the original table, because the data files remain in the source table with shallow clones. To make changes to the clone, users need write access to the clone's directory.
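The read-access rule for the two clone types can be summarized in a few lines. This is only a sketch of the rule stated above: `required_read_dirs` is a hypothetical helper, and the paths are placeholders, not real storage locations.

```python
from typing import List


def required_read_dirs(clone_dir: str, source_dir: str, shallow: bool) -> List[str]:
    """List the directories a reader of the clone must be able to read."""
    dirs = [clone_dir]
    if shallow:
        # A shallow clone's data files remain in the source table's directory.
        dirs.append(source_dir)
    return dirs


# Placeholder paths, for illustration only.
deep_dirs = required_read_dirs("/tables/clone", "/tables/source", shallow=False)
shallow_dirs = required_read_dirs("/tables/clone", "/tables/source", shallow=True)
```

In both cases, write access is needed only on the clone's directory.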

Examples

Create deep or shallow clones

The following code examples demonstrate syntax for creating deep and shallow clones:

SQL

CREATE TABLE target_table CLONE source_table; -- Create a deep clone of source_table as target_table

CREATE OR REPLACE TABLE target_table CLONE source_table; -- Replace the target

CREATE TABLE IF NOT EXISTS target_table CLONE source_table; -- No-op if the target table exists

CREATE TABLE target_table SHALLOW CLONE source_table;

CREATE TABLE target_table SHALLOW CLONE source_table VERSION AS OF version;

CREATE TABLE target_table SHALLOW CLONE source_table TIMESTAMP AS OF timestamp_expression; -- timestamp can be like "2019-01-01" or like date_sub(current_date(), 1)

Python

The Python DeltaTable API is Delta Lake-specific.

from delta.tables import *

deltaTable = DeltaTable.forName(spark, "source_table")

deltaTable.clone(target="target_table", isShallow=True, replace=False) # clone the source at latest version

deltaTable.cloneAtVersion(version=1, target="target_table", isShallow=True, replace=False) # clone the source at a specific version

# clone the source at a specific timestamp such as timestamp="2019-01-01"

deltaTable.cloneAtTimestamp(timestamp="2019-01-01", target="target_table", isShallow=True, replace=False)

Scala

The Scala DeltaTable API is Delta Lake-specific.

import io.delta.tables._

val deltaTable = DeltaTable.forName(spark, "source_table")

deltaTable.clone(target="target_table", isShallow=true, replace=false) // clone the source at latest version

deltaTable.cloneAtVersion(version=1, target="target_table", isShallow=true, replace=false) // clone the source at a specific version

deltaTable.cloneAtTimestamp(timestamp="2019-01-01", target="target_table", isShallow=true, replace=false) // clone the source at a specific timestamp

For syntax details, see CREATE TABLE CLONE.

Check metadata copied during CLONE

-- Test: Confirm which metadata is copied over during CLONE operations

-- Checks: TBLPROPERTIES, UC tags, and Delta history

-- Setup: create source table with a custom property my.custom.prop set to 'hello' and set delta.logRetentionDuration to '12 days' instead of default '30 days'. Insert data to generate table history

CREATE OR REPLACE TABLE test_clone_source (id INT, val STRING)

TBLPROPERTIES ('my.custom.prop' = 'hello', 'delta.logRetentionDuration' = '12 days');

ALTER TABLE test_clone_source SET TAGS ('team' = 'data-eng', 'env' = 'prod');

INSERT INTO test_clone_source VALUES (1, 'a');

INSERT INTO test_clone_source VALUES (2, 'b');

-- Deep clone

CREATE OR REPLACE TABLE test_clone_deep

DEEP CLONE test_clone_source;

-- Shallow clone

CREATE OR REPLACE TABLE test_clone_shallow

SHALLOW CLONE test_clone_source;

-- Show that TBLPROPERTIES are copied to clones

SHOW TBLPROPERTIES test_clone_source;

SHOW TBLPROPERTIES test_clone_deep;

SHOW TBLPROPERTIES test_clone_shallow;

-- Show that UC tags are not copied to clones

SELECT catalog_name, schema_name, table_name, tag_name, tag_value FROM information_schema.table_tags WHERE table_name = 'test_clone_source';

SELECT catalog_name, schema_name, table_name, tag_name, tag_value FROM information_schema.table_tags WHERE table_name = 'test_clone_deep';

SELECT catalog_name, schema_name, table_name, tag_name, tag_value FROM information_schema.table_tags WHERE table_name = 'test_clone_shallow';

-- Show that Delta history is not copied to clones

DESCRIBE HISTORY test_clone_source;

DESCRIBE HISTORY test_clone_deep;

DESCRIBE HISTORY test_clone_shallow;

-- Cleanup

DROP TABLE IF EXISTS test_clone_shallow;

DROP TABLE IF EXISTS test_clone_source;

DROP TABLE IF EXISTS test_clone_deep;
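The property comparison in the script above can also be done programmatically. The sketch below assumes the output of each SHOW TBLPROPERTIES statement has been collected into a dictionary keyed by property name (for example from its `key` and `value` columns). `diff_properties` is a hypothetical helper, and the sample dictionaries only mirror the values set in the setup step.

```python
from typing import Dict, Iterable, Mapping, Optional, Tuple


def diff_properties(
    source_props: Mapping[str, str],
    clone_props: Mapping[str, str],
    keys: Iterable[str],
) -> Dict[str, Tuple[Optional[str], Optional[str]]]:
    """Return {key: (source_value, clone_value)} for keys whose values differ."""
    return {
        key: (source_props.get(key), clone_props.get(key))
        for key in keys
        if source_props.get(key) != clone_props.get(key)
    }


# Sample dictionaries mirroring the properties set on test_clone_source.
source_props = {"my.custom.prop": "hello", "delta.logRetentionDuration": "12 days"}
clone_props = {"my.custom.prop": "hello", "delta.logRetentionDuration": "12 days"}

checked_keys = ["my.custom.prop", "delta.logRetentionDuration"]
differences = diff_properties(source_props, clone_props, checked_keys)
```

An empty result means every checked property carried over to the clone unchanged.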

Data archiving

You can use deep clone to preserve the state of a table at a certain point in time for archival purposes. You can sync deep clones incrementally to maintain an updated state of a source table for disaster recovery.

-- Every month run

CREATE OR REPLACE TABLE archive_table CLONE my_prod_table

ML model reproduction

When doing machine learning, you may want to archive a certain version of a table on which you trained an ML model. Future models can be tested using this archived data set.

-- Trained model on version 15 of Delta table

CREATE TABLE model_dataset CLONE entire_dataset VERSION AS OF 15

Short-term experiments on a production table

To test a workflow on a production table without corrupting the table, you can easily create a shallow clone. This allows you to run arbitrary workflows on the cloned table that contains all the production data but does not affect any production workloads.

-- Perform shallow clone

CREATE OR REPLACE TABLE my_test SHALLOW CLONE my_prod_table;

UPDATE my_test SET invalid = true WHERE user_id IS NULL;

-- Run a bunch of validations. Once happy:

-- This should leverage the update information in the clone to prune to only

-- changed files in the clone if possible

MERGE INTO my_prod_table

USING my_test

ON my_test.user_id <=> my_prod_table.user_id

WHEN MATCHED AND my_test.user_id IS NULL THEN UPDATE SET *;

DROP TABLE my_test;

Override table properties

Table property overrides are particularly useful for:

- Annotating tables with owner or user information when sharing data with different business units.

- Archiving Delta tables when table history or time travel is required. You can specify the data and log retention periods independently for the archive table. For example:

SQL

-- For Delta tables

CREATE OR REPLACE TABLE archive_table CLONE prod.my_table

TBLPROPERTIES (

delta.logRetentionDuration = '3650 days',

delta.deletedFileRetentionDuration = '3650 days'

)

-- For Iceberg tables

CREATE OR REPLACE TABLE archive_table CLONE prod.my_table

TBLPROPERTIES (

iceberg.logRetentionDuration = '3650 days',

iceberg.deletedFileRetentionDuration = '3650 days'

)

Python

The Python DeltaTable API is Delta Lake-specific.

from delta.tables import DeltaTable

dt = DeltaTable.forName(spark, "prod.my_table")

tblProps = {

"delta.logRetentionDuration": "3650 days",

"delta.deletedFileRetentionDuration": "3650 days"

}

dt.clone(target="archive_table", isShallow=False, replace=True, properties=tblProps)

Scala

The Scala DeltaTable API is Delta Lake-specific.

import io.delta.tables._

val dt = DeltaTable.forName(spark, "prod.my_table")

val tblProps = Map(

"delta.logRetentionDuration" -> "3650 days",

"delta.deletedFileRetentionDuration" -> "3650 days"

)

dt.clone(target="archive_table", isShallow = false, replace = true, properties = tblProps)