You can administer the use and settings of datamarts just as you administer other aspects of Power BI. This article explains how to administer your datamarts and where to find the relevant settings.

Important

The Power BI datamarts feature is being retired in October 2025. To avoid losing your data and breaking reports built on top of datamarts, you should upgrade your Power BI Datamart to a Warehouse. For more information, see Unify Datamart with Fabric Data Warehouse.

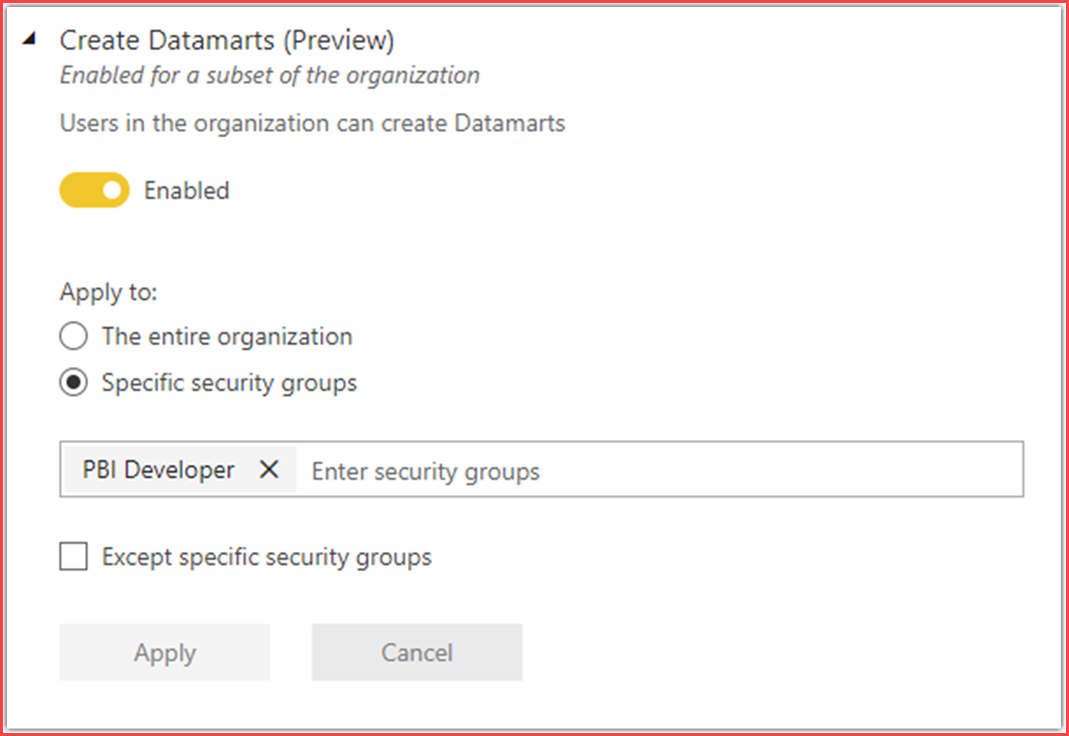

Enabling datamarts in the admin portal

Power BI administrators can enable or disable datamart creation for the entire organization or for specific security groups, using the setting found in the Power BI admin portal, as shown in the following image.

Keeping track of datamarts

In the Power BI admin portal, you can review a list of datamarts along with all other Power BI items in any workspace, as shown in the following image.

Existing Power BI admin APIs for getting workspace information work for datamarts as well, such as GetGroupsAsAdmin and the workspace scanner API. Such APIs enable you, as the Power BI service administrator, to retrieve datamarts metadata along with other Power BI item information, so you can monitor workspace usage and generate relevant reports.
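As a minimal sketch of the kind of report this enables, the following Python summarizes the number of datamarts per workspace from a workspace scanner result that has already been downloaded and parsed as JSON. The `workspaces`, `datamarts`, `name`, and `id` field names, and the sample payload itself, are assumptions made for illustration; check them against the response returned in your tenant.

```python
from typing import Any


def count_datamarts_by_workspace(scan_result: dict[str, Any]) -> dict[str, int]:
    """Return {workspace label: number of datamarts} for a parsed scan result.

    Assumes each workspace entry may carry a "datamarts" list; workspaces
    without that key are counted as having zero datamarts.
    """
    counts: dict[str, int] = {}
    for workspace in scan_result.get("workspaces", []):
        # Fall back to the workspace id when no display name is present.
        label = workspace.get("name") or workspace.get("id", "<unknown>")
        counts[label] = len(workspace.get("datamarts", []))
    return counts


# Hypothetical, hand-written payload used only to exercise the function.
sample_scan_result = {
    "workspaces": [
        {
            "id": "ws-1",
            "name": "Sales",
            "datamarts": [{"name": "SalesMart"}, {"name": "Returns"}],
        },
        {"id": "ws-2", "name": "Finance", "reports": []},
    ]
}

datamart_counts = count_datamarts_by_workspace(sample_scan_result)
print(datamart_counts)
```

The function only reads a parsed dictionary, so it can be reused regardless of how the scan result was retrieved.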

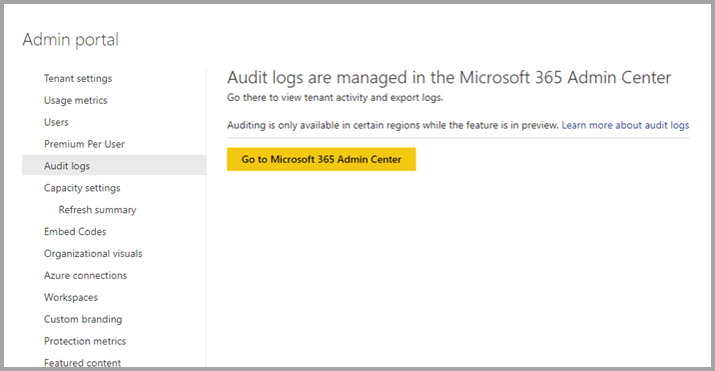

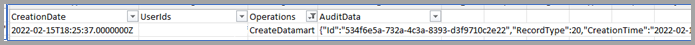

Viewing audit logs and activity events

Power BI administrators can audit datamart operations from the Microsoft 365 Admin Center. The following audit operations are supported on datamarts:

- Create

- Rename

- Update

- Delete

- Refresh

- View

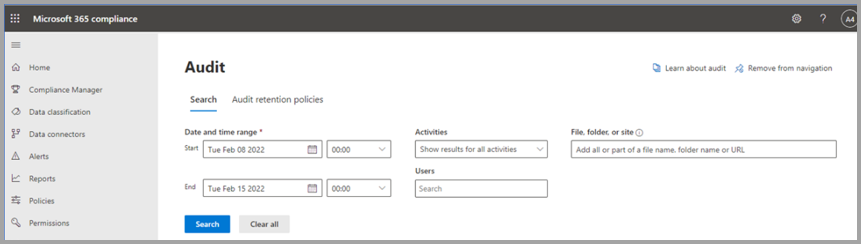

To get audit logs, complete the following steps:

1. Sign in to the Power BI admin portal as the administrator and navigate to Audit logs.

2. In the Audit logs section, select the button to go to the Microsoft 365 Admin Center.

3. Get audit events by applying search criteria.

4. Export audit logs and apply a filter for datamart operations.
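The final filtering step can also be done offline once the log is exported. The following is a minimal sketch that keeps only the rows of an exported CSV whose operation name mentions datamarts. The `Operations` column name and the operation names in the sample data are assumptions for illustration; adjust them to match the export you receive.

```python
import csv
import io


def filter_datamart_rows(
    csv_text: str, operation_column: str = "Operations"
) -> list[dict[str, str]]:
    """Return the rows whose operation name contains "datamart" (case-insensitive)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        row
        for row in reader
        if "datamart" in (row.get(operation_column) or "").lower()
    ]


# Hypothetical export content; real exports contain more columns and rows.
sample_export = (
    "CreationDate,UserIds,Operations\n"
    "2025-01-15T08:00:00Z,admin@contoso.com,CreateDatamart\n"
    "2025-01-15T08:05:00Z,user@contoso.com,ViewReport\n"
)

datamart_rows = filter_datamart_rows(sample_export)
for row in datamart_rows:
    print(row["CreationDate"], row["UserIds"], row["Operations"])
```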

Using REST APIs for activity events

Administrators can export activity events on datamarts by using existing supported REST APIs. The following articles provide information about the APIs:

- Admin - Get Activity Events - REST API (Power BI REST APIs)

- Track user activities in Power BI
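As a rough sketch of how the activity events API might be used for datamarts, the following Python builds the request URL for one time window and filters a parsed response for datamart activities. It assumes the request is sent separately with a valid administrator access token, that the response lists events under `activityEventEntities`, and that datamart events can be recognized by the word "datamart" in the `Activity` field; the sample response is hypothetical.

```python
from datetime import datetime

ACTIVITY_EVENTS_URL = "https://api.powerbi.com/v1.0/myorg/admin/activityevents"


def build_activity_events_url(start: datetime, end: datetime) -> str:
    """Build the request URL for one time window (timestamps treated as UTC).

    Both timestamps must fall on the same UTC day and are wrapped in single
    quotes in the query string.
    """
    if start.date() != end.date():
        raise ValueError("start and end must be on the same UTC day")
    fmt = "%Y-%m-%dT%H:%M:%S.000Z"
    return (
        f"{ACTIVITY_EVENTS_URL}"
        f"?startDateTime='{start.strftime(fmt)}'"
        f"&endDateTime='{end.strftime(fmt)}'"
    )


def datamart_events(response_body: dict) -> list[dict]:
    """Keep events whose Activity name mentions datamarts (assumed naming)."""
    events = response_body.get("activityEventEntities", [])
    return [e for e in events if "datamart" in str(e.get("Activity", "")).lower()]


url = build_activity_events_url(
    datetime(2025, 1, 15, 0, 0, 0), datetime(2025, 1, 15, 23, 59, 59)
)
print(url)

# Hypothetical response body used only to exercise the filter.
sample_response = {
    "activityEventEntities": [
        {"Activity": "CreateDatamart", "UserId": "admin@contoso.com"},
        {"Activity": "ViewReport", "UserId": "user@contoso.com"},
    ]
}
matching_events = datamart_events(sample_response)
print(matching_events)
```

Because a single response can be partial, a complete export would also need to follow the continuation link returned by the service until no further results remain.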

Capacity utilization and reporting

Datamart CPU usage is free during preview, including datamarts and queries on SQL endpoints of a datamart. Autogenerated semantic model usage is reported for throttling and autoscaling. To avoid incurring costs during the preview period, consider using a Premium Per User (PPU) trial workspace.

Considerations and limitations

The following limitations should be considered when using datamarts:

- Datamarts aren't currently supported in the following Power BI SKUs: EM1, EM2, and EM3.

- Datamarts aren't available in workspaces that are bound to an Azure Data Lake Gen2 storage account.

- Datamarts aren't available in sovereign or government clouds.

- Datamart extract, transform, and load (ETL) operations can currently run for at most 24 hours.

- Datamarts officially support data volumes of up to 100 GB.

- Currently datamarts don’t support the currency data type, and such data types are converted to float.

- Data sources behind a VNET or using private links can't currently be used with datamarts; to work around this limitation you can use an on-premises data gateway.

- Datamarts use port 1948 for connectivity to the SQL endpoint. Port 1433 needs to be open for datamarts to work.

- Datamarts only support Microsoft Entra ID and do not support managed identities or service principals at this time.

- Beginning February 2023, datamarts support any SQL client.

- Datamarts aren't currently available in the following Azure regions:

  - West India

  - UAE Central

  - Poland

  - Israel

  - Italy

Datamarts are supported in all other Azure regions.

Datamart connectors in Premium workspaces

Some connectors aren't supported for datamarts (or dataflows) in Premium workspaces. When you use an unsupported connector, you might receive the following error: Expression.Error: The import "<connector name>" matches no exports. Did you miss a module reference?

The following connectors aren't supported for dataflows and datamarts in Premium workspaces:

- Linkar

- Actian

- AmazonAthena

- AmazonOpenSearchService

- BIConnector

- DataVirtuality

- DenodoForPowerBI

- Exasol

- Foundry

- Indexima

- IRIS

- JethroODBC

- Kyligence

- MariaDB

- MarkLogicODBC

- OpenSearchProject

- QubolePresto

- SingleStoreODBC

- StarburstPresto

- TibcoTdv

The connectors in the preceding list are supported with dataflows or datamarts only in workspaces that aren't Premium.
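To catch this error before a refresh fails, the connector list above can be checked programmatically. The following is a minimal sketch; the function name is hypothetical, and the set simply mirrors the list in this article, so it needs updating whenever the list changes.

```python
# Connectors listed in this article as unsupported in Premium workspaces.
UNSUPPORTED_IN_PREMIUM = frozenset(
    name.lower()
    for name in (
        "Linkar", "Actian", "AmazonAthena", "AmazonOpenSearchService",
        "BIConnector", "DataVirtuality", "DenodoForPowerBI", "Exasol",
        "Foundry", "Indexima", "IRIS", "JethroODBC", "Kyligence", "MariaDB",
        "MarkLogicODBC", "OpenSearchProject", "QubolePresto",
        "SingleStoreODBC", "StarburstPresto", "TibcoTdv",
    )
)


def is_listed_as_unsupported(connector_name: str) -> bool:
    """Return True if the connector appears in the unsupported list above.

    The comparison ignores case and surrounding whitespace. A False result
    only means the connector is not on this list, not that it is guaranteed
    to work.
    """
    return connector_name.strip().lower() in UNSUPPORTED_IN_PREMIUM


for candidate in ("MariaDB", "exasol", "SomeOtherConnector"):
    print(candidate, is_listed_as_unsupported(candidate))
```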

Related content

This article provided information about the administration of datamarts.

The following articles provide more information about datamarts and Power BI:

- Introduction to datamarts

- Understand datamarts

- Get started with datamarts

- Analyzing datamarts

- Create reports with datamarts

- Access control in datamarts

For more information about dataflows and transforming data, see the following articles: