Hi Miss,

I tested the Speech translation service deployed in eastus2 and it is working fine on my end. You can use the code below to test it on your side:

import threading
import time

import azure.cognitiveservices.speech as speechsdk

speech_key = "<YOUR-SPEECH-KEY>"
service_region = "<DEPLOYMENT-REGION>"

# Configure translation: recognize English speech, translate to Spanish.
translation_config = speechsdk.translation.SpeechTranslationConfig(
    subscription=speech_key,
    region=service_region
)
translation_config.speech_recognition_language = "en-US"
translation_config.add_target_language("es")  # Spanish

audio_config = speechsdk.audio.AudioConfig(use_default_microphone=True)

translator = speechsdk.translation.TranslationRecognizer(
    translation_config=translation_config,
    audio_config=audio_config
)

last_speech_time = time.time()
silence_timeout = 5  # seconds
stop_flag = False
output_log = []


def recognizing_handler(evt):
    """Partial result: refresh the silence timer and show interim text."""
    global last_speech_time
    if evt.result.text.strip():
        last_speech_time = time.time()
        print(f"Recognizing: {evt.result.text}", end="\r")


def recognized_handler(evt):
    """Final result: print the input and its translations, and log them."""
    global last_speech_time
    text = evt.result.text.strip()
    if text:
        last_speech_time = time.time()
        print(f"\nRecognized: {text}")
        entry = f"Input: {text}\n"
        for lang, translation in evt.result.translations.items():
            translated_text = f"Output ({lang}): {translation}"
            print(translated_text)
            entry += translated_text + "\n"
        output_log.append(entry)


def silence_watcher():
    """Stop recognition once no speech has been seen for silence_timeout seconds."""
    global stop_flag
    while not stop_flag:
        time.sleep(1)
        if time.time() - last_speech_time > silence_timeout:
            print(f"\nNo speech detected for {silence_timeout}s; stopping translation.")
            translator.stop_continuous_recognition()
            stop_flag = True
            break


translator.recognizing.connect(recognizing_handler)
translator.recognized.connect(recognized_handler)

print("Speak into your microphone...")
print(f"(It will keep listening and stop {silence_timeout} seconds after your last word.)")

translator.start_continuous_recognition()

# The watcher runs in a daemon thread so it cannot keep the process alive.
watcher_thread = threading.Thread(target=silence_watcher)
watcher_thread.daemon = True
watcher_thread.start()

# Keep the main thread alive until the watcher signals the end of the session.
while not stop_flag:
    time.sleep(0.1)

print("Translation session ended.")

# Save every recognized utterance and its translations, separated by a rule.
with open("translation_output.txt", "w", encoding="utf-8") as f:
    for entry in output_log:
        f.write(entry)
        f.write("\n" + "-" * 40 + "\n")

print("Output saved to translation_output.txt")

The code above listens continuously and stops once 5 seconds pass without any recognized speech.
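If you want to reuse the silence-timeout logic outside this script, here is a minimal sketch of the same idea without global variables. It uses only the Python standard library; the `SilenceWatchdog` class and its `touch` method are hypothetical names for illustration and are not part of the Speech SDK. In the script above, you would call `touch()` from both event handlers and pass `translator.stop_continuous_recognition` as `on_timeout`.

```python
import threading
import time


class SilenceWatchdog:
    """Calls on_timeout once when no activity is reported for `timeout` seconds.

    Hypothetical helper for illustration; not part of the Speech SDK.
    """

    def __init__(self, timeout, on_timeout, poll_interval=0.05):
        self.timeout = timeout
        self.on_timeout = on_timeout
        self.poll_interval = poll_interval
        self.stopped = threading.Event()   # set after on_timeout has run
        self._lock = threading.Lock()      # guards _last_activity
        self._last_activity = time.monotonic()
        self._thread = threading.Thread(target=self._run, daemon=True)

    def touch(self):
        """Record activity, e.g. from the recognizing/recognized handlers."""
        with self._lock:
            self._last_activity = time.monotonic()

    def start(self):
        self.touch()
        self._thread.start()

    def _run(self):
        # Event.wait doubles as the sleep and returns True once stopped is set.
        while not self.stopped.wait(self.poll_interval):
            with self._lock:
                idle = time.monotonic() - self._last_activity
            if idle > self.timeout:
                self.on_timeout()
                self.stopped.set()


if __name__ == "__main__":
    # The lambda stands in for translator.stop_continuous_recognition.
    watchdog = SilenceWatchdog(timeout=0.3, on_timeout=lambda: print("stopping"))
    watchdog.start()
    for _ in range(3):
        time.sleep(0.1)
        watchdog.touch()      # simulated speech keeps the session alive
    watchdog.stopped.wait()   # main thread blocks here instead of polling a flag
    print("session ended")
```

`time.monotonic()` is used instead of `time.time()` so that a system clock adjustment cannot trigger or delay the timeout, and `threading.Event` lets the main thread block on `stopped.wait()` rather than loop on a shared flag.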

Here is the supporting documentation:

https://learn.microsoft.com/en-us/azure/ai-services/speech-service/how-to-translate-speech?tabs=terminal&pivots=programming-language-python

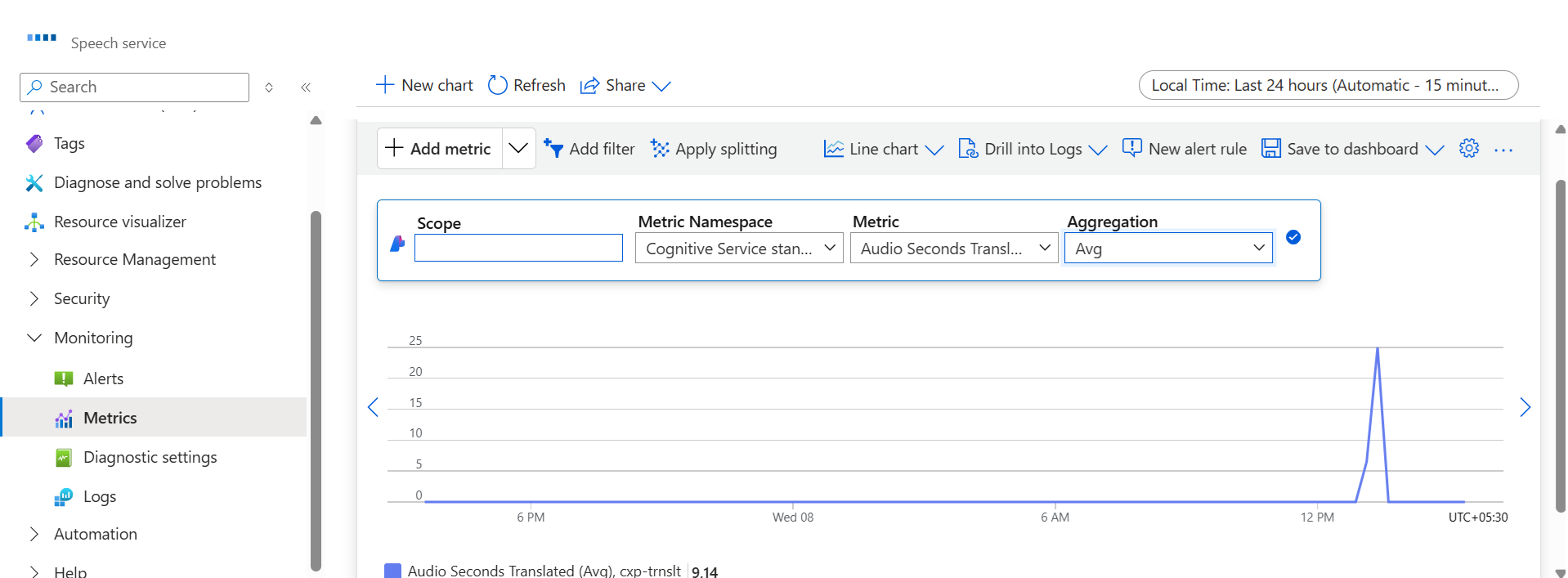

You can also check Audio Seconds translated on the Azure Portal, as shown below:

Feel free to accept this as the answer.

Thank you for reaching out to the Microsoft Q&A portal.