Hello,

We are developing a medical transcription and documentation solution for doctors (already in production and working well), and we are now expanding it to licensed psychologists and psychiatrists using Azure OpenAI models. In routine clinical work, dictated notes naturally include terminology related to self-harm assessment. These are clinical observations, not expressions of intent.

Problem

Even after:

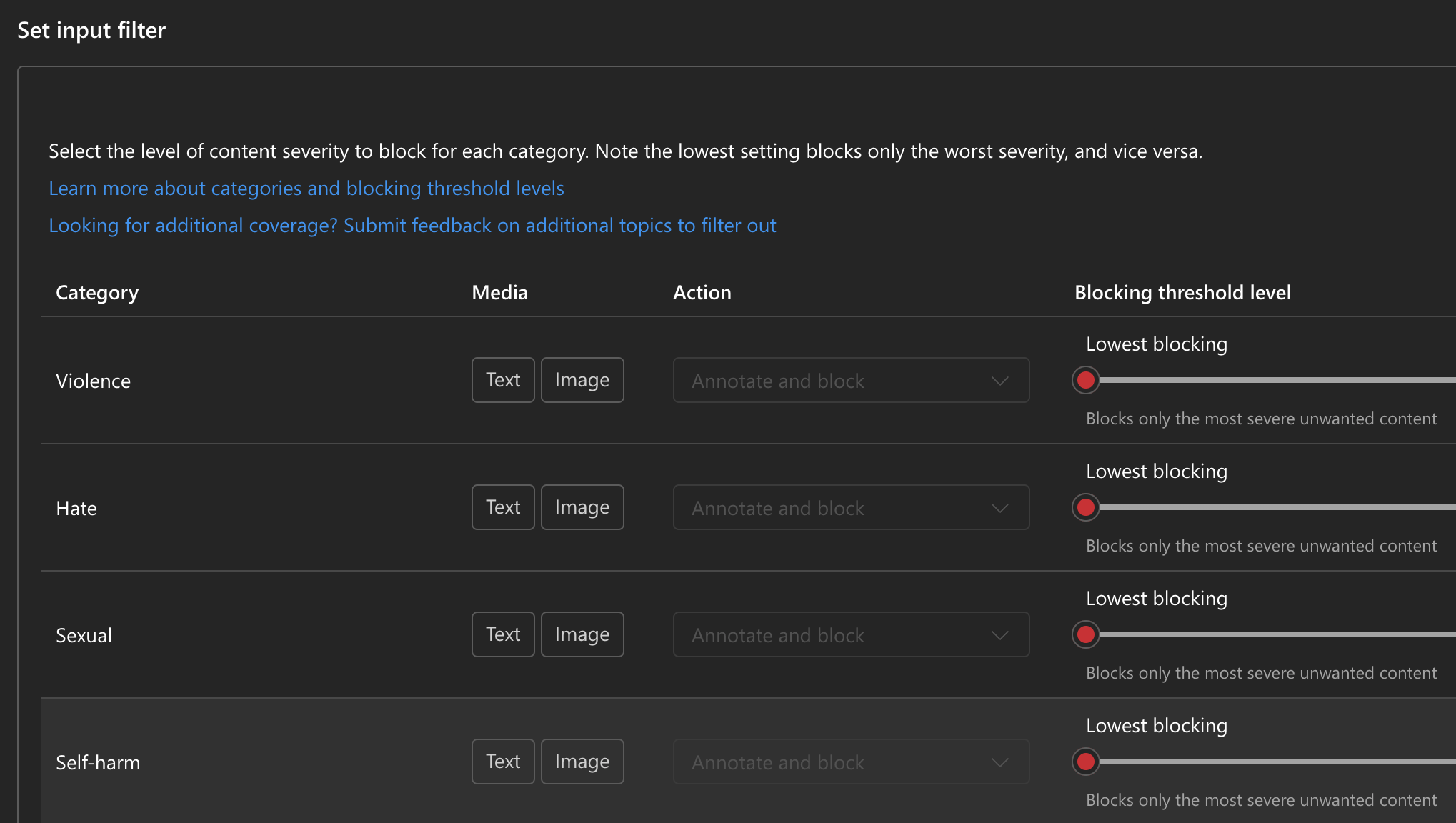

- enabling custom (personalized) safety filters,
- lowering the thresholds on all other categories as far as allowed, and
- requesting an official Self-Harm exception through the standard escalation channel,

the model still consistently blocks or redacts medically necessary content such as:

- “suicidal thoughts considered / denied”
- “no self-injury intentions”
- “patient reports past suicidal ideation”
- other routine psychiatric assessment terminology.

These expressions are mandatory in clinical records and cannot be removed.
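When escalating, it may help to show support exactly which filter fires and at what severity. The following is a minimal sketch, assuming the response shape described in the Azure OpenAI content-filtering documentation: an `error` object with code `content_filter` when the prompt itself is rejected, and `prompt_filter_results` plus per-choice `content_filter_results` otherwise. The helpers `diagnose` and `filtered_categories` are hypothetical names, and the field names should be checked against the API version actually in use.

```python
from typing import Any

# Harm categories as they appear in Azure OpenAI content-filter annotations
# (assumption: verify against the API version you call).
CATEGORIES = ("hate", "sexual", "violence", "self_harm")


def filtered_categories(results: dict[str, Any]) -> list[str]:
    """Return 'category (severity)' for every category marked as filtered."""
    hits = []
    for name in CATEGORIES:
        entry = results.get(name) or {}
        if entry.get("filtered"):
            hits.append(f"{name} ({entry.get('severity', 'unknown')})")
    return hits


def diagnose(body: dict[str, Any]) -> dict[str, list[str]]:
    """Summarise which filter categories fired on the prompt and on the completion.

    `body` is the parsed JSON of a chat-completions response or error (assumed shape).
    """
    report: dict[str, list[str]] = {"prompt": [], "completion": []}

    # Prompt rejected: assumed to arrive as an error whose code is "content_filter".
    error = body.get("error")
    if error and error.get("code") == "content_filter":
        inner = error.get("innererror") or {}
        report["prompt"] = filtered_categories(inner.get("content_filter_result") or {})
        return report

    # Prompt accepted: annotations for the input ...
    for prompt in body.get("prompt_filter_results", []):
        report["prompt"] += filtered_categories(prompt.get("content_filter_results") or {})

    # ... and for each generated choice (finish_reason is "content_filter" if cut off).
    for choice in body.get("choices", []):
        report["completion"] += filtered_categories(choice.get("content_filter_results") or {})

    return report


if __name__ == "__main__":
    # Hypothetical example of a rejected prompt, not a captured service response.
    blocked = {
        "error": {
            "code": "content_filter",
            "innererror": {
                "code": "ResponsibleAIPolicyViolation",
                "content_filter_result": {"self_harm": {"filtered": True, "severity": "medium"}},
            },
        }
    }
    print(diagnose(blocked))  # {'prompt': ['self_harm (medium)'], 'completion': []}
```

Separating prompt-side from completion-side hits matters here: a block on the dictated input and a block on the generated note may be governed by different filter settings.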

Impact

The safety filter currently prevents:

- generating clinical notes,
- transcribing real therapy sessions, and
- running automated documentation workflows for licensed clinicians.

If we cannot resolve this issue, we will be forced to switch providers, because moderation blocks make our use case technically impossible.

Our Question

Is it possible to obtain a full exemption from the Self-Harm axis specifically for server-to-server, authenticated, clinician-only medical workflows?

Or alternatively:

Is there any configuration, endpoint, deployment mode, or model variant that allows processing psychiatric terminology without triggering a refusal?

Are there upcoming changes to the moderation stack that would support medically supervised scenarios?

Is there a documented best practice for obtaining clinical exemptions similar to those used by medical researchers?

Context

- Deployment: Azure OpenAI, Switzerland North
- Use case: transcription of clinical psychology / psychiatry sessions
- Users: authenticated licensed clinicians (not the general public)
- Data: provided with patient consent, stored in a healthcare-compliant environment

We already opened a support request and were told the exception request had been forwarded, but we have not seen any change.

We would appreciate concrete technical guidance or escalation steps, as safety filtering currently blocks safe, medically legitimate content that clinicians must document.

Thank you.