You're getting about 7ms/read on a 6TB database with lots of IO, which is reasonable. Do you have an actual problem or just noticing the IO wait time? This might be acceptable, but you're probably a bit under-sized from an IO and VM point-of-view. Nothing wrong with that so long as your performance is acceptable and you can perform maintenance tasks in a reasonable time frame. But everything is harder and takes longer when you're running on under-sized infrastructure.

Using Premium SSDs. It is an Azure Win server 2019 VM.

How many disks and what SKU? Are they in a Storage Space (eg managed by the Azure SQL Server resource blade) or mounted individually?

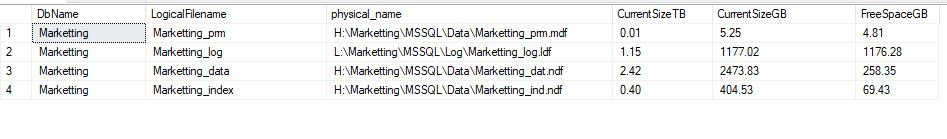

how we can improve I/O demands for this database.

There's nothing you can do with the file and filegroup design that will make a difference. And moving tables between filegroups won't help either.

From an infrastructure point-of-view add more disks and/or increase the VM size to provide more cache memory and possibly space for buffer pool extensions.

Each P30 disk you add gives you 5000IOPS or 200MB/sec of additional throughput in addition to 1TB of usable space. So storage provisioning in Azure isn't just about the size of your database. You may need 20TB of space to get the performance you want on a 5TB database.

But before you do that, evaluate whether some of your large tables can be compressed using PAGE or Columnstore compression, or your queries can be optimized to not have to read so much data. As I might have said once or twice before, turn on the Query Store. You will want to look at queries driving IO, both Logical Reads and Physical Reads (including Read-Ahead Reads).