AKS clusters can be shared across multiple tenants in different ways. In some cases, diverse applications run in the same cluster. In other cases, multiple instances of the same application run in the same shared cluster, one for each tenant. All these types of sharing are frequently described using the umbrella term multitenancy.

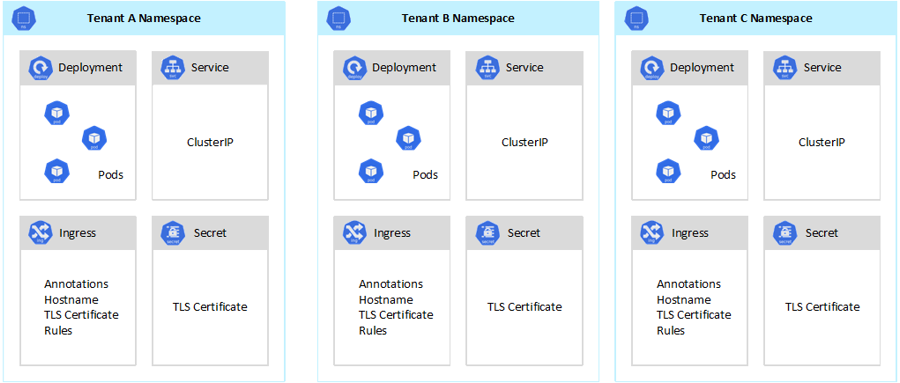

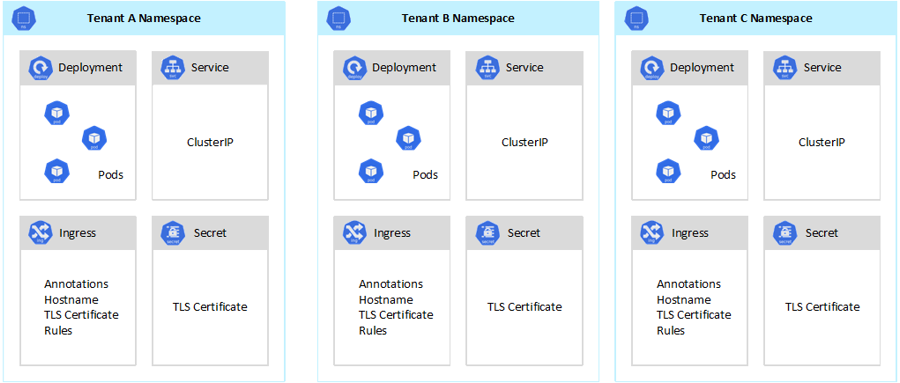

For example, the following picture shows a typical SaaS provider model that hosts multiple instances of the same application on the same cluster, one for each tenant. Each application instance lives in a separate namespace.

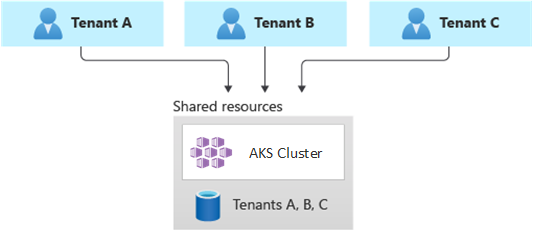

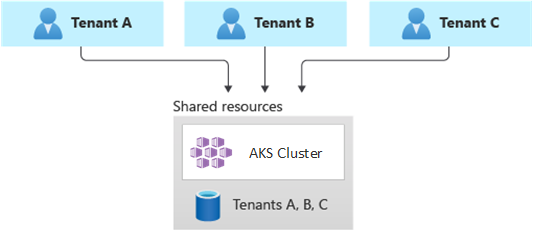
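The namespace-per-tenant layout is easy to script. The following is a minimal Python sketch, not an AKS or Kubernetes tool: it builds one `Namespace` manifest per tenant and prints them as a JSON `List` that `kubectl apply -f -` can read. The `tenant-` prefix, the `example.com/tenant` label, and the tenant names are hypothetical.

```python
import json
import re

# Namespace names must be DNS-1123 labels: lowercase alphanumerics and '-', at most 63 chars.
DNS1123_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")

def tenant_namespace(tenant: str) -> dict:
    """Build a Namespace manifest for one tenant (hypothetical 'tenant-' naming scheme)."""
    name = f"tenant-{tenant}"
    if not DNS1123_LABEL.fullmatch(tenant) or len(name) > 63:
        raise ValueError(f"invalid tenant identifier: {tenant!r}")
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            # Hypothetical label that makes a tenant's namespace easy to select.
            "labels": {"example.com/tenant": tenant},
        },
    }

def tenant_namespaces(tenants: list[str]) -> dict:
    """Wrap one Namespace per tenant in a v1 List so all of them apply in one call."""
    return {"apiVersion": "v1", "kind": "List", "items": [tenant_namespace(t) for t in tenants]}

if __name__ == "__main__":
    # Hypothetical tenants; pipe the output to `kubectl apply -f -`.
    print(json.dumps(tenant_namespaces(["contoso", "fabrikam"]), indent=2))
```

Validating the tenant identifier itself (rather than only the prefixed name) keeps it usable as both part of the namespace name and a label value.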

In a fully multitenant deployment, a single application serves the requests of all the tenants, and all the Azure resources, including the AKS cluster, are shared. In this context, you have only one set of infrastructure to deploy, monitor, and maintain, and all the tenants use it, as illustrated in the following diagram:

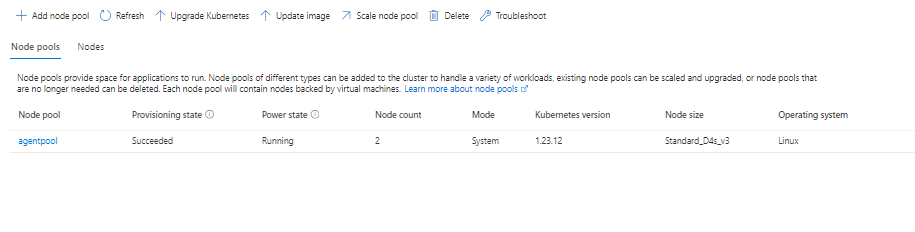

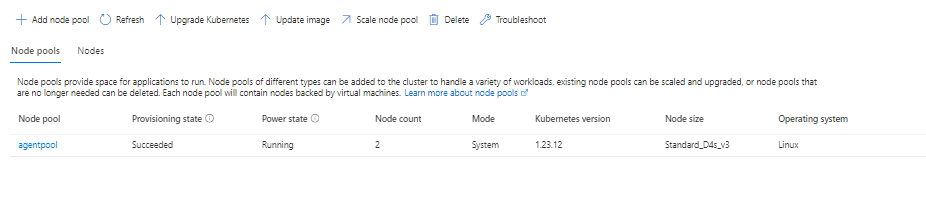

To keep up with the traffic demand generated by tenant applications, you can enable the cluster autoscaler to scale the agent nodes of your Azure Kubernetes Service (AKS) cluster. Autoscaling helps the system stay responsive as demand rises and falls. When you enable autoscaling for a node pool, you specify a minimum and a maximum number of nodes based on the expected workload sizes. By setting the maximum high enough, you can ensure that there's room for all the tenant pods in the cluster, regardless of the namespace they run in.
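To pick a maximum that leaves room for every tenant, you can start from a rough capacity estimate. The Python sketch below is a back-of-the-envelope calculation, not how the cluster autoscaler itself decides (it reacts to pods that can't be scheduled): it sums the CPU and memory requests of all tenant pods, adds a headroom percentage, and divides by one node's allocatable capacity. The workload sizes, the allocatable values, and the 20% headroom are hypothetical, and the result is only a starting point for the maximum count you set when enabling the autoscaler on the node pool (for example, `--max-count` on `az aks nodepool update`).

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Workload:
    """Per-pod CPU and memory *requests* of one deployment; the scheduler packs by requests."""
    replicas: int
    cpu_millicores: int  # CPU request per pod, in millicores
    memory_mib: int      # memory request per pod, in MiB

def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division, avoiding floating-point rounding surprises."""
    return -(-a // b)

def estimate_max_nodes(workloads, node_cpu_millicores: int, node_memory_mib: int,
                       headroom_percent: int = 20) -> int:
    """Rough node count needed to fit every tenant's pod requests plus headroom.

    Ignores DaemonSets, per-node pod limits, and bin-packing losses beyond the headroom.
    """
    total_cpu = sum(w.replicas * w.cpu_millicores for w in workloads)
    total_mem = sum(w.replicas * w.memory_mib for w in workloads)
    scale = 100 + headroom_percent  # extra room for bursts and imperfect packing
    nodes_for_cpu = ceil_div(total_cpu * scale, node_cpu_millicores * 100)
    nodes_for_mem = ceil_div(total_mem * scale, node_memory_mib * 100)
    # Whichever resource runs out first decides the node count.
    return max(nodes_for_cpu, nodes_for_mem)

if __name__ == "__main__":
    # Hypothetical per-tenant footprint: a web tier and a worker tier.
    per_tenant = [Workload(4, 500, 512), Workload(2, 1000, 1024)]
    all_tenants = per_tenant * 3  # three tenants, one application instance each
    # Hypothetical allocatable capacity of one node.
    print(estimate_max_nodes(all_tenants, node_cpu_millicores=3800, node_memory_mib=12000))
    # → 4
```

With these numbers, CPU is the constraint: 12,000 millicores plus 20% headroom needs 4 nodes with 3,800 allocatable millicores each, while memory alone would need only 2.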

When traffic increases, the cluster autoscaler adds agent nodes so that pods don't remain in a pending state because of a shortage of CPU or memory.

Likewise, when the load decreases, the cluster autoscaler removes agent nodes from the node pool, within the specified boundaries, which helps reduce your operational costs.
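The effect of the minimum and maximum boundaries can be illustrated with a deliberately simplified Python model. It assumes you already know how many nodes each interval's demand would need, ignores the autoscaler's scheduling simulation and scale-down delays, and simply clamps that number to the node pool's bounds. Intervals where demand exceeds the maximum are flagged, because those pods would stay pending. The demand series and bounds are hypothetical.

```python
def simulate_node_counts(demand_nodes: list[int], min_count: int,
                         max_count: int) -> list[tuple[int, bool]]:
    """Return (node_count, has_pending_pods) per interval under a simplified autoscaler."""
    if not 0 <= min_count <= max_count:
        raise ValueError("require 0 <= min_count <= max_count")
    results = []
    for needed in demand_nodes:
        # Follow the demand, but never go above max_count or below min_count.
        count = max(min_count, min(needed, max_count))
        # Demand beyond max_count gets no nodes, so some pods stay Pending.
        results.append((count, needed > max_count))
    return results

if __name__ == "__main__":
    # Hypothetical nodes needed per interval over a day of tenant traffic.
    demand = [1, 3, 6, 9, 4, 0]
    print(simulate_node_counts(demand, min_count=2, max_count=8))
    # → [(2, False), (3, False), (6, False), (8, True), (4, False), (2, False)]
```

The minimum keeps a baseline of capacity during quiet periods, and the maximum caps cost, so it should be sized for peak demand across all tenants.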

To reduce the risk of downtime that could affect tenant applications during cluster or node pool upgrades, schedule AKS Planned Maintenance to occur during off-peak hours. Planned Maintenance lets you schedule weekly maintenance windows to update the control plane and the node pools of the AKS clusters that run tenant applications, which minimizes workload impact. You can schedule one or more weekly maintenance windows on your cluster by specifying a day, or a time range on a specific day. All maintenance operations then occur during the scheduled windows.
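A weekly window is essentially a day of the week plus an hour range. The following Python sketch models only that idea, for example to check whether a given time falls inside one of your windows. It is not the AKS API; the actual windows are configured on the cluster (for example, with the `az aks maintenanceconfiguration` commands). The sketch assumes windows are expressed in UTC with whole-hour boundaries, and the specific days and hours are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class WeeklyWindow:
    """One weekly window: a weekday plus an hour range [start_hour, end_hour) in UTC."""
    weekday: int     # 0 = Monday ... 6 = Sunday, same convention as datetime.weekday()
    start_hour: int  # inclusive, 0-23
    end_hour: int    # exclusive, 1-24

    def __post_init__(self):
        if not 0 <= self.weekday <= 6 or not 0 <= self.start_hour < self.end_hour <= 24:
            raise ValueError("invalid window")

    def contains(self, moment: datetime) -> bool:
        # Compare in UTC, because the windows in this sketch are defined in UTC.
        utc = moment.astimezone(timezone.utc)
        return utc.weekday() == self.weekday and self.start_hour <= utc.hour < self.end_hour

def in_maintenance(moment: datetime, windows: list[WeeklyWindow]) -> bool:
    """True if the timezone-aware moment falls inside any of the windows."""
    if moment.tzinfo is None:
        raise ValueError("use a timezone-aware datetime")
    return any(w.contains(moment) for w in windows)

if __name__ == "__main__":
    # Hypothetical schedule: Sunday 01:00-05:00 and Wednesday 02:00-04:00 UTC.
    windows = [WeeklyWindow(6, 1, 5), WeeklyWindow(2, 2, 4)]
    print(in_maintenance(datetime(2024, 6, 2, 3, 30, tzinfo=timezone.utc), windows))  # Sunday: True
    print(in_maintenance(datetime(2024, 6, 3, 3, 30, tzinfo=timezone.utc), windows))  # Monday: False
```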

When you share an AKS cluster between multiple teams within an organization, you need to apply the principle of least privilege to isolate tenants from one another. In particular, make sure that users can access only their own Kubernetes namespaces and resources when they use tools such as kubectl, Helm, Flux, or Argo CD.
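In Kubernetes, namespace-scoped access is usually expressed with a `Role` and a `RoleBinding`. The Python sketch below generates both for one tenant namespace and prints them as a JSON `List` for `kubectl apply -f -`. The role name, the exact resources and verbs, and the group identifier are hypothetical; with Microsoft Entra ID integration, a group subject is commonly referenced by its object ID. Because the permissions live in a namespaced `Role` rather than a `ClusterRole`, the group gets nothing outside that namespace, and since RBAC is enforced by the API server, this applies to any tool that calls the API with the user's credentials, such as kubectl or Helm.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"

def tenant_rbac(namespace: str, group_id: str, role_name: str = "tenant-developer") -> dict:
    """Build a namespaced Role plus a RoleBinding that grants it to one group."""
    edit_verbs = ["get", "list", "watch", "create", "update", "patch", "delete"]
    role = {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",  # namespaced: grants nothing outside `namespace`
        "metadata": {"name": role_name, "namespace": namespace},
        "rules": [
            # Hypothetical minimal set; extend only as the tenant team actually needs.
            {"apiGroups": [""], "resources": ["pods", "pods/log", "services", "configmaps"],
             "verbs": edit_verbs},
            {"apiGroups": ["apps"], "resources": ["deployments", "statefulsets"],
             "verbs": edit_verbs},
            {"apiGroups": ["batch"], "resources": ["jobs", "cronjobs"], "verbs": edit_verbs},
        ],
    }
    binding = {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-binding", "namespace": namespace},
        "roleRef": {"apiGroup": RBAC_GROUP, "kind": "Role", "name": role_name},
        "subjects": [{"apiGroup": RBAC_GROUP, "kind": "Group", "name": group_id}],
    }
    return {"apiVersion": "v1", "kind": "List", "items": [role, binding]}

if __name__ == "__main__":
    # Hypothetical namespace and placeholder group object ID.
    manifest = tenant_rbac("tenant-contoso", "00000000-0000-0000-0000-000000000000")
    print(json.dumps(manifest, indent=2))
```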
