Synapse Data flow stuck in queued status

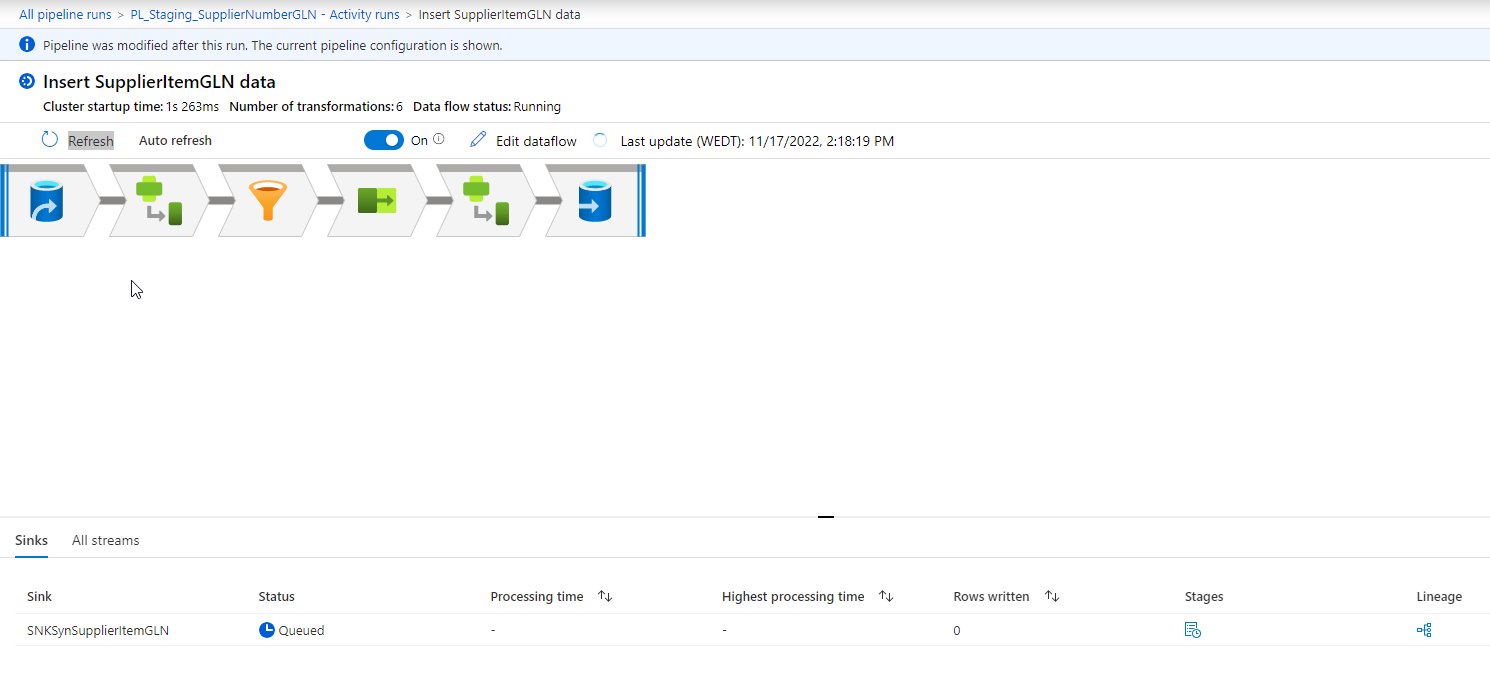

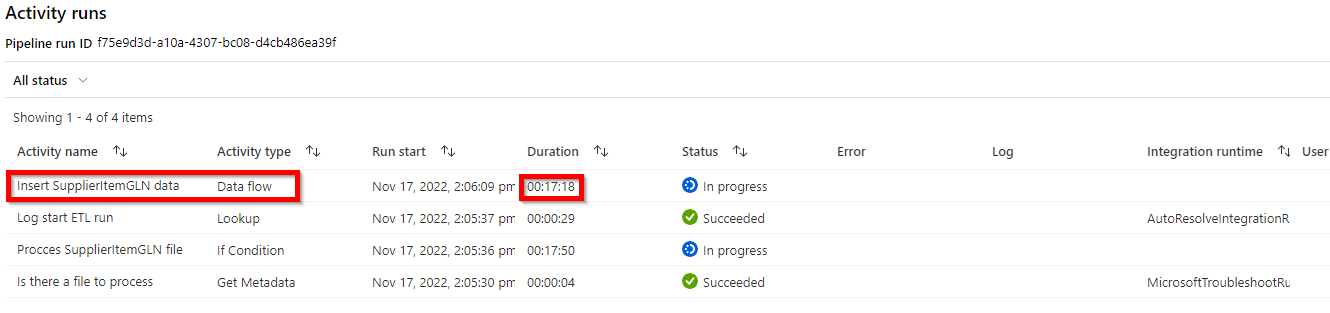

We have a few Excel files that need to be imported into a Synapse Analytics dedicated SQL pool. For this requirement we built a few data flows, one for each Excel file to be imported. We were recently asked to add another one, so I duplicated one of the existing flows and modified it for the new file. When I preview the results in the data flow configuration, everything looks fine, but when I run it in a debug session from the main pipeline, the data flow remains stuck in Queued status (see the screenshots above).

Existing flows for other files using the same integration runtime still work just fine.

I have tried using another integration runtime (we have to use AutoResolve, as we don't have self-hosted runtimes we can use for this purpose) and tried using a medium instead of a small compute cluster, but neither change makes any difference. The Excel file contains about 110,000 rows and is only 5 columns wide, so we're not talking about a lot of data here. I'm stumped as to what might be going on, and I'm hoping someone here can give me some pointers on where to look for the problem.