Hey, I am a beginner in C# and I wanted to know what the difference is between the decimal and double types.


Basically, precision.

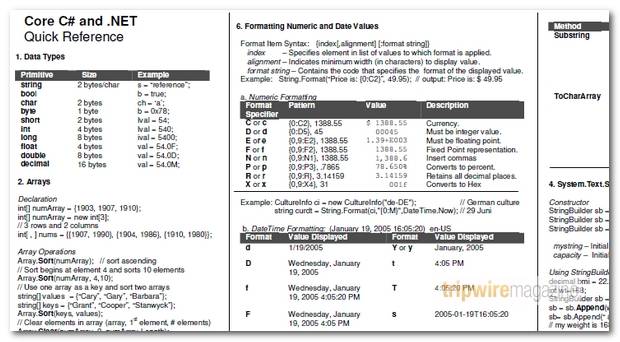

Float: 7 digits (32-bit)

Double: 15-16 digits (64-bit)

Decimal: 28-29 digits (128-bit)
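If you want to check the bit widths yourself, here is a minimal sketch using the built-in `sizeof` operator. It only confirms the storage sizes listed above; it says nothing about how many digits are actually usable.

```csharp
using System;

// sizeof returns the size in bytes for the built-in numeric types.
int floatBits = sizeof(float) * 8;     // 32
int doubleBits = sizeof(double) * 8;   // 64
int decimalBits = sizeof(decimal) * 8; // 128

Console.WriteLine($"float:   {floatBits} bits");
Console.WriteLine($"double:  {doubleBits} bits");
Console.WriteLine($"decimal: {decimalBits} bits");
```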

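Float and double are binary floating-point types, so most decimal fractions (0.1, for example) cannot be stored exactly and are rounded to the nearest representable value. For that reason, they are recommended when you don't care if there is a little rounding here or there. They are widely used for scientific calculations.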

Decimal is different: it stores base-10 digits, so values such as 0.1 or 19.99 are represented exactly. We use it when we want exact decimal values. We usually want this when we are working with money, right?
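A small sketch of that difference, using the classic 0.1 + 0.2 comparison. The variable names are just for illustration. The last part shows that decimal is exact only for values that have a finite base-10 expansion; something like 1/3 still gets rounded.

```csharp
using System;

// double: 0.1, 0.2 and 0.3 are each rounded to the nearest binary fraction,
// so the sum does not land exactly on the stored value of 0.3.
double a = 0.1, b = 0.2;
bool doubleEqual = (a + b) == 0.3;

// decimal: the same values are stored exactly as base-10 digits.
decimal x = 0.1m, y = 0.2m;
bool decimalEqual = (x + y) == 0.3m;

// decimal still rounds when a result has no finite base-10 expansion.
decimal third = 1m / 3m;
bool roundTrips = third * 3m == 1m;

Console.WriteLine($"double:  0.1 + 0.2 == 0.3   -> {doubleEqual}");   // False
Console.WriteLine($"decimal: 0.1m + 0.2m == 0.3m -> {decimalEqual}"); // True
Console.WriteLine($"decimal: (1m / 3m) * 3m == 1m -> {roundTrips}");  // False
```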

Because decimal arithmetic is carried out in software rather than by the CPU's floating-point hardware, working with decimals is slower.
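If you want to see the speed difference on your own machine, a rough sketch with `Stopwatch` could look like this. The iteration count and the value 1.5 are arbitrary choices for illustration, and this is not a rigorous benchmark: results depend on hardware, runtime, and build configuration.

```csharp
using System;
using System.Diagnostics;

const int Iterations = 1_000_000; // arbitrary size chosen for this sketch

// Time repeated addition with double.
var stopwatch = Stopwatch.StartNew();
double doubleSum = 0;
for (int i = 0; i < Iterations; i++)
{
    doubleSum += 1.5;
}
stopwatch.Stop();
TimeSpan doubleTime = stopwatch.Elapsed;

// Time the same loop with decimal.
stopwatch.Restart();
decimal decimalSum = 0;
for (int i = 0; i < Iterations; i++)
{
    decimalSum += 1.5m;
}
stopwatch.Stop();
TimeSpan decimalTime = stopwatch.Elapsed;

Console.WriteLine($"double:  sum = {doubleSum}, time = {doubleTime.TotalMilliseconds} ms");
Console.WriteLine($"decimal: sum = {decimalSum}, time = {decimalTime.TotalMilliseconds} ms");
```

For a few calculations the gap will not matter; it tends to show up only in tight loops over a lot of data.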

In terms of magnitude, we can store much larger numbers in a double, but with less precision.

In a decimal we can store only smaller numbers, but with more precision.
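To make the range versus precision trade-off concrete, here is a short sketch. The sample values (1e300 and 1e16) are picked only for illustration.

```csharp
using System;

// Range: double happily holds 1e300, which is far beyond decimal.MaxValue
// (79228162514264337593543950335, roughly 7.9 x 10^28).
double bigDouble = 1e300;
bool overflowed = false;
try
{
    _ = (decimal)bigDouble; // explicit conversion checks the range at run time
}
catch (OverflowException)
{
    overflowed = true;
}

// Precision: at 1e16 a double can no longer tell n from n + 1,
// while a decimal of the same size still can.
double d = 1e16;
bool doubleLostOne = (d + 1) == d;

decimal m = 10_000_000_000_000_000m;
bool decimalKeptOne = (m + 1) != m;

Console.WriteLine($"1e300 fits in decimal?   {!overflowed}");    // False
Console.WriteLine($"double 1e16 + 1 == 1e16? {doubleLostOne}");  // True
Console.WriteLine($"decimal keeps the + 1?   {decimalKeptOne}"); // True
```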

+---------+----------------+---------+----------+-----------------------------------------------+
| C#      | .NET Framework | Signed? | Bytes    | Possible Values                               |
| Type    | (System) type  |         | Occupied |                                               |
+---------+----------------+---------+----------+-----------------------------------------------+
| sbyte   | System.SByte   | Yes     | 1        | -128 to 127                                   |
| short   | System.Int16   | Yes     | 2        | -32768 to 32767                               |
| int     | System.Int32   | Yes     | 4        | -2147483648 to 2147483647                     |
| long    | System.Int64   | Yes     | 8        | -9223372036854775808 to 9223372036854775807   |
| byte    | System.Byte    | No      | 1        | 0 to 255                                      |
| ushort  | System.UInt16  | No      | 2        | 0 to 65535                                    |
| uint    | System.UInt32  | No      | 4        | 0 to 4294967295                               |
| ulong   | System.UInt64  | No      | 8        | 0 to 18446744073709551615                     |
| float   | System.Single  | Yes     | 4        | Approximately ±1.5 x 10^-45 to ±3.4 x 10^38   |
|         |                |         |          | with 7 significant figures                    |
| double  | System.Double  | Yes     | 8        | Approximately ±5.0 x 10^-324 to ±1.7 x 10^308 |
|         |                |         |          | with 15 or 16 significant figures             |
| decimal | System.Decimal | Yes     | 16       | Approximately ±1.0 x 10^-28 to ±7.9 x 10^28   |
|         |                |         |          | with 28 or 29 significant figures             |
| char    | System.Char    | N/A     | 2        | Any UTF-16 code unit (16 bit)                 |
| bool    | System.Boolean | N/A     | 1 / 2    | true or false                                 |
+---------+----------------+---------+----------+-----------------------------------------------+

Use decimal for counted values

Use float/double for measured values

Some examples:

We always count money and should never measure it. We usually measure distance. We often count scores.
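As a sketch of why counting money in a double goes wrong, consider adding ten payments of 0.10 each. The amounts are made up for illustration.

```csharp
using System;

double doubleTotal = 0.0;
decimal decimalTotal = 0.0m;

// Add ten payments of 0.10 each.
for (int i = 0; i < 10; i++)
{
    doubleTotal += 0.10;   // each step carries a tiny binary rounding error
    decimalTotal += 0.10m; // each step is exact in base 10
}

bool doubleIsOne = doubleTotal == 1.0;
bool decimalIsOne = decimalTotal == 1.0m;

Console.WriteLine($"double total equals 1.0?  {doubleIsOne}");  // False
Console.WriteLine($"decimal total equals 1.0? {decimalIsOne}"); // True
```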

Hi Andrew,

Please refer to the following blog about the difference between decimal and double:


Best Regards.


As far as I know, decimal is better for financial calculations because you do not lose decimal precision. The reason is that a decimal is not stored as a binary floating-point value: it is stored as an integer together with a base-10 scale factor, which means the CPU has to perform more arithmetic operations when you calculate with it. Although this has no noticeable impact in simple or common applications, it can have a big performance impact when you run calculations over a lot of data.
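You can look at that internal layout with `decimal.GetBits`, which returns four 32-bit integers: three holding the 96-bit integer part and one holding the sign and the scale. A minimal sketch, with 12.34m as an arbitrary sample value:

```csharp
using System;

// 12.34m is stored as the integer 1234 with a scale of 2 (1234 / 10^2).
int[] bits = decimal.GetBits(12.34m);

int low = bits[0];                  // lowest 32 bits of the 96-bit integer
int mid = bits[1];                  // middle 32 bits
int high = bits[2];                 // highest 32 bits
int scale = (bits[3] >> 16) & 0xFF; // power of ten to divide by
bool isNegative = bits[3] < 0;      // the sign lives in the top bit

Console.WriteLine($"integer part (low word): {low}");   // 1234
Console.WriteLine($"scale:                   {scale}"); // 2
Console.WriteLine($"negative:                {isNegative}");
```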