PROBLEM CONTEXT

I am building a data pipeline in my Synapse Analytics workspace

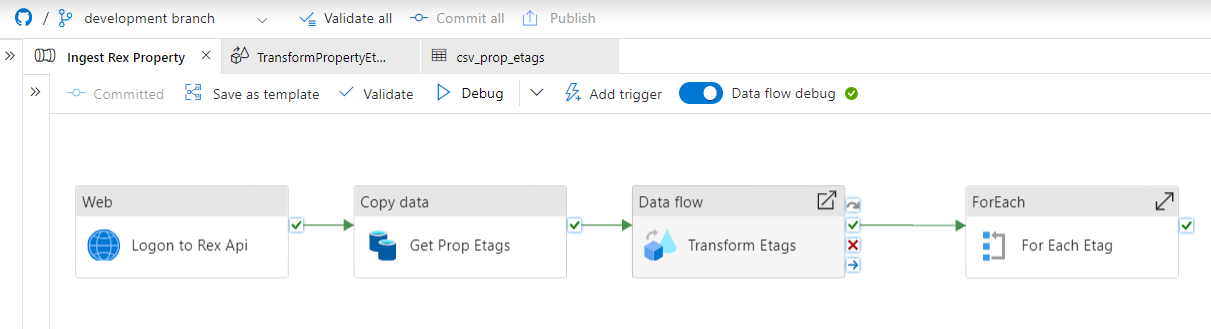

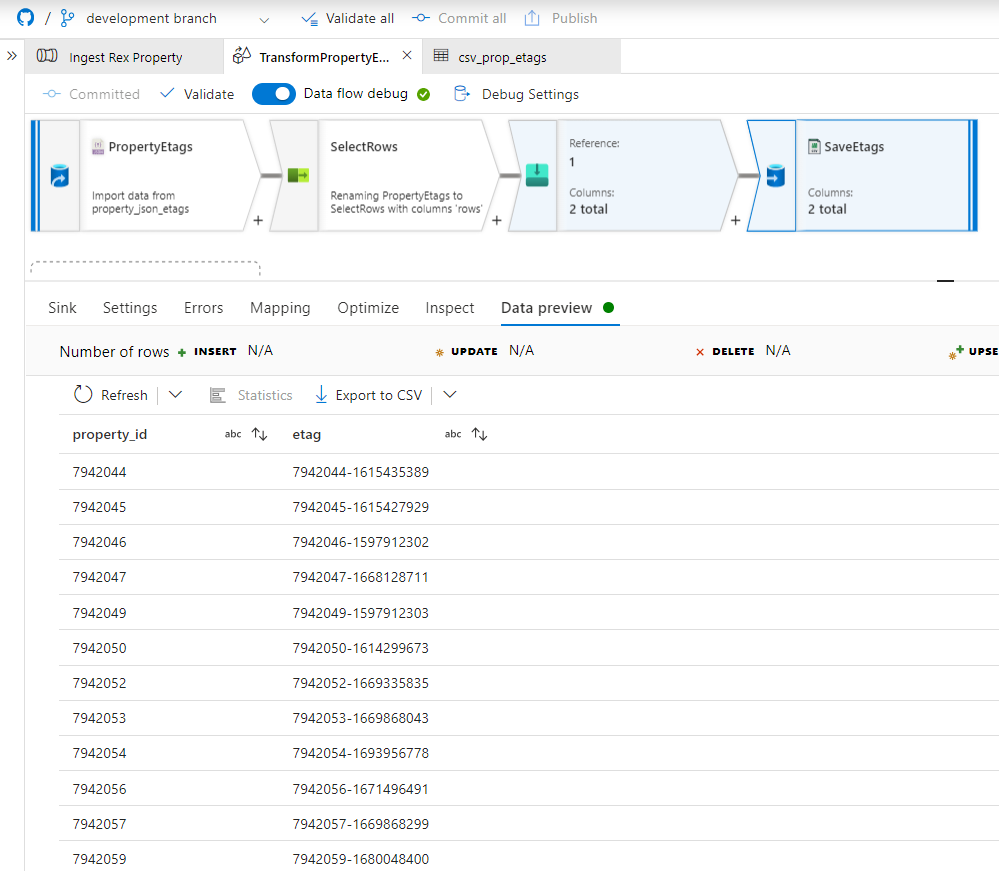

The pipeline successfully connects to an API and sends a request to an endpoint that returns rows of data as a Json array. This Json document is subsequently saved as a Json Dataset for downstream parsing and flattening. The data is finally transformed and saved as a 2 column csv Dataset.

The intent was then to use the 2 column csv Dataset as an input source of row data for a ForEach activity in the pipeline. Each iteration of the ForEach would then use the row property_id to send an API request to an enpoint that would return the details of an individual property. Then it would transform and save these details to the staging area of a data lakehouse database, using a stored procedure.

THE PROBLEM

I am new to the use of synapse and still learning what the best patterns, tools and techniques are for Ingesting, Transforming and Storing data for downstream analytics and machine learning workloads.

In this instance I am uncertain how I can iterate over the dataset to carry out the loop activities.

SUPPORTING MATERIAL

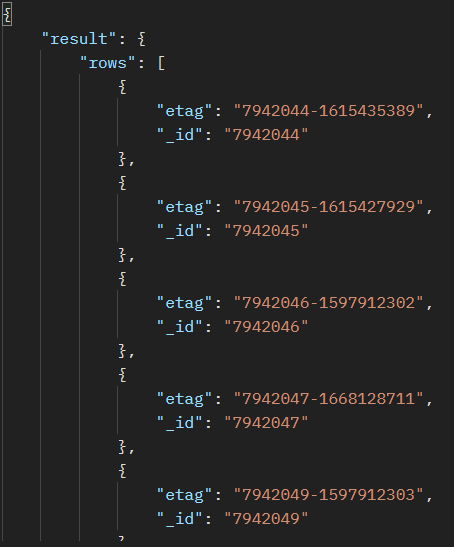

The raw API Json data is illustrated below:

The data pipeline is illustrated below for reference:

The csv dataset produced by the Data flow Sink is illustrated below:

I tried to use the csv dataset as input to the ForEach activity and was uncertain as to how to acccomplish this. I was expecting that I could iterate over each row and extract the property_id and etag. And subsequently use the unique property_id on each iteration to call the API and return the detailed property details to be used in updating the staging area.

I have yet to be successfull in getting access to the relevant data on each iteration. Searching online has revealed many examples that use arrays as inputs to the ForEach activity, however I am unsure whether this even applies to my scenario.

Thank you in advance