@Billy Cheng - Thanks for the question and for using the MS Q&A platform.

You receive this error when you exceed the core limit for a region. You need to raise a support ticket to increase the core limit for the West Europe region.

Cause: Quotas are applied per resource group, subscription, account, and other scopes. For example, your subscription may be configured to limit the number of cores for a region. If you attempt to deploy a virtual machine with more cores than the permitted amount, you receive an error stating that the quota has been exceeded.
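The check described above comes down to simple arithmetic. Here is a minimal sketch of it; `would_exceed_quota` and its parameters are hypothetical names used for illustration (not part of any Azure API), and the numbers are made up.

```python
def would_exceed_quota(current_usage: int, requested_cores: int, limit: int) -> bool:
    """Return True if deploying `requested_cores` more cores would pass the limit."""
    return current_usage + requested_cores > limit


# Hypothetical numbers: 8 of 10 regional vCPUs are already in use.
print(would_exceed_quota(current_usage=8, requested_cores=4, limit=10))  # True
print(would_exceed_quota(current_usage=8, requested_cores=2, limit=10))  # False
```

In the first call the deployment would need 12 cores against a limit of 10, which is the situation that produces the quota error.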

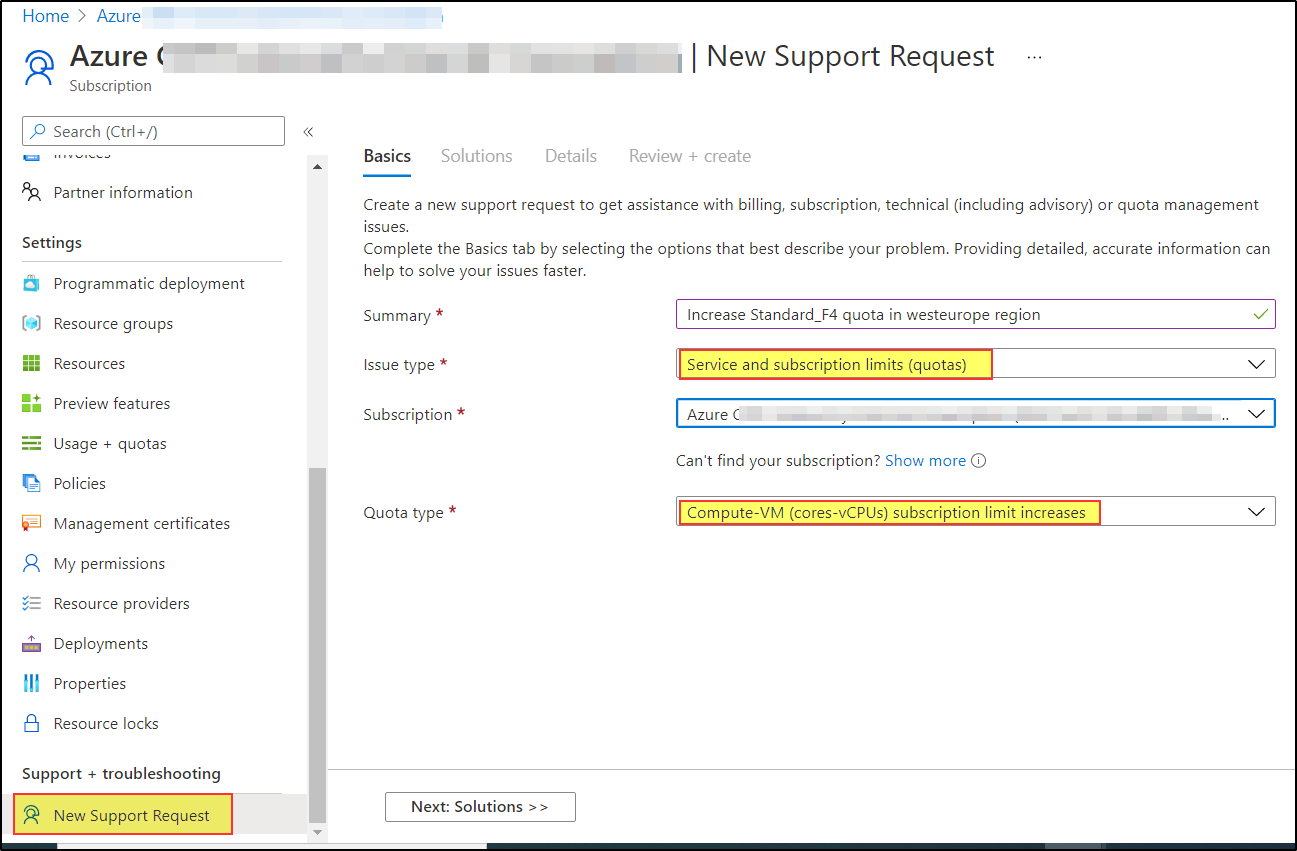

Solution: To request a quota increase, go to the portal and file a support issue. In the support issue, request an increase in your quota for the region into which you want to create the VMs.

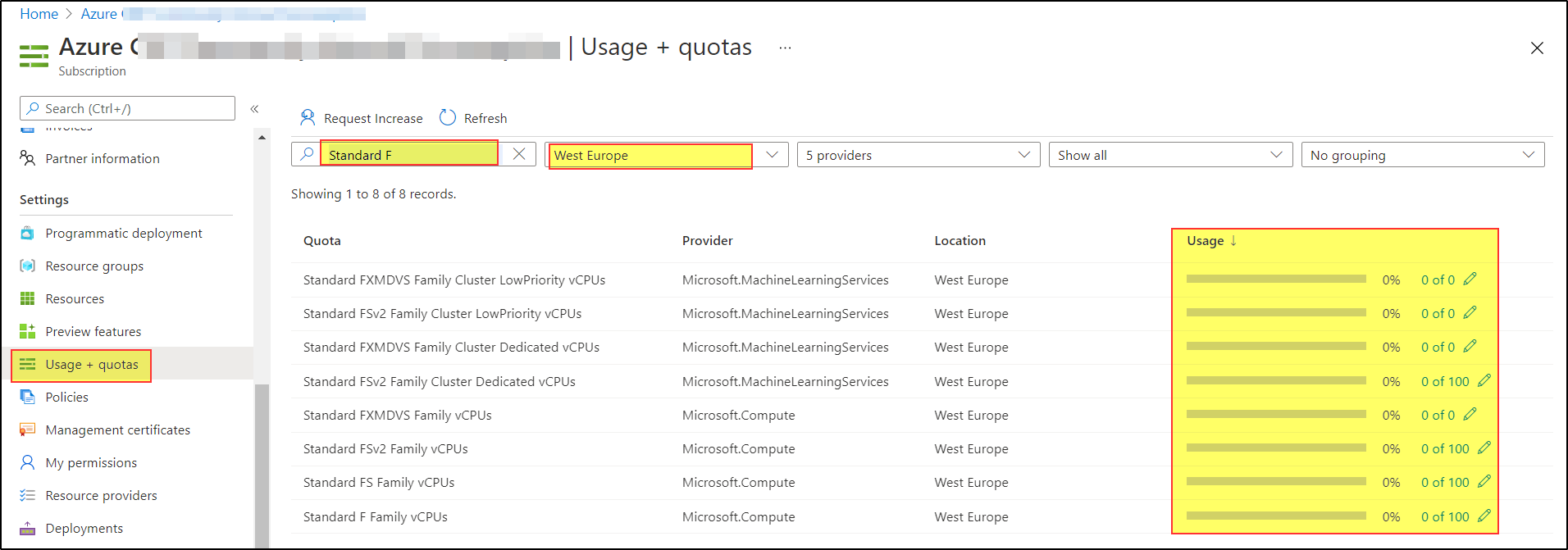

How to check Usage + Quotas for your subscription?

Select your subscription => under Settings, select Usage + quotas => use the filter to select "Standard F" and "West Europe" => check the usage of Total Regional vCPUs. If the quota is fully used, click Request Increase to raise the core limit for the region.
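If you prefer to script this check, the sketch below works on usage records that you have already exported, for example with `az vm list-usage --location westeurope --output json`. The field names (`localName`, `currentValue`, `limit`) are assumptions modeled on that command's output, and the sample data is made up, so please verify both against your own output.

```python
import json

# Made-up sample standing in for exported usage data; verify the field names
# against your own output before relying on them.
SAMPLE_USAGE_JSON = """
[
  {"localName": "Total Regional vCPUs", "currentValue": "10", "limit": "10"},
  {"localName": "Standard FSv2 Family vCPUs", "currentValue": "8", "limit": "10"}
]
"""


def remaining_cores(usage_records, quota_name):
    """Return limit minus current usage for the named quota, or None if absent."""
    for record in usage_records:
        if record.get("localName") == quota_name:
            return int(record["limit"]) - int(record["currentValue"])
    return None


records = json.loads(SAMPLE_USAGE_JSON)
left = remaining_cores(records, "Total Regional vCPUs")
if left is not None and left <= 0:
    print("Regional vCPU quota is full; request an increase.")
```

The helper returns `None` when the quota name is not found, so a missing entry is not mistaken for a full quota.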

For more details, refer to Resolve errors for resource quotas.

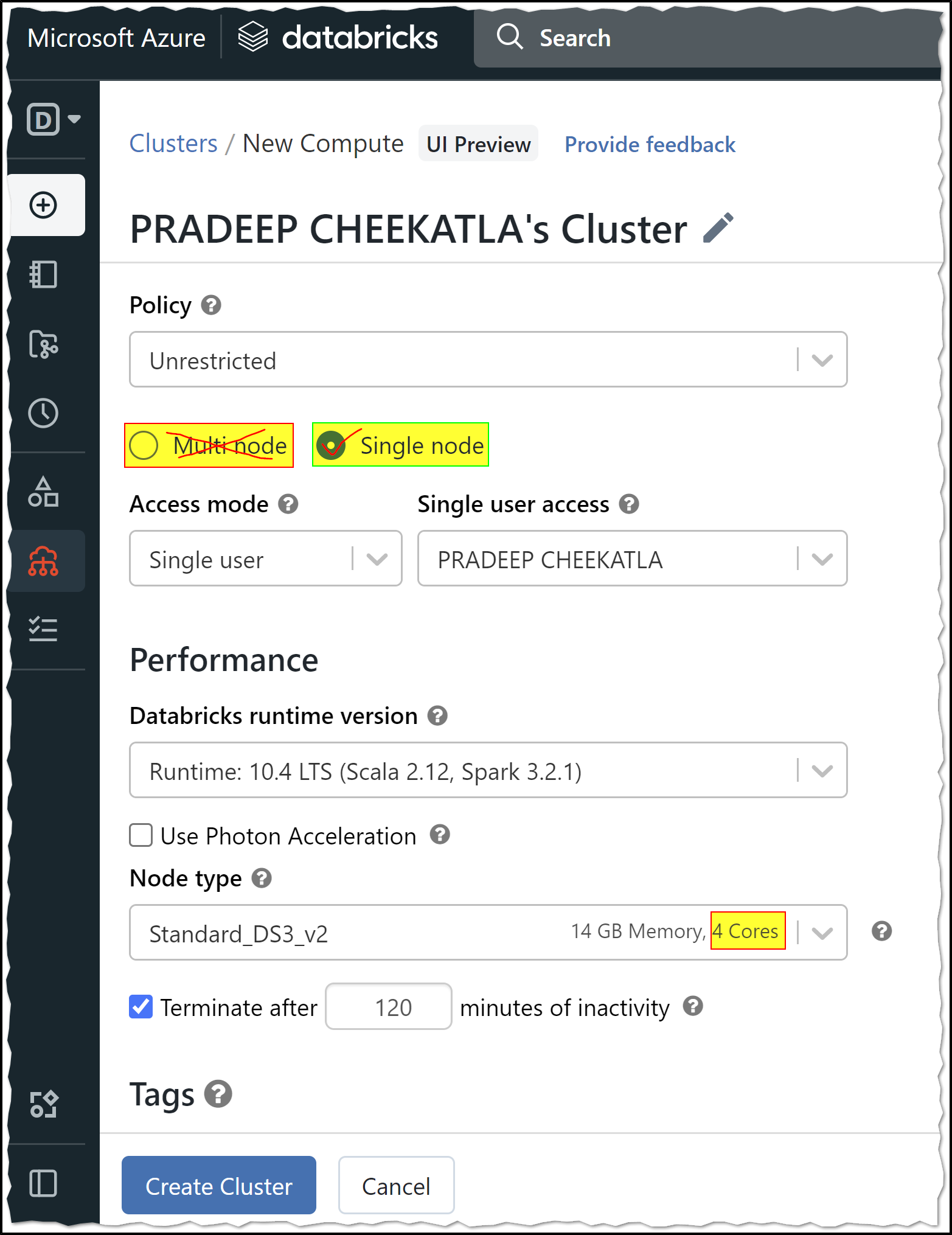

Note: Multi-node Azure Databricks clusters are not available under the Azure free trial/Student/Pass subscription.

Reason: The Azure free trial/Student/Pass subscription has a limit of 4 cores, so you cannot create a multi-node Databricks cluster with a Student subscription because it requires more than 8 cores.

You need to upgrade to a Pay-As-You-Go subscription to create multi-node Azure Databricks clusters.

Note: Azure Student subscriptions aren't eligible for limit or quota increases. If you have a Student subscription, you can upgrade to a Pay-As-You-Go subscription.

You can use an Azure Student subscription to create a Single Node cluster, which has one driver node with 4 cores.

A Single Node cluster is a cluster consisting of a Spark driver and no Spark workers. Such clusters support Spark jobs and all Spark data sources, including Delta Lake. In contrast, Standard clusters require at least one Spark worker to run Spark jobs.
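As a rough sketch, a Single Node cluster can be described as a cluster spec with zero workers. The settings below follow the single-node pattern of the Databricks Clusters API as I understand it; the helper name `single_node_cluster_spec`, the cluster name, the runtime version, and the VM size are placeholders, so please check them against your own workspace.

```python
import json


def single_node_cluster_spec(name, spark_version, node_type_id):
    """Build a cluster spec with a driver and no workers."""
    return {
        "cluster_name": name,
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        "num_workers": 0,  # no Spark workers, driver only
        "spark_conf": {
            "spark.databricks.cluster.profile": "singleNode",
            "spark.master": "local[*]",
        },
        "custom_tags": {"ResourceClass": "SingleNode"},
    }


# Placeholder values: replace with a runtime and VM size available to you.
spec = single_node_cluster_spec("single-node-demo", "13.3.x-scala2.12", "Standard_DS3_v2")
print(json.dumps(spec, indent=2))
```

With `num_workers` set to 0, the only cores consumed are those of the driver VM, which is why a 4-core driver can fit within a 4-core limit.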

Single Node clusters are helpful in the following situations:

- Running single node machine learning workloads that need Spark to load and save data

- Lightweight exploratory data analysis (EDA)

For more details, see Azure Databricks - Single Node clusters.

Hope this helps. Do let us know if you have any further queries.

If this answers your query, do click Accept Answer and Yes for "Was this answer helpful".