Hello anonymous user,

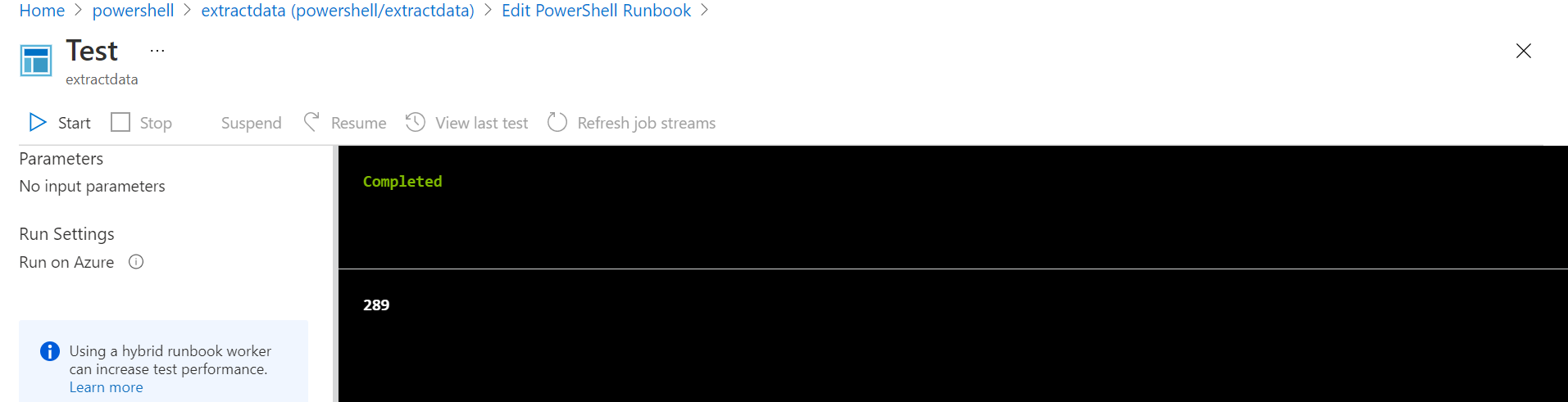

Couple of important points to note while running the powershell scripts in Azure Automation runbook.

Before running your powershell script in Azure Automation runbook, test it in local PowerShell mode and figure out any issues.

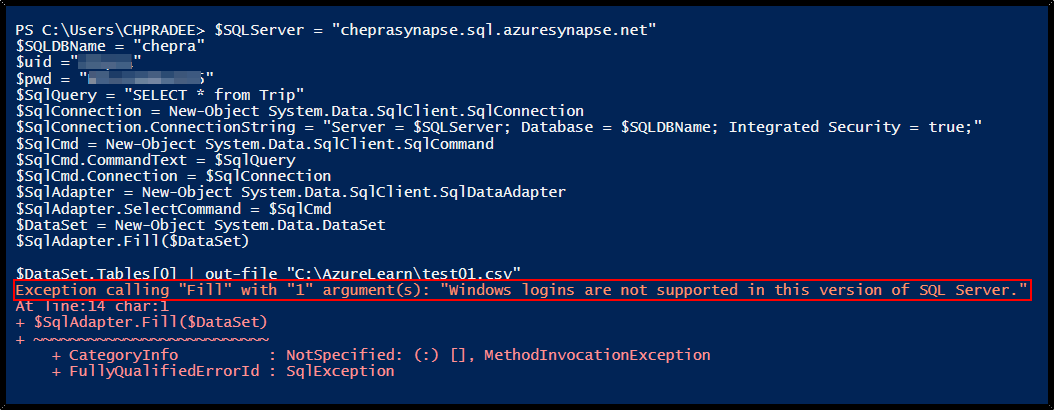

When running the above code in local powershell mode, it got the below error message: Exception calling "Fill" with "1" argument(s): "Windows logins are not supported in this version of SQL Server."

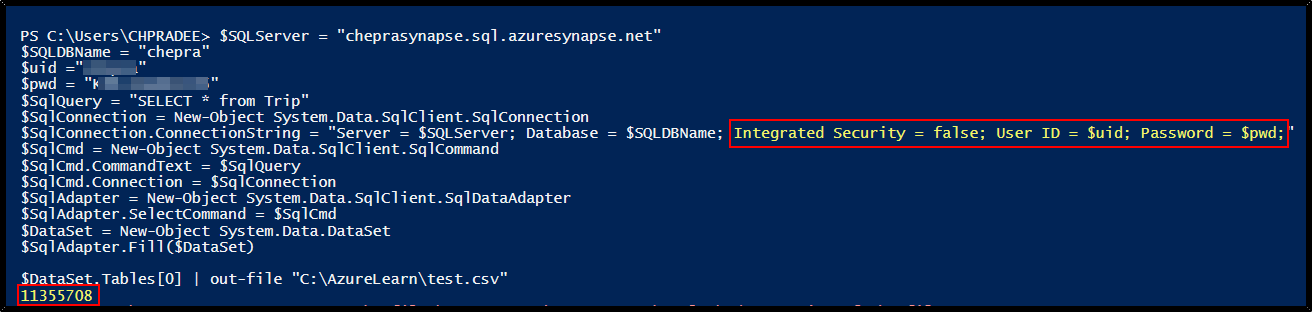

Change Integrated security to false in the connection string and pass username and password which will work without any issue.

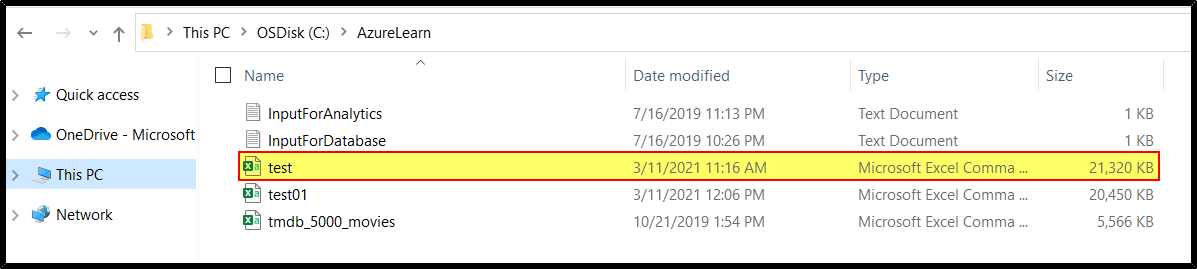

Checkout the file saved in local filesystem path:

You may checkout the SO thread which addressing similar issue.

Unfortunately, you cannot pass local directory path in the Azure Automation runbook as

$DataSet.Tables[0] | out-file "C:\File\test.csv".

Reason: If you need to create temporary files as part of your runbook logic, you can use the Temp folder (that is, $env:TEMP) in the Azure sandbox for runbooks running in Azure. The only limitation is you cannot use more than 1 GB of disk space, which is the quota for each sandbox. When working with PowerShell workflows, this scenario can cause a problem because PowerShell workflows use checkpoints and the script could be retried in a different sandbox.

The best option for a location to create temporary files is under the $env:TEMP location. Note that any data is lost as soon as the runbook execution has completed however it makes a good storage location for temporary files that are hopefully stored longterm in something like Azure Storage and the temporary location is to download the data for access.

$SQLServer = "cheprasynapse.sql.azuresynapse.net"

$SQLDBName = "chepra"

$uid ="XXXXXXXXXX"

$pwd = "XXXXXXXXXXXXX"

$SqlQuery = "SELECT * from Trip"

$SqlConnection = New-Object System.Data.SqlClient.SqlConnection

$SqlConnection.ConnectionString = "Server = $SQLServer; Database = $SQLDBName; Integrated Security = false; User ID = $uid; Password = $pwd;"

$SqlCmd = New-Object System.Data.SqlClient.SqlCommand

$SqlCmd.CommandText = $SqlQuery

$SqlCmd.Connection = $SqlConnection

$SqlAdapter = New-Object System.Data.SqlClient.SqlDataAdapter

$SqlAdapter.SelectCommand = $SqlCmd

$DataSet = New-Object System.Data.DataSet

$SqlAdapter.Fill($DataSet)

$DataSet.Tables[0] | out-file ("$Env:temp"+"\test.csv")

The easiest option is to continue writing the file as you are now, then after the file is written have your Powershell code upload it to Blob storage using Set-AzureStorageBlobContent. See https://savilltech.com/2018/03/25/writing-to-files-with-azure-automation/ for an example.

For more details, refer Azure Automation, RunBooks and T-SQL Query output directly to Azure Blob Storage

Hope this helps. Do let us know if you any further queries.

------------

Please don’t forget to Accept Answer and Up-Vote wherever the information provided helps you, this can be beneficial to other community members.