Hi, I'm trying to copy a Databricks Delta lake table to Synapse, using the Azure Data Factory Copy Activity with PolyBase.

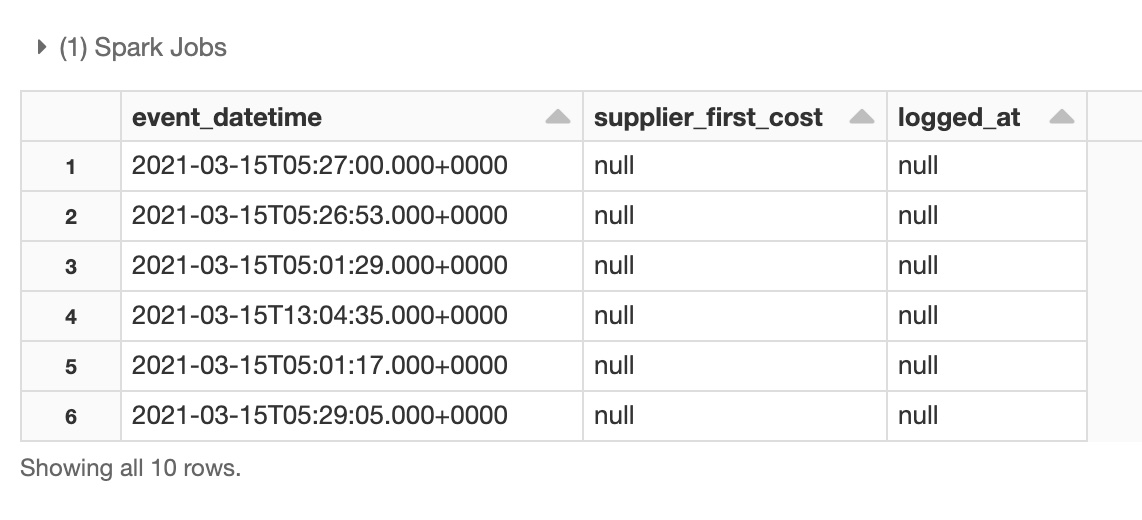

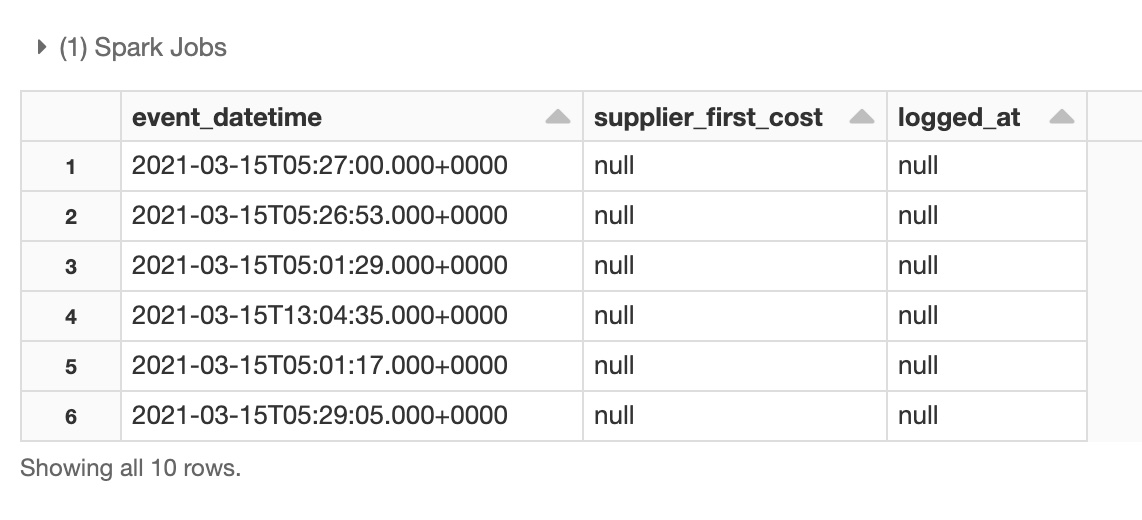

The Delta lake table has two columns which are all nulls. Here are the sample data in the Delta lake table:

supplier_first_cost is defined as Double, logged_at as Timestamp.

But I got the conversion error when running Copy Activity with Poly in Azure Data Factory for these two null columns:

ErrorCode=UserErrorInvalidDataValue,'Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=,Source=,''Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=Column 'supplier_first_cost' contains an invalid value ''. Cannot convert '' to type 'Double'.,Source=Microsoft.DataTransfer.Common,''Type=System.FormatException,Message=Input string was not in a correct format.,Source=mscorlib,''Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=Column 'supplier_first_cost' contains an invalid value ''. Cannot convert '' to type 'Double'.,Source=Microsoft.DataTransfer.Common,''Type=System.FormatException,Message=Input string was not in a correct format.,Source=mscorlib,'

and

ErrorCode=UserErrorInvalidDataValue,'Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=Column 'logged_at' contains an invalid value ''. Cannot convert '' to type 'DateTime'.,Source=Microsoft.DataTransfer.Common,''Type=System.FormatException,Message=String was not recognized as a valid DateTime.,Source=mscorlib,'

I can use Databricks fillna to replace null to 0 in supplier_first_cost, 1970-01-01 00:00:00 in logged_at for the Delta lake table, so the Copy Activity works without exceptions.

But is there a general solution to handle null in this PolyBase Copy Activity? For example, if I have hundreds of tables, each of them may have various data types for different columns, to replace all the null value for every column is not an efficient way for me.