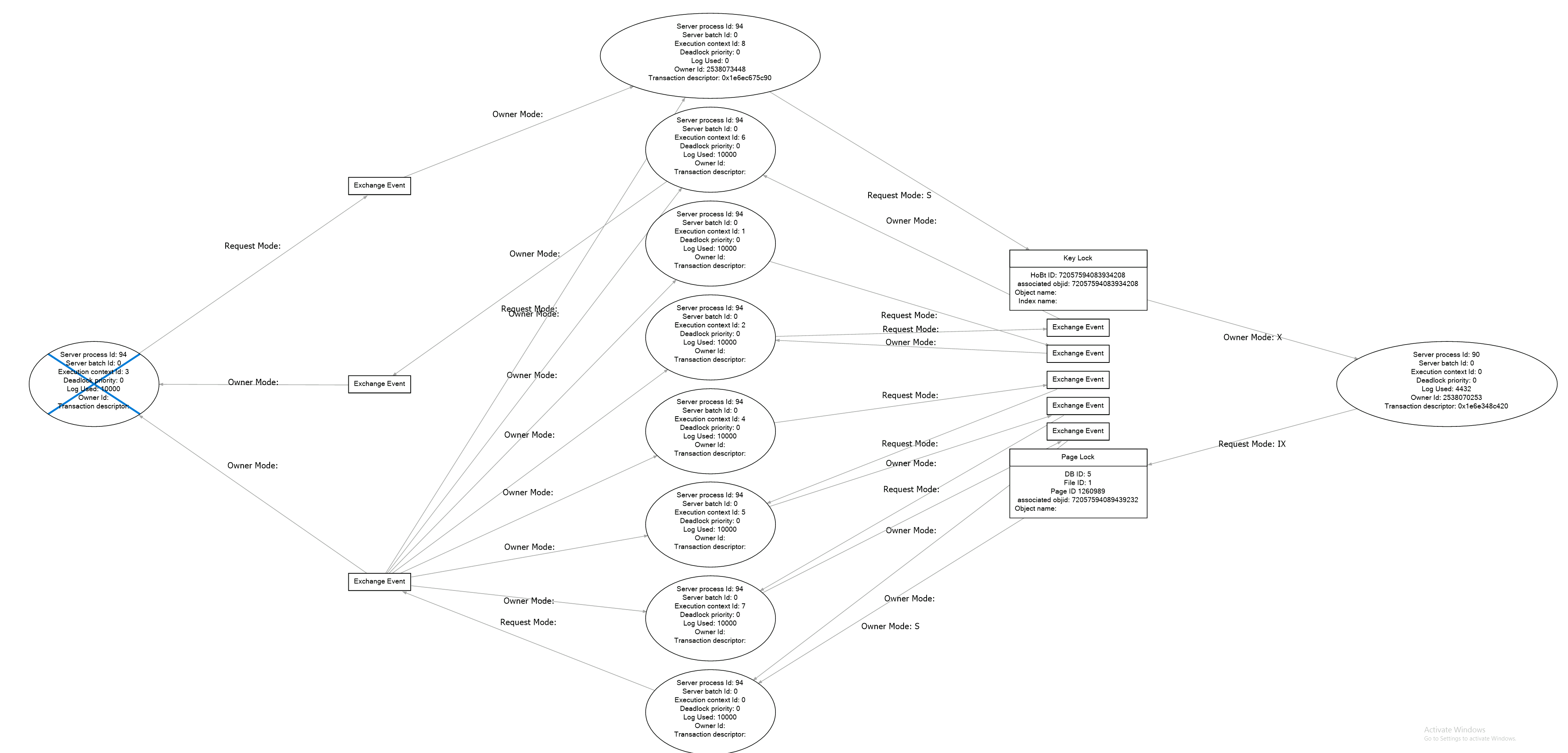

If we overlook the parallelism aspect of it, the pattern of the deadlock does not seem too unfamiliar. You have a reader that reads an entire table or a view. You have a writer that performs a multi-statement transaction. I can tell that the transaction spans more than one batch, because the current batch started later than the current transaction did.
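If you want to check this yourself, each process element in the deadlock XML carries the attributes lasttranstarted and lastbatchstarted. The query below is a sketch that assumes the deadlock was captured by the default system_health Extended Events session and that its .xel files are still in the default log directory; adjust the file pattern if that is not the case.

```sql
-- Sketch: list transaction and batch start times for each process in
-- deadlocks captured by the system_health session (assumed location).
WITH deadlocks AS (
   SELECT CAST(event_data AS xml) AS x
   FROM   sys.fn_xe_file_target_read_file('system_health*.xel', NULL, NULL, NULL)
   WHERE  object_name = 'xml_deadlock_report'
)
SELECT p.value('@id',               'nvarchar(50)') AS process_id,
       p.value('@lasttranstarted',  'datetime2(3)') AS lasttranstarted,
       p.value('@lastbatchstarted', 'datetime2(3)') AS lastbatchstarted
FROM   deadlocks
CROSS  APPLY x.nodes('/event/data/value/deadlock/process-list/process') AS t(p);
```

A process where lastbatchstarted is later than lasttranstarted is running a transaction that was started in an earlier batch.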

The reader gets stuck on a row that the writer has updated but not yet committed. Eventually, the writer gets blocked by the reader, because the reader has not yet released its lock. In this case, that may be because the reader runs with multiple threads: the thread that holds the lock the writer is waiting for is itself waiting for the thread that is blocked by the writer to complete.

There are two things to look into. First, why is the writer running a transaction that spans multiple calls to SQL Server? That is not necessarily wrong, but I note that the statement updates a single row. Is the writer running a loop to update rows one by one when it should update all of them at once in a set-based operation? This would not remove the risk of deadlock entirely, but it would considerably reduce the window in which the deadlock can occur.
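To illustrate the difference, here is a minimal sketch using a hypothetical temp table #Orders, which is not from the question. In the question, the loop is presumably driven from the client with one call per row, which widens the window further because of the round-trips; the T-SQL loop below only stands in for that pattern.

```sql
-- Hypothetical demo table; not from the original question.
CREATE TABLE #Orders (
   OrderID int         NOT NULL PRIMARY KEY,
   Status  varchar(10) NOT NULL
);
INSERT #Orders (OrderID, Status)
   VALUES (1, 'New'), (2, 'New'), (3, 'Shipped');

-- Row-by-row: the transaction stays open across all iterations, and the
-- exclusive lock on each updated row is held until COMMIT.
BEGIN TRANSACTION;
DECLARE @id int;
SELECT @id = MIN(OrderID) FROM #Orders WHERE Status = 'New';
WHILE @id IS NOT NULL
BEGIN
   UPDATE #Orders SET Status = 'Processed' WHERE OrderID = @id;
   SELECT @id = MIN(OrderID) FROM #Orders WHERE Status = 'New';
END;
COMMIT TRANSACTION;

-- Reset the demo data so that the set-based version has rows to update.
UPDATE #Orders SET Status = 'New' WHERE Status = 'Processed';

-- Set-based: one statement, so the transaction lasts only as long as
-- this single UPDATE.
UPDATE #Orders SET Status = 'Processed' WHERE Status = 'New';
```

Both versions end with the same data, but the set-based one holds its locks for a much shorter time.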

The other thing you should consider is to set the database to READ_COMMITTED_SNAPSHOT. With this setting, the READ COMMITTED isolation level is implemented by reading from a version store in tempdb. In this mode, readers and writers do not block each other. The reader gets the result that was committed when the query started. This is often good enough, but there are also situations where it is not acceptable, so you need to understand the consequences. The fix as such is simple:

ALTER DATABASE db SET SINGLE_USER WITH ROLLBACK IMMEDIATE

ALTER DATABASE db SET READ_COMMITTED_SNAPSHOT ON

ALTER DATABASE db SET MULTI_USER

Obviously, you need to find a maintenance window to run this.
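To verify afterwards that the setting has taken effect, you can query sys.databases. In this sketch, db stands in for your database name, as in the commands above.

```sql
-- is_read_committed_snapshot_on is 1 when READ_COMMITTED_SNAPSHOT is ON.
-- Replace 'db' with the name of your database.
SELECT name, is_read_committed_snapshot_on
FROM   sys.databases
WHERE  name = 'db';
```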

Finally, I should mention that I have seen unresolved deadlocks involving parallelism. But in the case I investigated, things were even worse, because the deadlock was not even detected.