Hi @Patrick Doerig,

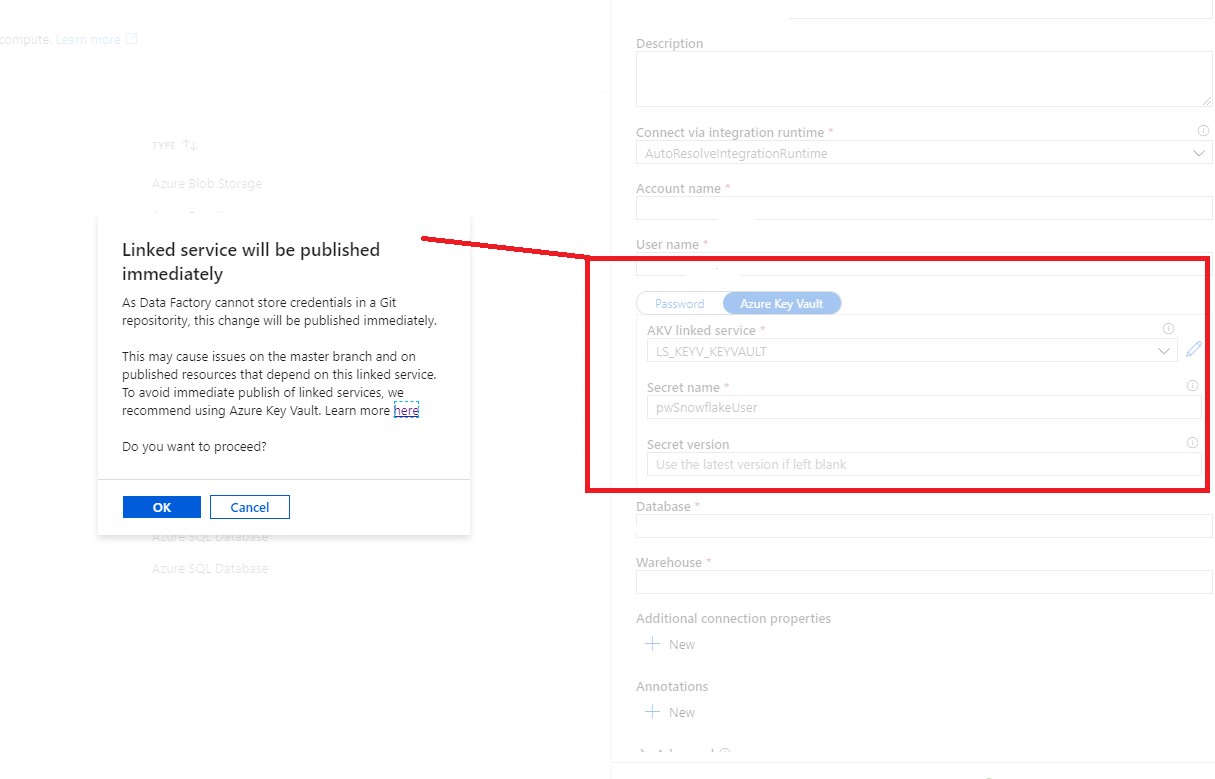

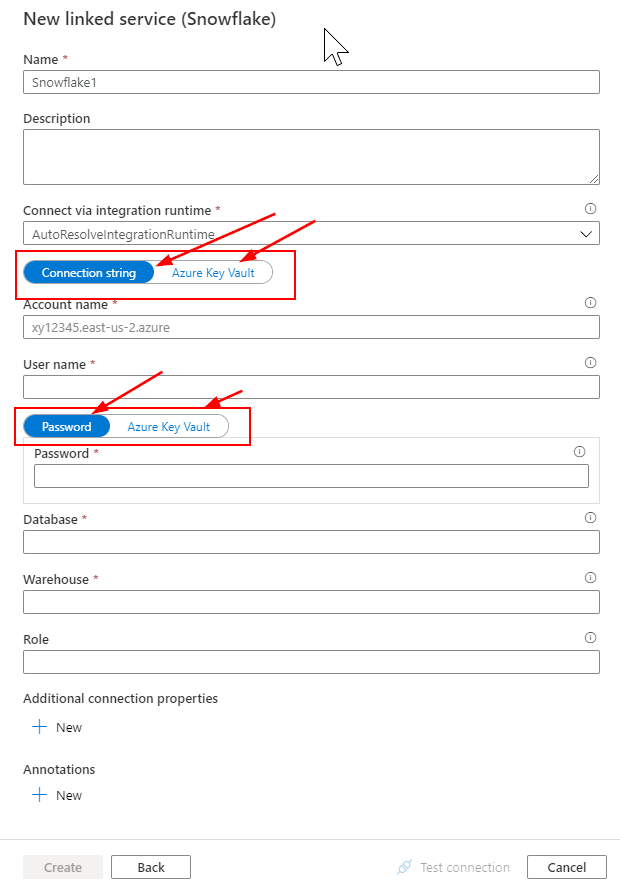

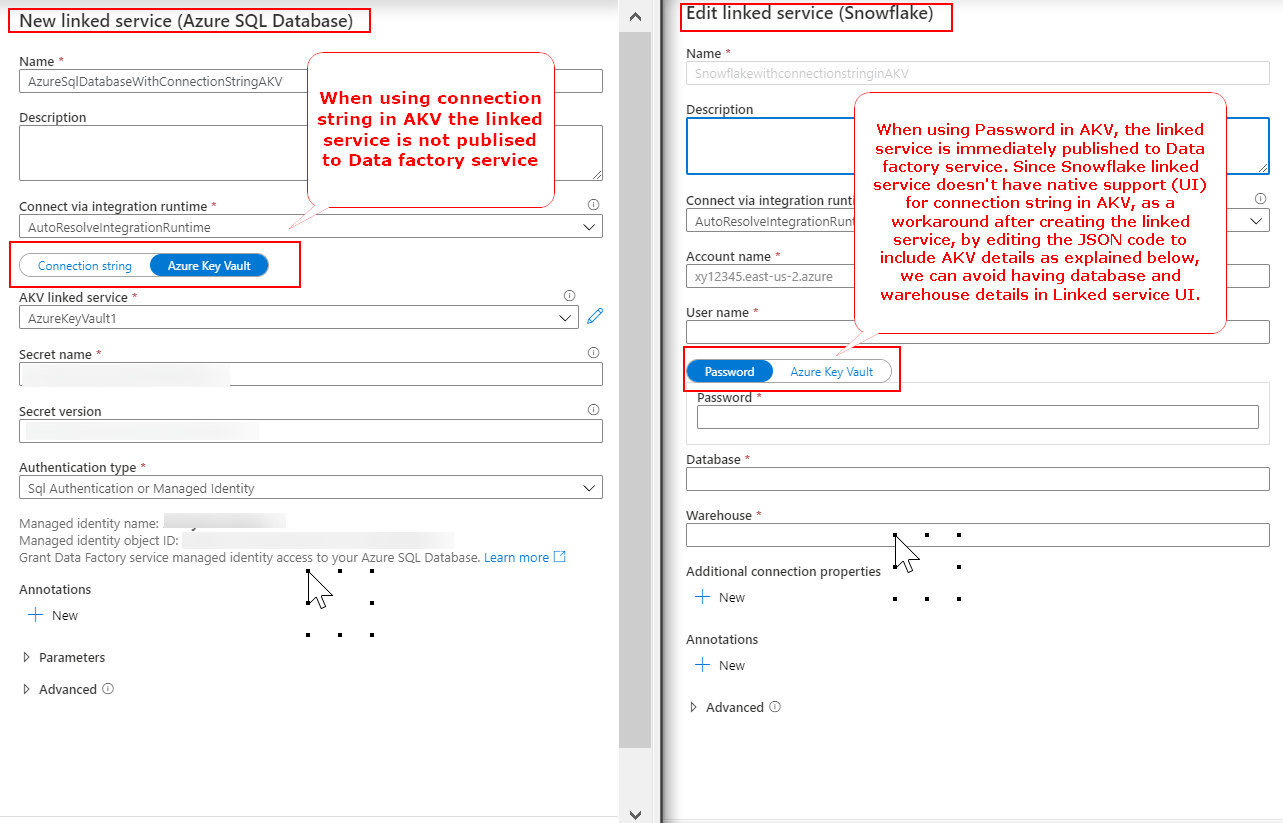

Apologies for the delayed response. After further analysis, we determined that when only the password is stored in Azure Key Vault (AKV), as with the Azure SQL linked service, Snowflake linked service, etc., the warning appears and the linked service is published to the Data Factory service immediately.

However, when the full connection string is stored in AKV and referenced from the linked service UI (supported for the Azure SQL linked service and others, but not currently in the Snowflake UI), the warning does not appear and the linked service is not published immediately. Please see the attached screenshots for further clarification.

To avoid entering the database and warehouse values in the linked service UI, you can, as a workaround, store the complete connection string (including account name, username, password, database, and warehouse) as a secret in Azure Key Vault. Sample connection string format: `jdbc:snowflake://<accountname>.snowflakecomputing.com/?user=<username>&password=<password>&db=<database>&warehouse=<warehouse>`
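
As a minimal sketch, the connection string in the format above could be assembled like this before you save it as a Key Vault secret. The function name and the sample values are hypothetical. Percent-encoding the values is an assumption on my side (it is the usual way to keep characters such as `&` or `@` in a password from breaking a URL query string), and I have not verified how the connector handles encoded values, so please confirm with a test secret first.

```python
from urllib.parse import quote


def build_snowflake_connection_string(account, user, password, database, warehouse):
    """Assemble a connection string in the sample format shown above.

    Hypothetical helper for illustration only. Values are percent-encoded
    (an assumption, see the note above) so that reserved characters in a
    password do not split the query string.
    """
    params = {
        "user": user,
        "password": password,
        "db": database,
        "warehouse": warehouse,
    }
    # Encode every value fully; safe="" means even "/" is escaped.
    query = "&".join(f"{key}={quote(value, safe='')}" for key, value in params.items())
    return f"jdbc:snowflake://{account}.snowflakecomputing.com/?{query}"
```

For example, `build_snowflake_connection_string("myaccount", "etl_user", "p@ss&word", "SALES", "LOAD_WH")` keeps the `&` inside the password from being read as a parameter separator. The resulting string is what you would store as the secret value.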

Then create the Snowflake linked service from the JSON definition below using PowerShell, as described in this document: Create an ADF linked service using PowerShell. Alternatively, create a dummy Snowflake linked service from the UI and then replace its JSON code with the JSON below so that it uses the connection string from AKV. I have tested this and it works as expected.

    {
        "name": "<YourSnowflakeLinkedServiceName>",
        "type": "Microsoft.DataFactory/factories/linkedservices",
        "properties": {
            "annotations": [],
            "type": "Snowflake",
            "typeProperties": {
                "connectionString": {
                    "type": "AzureKeyVaultSecret",
                    "store": {
                        "referenceName": "<YourAzureKeyVaultLinkedServiceName>",
                        "type": "LinkedServiceReference"
                    },
                    "secretName": "<YourSnowflakeConnectionstringSecretName>",
                    "secretVersion": "<YourSnowflakeConnectionstringSecretVersion>"
                }
            }
        }
    }
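
If you prefer to generate the definition file rather than edit it by hand, the following is a rough sketch that produces the same JSON structure as above. The function names and the file path are hypothetical. The sketch also assumes that `secretVersion` can be left out so that the latest version of the secret is used; include it if you want to pin a specific version.

```python
import json


def build_linked_service(name, key_vault_linked_service, secret_name, secret_version=None):
    """Return the Snowflake linked service definition shown above as a dict.

    Hypothetical helper for illustration; the keys mirror the JSON in this post.
    """
    connection_string = {
        "type": "AzureKeyVaultSecret",
        "store": {
            "referenceName": key_vault_linked_service,
            "type": "LinkedServiceReference",
        },
        "secretName": secret_name,
    }
    # Assumption: omitting secretVersion resolves to the latest secret version.
    if secret_version:
        connection_string["secretVersion"] = secret_version

    return {
        "name": name,
        "type": "Microsoft.DataFactory/factories/linkedservices",
        "properties": {
            "annotations": [],
            "type": "Snowflake",
            "typeProperties": {"connectionString": connection_string},
        },
    }


def write_definition(definition, path):
    """Write the definition to a JSON file that can be passed to PowerShell."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(definition, handle, indent=4)
```

The file written by `write_definition` can then be supplied to the `Set-AzDataFactoryV2LinkedService` cmdlet through its `-DefinitionFile` parameter, together with your own resource group, data factory, and linked service names.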

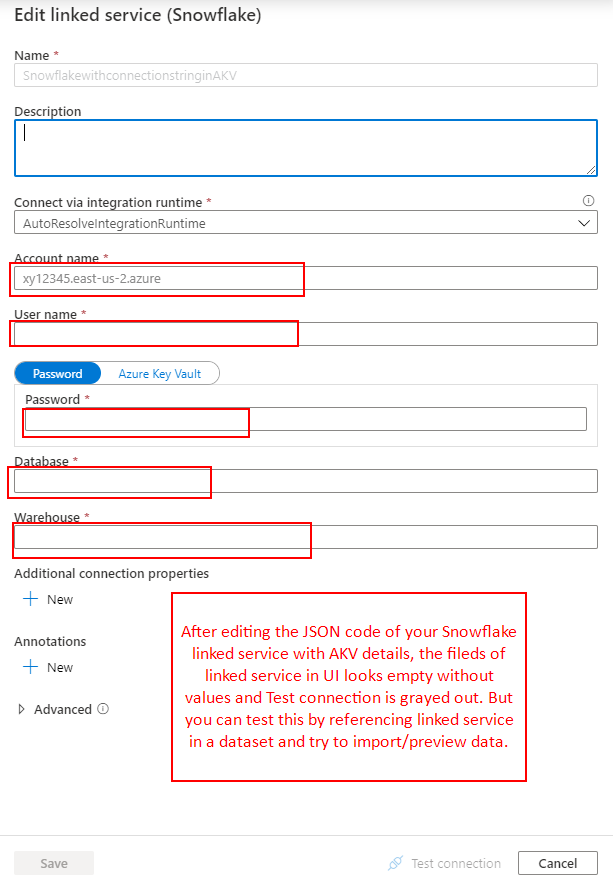

One thing you will notice is that the linked service properties are not populated in the UI when you use a connection string from AKV. The linked service appears with all fields empty, as shown in the screenshot. You also won't be able to test the connection, because that option is grayed out (which is odd). To test the linked service, create a dataset that uses it and then import the schema or preview data. I have tested this and it works.

I agree that this is not an ideal approach, but it is currently the only workaround for using a complete connection string from AKV with a Snowflake linked service. I have submitted feedback to the ADF engineering team requesting native UI support for connection strings stored in AKV, and it is under review.

I would also encourage you to share your feedback in the ADF user-voice forum and post the feedback link here, so that other users can upvote and comment on it to help prioritize the feature request.

Hope this helps. Please let me know if you have any further questions.

----------

Thank you

Please consider clicking "Accept Answer" and "Upvote" on the post that helps you, as it can be beneficial to other community members.