@Akash Bhot Welcome to Microsoft Q&A Forum, Thank you for posting your query here!

For better understanding the issue are you using SQL Server database backup to Azure azure blob storage? Can you also check port 80 and 443 is enabled?

Are you using any proxy or firewall? A proxy may prevent connection or limit the number of connections.

Which version of Sql Server are you using?

SQL Server Managed Backup to Microsoft Azure uses the Backup to Block Blob feature. The maximum size of a block blob is 200 GB. But by utilizing striping, the maximum size of an individual backup can be up to 12 TB. If your backup requirements exceed this, consider using compression, and test the backup file size prior to setting up SQL Server Managed Backup to Microsoft Azure. You can either test by backing up to a local disk or manually backing up to Microsoft Azure storage using BACKUP TO URL Transact-SQL statement. For more information, see SQL Server Backup to URL.

Large SQL Server database backup on an Azure VM and archiving

Based on the error message issue may have occurred due to the credentials(, SQL backup and restore to Azure Blob storage service

Install Azure Storage Explorer and add Read/Write permissions in Shared Access Key

Error message: Msg 3013, Level 16, State 1, Line 1

Troubleshooting method 2: - Create a new SAS on the Storage account level

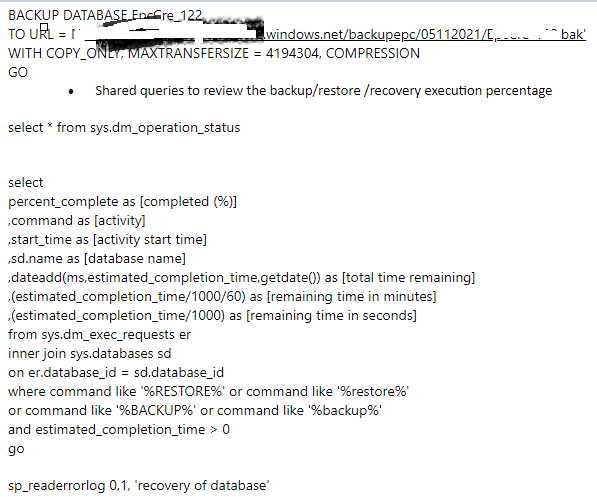

- Backup with compression and maxtransfersize

Additionally, I would like to share with you the checksum, Stats and Stripe options

BACKUP (Transact-SQL) - SQL Server | Microsoft Learn

RESTORE VERIFYONLY (Transact-SQL) - SQL Server | Microsoft Learn

Example

Checksum:CHECKSUM Specifies that the backup operation verifies each page for checksum and torn page, if enabled and available, and generate a checksum for the entire backup.

Using backup checksums may affect workload and backup throughput.

Perform checksum before writing to the media We can ensure a good SQL backup. SQL Server internally calculates the checksum value for each page. This checksum value remains precisely the same for the same data in the page during the recalculation as well. SQL Server writes the CHECKSUM value for each page during the backup once we select this option.

During the database restore process, SQL Server recalculates the CHECKSUM value for each page and matches with the CHECKSUM value written during the backup. If both values are similar, it shows that the database backup is valid.

Stats: With stats, you may see the percentage of the backup/restore

Stripe: A striped backup is just a backup of your database that is spread across multiple files. It's useful when your backup file is too big, striping the file you are going to have the same final size of your backup, but the size will be spread in more files.

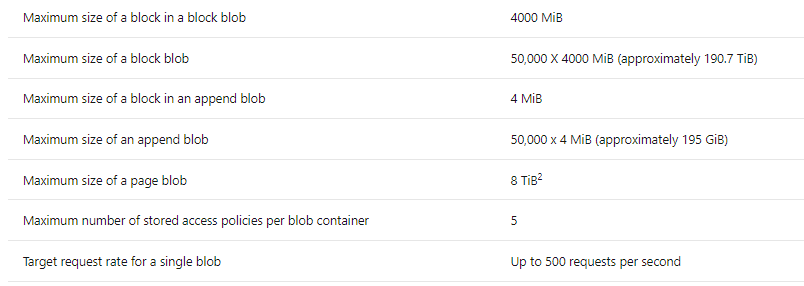

Limitations for SQL Managed Instance: Max backup stripe size is 195 GB (maximum blob size). Increase the number of stripes in the backup command to reduce individual stripe size and stay within this limit.

BACKUP (Transact-SQL) - SQL Server | Microsoft Learn

Additional information: Azcopy does a good job at copying data in Azure. Azcopy command will copy the backup files from the VM to a storage of your choice.

Hope this helps!

Kindly let us know if the above helps or you need further assistance on this issue.

---------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Please do not forget to "Accept the answer” and “up-vote” wherever the information provided helps you, this can be beneficial to other community members.