Hello @Ganesh Pathak and welcome to Microsoft Q&A.

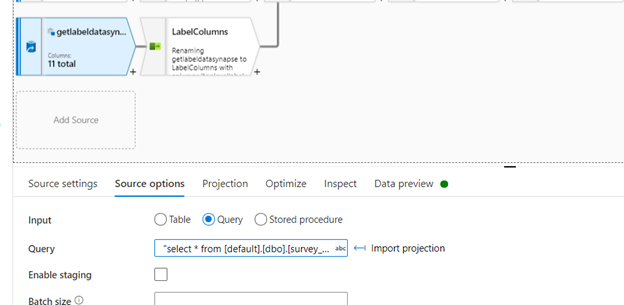

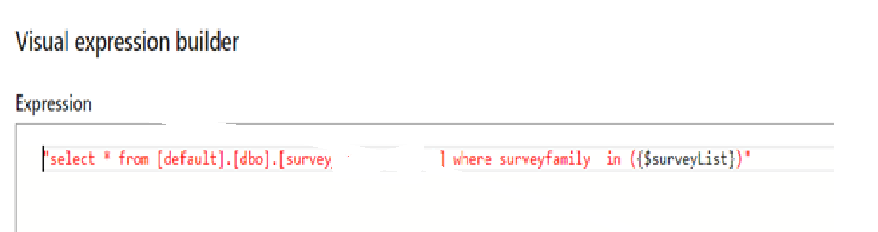

I am not sure why you need a separate data flow for every variable. You can pass an array-type variable to a single data flow instead. See the pictures below.

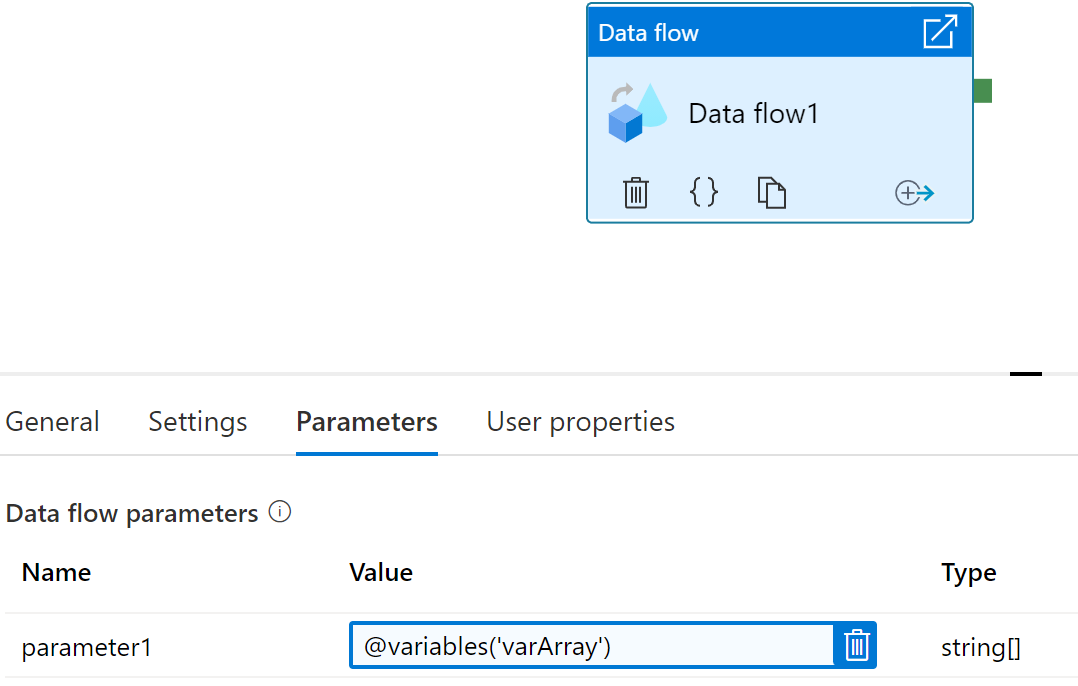

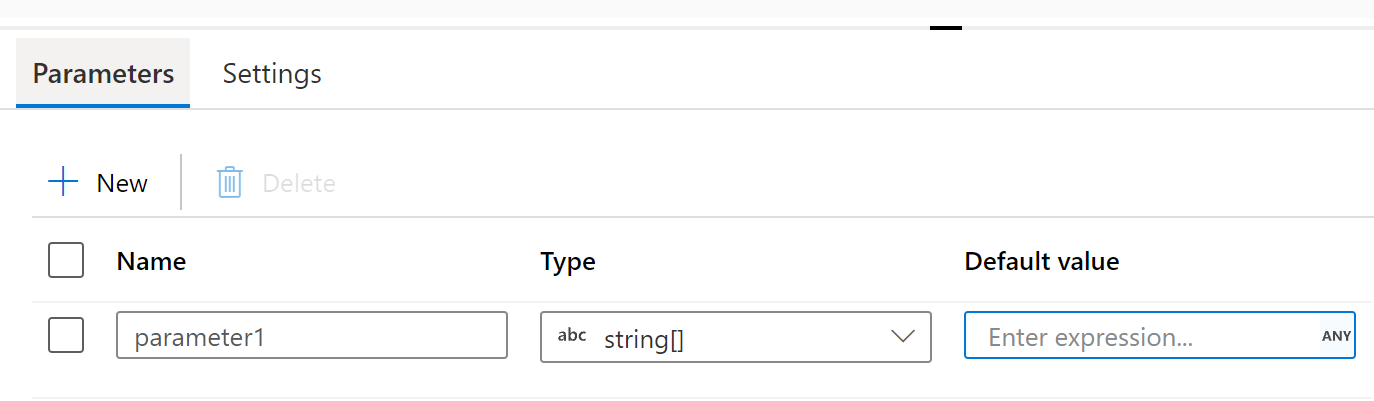

Inside the data flow, declare a parameter of the appropriate array type:

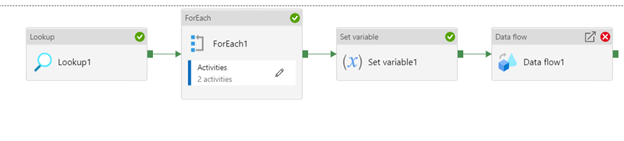

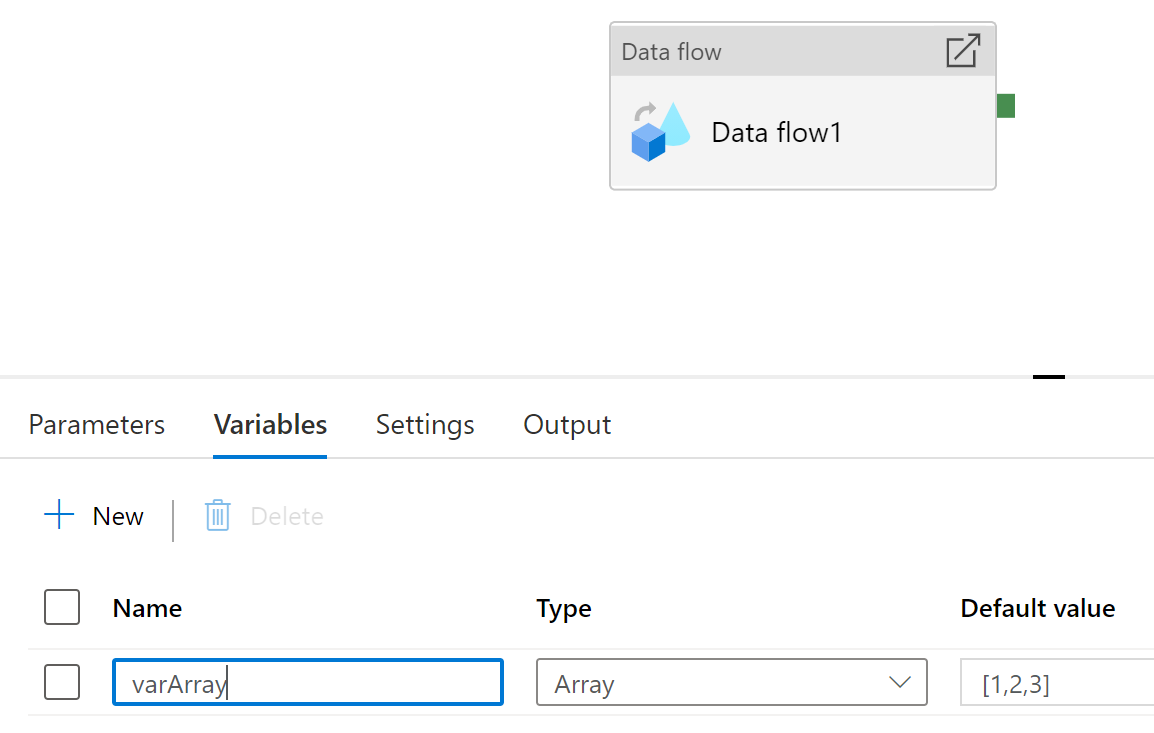

In the pipeline, create an array-type variable:

In the pipeline's Data Flow activity, pass the variable to that data flow parameter:
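If it helps to see the same wiring outside the UI, here is a rough sketch of what the pipeline JSON (Code view) looks like, built as a plain Python dict and printed. The names `PassArrayToDataFlow`, `RunDataFlow`, `ProcessItems`, `itemList` and `myItems` are hypothetical placeholders. The JSON shape reflects my understanding of the `ExecuteDataFlow` activity and may differ slightly from what your factory exports. Compute and integration runtime settings are left out.

```python
import json

# Hypothetical names, replace with your own:
DATA_FLOW_NAME = "ProcessItems"  # the data flow to run
DATA_FLOW_PARAM = "itemList"     # array-type parameter declared inside the data flow
PIPELINE_VAR = "myItems"         # array-type variable declared in the pipeline


def build_pipeline(items):
    """Return a sketch of a pipeline definition (as a dict) that holds one
    Array variable and passes it to a single Data Flow activity."""
    return {
        "name": "PassArrayToDataFlow",
        "properties": {
            "variables": {
                # One Array-type variable instead of one data flow per value
                PIPELINE_VAR: {"type": "Array", "defaultValue": list(items)},
            },
            "activities": [
                {
                    "name": "RunDataFlow",
                    "type": "ExecuteDataFlow",
                    "typeProperties": {
                        "dataflow": {
                            "referenceName": DATA_FLOW_NAME,
                            "type": "DataFlowReference",
                            "parameters": {
                                # Pipeline expression that hands over the whole array
                                DATA_FLOW_PARAM: {
                                    "value": f"@variables('{PIPELINE_VAR}')",
                                    "type": "Expression",
                                }
                            },
                        }
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_pipeline(["a", "b", "c"]), indent=2))
```

The point is that the data flow is referenced once, and the whole array travels through one parameter, so adding values to the variable does not require another data flow.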