These are my web apps:

- https://dn42usw.azurewebsites.net

- https://dn42br.azurewebsites.net

- https://dn42uk.azurewebsites.net/

I get these 3 errors randomly:

- 503 The service is unavailable.

- 503 Service Unavailable.

- 502 Web server received an invalid response while acting as a gateway or proxy server.

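To put numbers on "randomly", each URL can be polled a few times and the status codes tallied. This is only a minimal sketch using Python's standard library: the URLs are the ones listed above, while the function names, round count, and timeout are my own illustrative choices, not anything Azure provides.

```python
import urllib.error
import urllib.request
from collections import Counter

# URLs taken from the question; everything else here is illustrative.
URLS = [
    "https://dn42usw.azurewebsites.net",
    "https://dn42br.azurewebsites.net",
    "https://dn42uk.azurewebsites.net/",
]


def fetch_status(url, timeout=10.0):
    """Return the HTTP status code for url, or None if no response arrived."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as HTTPError; the code is still useful.
        return err.code
    except (urllib.error.URLError, OSError):
        # DNS failure, refused connection, timeout, and similar.
        return None


def tally(statuses):
    """Count how often each status (or None) was seen."""
    return Counter(statuses)


def probe(urls, rounds=3, fetch=fetch_status):
    """Poll every URL `rounds` times and return {url: Counter of statuses}."""
    return {url: tally(fetch(url) for _ in range(rounds)) for url in urls}


if __name__ == "__main__":
    for url, counts in probe(URLS).items():
        print(url, dict(counts))
```

Running it from a machine outside Azure at intervals would show whether the 502/503 responses cluster on one node or hit all of them at the same time.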

Everything seems to be fine in the portal.

The app status is Running, and I can see no obvious reason for the 503s.

I tried restarting the app, but that did not resolve the issue.

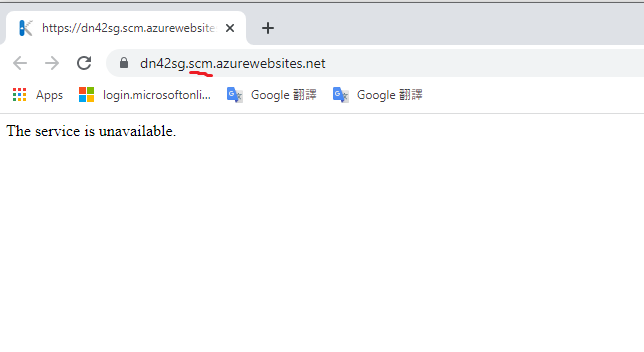

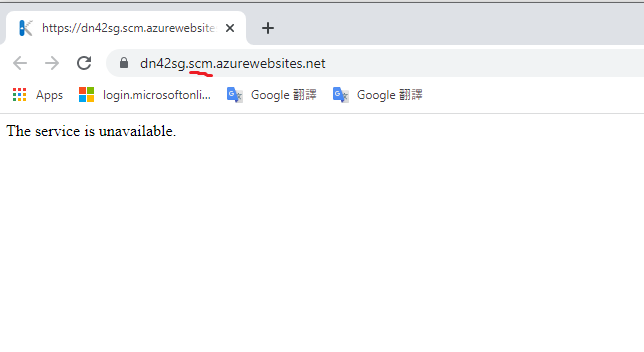

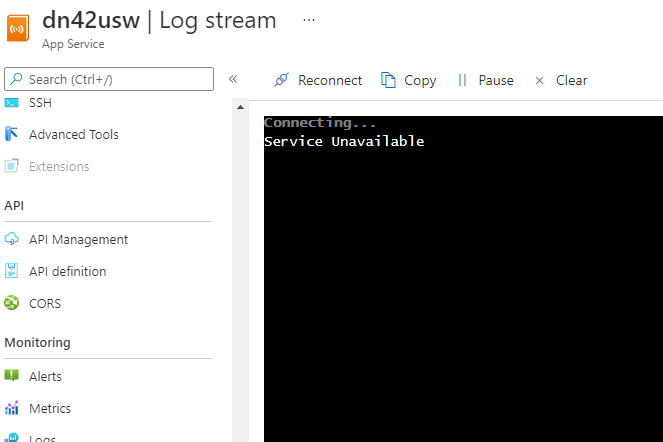

503 on SCM

I also tried to use WebSSH to check whether there is any problem, but I get a 503 there, too.

I guess my app is not running in the background at all.

https://dn42usw.scm.azurewebsites.net/webssh/host --> 503 The service is unavailable.

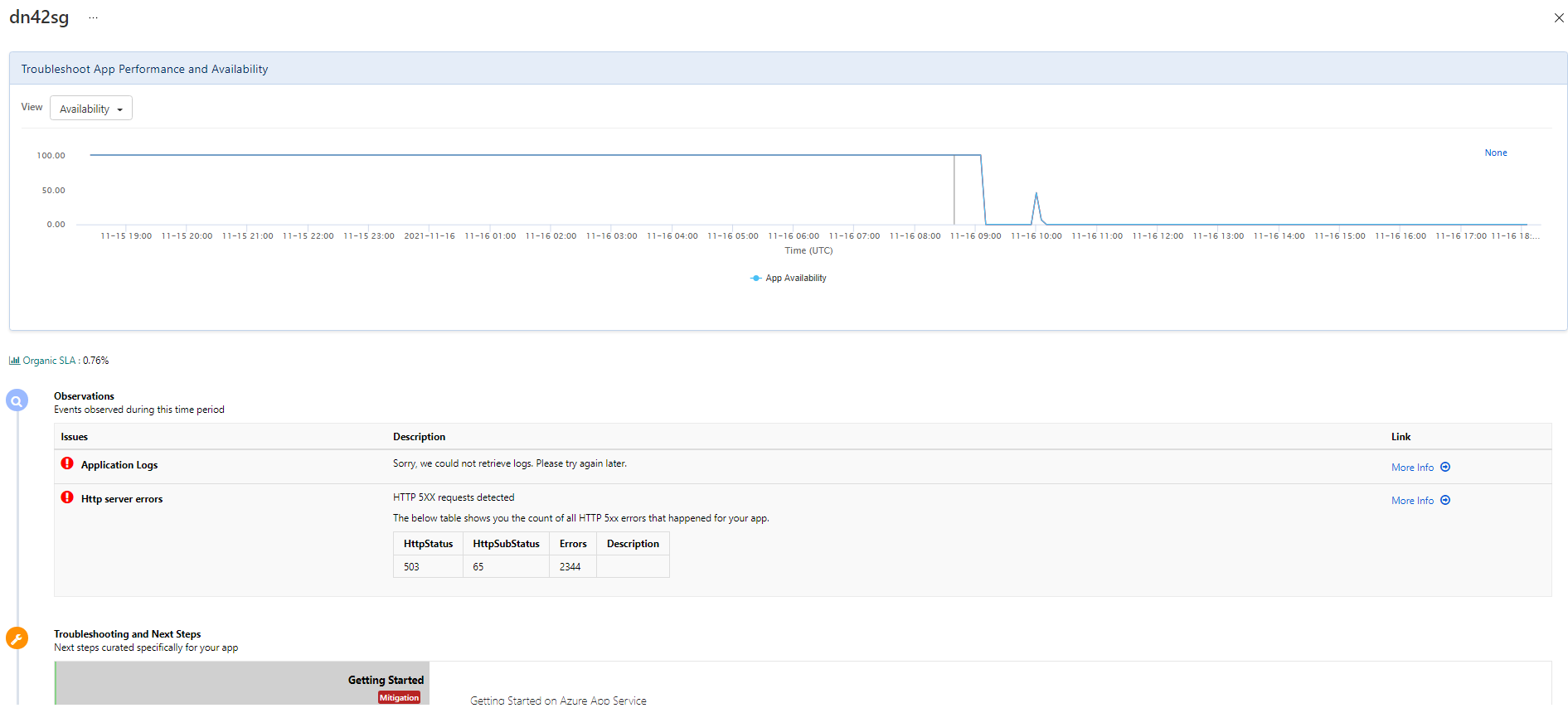

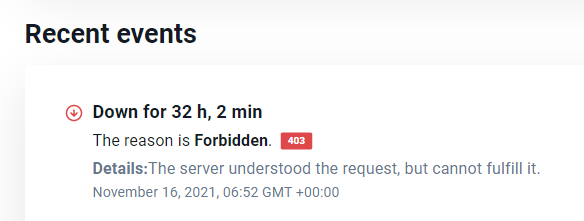

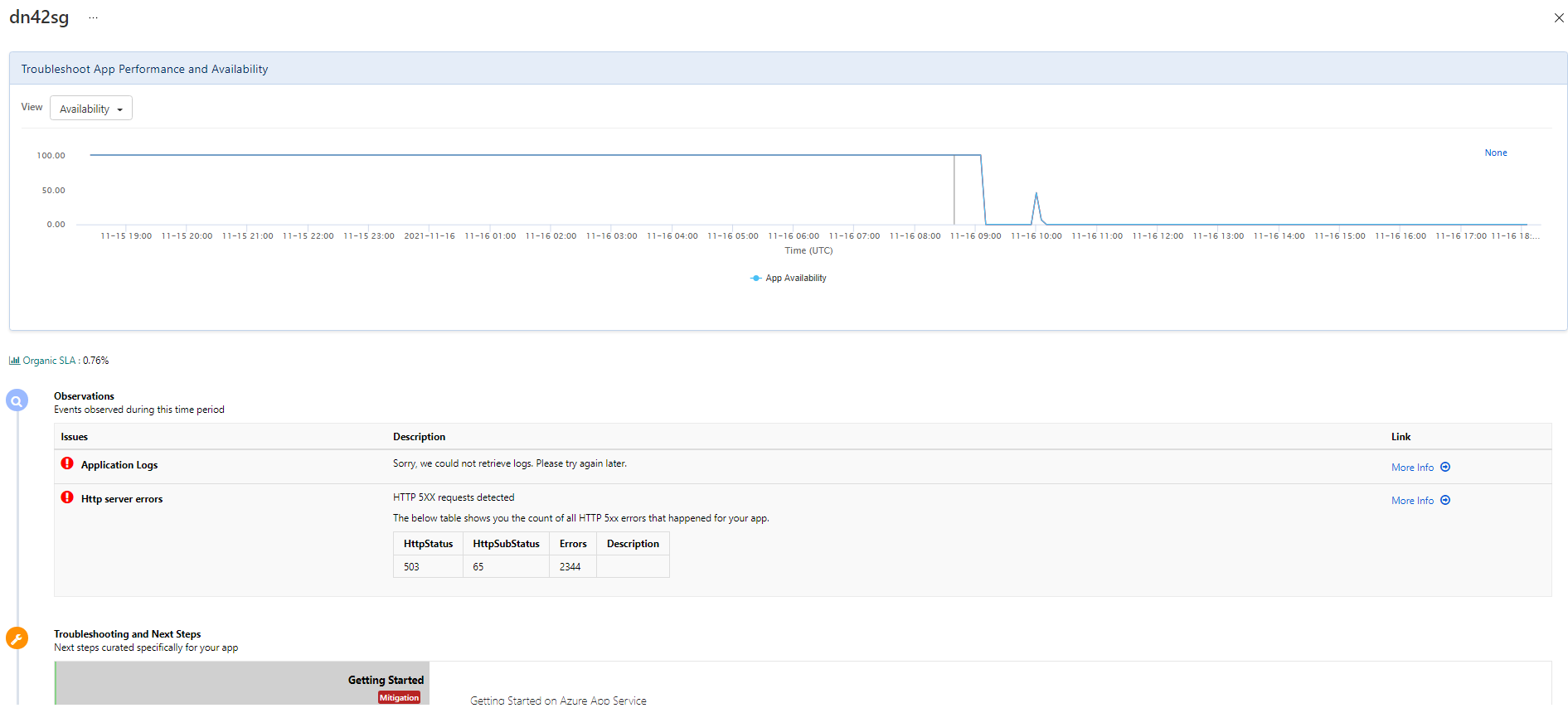

The service just stopped working suddenly:

Some logs

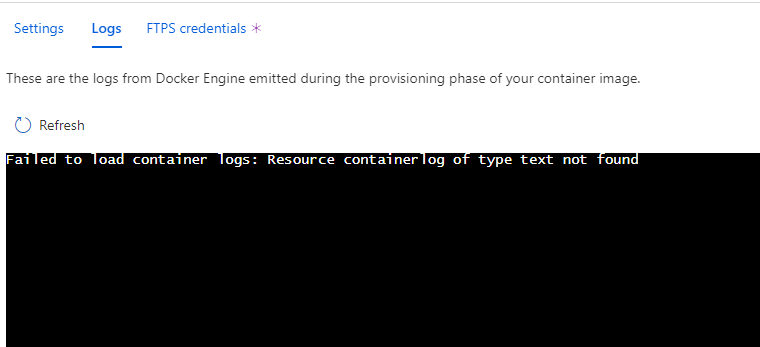

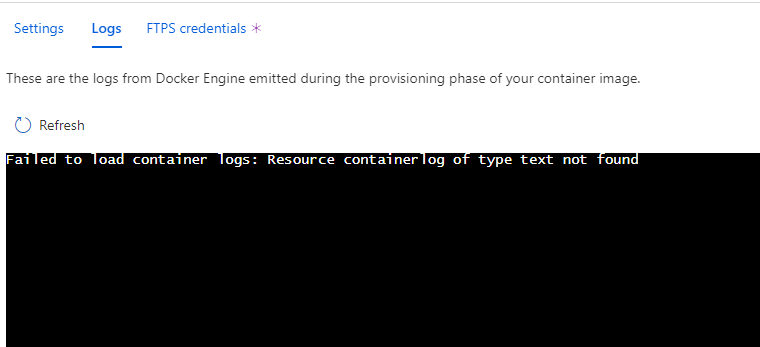

Deployment center:

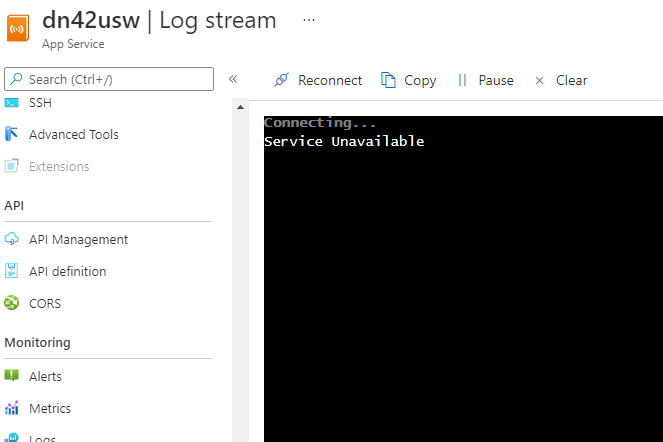

Log stream

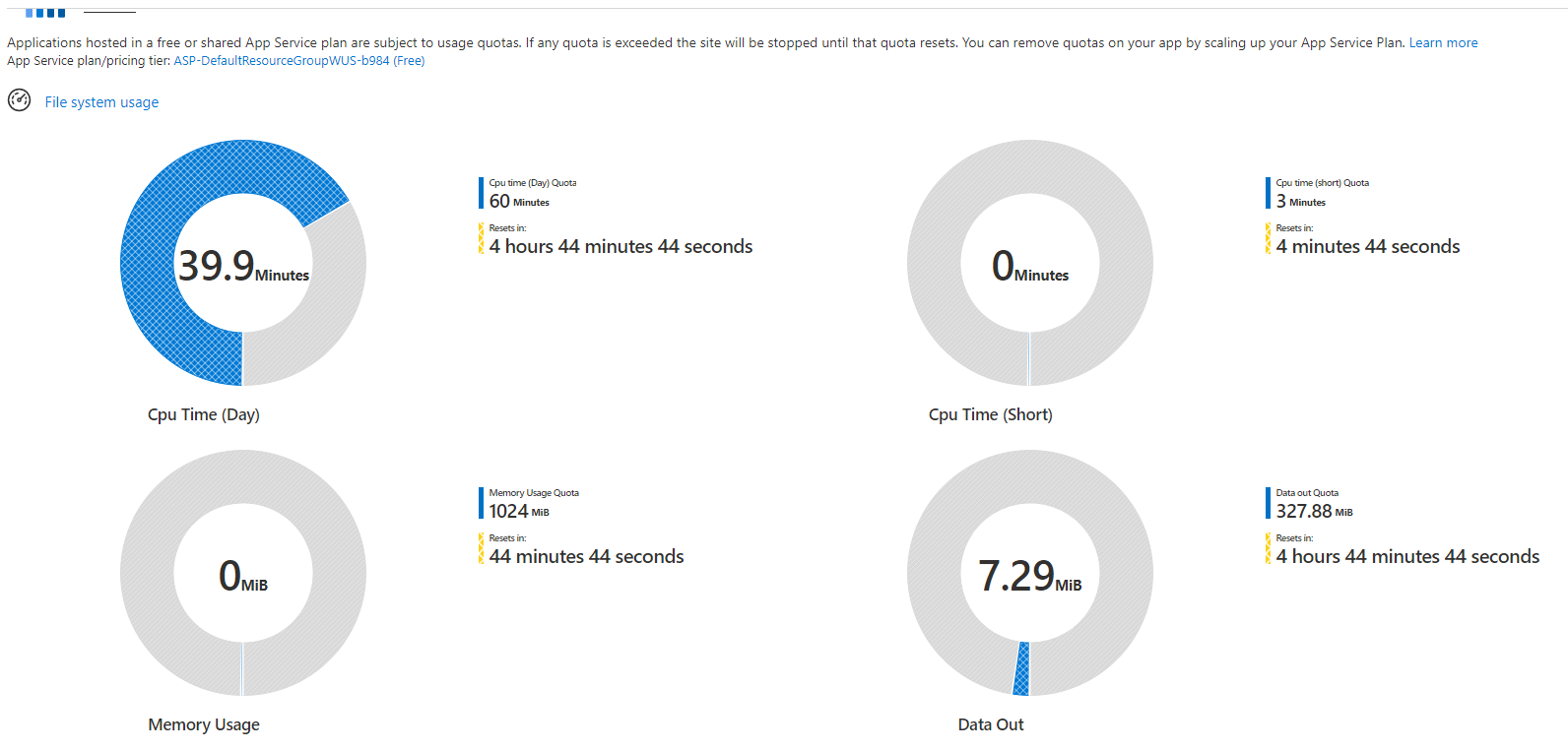

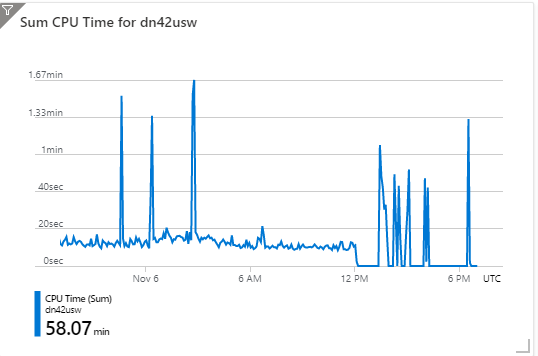

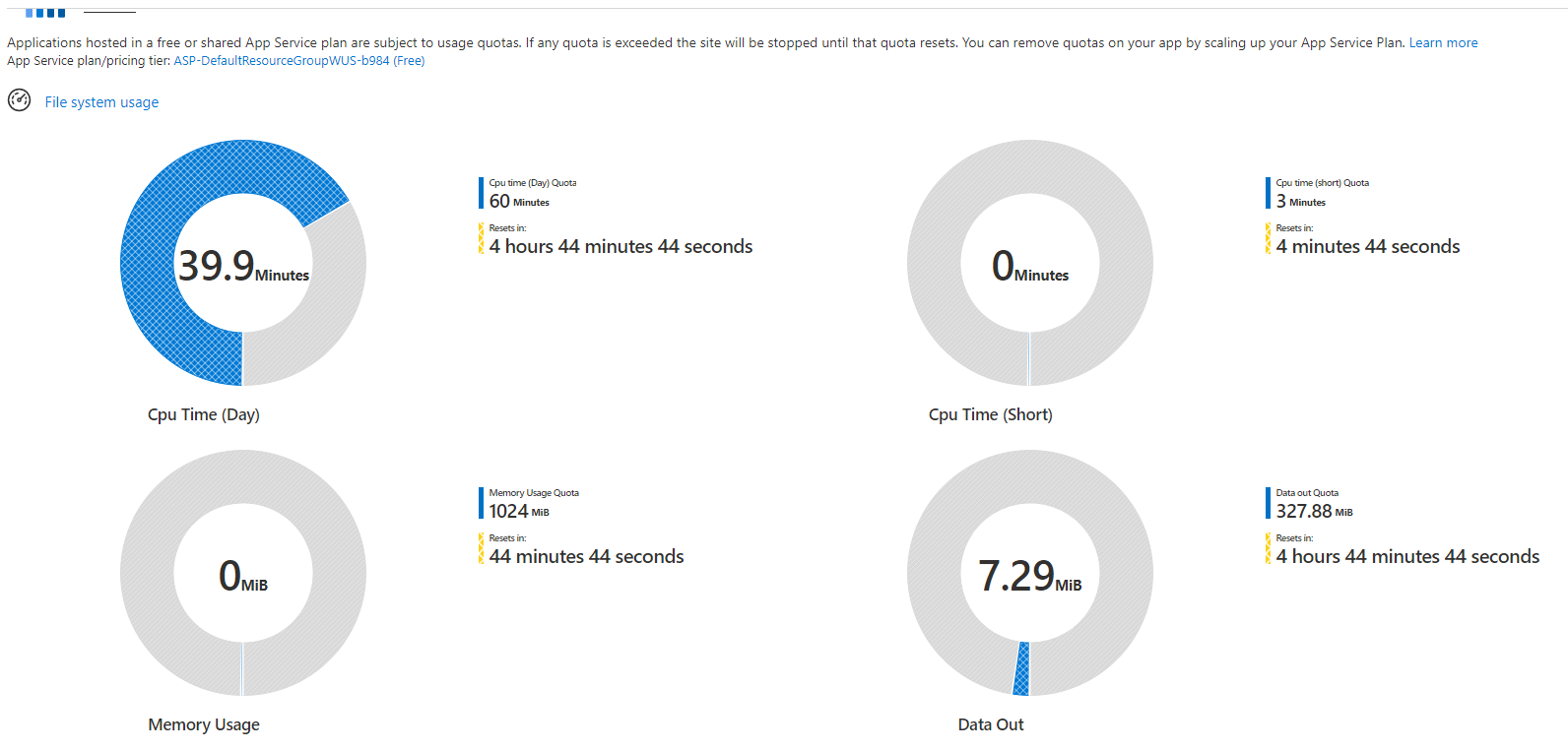

This case indicates that the cause may be exhaustion of the application's resources, but I checked several times and I'm pretty sure no resource exceeds its limit.

Quotas are not exceeded

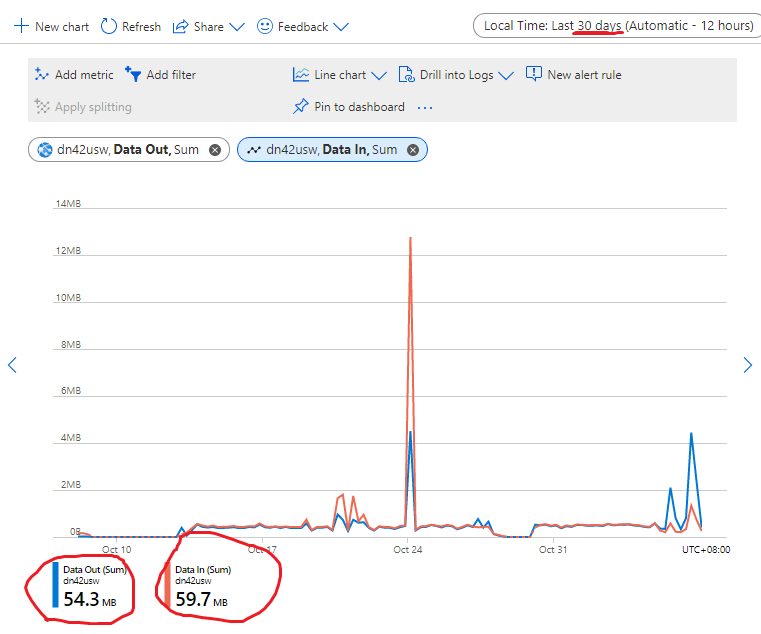

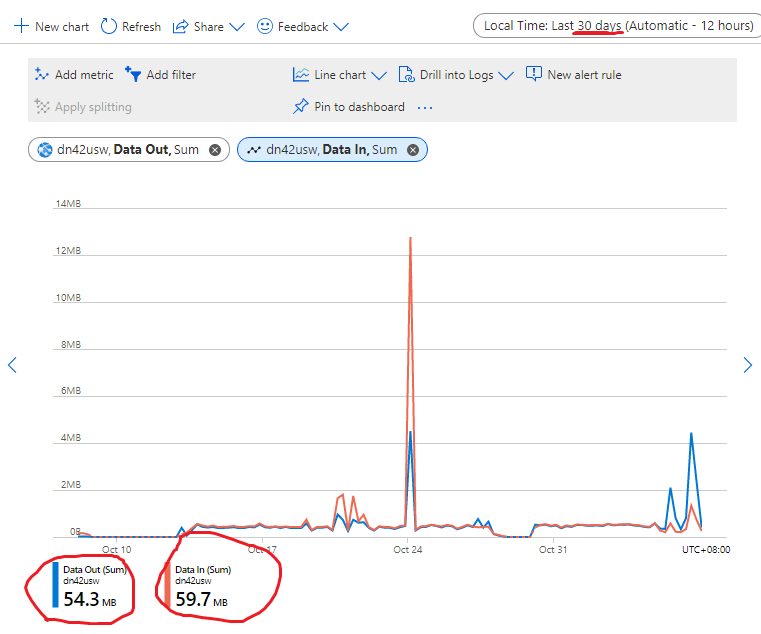

Total traffic during the last month: 60MB.

Shell usage does not exceed the 5GB/month limit.

My question is: why do I get 503 errors on my app?

If the reason is resource exhaustion, where can I find out which resource was exhausted?

====Update====

The web app works now. But may I know why it stopped working during the past 8 hours?

====Update 2====

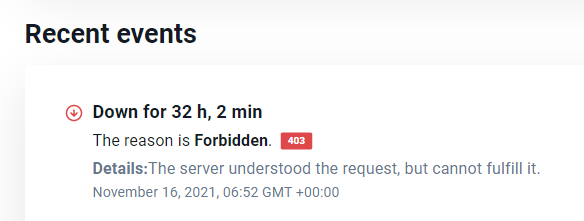

The service goes down again.

====Update 3====

New discovery

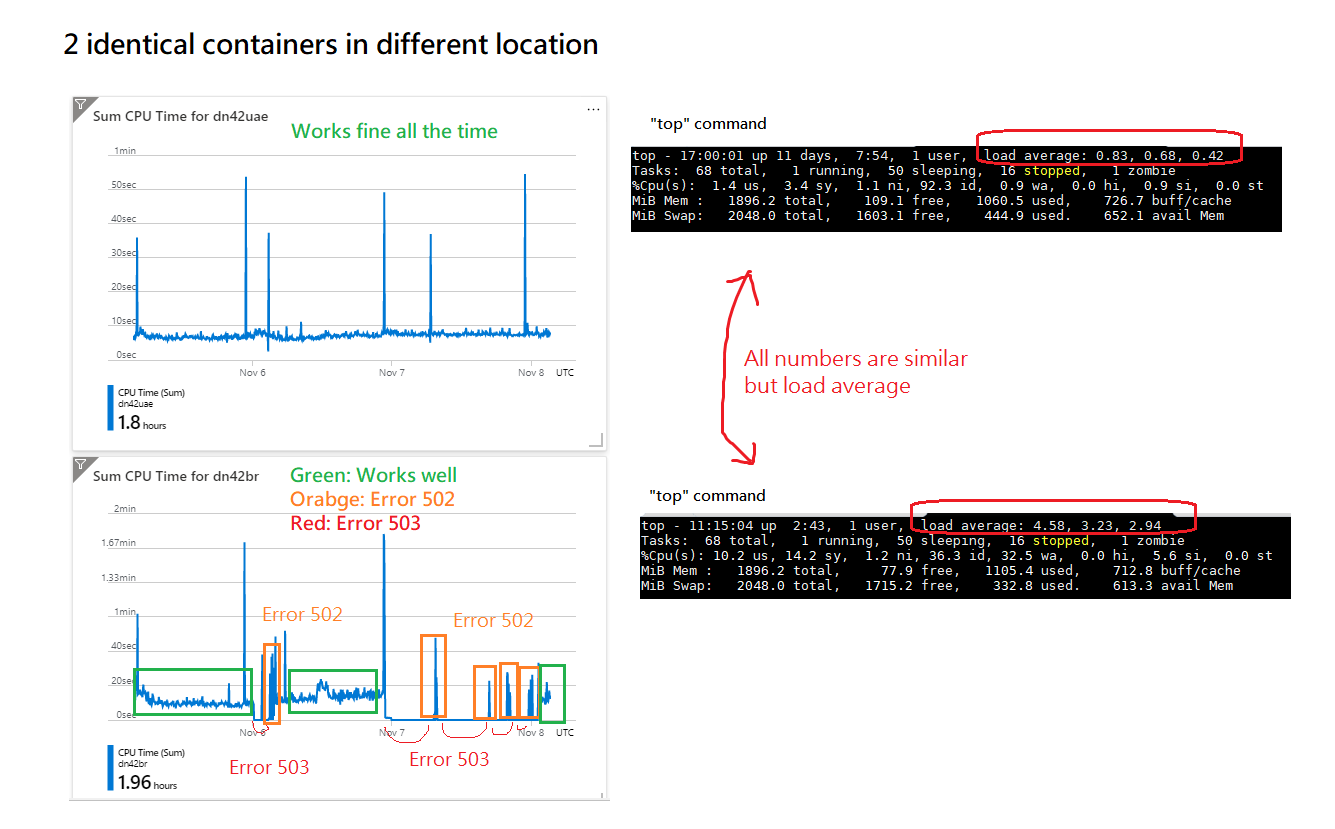

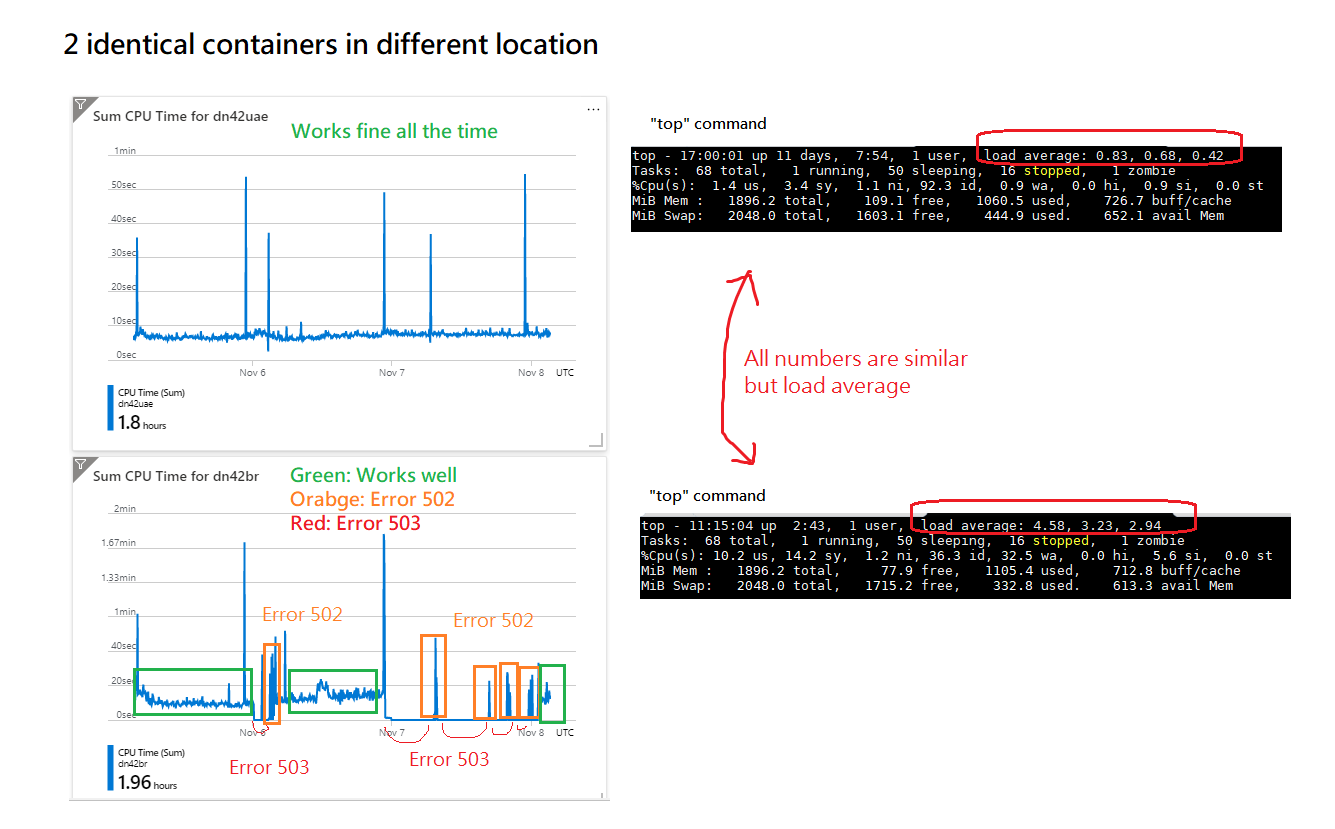

These are 2 identical containers in different locations.

The load average on the Brazil node is very high and very unstable. I get 503 and 502 errors from it very often.

My guess is that the cause is a difference in load between the host machines.

The load on the physical machine in Brazil is very high, which would explain why I see a very high load average there and why the node crashes so often.
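For anyone who wants to compare nodes the same way, here is a small sketch of how the load average can be read and normalised by CPU count from inside the container. `os.getloadavg()` and `os.cpu_count()` are standard Python calls (Unix only); the helper names and the threshold of 1.0 per CPU are my own assumptions, not an Azure rule. Inside a container the figure often reflects the whole host rather than just the container, so treat it as a rough hint only.

```python
import os


def load_per_cpu(load_1min, cpu_count):
    """Normalise a 1-minute load average by the number of CPUs."""
    if cpu_count < 1:
        raise ValueError("cpu_count must be at least 1")
    return load_1min / cpu_count


def is_overloaded(load_1min, cpu_count, threshold=1.0):
    """True when the normalised load exceeds the (assumed) threshold."""
    return load_per_cpu(load_1min, cpu_count) > threshold


if __name__ == "__main__":
    one, five, fifteen = os.getloadavg()  # Unix only
    cpus = os.cpu_count() or 1
    print(f"load averages: {one:.2f} {five:.2f} {fifteen:.2f} on {cpus} CPU(s)")
    print("overloaded" if is_overloaded(one, cpus) else "ok")
```

Running the same script on two nodes at roughly the same time gives a like-for-like comparison instead of eyeballing screenshots.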

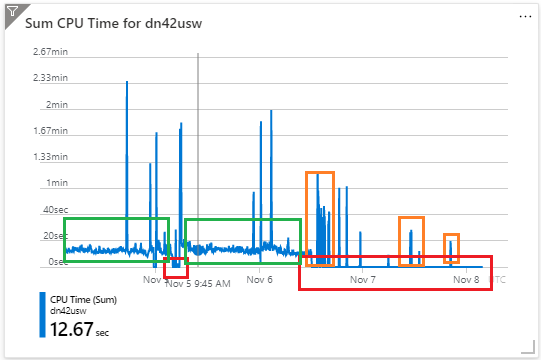

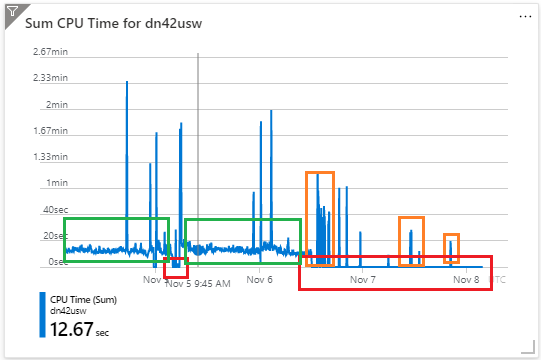

By the way, this is the log of US West:

====Update 4====

Suddenly all the unstable nodes (usw/uk/br) are running again.

I still don't know the true reason for this problem.

====Update 5==== 2021/11/17

Thank you for all your assistance, I really appreciate your help in resolving the problem.

Now I fully understand that the LinuxFree SKU is not stable enough to run a production application.

During our email conversation, you gave me two reasons for my problem:

- It occurred due to a normal movement of your site during routine service maintenance from an existing worker.

- This indicates that your application crashed, unexpectedly finished or didn't expose/listen to the correct TCP port.

But I have doubts about these two reasons ...

- About this node: https://dn42sg.azurewebsites.net/

The error lasts for 10~30 hours, not 10~30 minutes. Does the movement take that long?

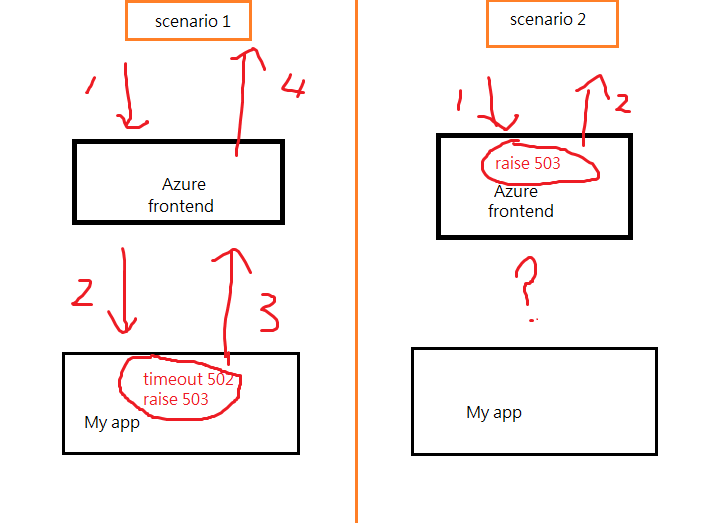

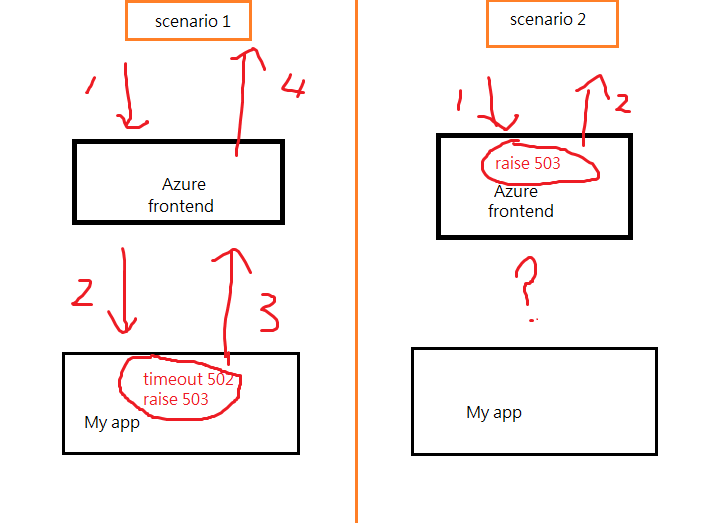

- Reason 2 indicates it's scenario 1, but all the metrics and logs in the Azure portal indicate that the error is scenario 2, not scenario 1:

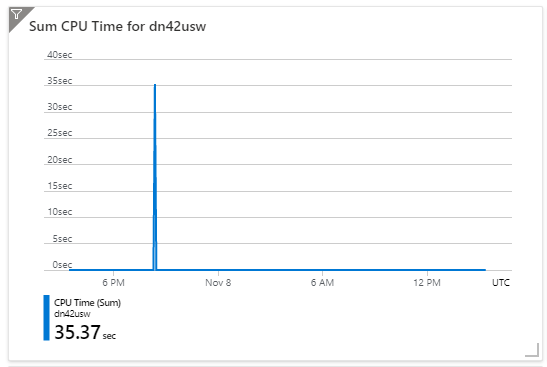

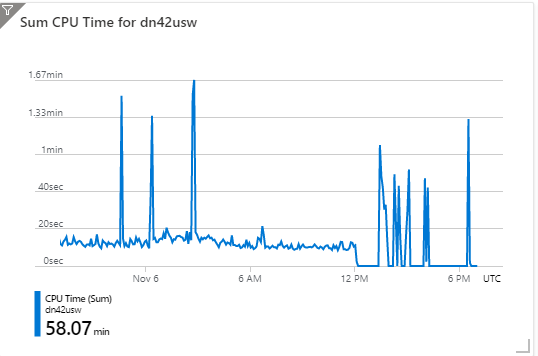

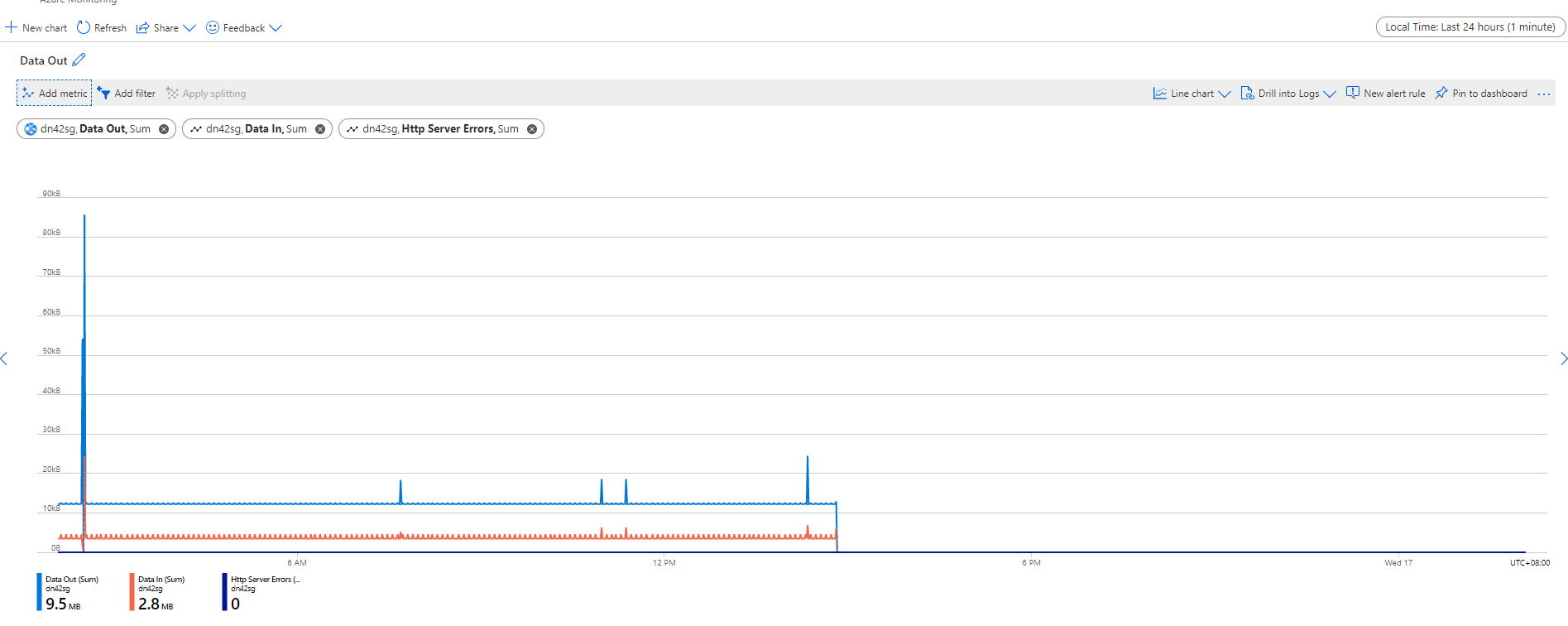

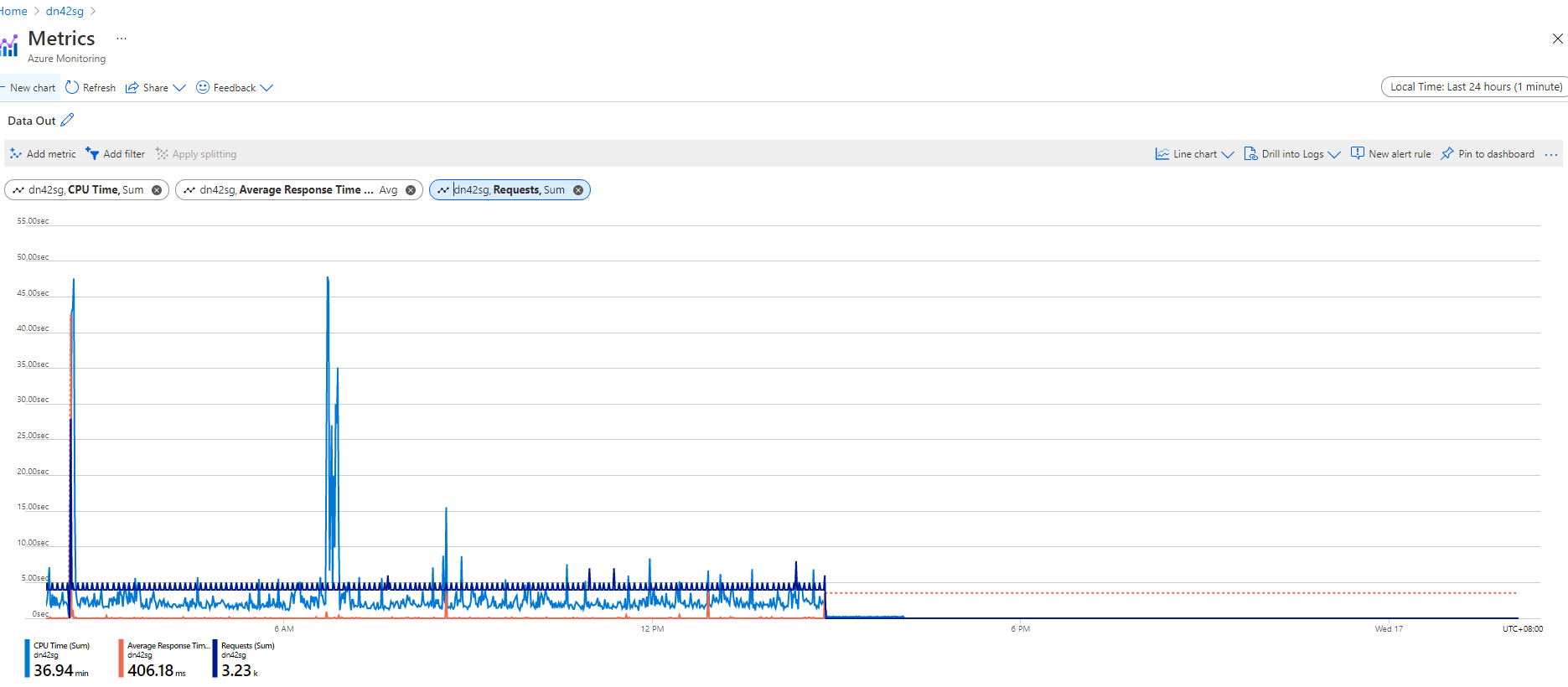

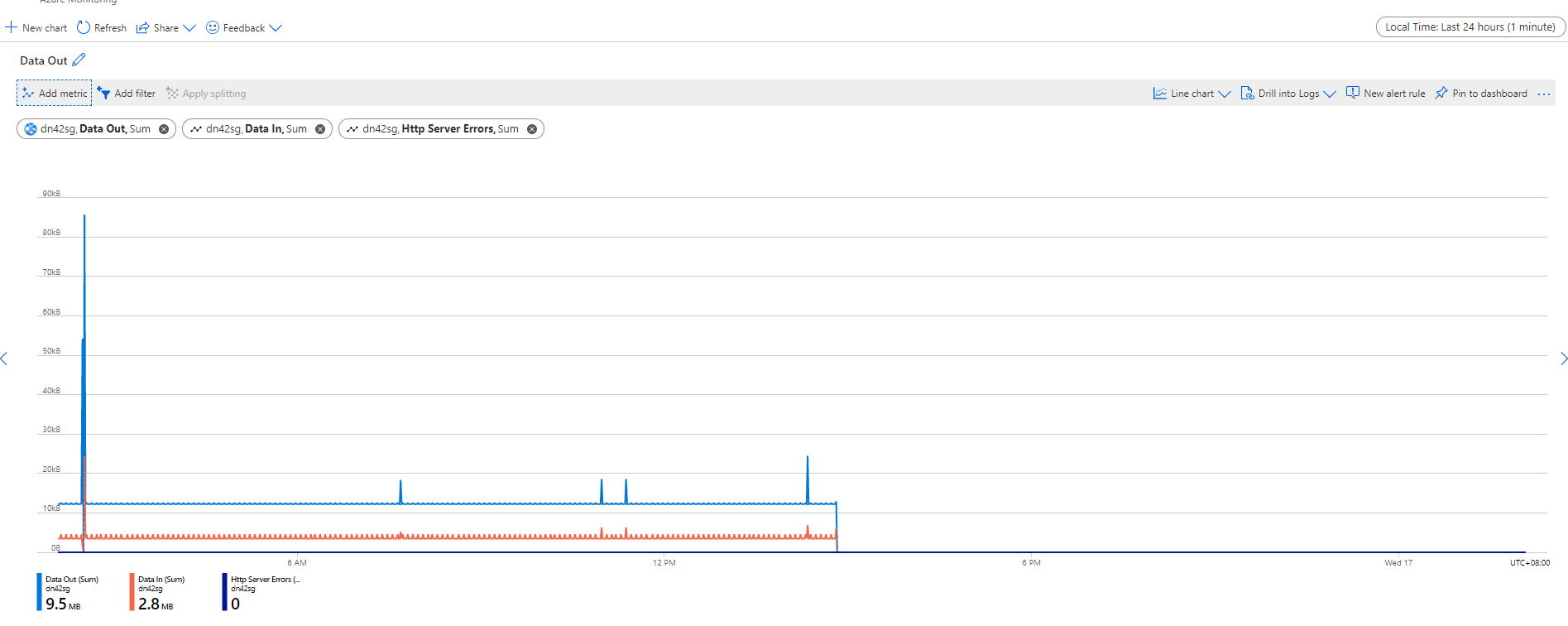

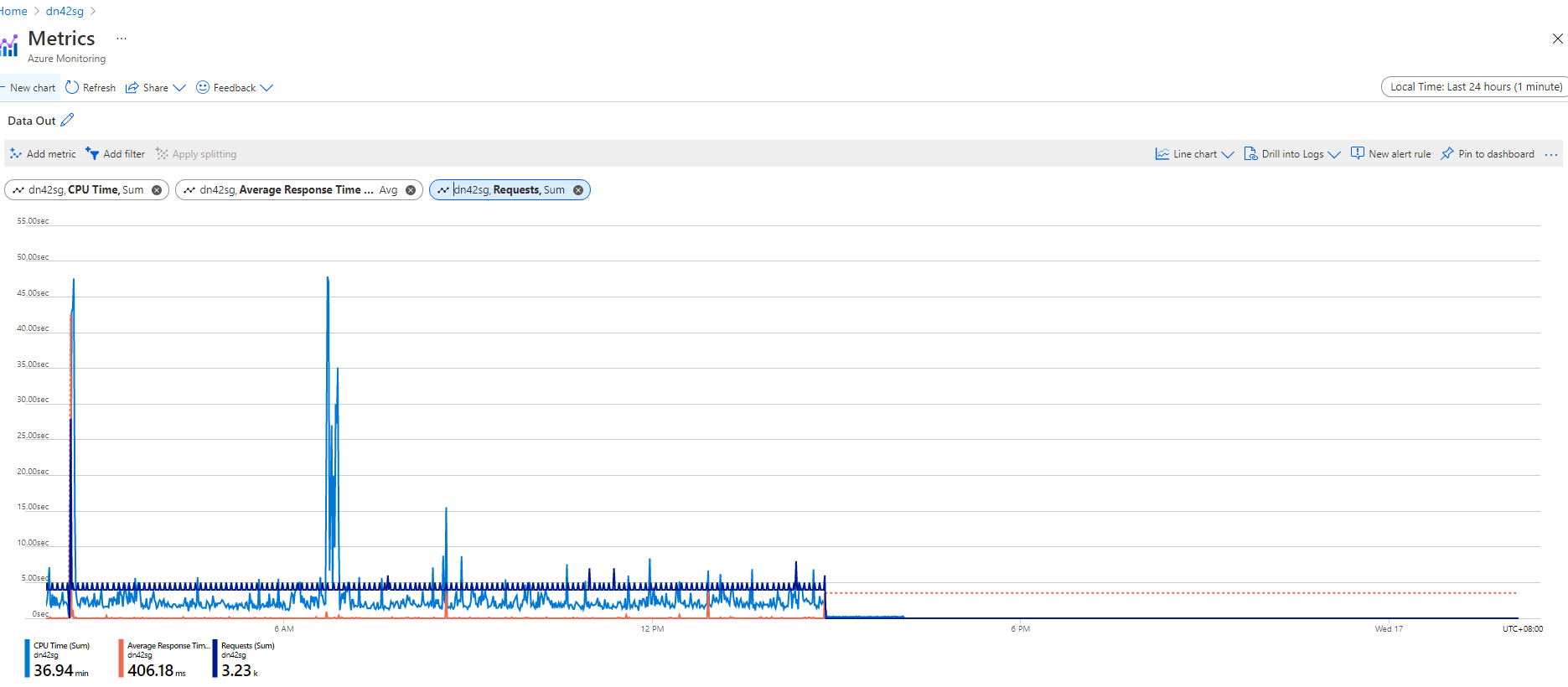

metrics:

Data in, data out, and CPU time drop to 0, so maybe my app crashed.

But the requests also drop to 0, which means my app didn't receive any requests, which indicates that scenario 2 is the only possible reason.
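To measure how long each of these gaps lasts, rather than reading it off the charts, the exported metrics can be scanned for runs of zero requests. The sketch below is only an illustration: the `Sample` fields and the `silent_windows` helper are hypothetical names of mine, and it assumes the metrics have already been exported as one sample per hour.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    hour: int           # hypothetical: hours since the start of the export
    requests: int       # request count reported for that hour
    cpu_seconds: float  # CPU time reported for that hour


def silent_windows(samples):
    """Return (start_hour, end_hour) pairs for runs where requests == 0."""
    windows = []
    start = None
    last = None
    for s in samples:
        if s.requests == 0:
            if start is None:
                start = s.hour
            last = s.hour
        elif start is not None:
            windows.append((start, last))
            start = None
    if start is not None:
        # The export ended while the app was still silent.
        windows.append((start, last))
    return windows
```

A window spanning many hours is hard to reconcile with a short worker move, which is the doubt raised above.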


logs:

====Update 6==== 2021/11/20

Thanks for your help. Now I know that HttpStatus:503 with HttpSubStatus:65 means "We ran out of workers".

Too many people use that node, so there is no space left for the free SKU. Paid SKUs have higher priority, so they are not affected by this issue.
