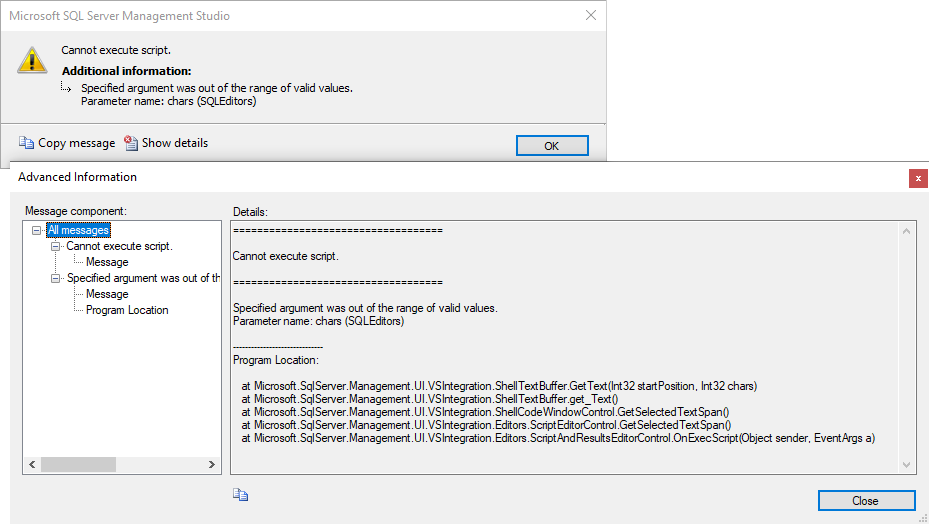

@Viorel - The aggregated script creates a new DB and then runs a series of INSERT statements; there's nothing fancy in it. Its total length is under 2 GB. I'm not sure what the maximum validated script length is for SSMS 15.0.18390.0. Do you know?

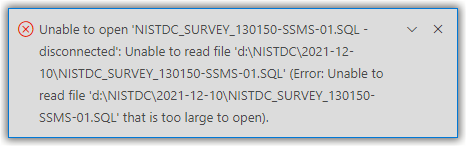

@Erland Sommarskog - Initially, I used sqlcmd to run these individual scripts (via ":r"). The scripts are machine-generated and, in total, hold several hundred GB of data. I switched to SSMS to work around character encoding issues in sqlcmd. When I open the aggregated script, it displays properly in the SSMS query window with no syntax errors. This is expected, since the individual scripts are well-formed and each executes successfully on its own in SSMS.
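
For readers unfamiliar with the ":r" approach, here is a minimal sketch of how a sqlcmd driver file that chains individual scripts could be generated. The `scripts` folder, the `driver.sql` file name, and the `build_driver` helper are hypothetical and only illustrate the idea; this is not the actual tooling used in this thread.

```python
from pathlib import Path


def build_driver(script_paths):
    """Return sqlcmd driver text that runs each script in order via :r."""
    lines = [":on error exit"]  # stop at the first failing script
    for path in script_paths:
        # Quote the path so file names containing spaces still work with :r.
        lines.append(f':r "{path}"')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical layout: machine-generated scripts live in ./scripts
    scripts = sorted(Path("scripts").glob("*.sql"))
    Path("driver.sql").write_text(build_driver(scripts), encoding="utf-8")
```

A driver like this could then be run with something like `sqlcmd -S <server> -E -i driver.sql -f 65001`, where `-f` sets the code page (65001 is UTF-8). Whether that helps with the encoding issues depends on how the generated files were actually encoded.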