@Yu, Hazel (APEX SYSTEMS LLC) If you have already able to test your model then your scoring script is essentially should try to load the model and define a input and output schema based on the input/output value types. This will validate your input data and generate a swagger document when you deploy your model. For example, I think your scoring script can be defined as below:

import joblib

import numpy as np

import os

from inference_schema.schema_decorators import input_schema, output_schema

from inference_schema.parameter_types.numpy_parameter_type import NumpyParameterType

# The init() method is called once, when the web service starts up.

#

# Typically you would deserialize the model file, as shown here using joblib,

# and store it in a global variable so your run() method can access it later.

def init():

global model

# The AZUREML_MODEL_DIR environment variable indicates

# a directory containing the model file you registered.

model_filename = 'your_model.pkl'

model_path = os.path.join(os.environ['AZUREML_MODEL_DIR'], model_filename)

model = joblib.load(model_path)

# The run() method is called each time a request is made to the scoring API.

#

# Shown here are the optional input_schema and output_schema decorators

# from the inference-schema pip package. Using these decorators on your

# run() method parses and validates the incoming payload against

# the example input you provide here. This will also generate a Swagger

# API document for your web service.

@input_schema('data', NumpyParameterType(np.array([[0.1, 1.2, 2.3, 3.4, 4.5, 5.6, 6.7, 7.8, 8.9, 9.0]])))

@output_schema(NumpyParameterType(np.array([4429.929236457418])))

def run(data):

# Use the model object loaded by init().

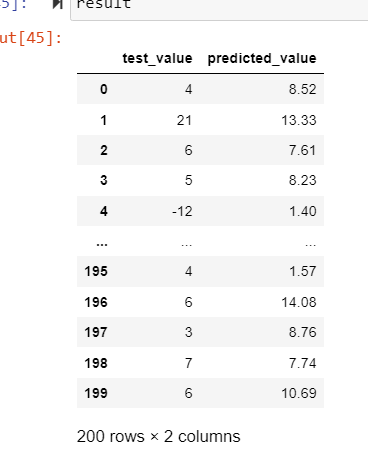

result = model.predict(data)

# You can return any JSON-serializable object.

return result.tolist()

Ref: Scoring script from Azure ML Notebooks github repo.

If an answer is helpful, please click on  or upvote

or upvote  which might help other community members reading this thread.

which might help other community members reading this thread.