Hello Team,

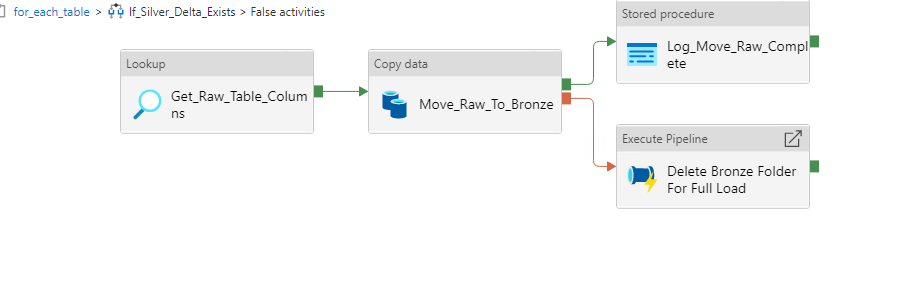

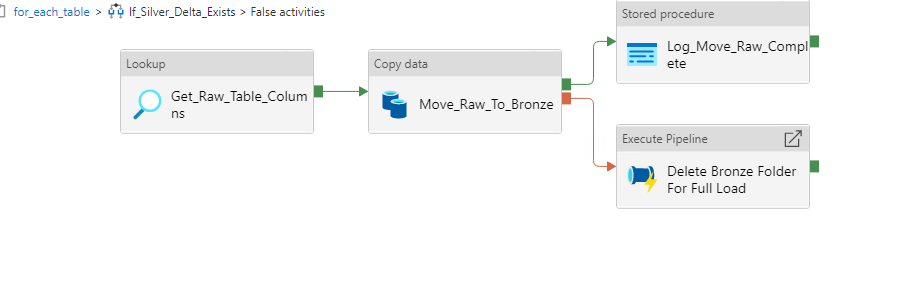

We have set up the following pipeline, as shown in the screenshots. The main goal of this pipeline is to copy data from SQL Server to Data Lake blob storage as Parquet files.

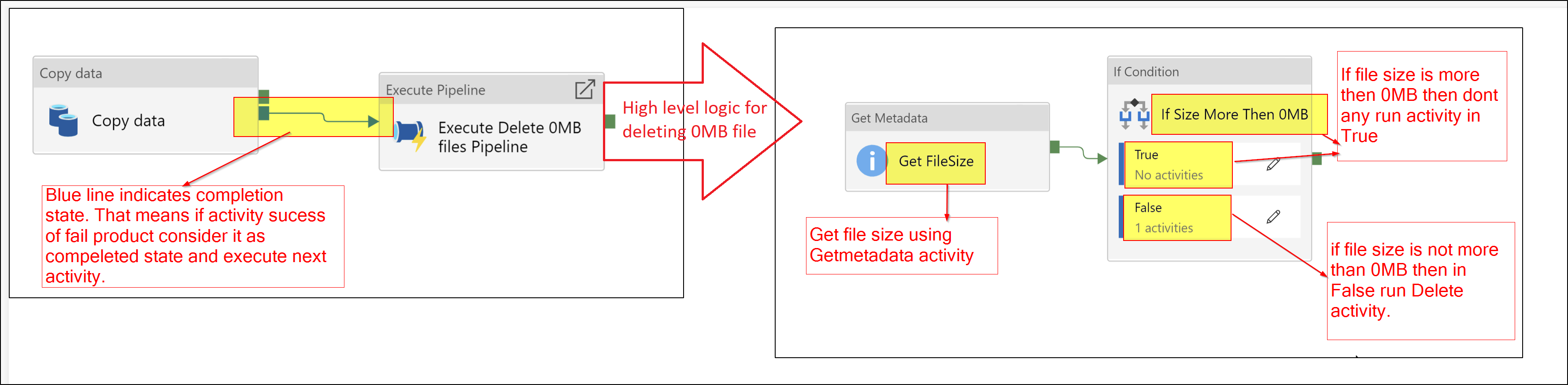

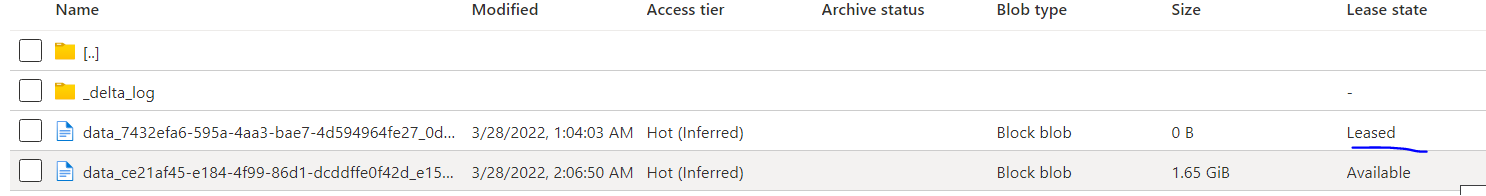

We frequently get an internal server error in the Copy activity, so we set its retry count to 3 so that it eventually succeeds. However, whenever an attempt fails, the Copy activity leaves a Parquet file in the data lake in a leased state. To handle this, we created a separate pipeline that deletes these files when the Copy activity fails.
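The cleanup criterion described above can be sketched as a simple filter. This is a minimal sketch that assumes the file listing has already been retrieved into records holding a path and a blob lease state; `FileInfo` and `files_to_clean` are hypothetical names, not part of Azure Data Factory or the Azure SDK.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class FileInfo:
    # Hypothetical record for one file in the data lake listing.
    path: str
    # Azure Blob lease states include "available", "leased", "expired", "breaking", "broken".
    lease_state: str


def files_to_clean(files: Iterable[FileInfo], suffix: str = ".parquet") -> List[str]:
    """Return paths of Parquet files still held under a lease (assumed cleanup rule)."""
    return [f.path for f in files if f.path.endswith(suffix) and f.lease_state == "leased"]
```

For example, given one leased Parquet file and one available one, only the leased path would be selected for deletion.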

However, we noticed that when the Copy activity failed with the following internal error on the first two attempts and succeeded on the last attempt, the failure path did not run, so the leased files left behind by the two failed attempts were not deleted.
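The behavior described above is consistent with a model in which a downstream "on failure" dependency looks only at the activity's final status after all retries, not at each intermediate attempt. The sketch below is a simplified, hypothetical model of that assumption (`run_with_retry`, `ActivityRun`, and `downstream` are illustrative names, not Data Factory's implementation).

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ActivityRun:
    status: str                                        # final status reported for the activity
    attempts: List[str] = field(default_factory=list)  # outcome of each individual attempt


def run_with_retry(attempt: Callable[[int], bool], retries: int) -> ActivityRun:
    """Run up to 1 + retries attempts; only the last outcome becomes the activity status."""
    history: List[str] = []
    for i in range(retries + 1):
        ok = attempt(i)
        history.append("Succeeded" if ok else "Failed")
        if ok:
            return ActivityRun("Succeeded", history)
    return ActivityRun("Failed", history)


def downstream(run: ActivityRun) -> str:
    # Assumed: an "on failure" branch is evaluated against the final status only.
    return "cleanup" if run.status == "Failed" else "skip cleanup"


# Two failed attempts followed by a success, as in the scenario above.
run = run_with_retry(lambda i: i >= 2, retries=3)
print(run.attempts, run.status, downstream(run))
```

Under this model the two intermediate failures are recorded but never surface as an activity failure, so the cleanup branch is skipped.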

"errorCode": "1000",

"message": "ErrorCode=SystemErrorActivityRunExecutedMoreThanOnce,'Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,Message=The activity run failed due to service internal error, please retry this activity run later.,Source=Microsoft.DataTransfer.TransferTask,'",

"failureType": "SystemError",

"target": "Move_Raw_To_Bronze",

"details": []

Could you please advise whether there is a specific reason why the failure path did not run for the first two failed attempts? (In my case, the Copy activity succeeded on the 3rd attempt.)

Thank you in advance.