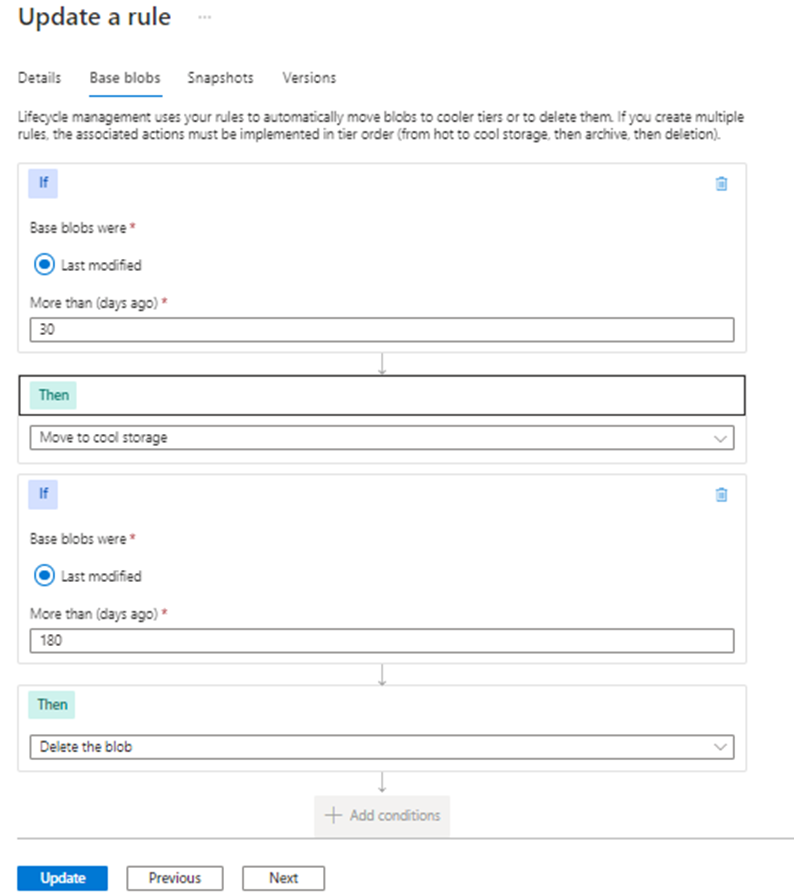

My understanding is that the data export feature sends data at ingestion time; there is no option to export only after the retention period ends. Once the data is flowing, whether the exported copy starts during or after the retention period makes little practical difference (at least not in the 30-90 day range). You could be more targeted with a Logic App, but the added cost and complexity may not be worth the effort. https://learn.microsoft.com/en-us/azure/azure-monitor/logs/logs-data-export
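For reference, a data export rule is a workspace child resource that names a destination and the tables to stream. The Python sketch below builds one as an ARM resource dictionary. It assumes the `Microsoft.OperationalInsights/workspaces/dataExports` resource type with apiVersion `2020-08-01`, and the workspace name, rule name, tables, and storage account ID are hypothetical placeholders. Check the property names against the current ARM template reference before deploying.

```python
import json


def data_export_rule(workspace_name: str, rule_name: str,
                     destination_resource_id: str, tables: list) -> dict:
    """Build an ARM resource for a Log Analytics data export rule (sketch).

    Assumes the Microsoft.OperationalInsights/workspaces/dataExports
    resource type; verify the apiVersion and properties before deploying.
    """
    return {
        "type": "Microsoft.OperationalInsights/workspaces/dataExports",
        "apiVersion": "2020-08-01",
        # Child resources are named "<workspace>/<rule>".
        "name": f"{workspace_name}/{rule_name}",
        "properties": {
            # Storage account or Event Hubs namespace that receives the data.
            "destination": {"resourceId": destination_resource_id},
            # Tables whose records are exported as they are ingested.
            "tableNames": list(tables),
            "enable": True,
        },
    }


if __name__ == "__main__":
    # Hypothetical names and IDs for illustration only.
    rule = data_export_rule(
        workspace_name="my-workspace",
        rule_name="export-to-storage",
        destination_resource_id=(
            "/subscriptions/<subscription-id>/resourceGroups/<rg>"
            "/providers/Microsoft.Storage/storageAccounts/<account>"
        ),
        tables=["SecurityEvent", "SigninLogs"],
    )
    print(json.dumps(rule, indent=2))
```

Note that nothing in the rule refers to retention: records in the listed tables are streamed as they arrive, independently of how long the workspace keeps them.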

Sentinel increased the free retention period to 90 days. Extended retention in Log Analytics is very cost-effective in the short term: if you only require 180 days, I think you will find the additional 90 days within Log Analytics affordable and simple to manage. For large datasets, however, extended retention in Log Analytics can become expensive beyond 8-12 months. Our pricing calculator can help with budgeting for extended retention if needed.
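As a rough way to see how extended retention scales, the Python sketch below estimates the volume held beyond the free 90 days and multiplies it by a per-GB monthly rate. It assumes constant daily ingestion and billing on the ingested volume; `price_per_gb_month` is a placeholder, not a published rate, so take the real figure for your region from the pricing calculator.

```python
def extra_retention_gb(daily_ingest_gb: float, retention_days: int,
                       free_days: int = 90) -> float:
    """Approximate GB held beyond the free retention period at steady state.

    Assumes constant daily ingestion; only days past free_days are billable.
    """
    billable_days = max(retention_days - free_days, 0)
    return daily_ingest_gb * billable_days


def monthly_retention_cost(daily_ingest_gb: float, retention_days: int,
                           price_per_gb_month: float,
                           free_days: int = 90) -> float:
    """Rough monthly cost of retention beyond the free period.

    price_per_gb_month is a placeholder; use the real regional rate from
    the Azure pricing calculator.
    """
    return extra_retention_gb(daily_ingest_gb, retention_days,
                              free_days) * price_per_gb_month


if __name__ == "__main__":
    # Hypothetical inputs: 50 GB/day and an illustrative (not real) rate.
    for days in (180, 365, 730):
        cost = monthly_retention_cost(50, days, price_per_gb_month=0.10)
        print(f"{days} days retained: ~{cost:,.2f} per month")
```

The retained volume, and therefore the monthly cost, grows linearly with both daily ingestion and the retention window, which is why long retention on large datasets adds up quickly.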

Also note that Log Analytics supports table-level retention settings, which you can set with an ARM template if individual tables have different retention requirements. Keeping shorter retention on less important tables can make native retention more affordable.
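A minimal sketch of generating such a template in Python, assuming the `Microsoft.OperationalInsights/workspaces/tables` resource type with apiVersion `2022-10-01` and its `retentionInDays` property; the workspace name and per-table values are hypothetical. Verify the apiVersion against the ARM reference for your environment.

```python
import json


def table_retention_resource(workspace_name: str, table_name: str,
                             retention_days: int) -> dict:
    """ARM resource that sets interactive retention for one table (sketch)."""
    return {
        "type": "Microsoft.OperationalInsights/workspaces/tables",
        "apiVersion": "2022-10-01",
        # Child resources are named "<workspace>/<table>".
        "name": f"{workspace_name}/{table_name}",
        "properties": {"retentionInDays": retention_days},
    }


def retention_template(workspace_name: str, retention_by_table: dict) -> dict:
    """Wrap one table resource per entry in a deployment template."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/"
                   "2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "resources": [
            table_retention_resource(workspace_name, table, days)
            for table, days in retention_by_table.items()
        ],
    }


if __name__ == "__main__":
    # Hypothetical workspace and retention requirements.
    template = retention_template(
        "my-workspace", {"SecurityEvent": 180, "Heartbeat": 30}
    )
    print(json.dumps(template, indent=2))
```

You could save the printed JSON to a file such as `retention.json` and deploy it with `az deployment group create --resource-group <rg> --template-file retention.json`.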

You should also check out the new Basic Logs, archive, and ingestion-time filtering options. They can have a big impact on your archival strategy. https://techcommunity.microsoft.com/t5/azure-observability-blog/the-next-evolution-of-azure-monitor-logs/ba-p/3143195
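To illustrate why ingestion-time filtering matters for archival, the Python sketch below is a conceptual model only: records are filtered before storage, so dropped records never count toward ingested or retained volume. In Azure Monitor the real transformation is a KQL query in a data collection rule (something like `source | where EventID != 4662`); the `Record` type, event IDs, and sizes here are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Record:
    """Hypothetical log record; only the fields this model needs."""
    table: str
    event_id: int
    size_bytes: int


def ingest(records: Iterable[Record],
           keep: Callable[[Record], bool]) -> Tuple[List[Record], int]:
    """Filter records before storage and report the bytes that were dropped.

    Conceptual model only: dropped records are never stored, so they add
    nothing to ingested or retained volume.
    """
    kept: List[Record] = []
    dropped_bytes = 0
    for record in records:
        if keep(record):
            kept.append(record)
        else:
            dropped_bytes += record.size_bytes
    return kept, dropped_bytes


if __name__ == "__main__":
    incoming = [
        Record("SecurityEvent", 4624, 500),
        Record("SecurityEvent", 4662, 1200),
        Record("SecurityEvent", 4625, 450),
    ]
    # Hypothetical rule: drop a noisy event ID before it is stored.
    stored, saved = ingest(incoming, lambda r: r.event_id != 4662)
    print(f"stored {len(stored)} records, dropped {saved} bytes")
```

Every byte removed at ingestion is also a byte you never pay to retain or archive, so filtering compounds with the retention savings discussed above.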