@Andrew Howard, thank you for contacting us!

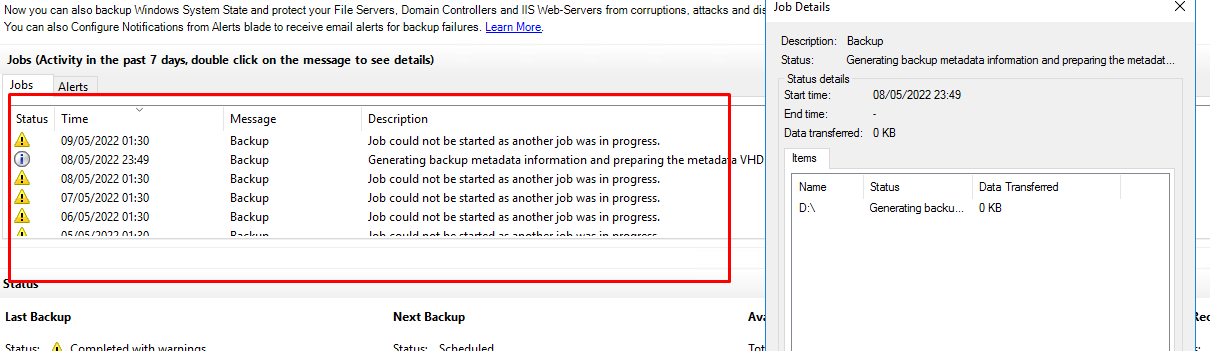

From the error details, I can see that you are using the MARS agent to back up a 9 TB folder structure and the job is failing. This error code occurs when drive C: (where the MARS scratch folder is located by default) is low on disk space.

To check how much free space the scratch (cache) folder requires, see the following FAQ: https://learn.microsoft.com/en-us/azure/backup/backup-azure-file-folder-backup-faq#what-s-the-minimum-size-requirement-for-the-cache-folder-
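
As a rough worked example, assuming the guideline in that FAQ that the cache volume should have free space equal to at least 5-10% of the total data being backed up, a 9 TB backup would need roughly 450 to 900 GB free on the scratch volume. The small Python sketch below does the same arithmetic; the function name and the use of decimal units (1 TB = 1000 GB) are illustrative assumptions, so please confirm the current percentages on the linked page.

```python
from typing import Tuple


def cache_space_range_gb(backup_size_gb: float,
                         low: float = 0.05,
                         high: float = 0.10) -> Tuple[float, float]:
    """Return the (lower, upper) free space in GB that the scratch/cache
    volume should have, as fractions of the total backup size.

    The 5% and 10% defaults reflect the FAQ guideline (assumption:
    check the linked page for current values)."""
    if backup_size_gb < 0:
        raise ValueError("backup size must be non-negative")
    return backup_size_gb * low, backup_size_gb * high


if __name__ == "__main__":
    # 9 TB expressed in decimal units (1 TB = 1000 GB)
    lower, upper = cache_space_range_gb(9 * 1000)
    print(f"Scratch volume needs roughly {lower:.0f}-{upper:.0f} GB free")
```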

Another option is to move the scratch folder to a drive with more free space. For the steps, see the following FAQ: https://learn.microsoft.com/en-us/azure/backup/backup-azure-file-folder-backup-faq#how-do-i-change-the-cache-location-for-the-mars-agent-
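
Before relocating the scratch folder, it can help to confirm which drive actually has enough room. The following is a minimal Python sketch, not part of the MARS agent: the hypothetical helper `pick_scratch_volume` uses `shutil.disk_usage` to return the first candidate path whose free space is at least 10% of the backup size (the upper end of the FAQ guideline, assumed here). The drive letters are placeholders, and the relocation itself should still follow the steps in the linked FAQ.

```python
import shutil
from typing import Iterable, Optional


def pick_scratch_volume(candidates: Iterable[str],
                        backup_size_bytes: int,
                        fraction: float = 0.10) -> Optional[str]:
    """Return the first candidate path whose volume has at least
    fraction * backup_size_bytes free, or None if none qualifies.
    The 10% default is an assumption based on the FAQ guideline."""
    required = backup_size_bytes * fraction
    for path in candidates:
        try:
            free = shutil.disk_usage(path).free
        except OSError:
            # Path is missing or inaccessible: skip it.
            continue
        if free >= required:
            return path
    return None


if __name__ == "__main__":
    nine_tb = 9 * 10**12  # 9 TB in bytes (decimal units)
    # Hypothetical candidate drives; adjust to your server.
    choice = pick_scratch_volume(["C:\\", "D:\\", "E:\\"], nine_tb)
    print(choice or "No candidate volume has enough free space")
```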

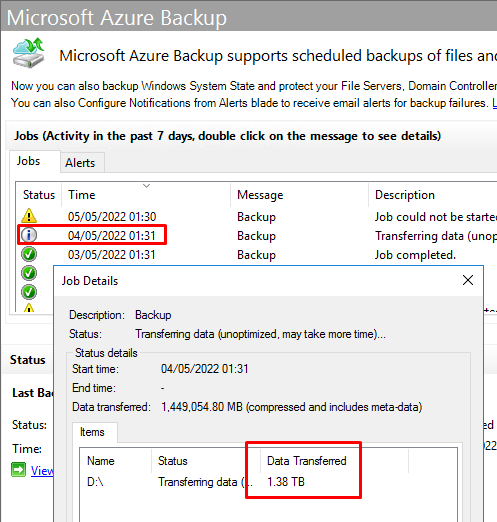

In your case, I also noticed that this backup job may exceed the supported backup limits. Refer to the support matrix: https://learn.microsoft.com/en-us/azure/backup/backup-support-matrix-mars-agent#backup-limits

Recommendation: Please back up the data in smaller chunks to avoid failures.

Hope this helps!

Update:

> As the entire folder backup is 9TB (far less than the 54TB max that is specified), am I correct in thinking that there were too many files in the entire job from the perspective of the root folder level, and that specifying the job using subfolders instead will overcome this?

Correct!
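
To plan the subfolder split, a quick inventory of file counts per top-level subfolder can show where the files are concentrated. The Python sketch below is a hypothetical helper, not a Microsoft tool: `subfolder_stats` walks each immediate subfolder of a root path and totals its files and bytes, and `group_by_file_count` greedily packs subfolders into batches under a file-count cap. The `max_files` value and the `D:\Data` path are placeholders, not documented MARS limits, and files sitting directly in the root folder are not counted.

```python
import os
from typing import Dict, List, Tuple


def subfolder_stats(root: str) -> Dict[str, Tuple[int, int]]:
    """Map each immediate subfolder of root to (file_count, total_bytes).
    Files directly under root are ignored."""
    stats: Dict[str, Tuple[int, int]] = {}
    with os.scandir(root) as entries:
        for entry in entries:
            if not entry.is_dir(follow_symlinks=False):
                continue
            count, size = 0, 0
            for dirpath, _dirnames, filenames in os.walk(entry.path):
                for name in filenames:
                    try:
                        size += os.path.getsize(os.path.join(dirpath, name))
                        count += 1
                    except OSError:
                        pass  # file vanished or is unreadable; skip it
            stats[entry.path] = (count, size)
    return stats


def group_by_file_count(stats: Dict[str, Tuple[int, int]],
                        max_files: int) -> List[List[str]]:
    """Greedily pack subfolders (largest file count first) into batches
    whose total file count stays at or under max_files. A subfolder
    larger than the cap ends up in a batch of its own."""
    groups: List[List[str]] = []
    current: List[str] = []
    current_files = 0
    for path, (count, _size) in sorted(stats.items(), key=lambda kv: -kv[1][0]):
        if current and current_files + count > max_files:
            groups.append(current)
            current, current_files = [], 0
        current.append(path)
        current_files += count
    if current:
        groups.append(current)
    return groups


if __name__ == "__main__":
    root = r"D:\Data"  # hypothetical path to the 9 TB folder
    if os.path.isdir(root):
        stats = subfolder_stats(root)
        # Hypothetical cap for illustration only, not a MARS limit.
        batches = group_by_file_count(stats, max_files=1_000_000)
        for i, batch in enumerate(batches, start=1):
            files = sum(stats[p][0] for p in batch)
            print(f"Batch {i}: {files} files in {len(batch)} subfolder(s)")
    else:
        print(f"{root} not found")
```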
