Hi @Koteswara Pentakota ,

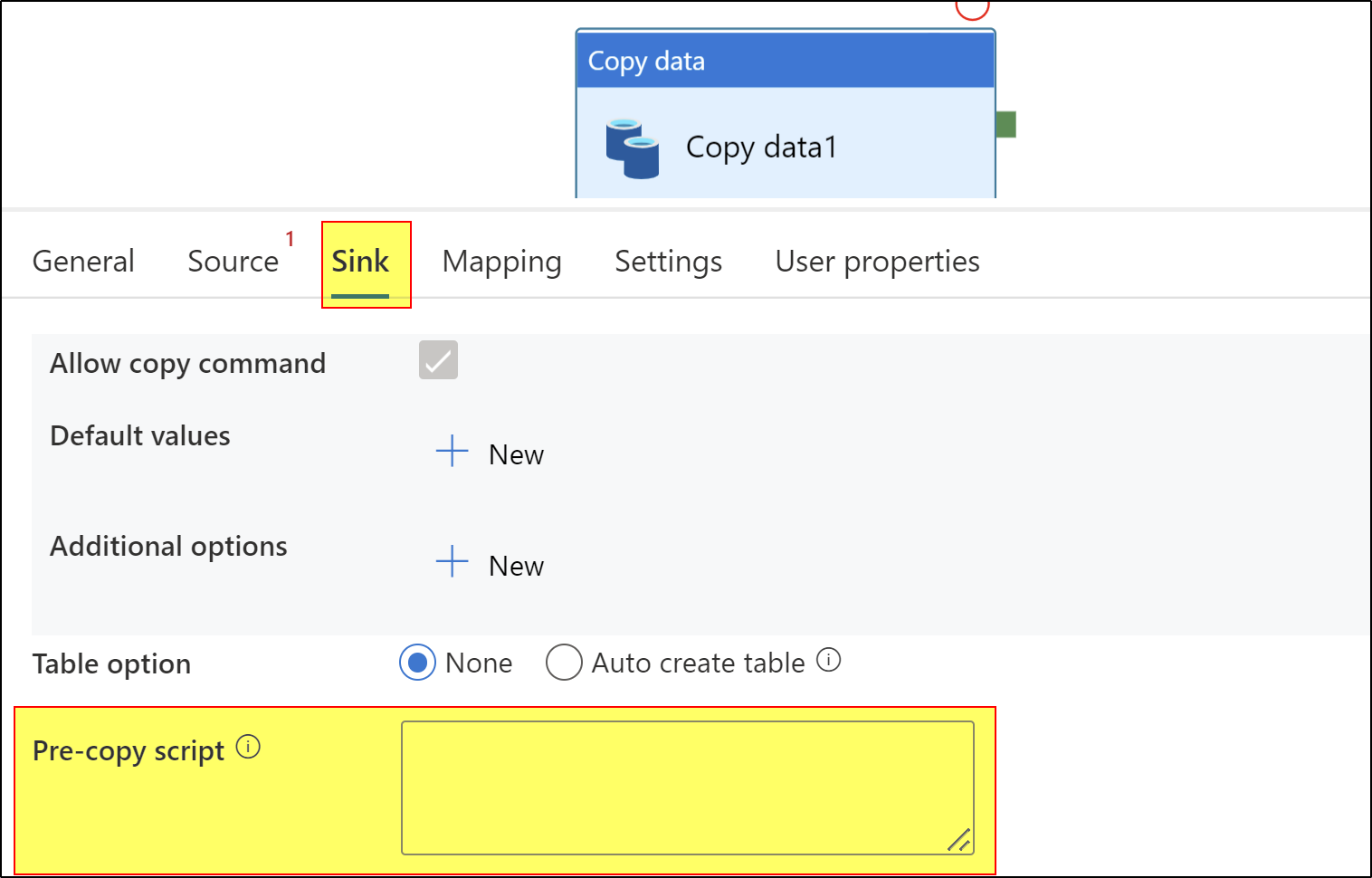

Could you please explain what you mean by creating a relationship based on previous tables? If you mean creating primary key and foreign key relationships by running a SQL script, you can do that either with the "Pre-copy script" field of the copy activity or by adding a script activity as one of the steps in your pipeline.

The Script activity documentation covers this in more detail, and there is also a video that walks through script activity usage.
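As a rough sketch of the script activity option described above, the snippet below builds the JSON definition of a Script activity that runs a key-constraint script. The table names (`dbo.Customers`, `dbo.Orders`), the column `CustomerId`, the activity name, and the linked service name `AzureSqlLinkedService` are all made up for illustration, so please replace them with your own.

```python
import json

# Hypothetical tables and columns, for illustration only.
KEY_SCRIPT = """
ALTER TABLE dbo.Customers
    ADD CONSTRAINT PK_Customers PRIMARY KEY (CustomerId);
ALTER TABLE dbo.Orders
    ADD CONSTRAINT FK_Orders_Customers
    FOREIGN KEY (CustomerId) REFERENCES dbo.Customers (CustomerId);
""".strip()


def build_script_activity(name, linked_service, sql_text):
    """Return a pipeline activity definition of type 'Script' as a dict."""
    return {
        "name": name,
        "type": "Script",
        "linkedServiceName": {
            "referenceName": linked_service,  # assumed linked service name
            "type": "LinkedServiceReference",
        },
        "typeProperties": {
            # "NonQuery" is used because the DDL statements return no result set.
            "scripts": [{"type": "NonQuery", "text": sql_text}]
        },
    }


activity = build_script_activity(
    "AddKeyConstraints", "AzureSqlLinkedService", KEY_SCRIPT
)
print(json.dumps(activity, indent=2))
```

For sinks that support it, the same SQL text could instead go in the `preCopyScript` property of the copy activity sink.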

Azure Data Factory cannot encrypt data out of the box. You should consider using either a Custom activity or an Azure Function activity instead. Please check the Q&A thread below, where a similar discussion is available.

https://learn.microsoft.com/en-us/answers/questions/280549/how-to-configure-the-adf-pipe-line-to-encrypt-and.html
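As a minimal sketch of the Azure Function option, the snippet below builds the JSON definition of an Azure Function activity that calls a function to do the encryption. The function name `EncryptBlob`, the linked service name, and the request body fields are hypothetical; the encryption logic itself would live inside your own function and is not shown here.

```python
import json


def build_function_activity(name, linked_service, function_name, body):
    """Return a pipeline activity definition of type 'AzureFunctionActivity'."""
    return {
        "name": name,
        "type": "AzureFunctionActivity",
        "linkedServiceName": {
            "referenceName": linked_service,  # assumed linked service name
            "type": "LinkedServiceReference",
        },
        "typeProperties": {
            "functionName": function_name,  # hypothetical function
            "method": "POST",
            "body": body,  # payload shape is defined by your function
        },
    }


encrypt_activity = build_function_activity(
    "EncryptOutputFile",
    "AzureFunctionLinkedService",
    "EncryptBlob",
    {"container": "output", "blobName": "data.csv"},
)
print(json.dumps(encrypt_activity, indent=2))
```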

Hope this helps. Please let us know if you have any further queries.

------------------

Please consider hitting the Accept Answer button. Accepted answers help the community as well.