OS: Windows Server 2019 - Hyper-V Role

Hello,

Apologies if this has been asked elsewhere; I could not find a winning search term.

Any assistance is appreciated.

Issue Description

Nonexistent VMs are listed in Hyper-V Manager (and in the output of Get-VM in PowerShell).

I cannot identify where the queried metadata is stored or how to remove it.

The ghosts share names with production VMs, so I do not know of a safe way to run Remove-VM against the correct entry.
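To illustrate the concern, here is a minimal sketch of selecting an entry by its object rather than by name, assuming the standard Hyper-V PowerShell module. The name 'VM-NAME' is a hypothetical placeholder, and filtering on the SavedCritical state is an assumption based on the status the ghosts show. Whether Remove-VM would actually accept such an entry is unknown; -WhatIf is included so that nothing is removed.

```powershell
# Hypothetical placeholder: a name shared by a ghost and a production VM.
$name = 'VM-NAME'

# Select only the entries with that name that report the Saved-Critical state
# (assumption: the production VM is in a different state, e.g. Running or Off).
$candidates = Get-VM | Where-Object { $_.Name -eq $name -and $_.State -eq 'SavedCritical' }

# Review exactly which entries were matched before doing anything else.
$candidates | Format-List Name, VMId, State, Status

# Dry run only: -WhatIf reports what would be removed without removing it.
$candidates | Remove-VM -WhatIf
```

Passing the objects through the pipeline avoids Remove-VM -Name, which would match every entry with that name, including the production VM.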

Background

We had to recover our Hyper-V VMs from backup after a RAID failure caused the loss of the data partition (the primary OS partition remained intact).

After we replaced the array, Hyper-V Manager no longer listed the lost VMs (just an empty list).

I recovered all VMs from backup, at which point it became clear that some metadata remained: to complete the recovery, we needed to apply new UUIDs.

After that reboot, and a number of reboots since (monthly patching), only the newly recovered VMs were listed . . . until now.

Oddly, "ghosts" of the VMs from the lost RAID are now present, and I cannot find a way to de-register them or remove the entries via Hyper-V Manager.

Additional Information

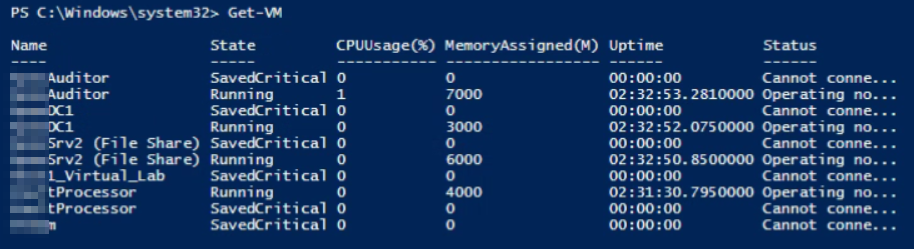

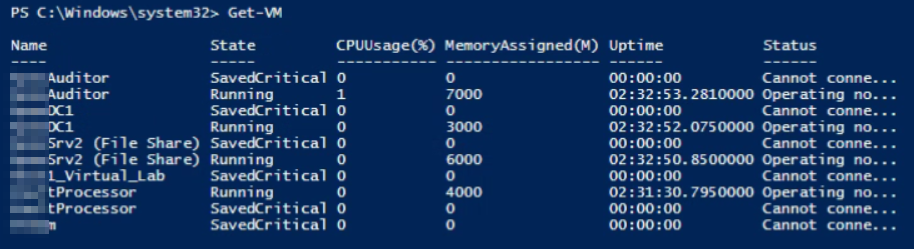

Get-VM results (we can see the "ghost" VMs with Saved Critical status):

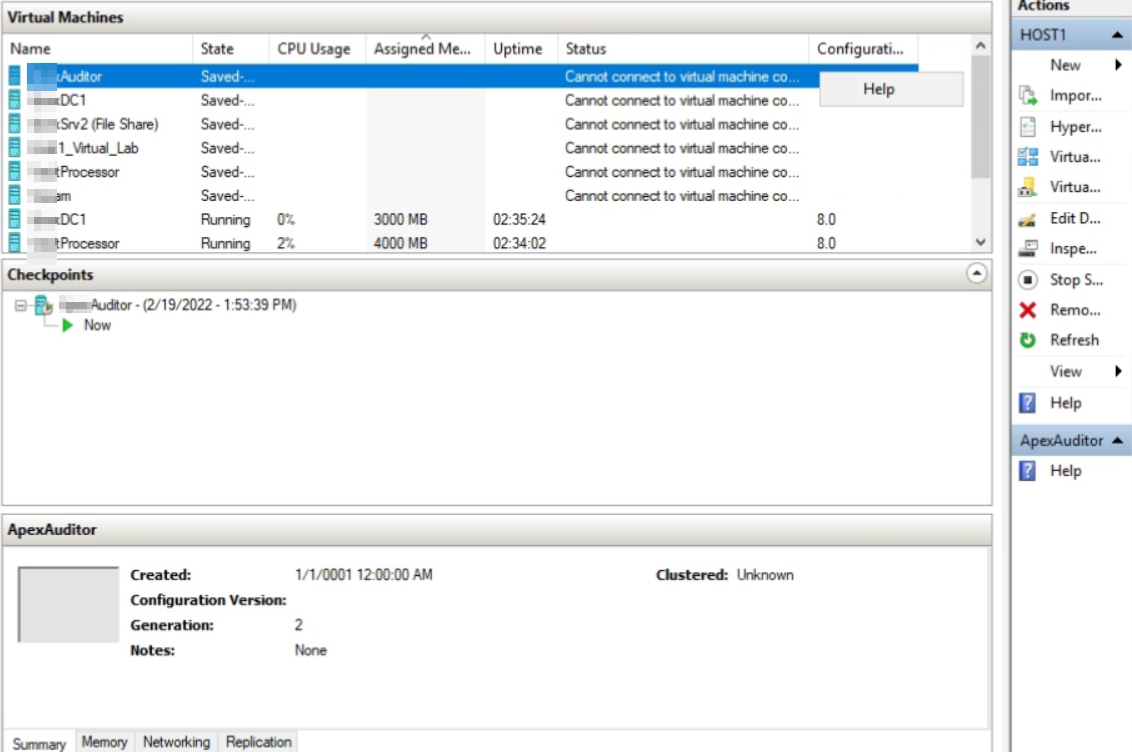

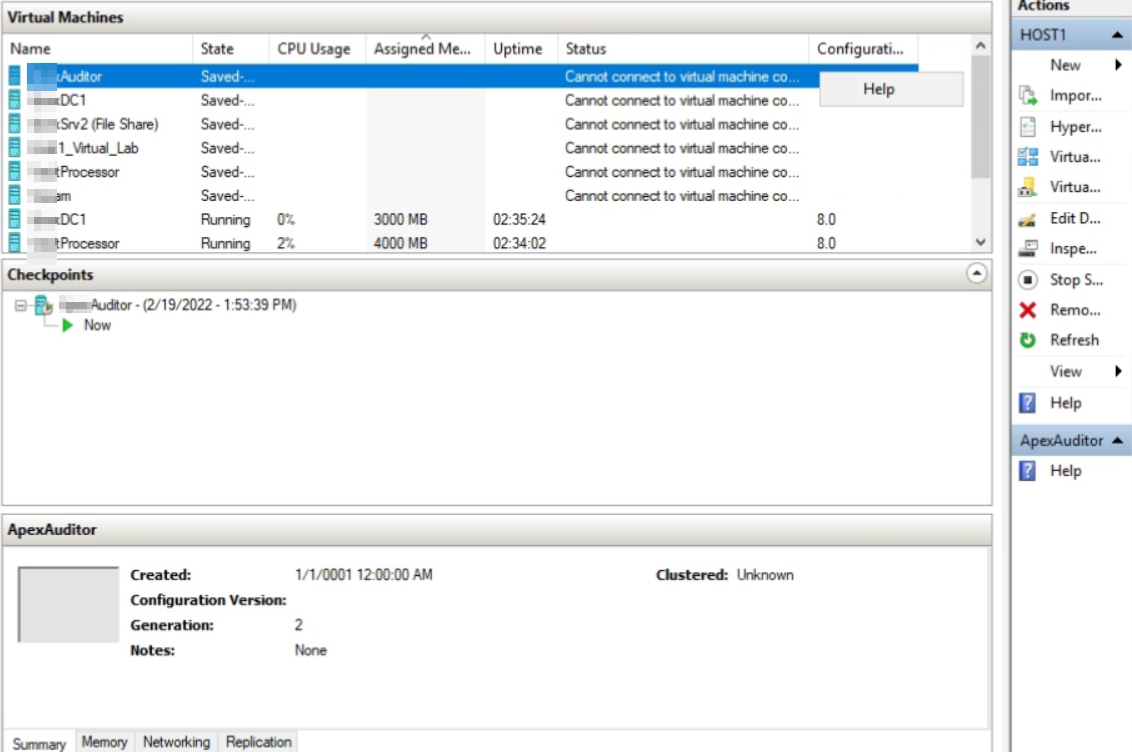

In Hyper-V Manager, we can see that the ghost entries lack a context menu or any management options:

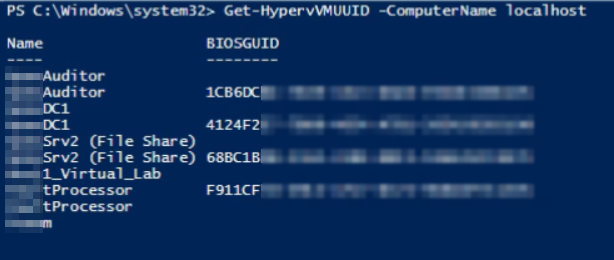

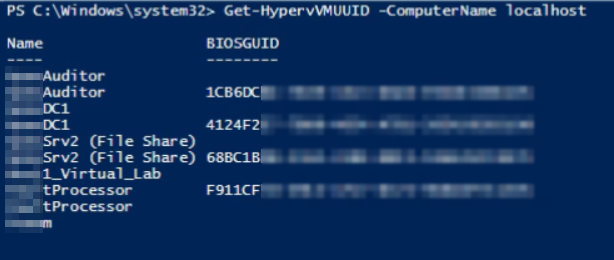

Attempting to retrieve the UUIDs of the "ghost" VMs fails.

Using Aaron Parker's script, we can see duplicate entries; the legacy (ghost) entries lack UUIDs.
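For anyone wanting to reproduce the duplicate listing without that script, the following is a minimal sketch using only the built-in Hyper-V cmdlets. It is not Aaron Parker's script, and it assumes the ghost entries are returned by Get-VM at all, as the screenshots above suggest.

```powershell
# Group all registered VMs by name and keep only the names that occur more than once.
$duplicates = Get-VM | Group-Object -Property Name | Where-Object { $_.Count -gt 1 }

# Expand each group so every entry is shown with its ID, state, and configuration path.
# An empty VMId or ConfigurationLocation column would point at a ghost entry.
$duplicates |
    ForEach-Object { $_.Group } |
    Sort-Object Name |
    Format-Table Name, VMId, State, Status, ConfigurationLocation -AutoSize
```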