Behavior of Dynamic Witness on Windows Server 2012 R2 Failover Clustering

With Failover Clustering on Windows Server 2012 R2, we introduced the concept of Dynamic Witness and enhanced how we handle the tie breaker when the nodes are in a 50% split.

Today, I would like to explain how we handle the scenario where you are left with one node and the witness as the only votes on your Windows Server 2012 R2 Cluster.

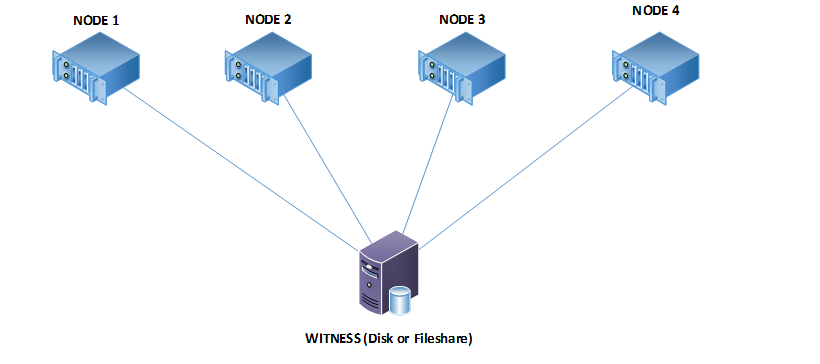

Let’s assume that we have a 4 Node cluster with dynamic quorum enabled.

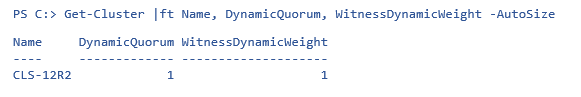

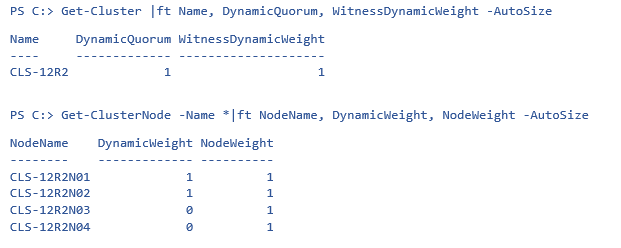

To see the status of dynamic quorum and the witness’ dynamic weight, you can use this PowerShell command:
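A minimal sketch of such a query, assuming it is run on a cluster node with the FailoverClusters PowerShell module available. `DynamicQuorum` and `WitnessDynamicWeight` are properties of the cluster object returned by `Get-Cluster`; the exact formatting of the original command may have differed.

```powershell
# Load the Failover Clustering cmdlets (assumes the clustering PowerShell tools are installed).
Import-Module FailoverClusters

# DynamicQuorum:        1 = dynamic quorum is enabled, 0 = disabled.
# WitnessDynamicWeight: 1 = the witness currently has a vote, 0 = it does not.
Get-Cluster | Format-Table Name, DynamicQuorum, WitnessDynamicWeight -AutoSize
```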

Now, let’s use PowerShell to look at the weights of the nodes:
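One way to list the per-node votes, again assuming the FailoverClusters module on a cluster node. `NodeWeight` is the configured vote for a node, while `DynamicWeight` is the vote the cluster is currently counting for it.

```powershell
# Show each node's state, its configured vote, and its current dynamic vote.
Get-ClusterNode | Format-Table Name, State, NodeWeight, DynamicWeight -AutoSize
```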

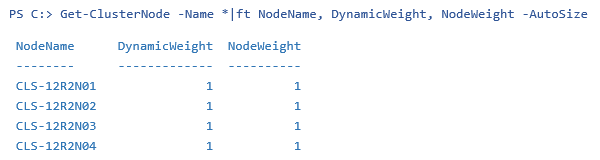

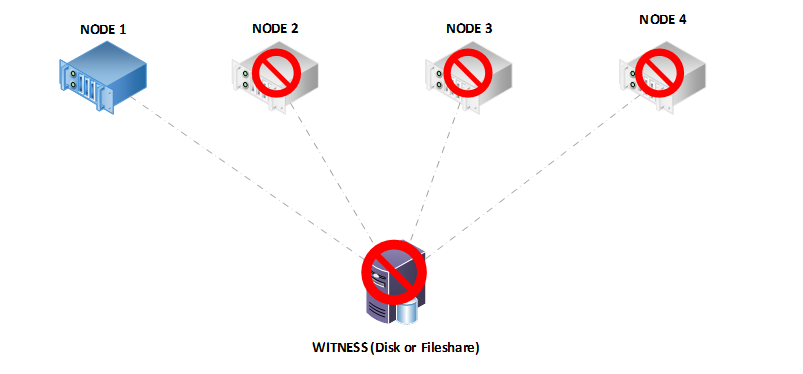

Now, let’s take down the nodes one by one until we have just one of the nodes and the witness standing. I turned off Node 4, followed by Node 3, and finally Node 2.

The cluster will continue to remain functional thanks to dynamic quorum, assuming that all of the resources can run on the single node.

Let’s look at the node weights and dynamic witness weight now:

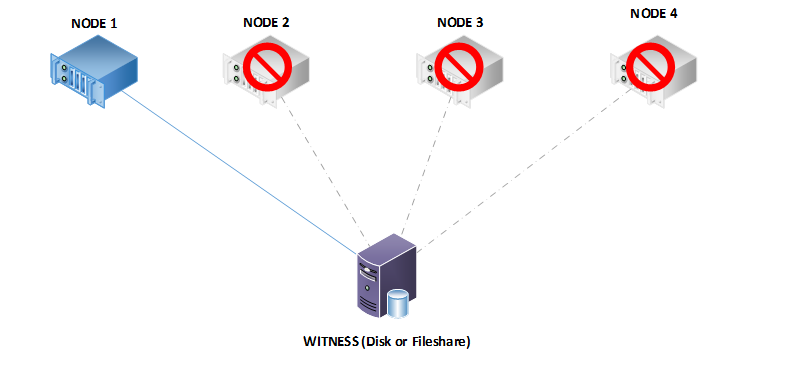

Let’s take this further and assume that, for some reason, the witness also fails and you see event ID 1069 for the witness resource:

Log Name: System

Source: Microsoft-Windows-FailoverCluster

Date: Date

Event ID: 1069

Level: Error

User: NT AUTHORITY\SYSTEM

Computer: servername

Description:

Cluster resource 'Cluster Disk x' in clustered service or application 'Cluster Group' failed.

We really do not expect this to happen on a production cluster: nodes going offline until only one is left, and then the witness failing as well. Unfortunately, in this scenario the cluster will not continue running, and the cluster service will terminate because it can no longer achieve quorum. We do not dynamically adjust the votes below three in a multi-node cluster with a witness, which means two votes must be active for the cluster to continue functioning.
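The vote arithmetic behind this can be sketched in a few lines of PowerShell. `Test-HasQuorum` is a hypothetical helper written for illustration, not a cmdlet from the FailoverClusters module; it only models the strict-majority rule, using the three-vote floor described in this post.

```powershell
# Hypothetical helper for illustration only; not part of the FailoverClusters module.
function Test-HasQuorum {
    param(
        [int]$ActiveVotes,  # votes that are currently online
        [int]$TotalVotes    # votes the cluster is counting
    )
    # Quorum requires a strict majority of the counted votes.
    return ((2 * $ActiveVotes) -gt $TotalVotes)
}

# The vote count is held at three (Node 1, Node 2, witness) even though Node 2 is down.
Test-HasQuorum -ActiveVotes 2 -TotalVotes 3   # Node 1 + witness online -> True
Test-HasQuorum -ActiveVotes 1 -TotalVotes 3   # witness fails too       -> False
```

With three counted votes, two must be online, so the single surviving node cannot keep the cluster running once the witness is gone.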

When you configure a cluster with a witness, we want to ensure that the cluster is able to recover from a partitioned scenario. The philosophy is that two replicas of the cluster configuration are better than one. If we adjusted the quorum weight after the loss of Node 2 in our scenario above (when we had two nodes and the witness), your data would be subject to loss with a single failure. This behavior is intentional: we keep two copies of the cluster configuration, and either copy can start the cluster back up. You have a much better chance of surviving and recovering from a loss.

That’s the little secret to keeping your cluster fully functional.

Until next time!

Ram Malkani

Technical Advisor

Windows Core Team