(This post is also available in German.)

Sometimes you need to copy data between different storage locations, for example from your local PC to the cloud, or between different Azure cloud environments such as Azure Germany and Azure global. Here are some hints that might help you with AzCopy, a free command-line tool.

AzCopy is a free tool that lets you copy data back and forth between on-premises and the cloud, inside the cloud, and between clouds. The command line looks a little long, but it is easy to understand. If you have already installed the Azure SDK for Visual Studio, AzCopy is most likely already available; look under %ProgramFiles(x86)%\Microsoft SDKs\Azure\AzCopy or %ProgramFiles%\Microsoft SDKs\Azure\AzCopy. If not, you can download it for free.

AzCopy uses URLs and storage keys, so there is no need to log in to Azure. Well, that's not completely right. You don't need to log in to perform the copy command, but you need to log in at least once beforehand to get all the information AzCopy wants. That leads us to the question: where do you get URLs and keys from?

URL and StorageKey

Information via Portal

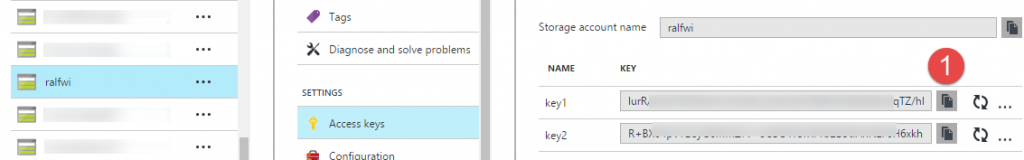

This is easy. Just log in to the new portal, look for Storage Accounts in the menu on the left, and pick the one you want to use. Click the "Blobs" service, and you see a list of containers with the URL you need next to each one. You can also build the URL from scratch: it is the storage account name, followed by the blob storage endpoint, with the container name appended. For example, in Azure Germany the container "test" in the storage account "store1" has the URL https://store1.blob.core.cloudapi.de/test.

A little further down you see "Access Keys". It doesn't matter whether you choose the primary or the secondary key; click on (1) to copy it. We now have all the information we need.
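The URL-building rule described above can be sketched in a few lines of Python. This is only an illustration: the function name `build_container_url` and the `BLOB_ENDPOINTS` table are hypothetical helpers invented for this example, and the table contains only the two blob endpoints mentioned in this post.

```python
# Illustrative sketch: build a blob container URL from its parts.
# build_container_url and BLOB_ENDPOINTS are hypothetical names for this
# example; they are not part of AzCopy or any Azure SDK.

BLOB_ENDPOINTS = {
    "germany": "blob.core.cloudapi.de",  # Azure Germany
    "global": "blob.core.windows.net",   # Azure global
}


def build_container_url(account: str, container: str, cloud: str = "global") -> str:
    """Return https://<account>.<blob endpoint>/<container>."""
    endpoint = BLOB_ENDPOINTS[cloud]
    return f"https://{account}.{endpoint}/{container}"


print(build_container_url("store1", "test", cloud="germany"))
# prints https://store1.blob.core.cloudapi.de/test
```

The result is the same string you would copy from the portal, so either approach gives AzCopy what it needs.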

Information via PowerShell

Getting the information in PowerShell is easy too. After logging in, type:

```powershell
Get-AzureStorageAccount
```

to get a list of all storage accounts, and with the storage account name you get the key:

```powershell
Get-AzureStorageKey -StorageAccountName store1
```

Again, it doesn't matter which one you use, primary or secondary. Finding the URL is a little more complicated. Either you build the URL from the name and endpoint again, or you save the following script and call it with the name of the storage account (or with no argument to get a list of storage accounts).

```powershell
# Without an argument: list all storage accounts.
# With a storage account name: show its primary key and the container URLs.
param(
    [parameter(Position=0, Mandatory=$false)][string] $storagename
)

if (-not $storagename) {
    Get-AzureStorageAccount | Select-Object StorageAccountName, Label, Location
} else {
    # Either key works; this script uses the primary one.
    $key = Get-AzureStorageKey -StorageAccountName $storagename | Select-Object -ExpandProperty Primary
    $context = New-AzureStorageContext -StorageAccountName $storagename -StorageAccountKey $key
    Write-Host "Key: " $key
    (Get-AzureStorageContainer -Context $context).CloudBlobContainer | Select-Object Name, Uri | Format-List
}
```

Now we know how to get the information to do some tests with AzCopy...

Upload to Azure

AzCopy always needs a source, a destination, and a pattern that specifies which files to copy. A simple pattern might be a file name, but you can use wildcards to copy more than one file. In our first example we copy the file c:\temp\test.txt to Azure, into the container "test" in the storage account "store1". Once we have found the URL and a key with the portal or PowerShell, let's start:

```cmd
AzCopy.exe /source:c:\temp /dest:https://store1.blob.core.cloudapi.de/test /destkey:Dy8(...)oA== /pattern:test.txt
```

I shortened the key a little bit... AzCopy should now report that one file was copied successfully. But I guess somebody entered the complete file name at /source and not only the path, right? That's something you have to get used to: /source and /dest always take only the path, and the file names come from /pattern. That also means that a file always has the same name at the source and at the destination.
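To make the path-versus-pattern split concrete, here is a minimal Python sketch that takes a full Windows file path and produces the argument list used above. The helper name `azcopy_upload_args` and the placeholder key are assumptions for illustration only; the sketch merely builds the command line and does not run AzCopy.

```python
import ntpath  # Windows path rules, usable on any operating system
from typing import List


def azcopy_upload_args(local_file: str, dest_url: str, dest_key: str) -> List[str]:
    """Split a full file path into /source (folder) and /pattern (file name).

    Hypothetical helper for illustration; it only assembles the arguments.
    """
    folder, filename = ntpath.split(local_file)
    return [
        "AzCopy.exe",
        f"/source:{folder}",      # path only, no file name
        f"/dest:{dest_url}",
        f"/destkey:{dest_key}",
        f"/pattern:{filename}",   # the file name goes here
    ]


args = azcopy_upload_args(
    r"c:\temp\test.txt",
    "https://store1.blob.core.cloudapi.de/test",
    "<storage-key>",  # placeholder, not a real key
)
print(" ".join(args))
```

The same split applies in the other direction: for a download, the folder goes to /dest and the file name stays in /pattern.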

Now for the other way round...

Download from Azure

We simply switch source and destination and get:

```cmd
AzCopy.exe /dest:c:\temp /source:https://store1.blob.core.cloudapi.de/test /sourcekey:Dy(...)oA== /pattern:test.txt
```

Easy going... And now from Cloud to Cloud...

Copy from Cloud to Cloud

We do nearly the same, but we need two storage accounts and two keys: one for the source and one for the destination. The accounts can be in the same subscription or in different subscriptions, and in the same cloud environment or in different ones. As an example, we will copy a file from Azure Germany to Azure global. The syntax is the same as above:

```cmd
AzCopy.exe /source:https://store1.blob.core.cloudapi.de/test /sourcekey:Dy(...)oA== /dest:https://store2.blob.core.windows.net/testglobal /destkey:M8(...)aM== /pattern:test.txt
```

You can see that the storage endpoints for Azure Germany (blob.core.cloudapi.de) are different from the storage endpoints in Azure global (blob.core.windows.net). The copy process might take some time depending on the file size, especially in this particular case (a copy between Azure Germany and Azure global). Because Microsoft Cloud Germany is a sovereign environment that has to be kept separate, there is no direct backbone between the global and the German datacenters. The data is therefore transferred over the normal internet (encrypted, of course; see the https URL), and bandwidth and network performance may vary.

By the way, if you try to copy a running virtual server, AzCopy reports an error but doesn't give you any hint as to why. The .vhd file is locked, so shutting down the VM helps...
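Because the endpoint suffix tells you which environment a URL belongs to, you can check up front whether a copy will cross clouds and may therefore be slower. The following Python sketch is an assumption-laden illustration: `cloud_of`, `crosses_clouds`, and the suffix table are hypothetical names, and only the two endpoints from this post are recognized.

```python
from urllib.parse import urlparse

# Hypothetical lookup table with only the two endpoints named in this post.
ENDPOINT_SUFFIXES = {
    ".blob.core.cloudapi.de": "Azure Germany",
    ".blob.core.windows.net": "Azure global",
}


def cloud_of(url: str) -> str:
    """Return the environment name for a blob URL, or "unknown"."""
    host = urlparse(url).hostname or ""
    for suffix, name in ENDPOINT_SUFFIXES.items():
        if host.endswith(suffix):
            return name
    return "unknown"


def crosses_clouds(source_url: str, dest_url: str) -> bool:
    """True if source and destination resolve to different environments.

    Note: two unrecognized URLs both map to "unknown" and compare as equal.
    """
    return cloud_of(source_url) != cloud_of(dest_url)


src = "https://store1.blob.core.cloudapi.de/test"
dst = "https://store2.blob.core.windows.net/testglobal"
print(cloud_of(src), "->", cloud_of(dst), "| crosses clouds:", crosses_clouds(src, dst))
```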

Next steps

AzCopy has a lot more to offer, such as copying not only blob storage but also file storage, table storage, and more. Good documentation with many more examples is available on the Azure site. Make sure to read it, and enjoy AzCopy.

Comments

- Anonymous

February 12, 2018

Does copying data from one storage account to another storage account using AzCopy depend on network? What if the VM on which AzCopy command is running shuts down unexpectedly. Does it affect the transfer?

- Anonymous

February 12, 2018

Copy jobs are asynchronous, so the status of the VM where the command is started is not relevant afterwards. You send a command saying "Hey, copy this from point A to point B", and Azure will do so.

- Anonymous

February 13, 2018

It's a great feature. Appreciate.
