Azure Storage - Essential Facts

August 2014 - Definitely subject to change

The platform is evolving quickly. This is a snapshot as of August 2014.

Utilities

The following section provides some guidance about using external utilities with Azure Storage.

Client Utilities

These are tools used by client applications that access or help with Azure Storage.

Copy Utilities - AzCopy

The fastest way to move data from Azure Blobs to Azure File is to use AzCopy. You should run AzCopy from a VM in the same datacenter as the destination storage account.

AzCopy is now at release 2.5 and can be found here:

Figure 1: Azure Command prompt

Note the directory where it can be found. I will copy the binaries to

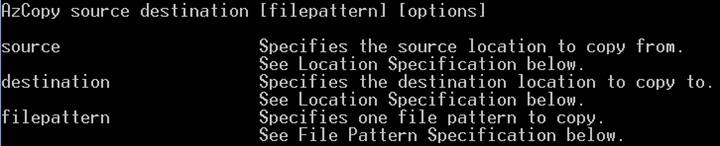

Here is the syntax:

Figure 2: AzCopy Syntax

Here are some things to remember:

You can copy files that are in a file system directory, a blob container, a blob virtual directory, or a storage file share.

You can copy recursively as well.

You can copy a single blob or multiple with wild-cards.

You can copy across storage accounts.

With geo-redundancy you can copy blobs from secondary regions.

You can also copy snapshots to another storage account.

You can use response files to support automation.

You can use Shared Access Signatures.

Log files can be generated.

It works in the storage emulator.

Copying across storage accounts asynchronously enables scenarios, like:

Back up blobs

Migrate blobs to a different account

The asynchronous copy blob runs in the background using spare bandwidth capacity, so there is no SLA in terms of how fast a blob will be copied

Cross account copies involve an egress fee.

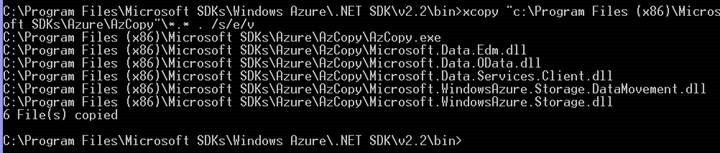

You can copy the binary to where they are needed:

Figure 3: Location of AzCopy Binaries

See https://blogs.msdn.com/b/windowsazurestorage/archive/2012/06/12/introducing-asynchronous-cross-account-copy-blob.aspx for more information.

To protect against the source changing during a copy, you can take a lease on the source blob. An infinite lease acts as a lock, which makes it easy for a client to hold on to the lease.

During a pending copy, the blob service ensures that no client requests can write to the destination blob.
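To make the asynchronous copy a little more concrete, here is a minimal Python sketch of the REST request behind it. It only builds the Copy Blob request; it does not send it. The account, container, and blob names are hypothetical, and authentication (an Authorization header or a SAS) is deliberately left out, so treat this as an illustration of the request shape rather than a working client.

```python
import urllib.request


def build_copy_blob_request(source_url: str, dest_url: str) -> urllib.request.Request:
    """Build (but do not send) a Copy Blob request addressed to the destination blob.

    Authentication is omitted on purpose; a real request also needs an
    Authorization header or a SAS token.
    """
    headers = {
        # The blob to copy from; for a cross-account copy it must be readable
        # by the service (for example, public or carrying a SAS).
        "x-ms-copy-source": source_url,
        # REST version that introduced the asynchronous cross-account copy.
        "x-ms-version": "2012-02-12",
    }
    return urllib.request.Request(dest_url, method="PUT", headers=headers)


# Hypothetical source and destination blobs in two different accounts.
req = build_copy_blob_request(
    "https://sourceaccount.blob.core.windows.net/photos/cat.jpg",
    "https://destaccount.blob.core.windows.net/backup/cat.jpg",
)
print(req.get_method(), req.full_url)
```

Because the copy runs in the background, the service answers with an `x-ms-copy-status` header of `pending` or `success`, and a client that cares about completion has to poll the destination blob's properties.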

How you get charged

There are 3 ways you get charged for Azure Storage.

| Storage capacity |

| Storage transactions (number of read and write operations to storage) |

| Data transferred (data egress). |

Replicating Data / Redundancy

Azure Storage lets you specify how data gets replicated. There are 4 tiers. They differ on how they work, how much they cost, and how they perform. You should also understand the difference between a primary and secondary region.

Primary and Secondary Regions

| Primary Region | Secondary Region |

| North Central US | South Central US |

| South Central US | North Central US |

| East US | West US |

| West US | East US |

| North Europe | West Europe |

| West Europe | North Europe |

| South East Asia | East Asia |

| East Asia | South East Asia |

| East China | North China |

| North China | East China |

Table of Features regarding Redundancy Strategy

| Redundancy Type | Description | Uptime | Cost for Block Blob |

| Locally Redundant Storage (LRS) | We store an equivalent of three replicas of data, synchronously replicated within a single region, to provide high durability. The reduction in price compared to GRS is around 23% to 34%, depending on how much data is stored. Some customers want their data replicated only within a single region because of their application's data governance requirements. Some applications have built their own geo-replication strategy and do not need geo-replication to be managed by the Windows Azure Storage service. | Can get 99.9% for read operations, 99.9% for write. | As of 8/10/2014, 50 to 100 TB / Month costs $0.023 per GB |

| Zone Redundant Storage (ZRS) | We store an equivalent of three replicas of data across 2 to 3 facilities within a single region or across regions for higher durability. | Can get 99.9% for read operations, 99.9% for write. | As of 8/10/2014, 50 to 100 TB / Month costs $0.029 per GB |

| Geographically Redundant Storage (GRS) | We store an equivalent of six replicas of data across 2 regions (three in each region) to provide additional durability. The data is committed to three replicas in the primary region and then asynchronously replicated to a secondary region hundreds of miles away from the primary. In the event of a complete regional outage or a regional disaster in which the primary location is not recoverable, your data is still durable. Keeping three replicas in each location (a total of 6 copies) ensures that each location can recover by itself from common failures (e.g., disk, node, rack, TOR failing). However, because geo-replication is delayed, in a regional disaster it is possible that changes not yet replicated to the secondary region will be lost if the data cannot be recovered from the primary region. For Azure Tables, there are no geo-replication ordering guarantees across objects with different Partition Key values, only within partitions. | Can get 99.9% for read operations, 99.9% for write. | As of 8/10/2014, 50 to 100 TB / Month costs $0.046 per GB |

| Read-Access Geographically Redundant Storage (RA-GRS) | In addition to geographically redundant storage, we provide read-only access to the storage account in the secondary region that will have an eventually consistent copy of the data in the primary storage. Customers can use this service to access their data when the storage account in the primary region is unavailable. | Can get 99.99% for read operations, 99.9% for write. | As of 8/10/2014, 50 to 100 TB / Month costs $0.059 per GB |
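With RA-GRS, the read-only copy in the secondary region is reached through its own endpoint, which is the account name with a `-secondary` suffix. Here is a small Python sketch that derives both endpoints; the account name `myaccount` is hypothetical, and the helper itself is just an illustration, not part of any SDK.

```python
def storage_endpoints(account: str, service: str = "blob") -> dict:
    """Return the primary and (RA-GRS) secondary endpoints for a storage service.

    `service` is one of "blob", "table", or "queue".
    """
    base = f"{service}.core.windows.net"
    return {
        "primary": f"https://{account}.{base}",
        # Read-only and eventually consistent; only available with RA-GRS.
        "secondary": f"https://{account}-secondary.{base}",
    }


# Hypothetical account name.
for name, url in storage_endpoints("myaccount").items():
    print(name, url)
```

A client could try a read against the primary first and fall back to the secondary when the primary is unavailable, keeping in mind that the secondary may lag behind.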

How do the failover scenarios work?

When a primary region goes down, how does Azure recover your data?

| Azure first tries to restore data before failing over to secondary data center | If disaster affects the primary storage location, Azure will first try to restore the data in the primary location using data from the secondary location |

| Why Azure tries to restore data instead of failing over to 2nd data center | Replication is asynchronous and secondary may not have the most up to date data. Customers generally prefer to have all data available |

| How failover works | A DNS change is made and is propagated. Existing Blob, Table, and Queue URIs will work without modification. All traffic will be redirected to the secondary storage account |

| Geo-replication SLA | There is currently no SLA on how long geo-replication takes. |

| Trigger a failover | Customers will have an ability to trigger a failover at an account level, which would then allow customers to control and test Recovery times. This is NOT available yet. |

Regarding blobs, what are the capacities?

This table describes the capacity, throughput, and max size of a blob.

| Capacity | Up to 500TB containers |

| Throughput | Up to 60 MB/s per blob |

| Object size | Up to 1 TB/blob |

Egress (data leaving a data center) Costs

It gets cheaper with scale. But it varies by region.

| Outbound Data Transfers | US West, US East, US North Central, US South Central, US East 2, US Central, Europe West, Europe North | Asia Pacific East, Asia Pacific Southeast, Japan East, Japan West | Brazil South |

| First 5 GB / Month | Free | Free | Free |

| 5 GB - 10 TB / Month | $0.12 per GB | $0.19 per GB | $0.25 per GB |

| Next 40 TB / Month | $0.09 per GB | $0.15 per GB | $0.23 per GB |

| Next 100 TB / Month | $0.07 per GB | $0.13 per GB | $0.21 per GB |
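The tiers above are marginal: each price applies only to the gigabytes that fall inside that band. Here is a short Python sketch of the arithmetic for the first column (US and Europe regions). It assumes 1 TB = 1024 GB, which the table does not state, and it refuses volumes beyond the 150 TB the table covers rather than guessing a price.

```python
TB = 1024  # GB per TB (an assumption; the table does not say 1000 or 1024)

# (cumulative upper bound of the tier in GB, price per GB in USD)
# taken from the US / Europe column of the table.
US_EUROPE_TIERS = [
    (5, 0.00),          # first 5 GB are free
    (10 * TB, 0.12),    # 5 GB - 10 TB
    (50 * TB, 0.09),    # next 40 TB
    (150 * TB, 0.07),   # next 100 TB
]


def egress_cost(gb: float, tiers=US_EUROPE_TIERS) -> float:
    """Monthly outbound data transfer cost in USD for `gb` gigabytes."""
    if gb < 0:
        raise ValueError("gb must be non-negative")
    if gb > tiers[-1][0]:
        raise ValueError("volume is beyond the tiers listed in the table")
    cost = 0.0
    lower = 0
    for upper, price in tiers:
        if gb <= lower:
            break
        # Charge only the portion of the volume that falls inside this tier.
        cost += (min(gb, upper) - lower) * price
        lower = upper
    return cost


print(round(egress_cost(105), 2))
```

For example, 105 GB in a month means 5 GB free and 100 GB at $0.12, or about $12.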

Questions about data leaving region

| Is data transfer between Azure services located within the same region charged? | No. For example, an Azure SQL database in the same region will not have any additional data transfer costs. |

| Is data transfer between Azure services located in two regions charged? | Yes. Outbound data transfer is charged at the normal rate and inbound data transfer is free. |

Transaction Costs

Every time you have read or write operations, there is a cost.

| Storage Transactions |

| $0.005 per 100,000 transactions across all Storage types |

| Transactions include both read and write operations to Storage. |
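To get a feel for how small this number usually is, here is a quick Python sketch of the arithmetic. It assumes the charge is prorated for partial blocks of 100,000 transactions, which the pricing table does not spell out.

```python
PRICE_PER_100K = 0.005  # USD per 100,000 transactions, from the table above


def transaction_cost(transactions: int) -> float:
    """Cost in USD for a number of storage transactions (reads plus writes).

    Assumes partial blocks of 100,000 are prorated.
    """
    if transactions < 0:
        raise ValueError("transactions must be non-negative")
    return transactions / 100_000 * PRICE_PER_100K


# A steady 10 requests per second over a 30-day month.
monthly = 10 * 60 * 60 * 24 * 30
print(monthly, round(transaction_cost(monthly), 2))
```

At a steady 10 requests per second, a 30-day month is roughly 26 million transactions, which comes to about $1.30.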

Azure Tables - Support for JSON

It used to be AtomPub. But JSON makes more sense for a variety of reasons.

JSON provides minimal metadata.

Dramatic reduction in payload size, which saves CPU cycles and supports higher scale and lower latency.
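A client opts into JSON, and chooses how much metadata comes back, through the `Accept` header. The Python sketch below builds (but does not send) a query against a table with the leanest setting. The account and table names are hypothetical, and authentication is left out.

```python
import urllib.request

METADATA_LEVELS = ("nometadata", "minimalmetadata", "fullmetadata")


def build_table_query(account: str, table: str, metadata: str = "nometadata") -> urllib.request.Request:
    """Build (but do not send) a Query Entities request that asks for JSON.

    Authentication is omitted; a real request needs an Authorization header or SAS.
    """
    if metadata not in METADATA_LEVELS:
        raise ValueError(f"metadata must be one of {METADATA_LEVELS}")
    url = f"https://{account}.table.core.windows.net/{table}()"
    headers = {
        # Less metadata means a smaller payload on the wire.
        "Accept": f"application/json;odata={metadata}",
        # JSON support arrived with this REST version.
        "x-ms-version": "2013-08-15",
    }
    return urllib.request.Request(url, headers=headers)


# Hypothetical account and table names.
req = build_table_query("myaccount", "customers")
print(req.get_method(), req.full_url, req.get_header("Accept"))
```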

Azure has an extensive set of SDKs

Of course, Azure Storage supports REST, meaning that you can talk to storage from any client that can use HTTP. But more specialized SDKs are also available.

| Java | There is full support and RTM bits for Java developers with Azure Storage. It is available at Github. Download samples |

| Android | Support for Azure Storage is now in preview mode. |

| .NET | Update release. Some code to work with Azure Storage Analytics, finding specific logs. You can get the new 4.0 version of our library on NuGet. |

| Windows Phone and Windows Runtime | Have been RTM'd. |

| Support for C++ | Is now in preview update. |

| Future support for iOS | Coming this year |

Performance of Geo Redundant versus Local Redundant

| Geo Redundant Storage (GRS) - Ingress | Geo Redundant Storage (GRS) - Egress | Local Redundant Storage (LRS) - Ingress | Local Redundant Storage (LRS) - Egress |

| 10 Gibps | 20 Gibps | 20 Gibps | 30 Gibps |

Note that geo-redundant storage lowers the ingress and egress bandwidth available for uploading and downloading content to and from Microsoft data centers. GRS does not, however, affect the latency of transactions made to the primary location.

Tooling to Manage Azure Storage

These are some free client tools that allow you to interact with data using Azure Storage Services.

| Azure Storage Explorer | https://azurestorageexplorer.codeplex.com/ |

| Azure Web Storage Explorer | https://storageexplorer.codeplex.com/ |

| Azure Explorer by Cerebrata | https://www.cerebrata.com/products/azure-explorer/introduction |

| Gladinet Cloud Drive | https://www.gladinet.com/ |

| Windows Azure SDK Storage Explorer for Visual Studio 2013 (Developed by Microsoft) | https://www.microsoft.com/en-us/download/details.aspx?id=40893 |

Understanding Cross Origin Resource Sharing (CORS)

Microsoft does support Cross Origin Resource Sharing (CORS) - Why this is so important

This support makes it possible for client-side web applications running from a specific domain to issue requests to another domain

This will allow JavaScript code loaded as part of https://www.contoso.com to issue requests at will to any other domain like https://www.northwindtraders.com

If CORS were not supported, you'd have to use a proxy for storage calls, limiting scale and adding an extra layer of work.

CORS makes it possible for web apps to directly place content to Azure Storage from your company web site.

More specifically, your end users could directly upload blobs using shared access signatures to a company storage account without the need of a proxy service.

You can therefore benefit from the massive scale of the Windows Azure Storage service without needing to scale out a service in order to deal with any increase in upload traffic to your website.

It is about granting a Web Browser write privilege to your company's storage account

Your web service does not need to be in the upload path of storage services

It works very simply - whenever a user is ready to upload, the JavaScript code would request a blob SAS URL to upload against from your service and then perform a PUT blob request against storage

As a precaution, limit the lifetime of the SAS token to the duration actually needed, and scope it to the specific container or blob being uploaded, in order to limit any security risk.
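In the browser this would be JavaScript, but the HTTP request is the same from any client. Here is a minimal Python sketch of the upload step: a PUT against the blob SAS URL handed out by your service. The SAS URL below is a made-up placeholder (the `sig` value is not real), and the sketch only builds the request without sending it.

```python
import urllib.request


def build_put_blob_request(sas_url: str, data: bytes,
                           content_type: str = "application/octet-stream") -> urllib.request.Request:
    """Build (but do not send) a Put Blob request against a blob SAS URL.

    The SAS query string in the URL carries the authorization, so no
    account key is needed on the client.
    """
    headers = {
        # Required by Put Blob: tells the service which kind of blob to create.
        "x-ms-blob-type": "BlockBlob",
        "Content-Type": content_type,
    }
    return urllib.request.Request(sas_url, data=data, method="PUT", headers=headers)


# Hypothetical SAS URL, as it might be returned by your own web service.
sas_url = "https://myaccount.blob.core.windows.net/uploads/photo.jpg?sv=2013-08-15&sr=b&sp=w&sig=PLACEHOLDER"
req = build_put_blob_request(sas_url, b"...image bytes...", "image/jpeg")
print(req.get_method(), req.get_header("X-ms-blob-type"))
```

Note that your web service only issues the SAS URL; the bytes go straight from the client to storage.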

Another scenario where this is useful is allowing users to edit data in a browser and persisting the data to Windows Azure Tables, which is a dictionary-like persistent store.

- How to save data to Azure Tables from a browser

- Extended sample on leveraging CORS

Here is some guidance on how to enable CORS

- Enabling CORS

- Using Client Library to enable CORS - Windows Azure Storage Client .NET Library 3.0
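Under the covers, enabling CORS means sending a set of CORS rules to the storage service as part of its service properties. Here is a Python sketch that builds the XML for a single rule using the standard library. The origin, methods, and header patterns are example values I picked for illustration, and the sketch stops at producing the XML; it does not send it to the service.

```python
import xml.etree.ElementTree as ET


def cors_rule_xml(origins, methods, max_age_seconds=3600,
                  allowed_headers=("*",), exposed_headers=("x-ms-*",)) -> str:
    """Build a service-properties XML body containing one CORS rule."""
    props = ET.Element("StorageServiceProperties")
    cors = ET.SubElement(props, "Cors")
    rule = ET.SubElement(cors, "CorsRule")
    # Each element holds a comma-separated list.
    ET.SubElement(rule, "AllowedOrigins").text = ",".join(origins)
    ET.SubElement(rule, "AllowedMethods").text = ",".join(methods)
    # How long the browser may cache the preflight response.
    ET.SubElement(rule, "MaxAgeInSeconds").text = str(max_age_seconds)
    ET.SubElement(rule, "ExposedHeaders").text = ",".join(exposed_headers)
    ET.SubElement(rule, "AllowedHeaders").text = ",".join(allowed_headers)
    return ET.tostring(props, encoding="unicode")


# Example values: allow uploads from the company web site only.
print(cors_rule_xml(["https://www.contoso.com"], ["PUT", "GET"]))
```

Listing only your own origin, and only the methods you need, keeps the rule as narrow as the SAS tokens you hand out.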

Conclusion

I hope that I have surfaced some key facts that are buried in blogs and in on-line documentation.