Azure Availability Zones Quick Tour and Guide

Three years ago, I started working with a large Microsoft partner on moving infrastructure and services into Azure. At that time, it was not possible to implement all the required functionality because some features were missing, and one of these was Availability Zones (AZ). It was not a hard blocker initially: we slightly modified the architecture to achieve what the partner requested, and postponed the AZ discussion until a few months ago. The first lesson we learned is to ask: do you really need AZ? Is it a critical feature you cannot avoid using? Is it a real hard blocker for customers that are already using it with other Cloud providers? At least in my specific case, the answer to all these questions is NO, but it is nice to have. Azure Availability Zones (AZ) is a great feature that aims to augment Azure capabilities and raise the high-availability bar. The first step is to understand what it is, its capabilities and limitations, why you might want to use it, and which problems it aims to solve. What I’m going to describe here are the core concepts and discussion points I used when talking with my partner, and then what I used to modify that specific architecture to include AZ in the design. Unless stated otherwise, I will talk in the context of Virtual Machines (VM), since my project was IaaS scoped.

Where do Availability Zones sit?

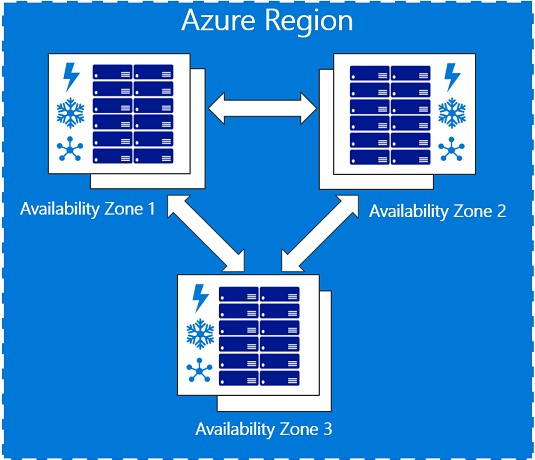

For those of you who are not aware of what AZ is, let me include a good short definition: Availability Zones (AZ) are unique physical locations with independent power, network, and cooling. Each Availability Zone is comprised of one or more datacenters and houses infrastructure to support highly available, mission critical applications. AZ are tolerant to datacenter failures through redundancy and logical isolation of services. I already authored an article introducing this feature, so you may want to read it before going forward with the current one:

Why Azure Availability Zones

https://blogs.msdn.microsoft.com/igorpag/2017/10/08/why-azure-availability-zones

Azure today is available in 50 regions worldwide, as you can see in the map below. In the same picture (live link here) you can see both announced and already available regions. On this page you can also find out in which regions the AZ feature is already available (France Central and Central US, at the time of writing this article), and where it is currently in preview (East US 2, West Europe, Southeast Asia).

But where exactly does AZ sit in the general Azure hierarchy? Using the map above and the page I pulled it from, we can describe the following hierarchy:

- Geographies: Azure regions are currently organized into 4 geographies (Americas, Europe, Asia Pacific, Middle East and Africa). An Azure geography ensures that data residency, sovereignty, compliance, and resiliency requirements are honored within geographical boundaries.

- Regions: A region is a set of datacenters deployed within a latency-defined perimeter and connected through a dedicated regional low-latency network. Inside each geography, there are at least two regions to provide geo disaster recovery inside the same geopolitical boundary (Brazil South is an exception).

- Availability Zones: AZ are physically separate locations within an Azure region. Each Availability Zone is made up of one or more datacenters equipped with independent power, cooling, and networking. For each region enabled for AZ, there are three Availability Zones.

- Datacenters: physical buildings where compute, network and storage resources are hosted, and services are operated. Inside each AZ, where present, there is at least one Azure datacenter.

- Clusters: group of racks used to organize compute, storage and network resources inside each datacenter.

- Racks: physical steel and electronic framework that is designed to house servers, networking devices, cables. Each rack includes several physical blade servers.

I often hear this nice question from my customers and partners: why does Azure provide three zones? Wouldn’t two zones be enough? The reason is pretty simple: for any kind of software or service that requires a quorum mechanism, for example SQL Server or Cassandra, two zones are not enough to always guarantee a majority when a single one is down. Think about three nodes: if you have three zones, you can deploy one node in each zone, and still have a majority if a single zone/node is down. But what happens if you have only two zones? You will have to place two nodes into the same zone, and if this specific zone goes down, you will lose the majority and your service will stop. A nice example for SQL Server, deployed using AlwaysOn Availability Group across AZ, is reported below:

SQL Server 2016 AlwaysOn with Managed Disks in Availability Zones

https://azure.microsoft.com/en-us/resources/templates/sql-alwayson-md-mult-subnets-zones
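The quorum argument above can be checked with a little arithmetic. The function below is only an illustrative sketch: `Test-QuorumSurvivesZoneLoss` and its `NodesPerZone` parameter are hypothetical names, and the model simply assumes that a cluster stays up as long as a strict majority of its nodes is still running.

```powershell
# Returns $true if a strict majority of nodes survives the loss of any single zone.
# $NodesPerZone is a hypothetical layout: one entry per zone, value = nodes placed there.
function Test-QuorumSurvivesZoneLoss {
    param([int[]]$NodesPerZone)

    $total    = ($NodesPerZone | Measure-Object -Sum).Sum
    $majority = [math]::Floor($total / 2) + 1

    foreach ($lost in $NodesPerZone) {
        # Nodes still running if the zone holding $lost nodes goes down
        if (($total - $lost) -lt $majority) { return $false }
    }
    return $true
}

Test-QuorumSurvivesZoneLoss -NodesPerZone 1, 1, 1   # three zones, one node each: True
Test-QuorumSurvivesZoneLoss -NodesPerZone 2, 1      # two zones, three nodes: False
```

With two zones, one of them always holds two of the three nodes, so losing that zone leaves a single node and no majority.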

Why should I use Availability Zones?

AZ has been built to make the Azure resiliency strategy better and more comprehensive. Let me re-use a sentence from this blog post that summarizes it perfectly:

Azure now offers the most comprehensive resiliency strategy in the industry from mitigating rack level failures with availability sets to protecting against large scale events with failover to separate regions. Within a region, Availability Zones increase fault tolerance with physically separated locations, each with independent power, network, and cooling....

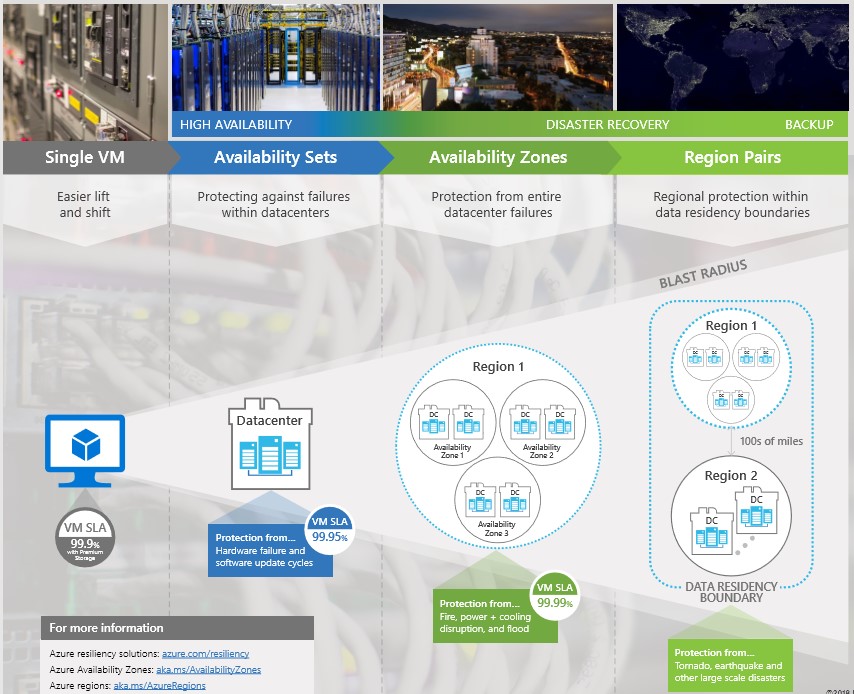

While regions are hundreds of miles apart and provide protection from wide-scale natural disasters, AZs are much closer to each other and therefore not suitable for protecting against this kind of event. If you want to build your geo-DR story, you should still use different Azure regions. On the other hand, this relative proximity should permit you to replicate your data synchronously (RPO = 0) across different physical, isolated and independent locations, protecting your application or service from datacenter-wide failures. With AZ, what Azure can now provide in terms of High-Availability SLA has slightly changed; let me recap the full spectrum using the graphic below:

As you can see, you can now have a 99.99% HA SLA for your VMs, compared to the lower 99.95% provided by the traditional Availability Set (AS) mechanism. AS is still supported, but cannot be used in conjunction with AZ, so you have to decide which option you want to use: at VM creation time, you need to place your VM into an AZ or into an AS, and you will not be allowed to move it later, at least as the technology works today. For an overview of the mechanisms that you can use to manage availability of your VMs, you can read the article below:

Manage the availability of Windows virtual machines in Azure

/en-us/azure/virtual-machines/windows/manage-availability
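To get a feeling for what the difference between 99.95% and 99.99% means in practice, the percentages can be translated into a downtime budget. This is a rough sketch with a hypothetical helper name, and it annualizes the figures purely for illustration; the official SLA documents define the actual measurement period and conditions.

```powershell
# Maximum downtime per year allowed by an availability percentage (illustrative only).
function Get-AllowedDowntimeMinutes {
    param([double]$SlaPercent)

    $minutesPerYear = 365 * 24 * 60   # 525,600 minutes, ignoring leap years
    return [math]::Round($minutesPerYear * (1 - $SlaPercent / 100), 1)
}

Get-AllowedDowntimeMinutes -SlaPercent 99.95   # Availability Set
Get-AllowedDowntimeMinutes -SlaPercent 99.99   # Availability Zones
```

Under these assumptions the budget shrinks from roughly 263 minutes per year to roughly 53 minutes per year.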

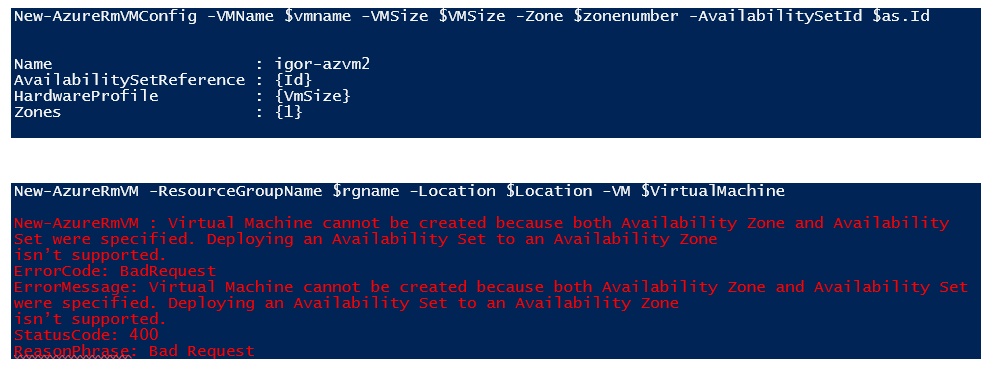

If you decide to use AZ, you will *not* formally have the concepts of Update Domains (UDs) and Fault Domains (FDs). In this case, the AZs will effectively be your UDs and FDs; remember that these are different and independent Azure locations, from both a physical and a logical perspective. If you try to put your VM into both an AS and an AZ, you will get an error. You can see this by looking at the PowerShell cmdlet "New-AzureRmVMConfig": as you can see below, it is here that you can specify a value for either the "-Zone" or the "-AvailabilitySetId" parameter, but not both at the same time. You will not get an error when executing this cmdlet, but later, when you try to instantiate the VM object with “New-AzureRmVM”:

The screenshot above shows the following error message:

Virtual Machine cannot be created because both Availability Zone and Availability Set were specified. Deploying an Availability Set to an Availability Zone isn’t supported.
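As a minimal sketch of the zonal variant, the VM configuration is built with "-Zone" only and "-AvailabilitySetId" is left out. The VM name and size below are placeholder values, and the snippet assumes the AzureRM module is installed.

```powershell
# Placeholder values, not taken from the original project
$vmName = "myZonalVM"
$vmSize = "Standard_DS1_v2"

# Zonal VM: pass -Zone and do not pass -AvailabilitySetId
$vmConfig = New-AzureRmVMConfig -VMName $vmName -VMSize $vmSize -Zone 2

# The requested logical zone is recorded on the configuration object
$vmConfig.Zones
```

The rest of the configuration (OS, image, NIC) is then added as usual before calling “New-AzureRmVM”.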

Are Availability Zones only for VMs?

The short answer is “NO”; the long answer starts now. As I stated at the beginning, in this article I’m mainly talking about the Virtual Machine (IaaS) context, but let me briefly describe where else AZ is expanding its coverage. I would not be surprised at all to learn about upcoming new Azure resources and services. This is only my opinion, but AZ is a great mechanism to improve the resiliency of distributed services, and I can imagine Azure will make more of them AZ enabled.

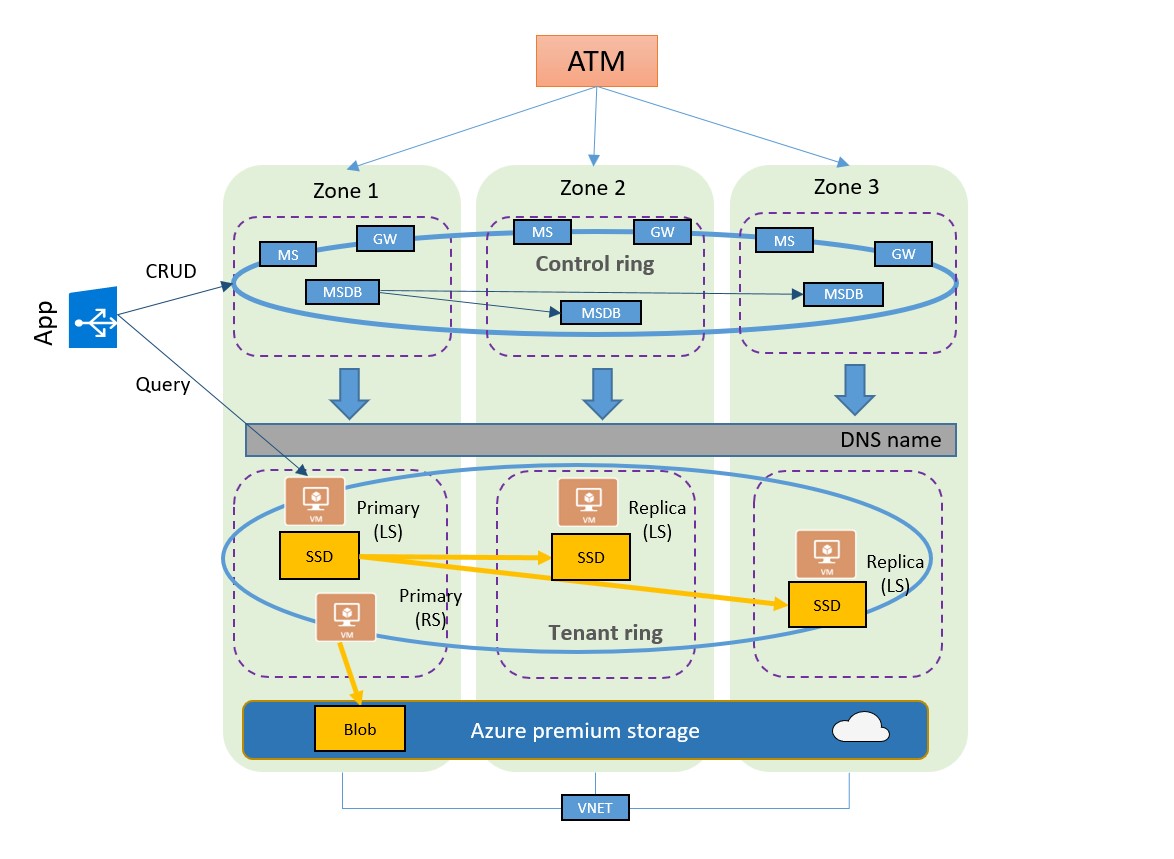

Azure SQL Database (SQLDB)

It is still in preview, even though AZ is generally available in some regions. The main article is reported below, and the section of interest for us is “Zone redundant configuration”:

High-availability and Azure SQL Database

/en-us/azure/sql-database/sql-database-high-availability

An important thing to keep in mind is that SQLDB with AZ is only available with the “Premium” tier. Why? If you are interested in the details, I recommend you read the article above. If not, let me briefly recap here: only with the “Premium” SQLDB tier do you have three different active SQLDB instances, deployed across all 3 zones, with database storage on local ephemeral SSD storage, synchronously replicated. The HA SLA is the same as without AZ, that is 99.99%, with RPO = 0 and RTO = 30s. For automatic backups, ZRS storage is used. NOTE: You don’t have control over how many and which zones your SQLDB nodes will be placed in.

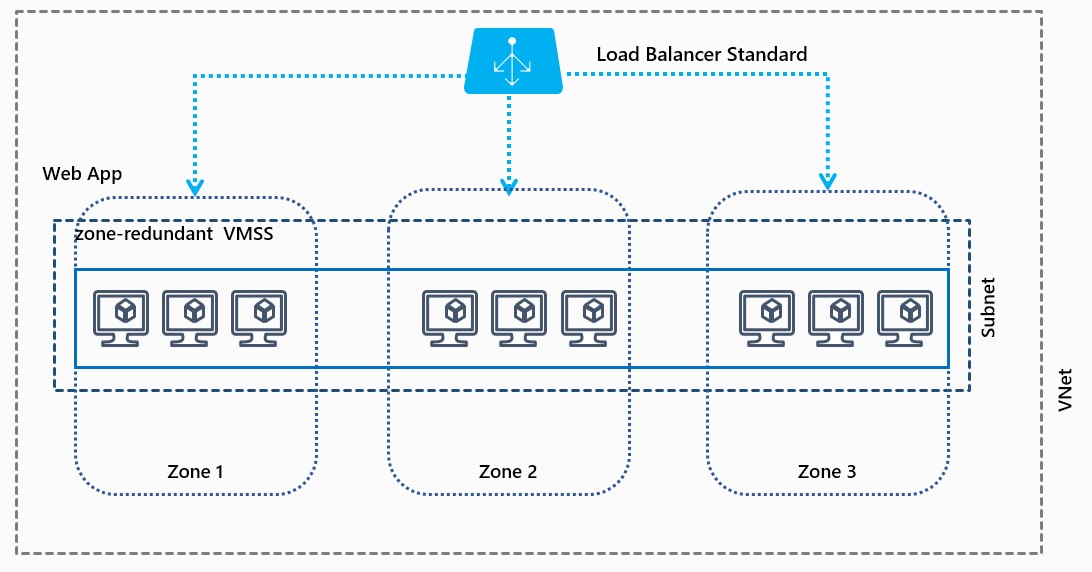

Virtual Machine Scale Set (VMSS)

VMSS has a nice and deep relationship with AZ; let me explain why. First, with VMSS you can use AZ and at the same time still use Availability Set (AS). Once again, this is *not* possible with standard Azure VMs.

Create a virtual machine scale set that uses Availability Zones

/en-us/azure/virtual-machine-scale-sets/virtual-machine-scale-sets-use-availability-zones

As explained in the article above, you have the option to deploy VMSS with "Max Spreading" or "Static 5 fault domain spreading". With the first option, the scale set spreads your VMs across as many fault domains as possible within each zone. This spreading could be across greater or fewer than five fault domains per zone. With the second option, the scale set spreads your VMs across exactly five fault domains per zone. Another cool VMSS feature that can be used in conjunction with AZ is “Placement Group”. A placement group is a construct similar to an Azure AS, with its own fault domains and upgrade domains. By default, a scale set consists of a single placement group with a maximum size of 100 VMs. If a scale set is composed of a single placement group, it has a range of 0-100 VMs only; with multiple placement groups you can reach 1000.

The last evidence of good integration between VMSS and AZ is the zone balancing options you have. For VMSS deployed across multiple zones, you also have the option of choosing "Best effort zone balance" or "Strict zone balance". A scale set is considered "balanced" if the number of VMs in each zone is within one of the number of VMs in all other zones for the scale set. With best-effort zone balance, the scale set attempts to scale in and out while maintaining balance. With strict zone balance, the scale set fails any attempt to scale in or out that would leave it unbalanced.
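The definition of "balanced" given above is easy to express in code. The following sketch uses a hypothetical function name and takes the number of VMs per zone as input; it only illustrates the rule and does not call any Azure API.

```powershell
# $VmsPerZone: hypothetical count of VMs in each zone of the scale set.
function Test-ZoneBalanced {
    param([int[]]$VmsPerZone)

    $stats = $VmsPerZone | Measure-Object -Minimum -Maximum
    # Balanced when no zone differs from any other zone by more than one VM
    return (($stats.Maximum - $stats.Minimum) -le 1)
}

Test-ZoneBalanced -VmsPerZone 2, 3, 3   # True
Test-ZoneBalanced -VmsPerZone 1, 3, 3   # False
```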

Where am I placing my resources?

I decided to group here a series of questions related to AZ selection and placement. I noticed some confusion here among customers and partners, so I hope that what I’m writing will be useful.

- Is usage of AZ mandatory? NO, you can continue deploying your VMs as usual. Then, where will my resource be deployed? You should not worry about this: you only indicate the “region” in your request, and Azure will place your VM in a datacenter inside that region. NOTE: AZ related parameters in ARM templates, PowerShell cmdlets and Azure CLI are all optional.

- Is there a default AZ if not specified? NO, apparently there is no way to set a subscription wide default AZ. If you want to use one, you must specify which one.

- Can I specify the physical AZ? NO, you can specify only a logical AZ as “1”, “2” or “3”. How logical zones are translated to physical zones and datacenters is managed automatically by the Azure platform.

- Is the logical to physical mapping constant across Azure subscriptions? NO, I didn’t find any official statement or SLA about providing the same mapping for different subscriptions, even under the same Azure Active Directory tenant.

- What is the distance (and therefore the latency) between AZs? Officially, there is no indication of the distance between different AZs, nor a network latency SLA, only a general indication of a “less than 2ms” boundary inside the same region.

- Is it possible to check in which AZ my VM is deployed? YES, you can check in which zone your VM is deployed by looking at the VM properties using the Azure Portal, PowerShell and the Azure Instance Metadata Service (see JSON fragment example below, more details later in this article).
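For the last question, a query against the Azure Instance Metadata Service could look like the sketch below. It must be run from inside the VM, and the API version used here is an assumption on my side (the zone field should be exposed starting from that version); adjust it to whatever your environment supports.

```powershell
# Run from inside the VM: the metadata endpoint is only reachable from the guest OS.
# The api-version value is an assumption; newer versions can be used as well.
$uri = "http://169.254.169.254/metadata/instance/compute?api-version=2017-12-01"
$compute = Invoke-RestMethod -Method Get -Uri $uri -Headers @{ Metadata = "true" }

# "zone" holds the logical zone number as a string; it is empty for VMs not placed in a zone
$compute | Select-Object name, location, zone
```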

What do I need to create my VM in an Availability Zone?

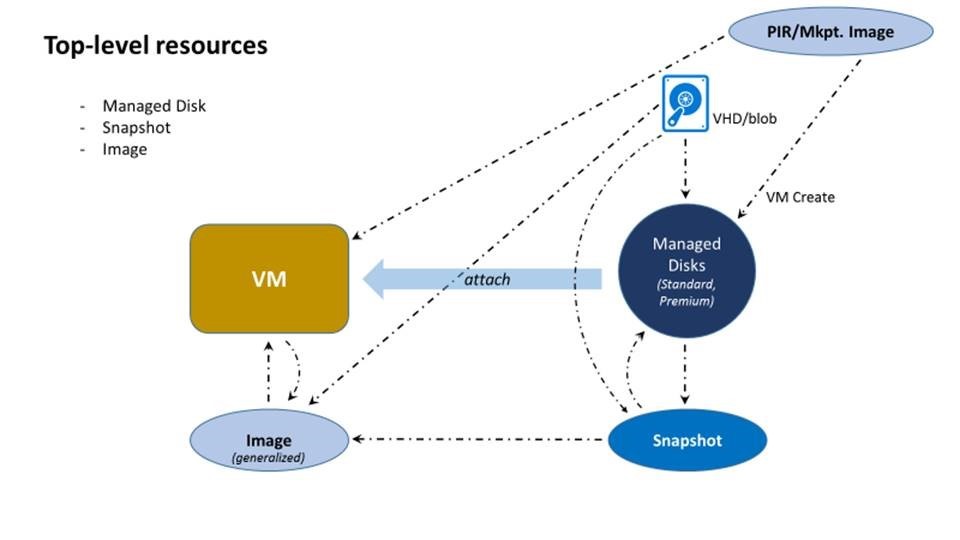

If you want to create and access a VM in Azure, you would normally need to first define the “core” resources it depends on: a public IP (VIP), maybe a Load Balancer (LB) tied to this public IP, a network interface card object (NIC), a Virtual Network and subnet (VNET, Subnet) and a disk (Managed Disk). This remains the same with AZ. In addition to these resources, you may also need an Image to deploy your custom OS, and a Snapshot taken from your VM disk for backup/recovery purposes. With the advent of AZ, the Azure team applied several underlying changes to all these core resources to make running Azure VMs, and other services, in AZ possible and efficient. Before briefly describing each of them, let me clarify some new terminology that you may find in the Azure documentation: “zone redundant” means a resource that is deployed across at least two AZs and is therefore able to survive the loss of a single zone. “Zonal”, instead, means a resource that is deployed into a single, specific AZ, and will not survive the loss of that zone. Keep in mind that this distinction applies only to resources that are “AZ-aware”. There are resources that are not deployed, or deployable, into an Availability Zone. For example, an Azure VM per se is a “zonal” resource because it is a monolithic object that can be deployed only into a single AZ. But it can also be created without mentioning AZ at all, as you have always done before this feature. An Azure Load Balancer, instead, can be created either as a “zonal” or as a “zone redundant” resource.

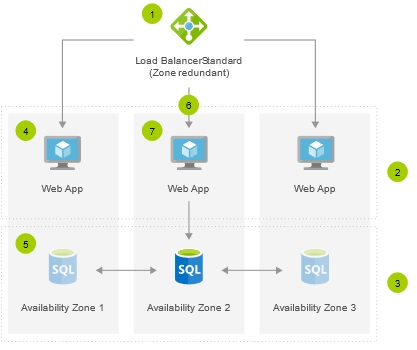

Load Balancer

Azure recently introduced a new type/SKU of load balancer (LB) called Azure Standard Load Balancer, which has lots of nice new features; one of these is support for Availability Zones (AZ). Pay attention to what I have just written: I said “support” because an LB resource itself is regional. Think of it as already zone-redundant, even without leveraging the AZ capability. It is correct to say that an LB can support zonal or zone-redundant scenarios, depending on your needs and configuration. Both public and internal LBs support both scenarios and, with internal and external frontend IP configurations, can direct traffic across zones as needed (cross-zone load balancing). These two scenarios are reported below as examples:

Create a public Load Balancer Standard with zonal frontend using Azure CLI

/en-us/azure/load-balancer/load-balancer-standard-public-zonal-cli

Load balance VMs across all availability zones using Azure CLI

/en-us/azure/load-balancer/load-balancer-standard-public-zone-redundant-cli

Public IP

Along with the Load Balancer described above, there is also a Standard SKU for Public IP (VIP). If you want or need to work with a Standard LB for AZ, you also need to use a Standard Public IP; you cannot mix the SKUs here. With Standard LB and VIP you can now enable zone redundancy on your public and internal frontends using a single IP address, or tie their frontend IP addresses to a specific zone. This type of cross-zone load balancing can address any VM or VMSS in a region. A zone-redundant IP address is served (advertised) by the load balancer in every zone, since the data path is anycast within the region. In the unlikely event that a zone goes down completely, the load balancer is able to serve the traffic from instances in another zone very quickly.
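The two flavors of Standard Public IP could be created along the lines of the sketch below. Resource group, names and location are placeholders, and the snippet relies on my understanding that, at the time of writing, a Standard SKU public IP created without a zone is zone-redundant, while passing "-Zone" pins it to one zone; please verify this against the current documentation before relying on it.

```powershell
# Placeholder values; requires the AzureRM module and a logged-in session.
$rg       = "myResourceGroup"
$location = "francecentral"

# Zone-redundant frontend: Standard SKU, no zone specified
$zrIp = New-AzureRmPublicIpAddress -ResourceGroupName $rg -Name "myZoneRedundantIP" `
    -Location $location -Sku Standard -AllocationMethod Static

# Zonal frontend: Standard SKU tied to logical zone 1
$zonalIp = New-AzureRmPublicIpAddress -ResourceGroupName $rg -Name "myZonalIP" `
    -Location $location -Sku Standard -AllocationMethod Static -Zone 1
```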

Virtual Network, Subnet, NIC, NSG and UDR

You may be surprised if you are not familiar with Azure and are coming from other Cloud providers that have an AZ feature. Azure VNETs and their subnets are regional by design, and do not require any AZ specification when you create them. Additionally, there is no requirement on subnet placement: both VNET and subnet can include IP addresses for resources across AZs. Azure has supported region wide VNETs since 2014, as originally announced here. Based on this, there is no specific impact on the definition and behavior of Network Security Groups (NSGs) and User Defined Routes (UDRs) when an AZ design is applied. The same goes for the Network Interface Card (NIC) object.

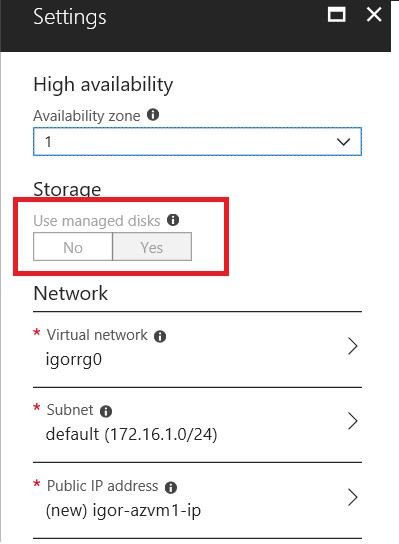

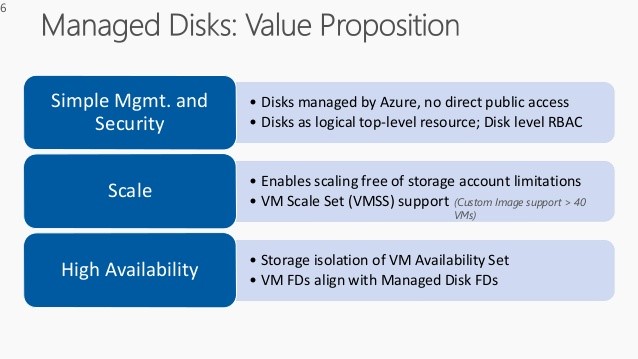

Managed Disk & Storage

A VM needs at least an OS disk to boot from; additional data disks are possible but not required. There are several types of disks available in Azure, but if you want to attach one to a “zonal” VM, you need to use the Managed Disk (MD) type, either Premium or Standard; you cannot use the legacy unmanaged version. If you are using the Azure Portal, the “Storage” option will be grayed out as soon as you select a zone for your VM placement. Once again, the Availability Zones feature requires Managed Disks for VMs.

Please remember that the MD feature only supports Locally Redundant Storage (LRS) as the replication option. When you create a VM from the Azure Portal, or for example using PowerShell, an MD for the OS will be implicitly created and co-located in the same AZ chosen for the placement of compute resources. An MD can also be created as a standalone object; the syntax of the creation commands/scripts has been augmented to allow AZ specification. An MD is always a “zonal” object and cannot be directly moved/migrated. Be aware that you cannot attach an MD created in AZ(1) to a VM created in AZ(2).

Images & Snapshots

Images and Snapshots (for Managed Disks) are not strictly required, but you may need to use these objects. If you want to use an image different from the ones available in the Azure Gallery, you need to build your own, store it in Azure storage as an image object, and then deploy new VMs using it as the template. For Snapshots, the usage and purpose are a bit less evident: whenever you take a snapshot of your VM Managed Disk, it will be created on ZRS storage and synchronously replicated across all storage zones. This is useful because MD storage can be neither ZRS nor GRS; more details in the next section. These two objects are now “zone-redundant”.

General availability: Azure zone-redundant snapshots and images for managed disks

https://azure.microsoft.com/en-us/updates/azure-managed-snapshots-images-ga

Which kind of storage should I use for my zoned VM?

I decided to dedicate a specific section to this topic because there are some key aspects that need to be clarified. I’m not going to disclose any internal information, everything is in the Azure public documentation, but you may find it difficult to connect all the dots and obtain a single clear picture. First, let me clarify about ZRS: Zone Redundant Storage was released as v1 a long time ago. This is different from what we recently released as v2 on March 30th, 2018:

General availability: Zone-redundant storage

https://azure.microsoft.com/en-us/updates/azure-zrs

Now v1, renamed “ZRS Classic”, is officially deprecated and will be retired on March 31, 2021. It was originally intended to be used only for block blobs in general-purpose V1 (GPv1) storage accounts. ZRS Classic asynchronously replicates data across data centers within one to two regions. The new v2, instead, uses synchronous replication between all AZs in the region. Zone Redundant Storage (ZRS) synchronously replicates your data across three storage clusters in a single region. Each storage cluster is physically separated from the others and resides in its own availability zone (AZ). Each availability zone, and the ZRS cluster within it, is autonomous, with separate utilities and networking capabilities. Now that you are fully aware of the goodness of ZRS v2, you may be tempted to use it to host VM OS and/or Data disks. Since data replication is synchronous, and therefore there is no data loss, in case of a zone disaster you may want to mount the disks to another VM in another AZ. Unfortunately, ZRS v2 does *not* support disk page blobs, as stated in the article below:

ZRS currently supports standard, general-purpose v2 (GPv2) account types. ZRS is available for block blobs, non-disk page blobs, files, tables, and queues.

Zone-redundant storage (ZRS): Highly available Azure Storage applications

/en-us/azure/storage/common/storage-redundancy-zrs

At this point, you may ask yourself which storage/disk options you should use for your zoned VMs. As I briefly introduced in the previous section, you need to use Managed Disks (MD).

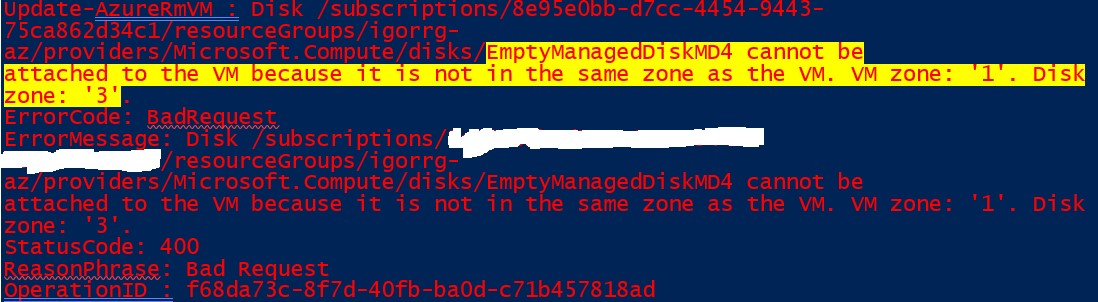

Premium will give you higher performance with SSD based storage, guaranteed IOPS and bandwidth, while Standard will be a cheaper choice. If you create an MD in AZ(1), you will have the benefit of the three local copies, but always remember that an MD does not replicate data beyond the local AZ(1). Once an MD is created in a certain zone, it will *not* be possible to mount it to a VM located in another zone; compute and storage here must be aligned (see error message below):

“EmptyManagedDiskMD4 cannot be attached to the VM because it is not in the same zone as the VM”
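To avoid running into the error above, the zone of the VM and the zone of the disk can be compared before attaching. This is only a sketch: the resource group, VM and disk names are placeholders, and the LUN value is arbitrary.

```powershell
# Placeholder names; requires the AzureRM module and a logged-in session.
$rg   = "myResourceGroup"
$vm   = Get-AzureRmVM   -ResourceGroupName $rg -Name "myZonalVM"
$disk = Get-AzureRmDisk -ResourceGroupName $rg -DiskName "myDataDisk"

# Both objects expose a Zones list holding at most one logical zone
if ("$($vm.Zones)" -eq "$($disk.Zones)") {
    # Same zone: attach the existing Managed Disk and push the change to Azure
    $vm = Add-AzureRmVMDataDisk -VM $vm -ManagedDiskId $disk.Id -Lun 1 -CreateOption Attach
    Update-AzureRmVM -ResourceGroupName $rg -VM $vm
}
else {
    Write-Warning "Disk is in zone '$($disk.Zones)' but VM is in zone '$($vm.Zones)': attach would fail."
}
```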

Since you are going to use VMs in each zone with local disks, how would you replicate data? Since we are in the IaaS context, it all depends on the application that you install inside the VMs. Once again, Azure does not replicate the VM data in this context (i.e. data stored in disks) across zones. Customers can benefit from AZ if their application replicates data to different zones. For example, they can use a SQL Server Always-On Availability Group (AG) to host the primary replica (the VM where data is written) in AZ(1), and then host one secondary replica in AZ(2) and another in AZ(3). SQL Always-On replicates the data from the primary replica to the secondary replicas. If AZ(1) goes down, the SQL Server AG will automatically fail over to either AZ(2) or AZ(3). However, Azure automatically replicates the zone-redundant snapshots of Managed Disks, which ensures that snapshots are not affected by a zone failure in a region. For example, if a snapshot is created of a disk in AZ(1) and that zone goes down, the snapshot can be used to restore the VM in AZ(2) or AZ(3). Below are a couple of excellent articles to understand storage performance and replication strategies.

Azure Storage replication

/en-us/azure/storage/common/storage-redundancy

Azure Storage Scalability and Performance Targets

/en-us/azure/storage/common/storage-scalability-targets

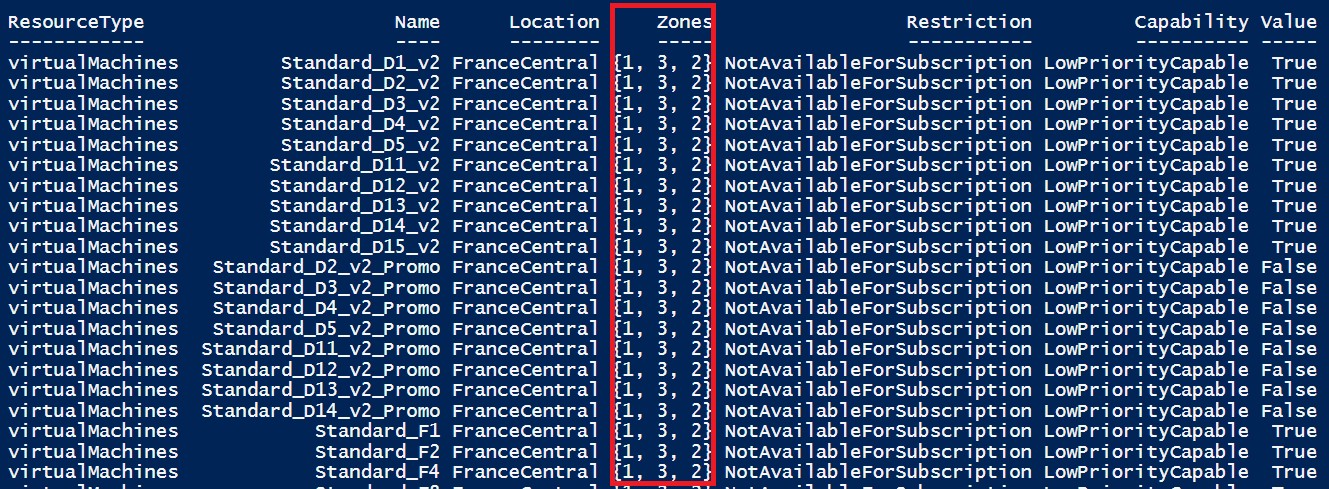

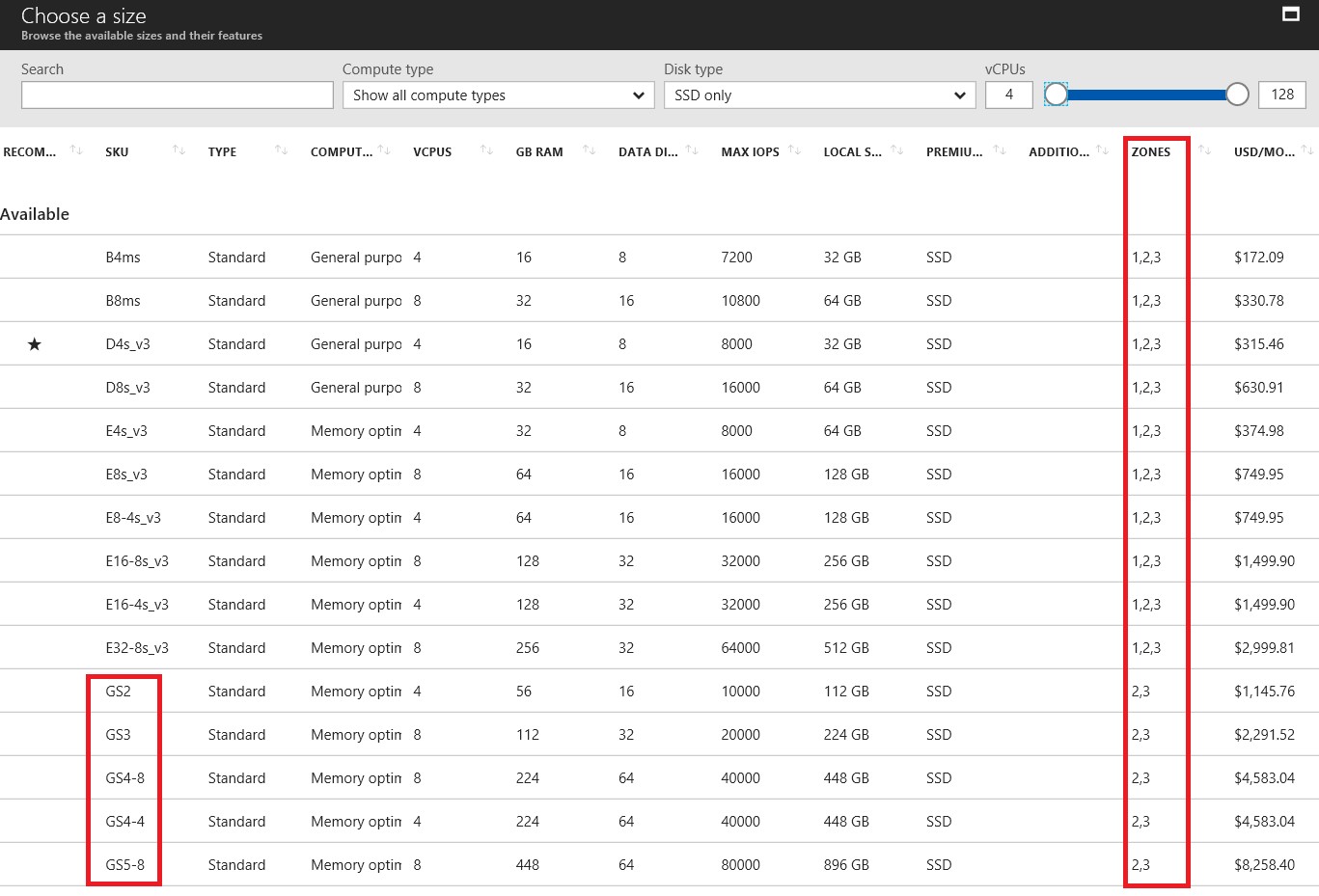

Where is my VM SKU in AZ?

Not all VM SKUs are currently available for zonal VM deployment, but this gap will shrink over time and more options will progressively become available. I tried to find a single central link listing SKU availability per region and per zone, but did not find one at the moment; it would probably be too hard to maintain. What you can use instead is the Azure Portal page where you specify the SKU/size when creating a VM, or the nice PowerShell cmdlet “Get-AzureRmComputeResourceSku” as shown below:

Get-AzureRmComputeResourceSku | where {$_.Locations.Contains("FranceCentral") `
    -and $_.ResourceType.Equals("virtualMachines") `
    -and $_.LocationInfo[0].Zones -ne $null }

Pay attention to the “Restriction” column: as you can see in the screenshot above, I currently don’t have provisioned capacity in that zone, in that region, for my subscription. If you want to list all regions and zones where your subscription can deploy VMs in AZ, you can use a slightly modified command:

Get-AzureRmComputeResourceSku | where {$_.ResourceType.Equals("virtualMachines") `
    -and $_.LocationInfo[0].Zones -ne $null `
    -and $_.Restrictions.Count -eq 0}

If you encounter the same restriction, you should open a Support Ticket through the Azure Portal and ask for a quota increase. Be sure to specify that you want this capacity in the AZ. Using the Azure Portal is easy because, based on your selection, the available SKUs and zones are shown for your convenience:

It is worth noting that some SKUs may not be available in all the zones, as you can see in the screenshot above for the GS SKU, available only in zones “2” and “3” but not in “1”. If you scroll down the list, you will also find two additional sections: the first one will tell you for which SKUs your subscription is enabled but you don’t have enough quota, and the second one will provide information on SKUs not available at all in the specific region and zone.
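If you prefer a compact view of which zones each SKU is offered in, the output of the same cmdlet can be reshaped. The sketch below builds only on the properties already used in the commands above; the region name is just an example, and the snippet assumes the AzureRM module and a logged-in session.

```powershell
# One row per zone-enabled VM SKU in the example region, with its zones joined in one column
Get-AzureRmComputeResourceSku |
    Where-Object { $_.ResourceType -eq "virtualMachines" -and
                   $_.Locations -contains "FranceCentral" -and
                   $_.LocationInfo[0].Zones.Count -gt 0 } |
    Select-Object Name,
        @{ Name = "Zones"; Expression = { ($_.LocationInfo[0].Zones | Sort-Object) -join "," } } |
    Sort-Object Name
```

A SKU offered in only two zones, like the GS case mentioned above, then stands out immediately in the "Zones" column.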

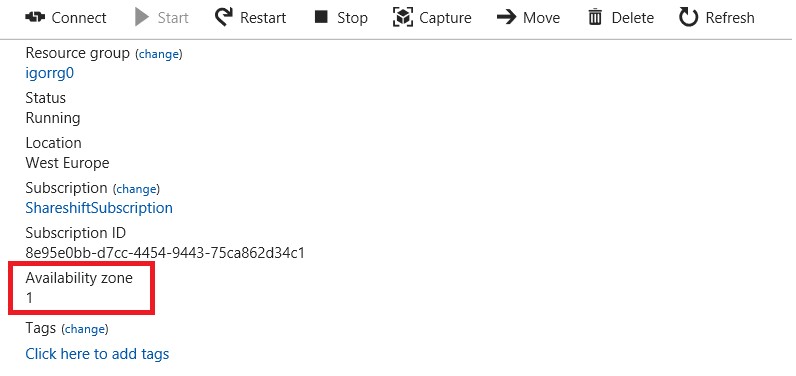

How can I check the Zone property of my VM?

Azure Portal properties in the “Overview” pane:

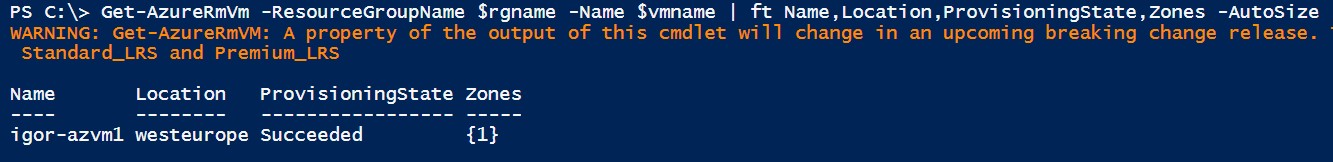

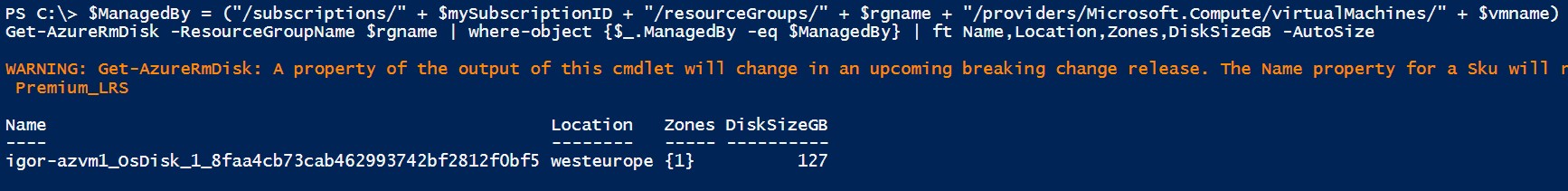

In PowerShell it is easy to find the zone property for the VM object and all the Managed Disks in a Resource Group, look at the examples below. For now, I don’t see any PowerShell cmdlet dedicated to Availability Zones.
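A minimal way to do this, assuming the AzureRM module and a placeholder resource group name, is to project the "Zones" property that both VM and Managed Disk objects expose:

```powershell
# Placeholder resource group name
$rg = "myResourceGroup"

# Zone of every VM in the resource group (empty for VMs not placed in a zone)
Get-AzureRmVM -ResourceGroupName $rg | Select-Object Name, Location, Zones

# Zone of every Managed Disk in the same resource group
Get-AzureRmDisk -ResourceGroupName $rg | Select-Object Name, Location, Zones
```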

IMPORTANT: At the time of authoring this article, it seems that there is no way to change the zone where a VM is deployed or migrate a VM to make it “zonal”.

How much will AZ cost?

There is no direct cost associated with Availability Zones, but you will pay for network traffic as specified in the article below. There is a fee for data going into a VM deployed in an AZ and for data going out of a VM deployed in an AZ.

Bandwidth Pricing Details

https://azure.microsoft.com/en-us/pricing/details/bandwidth

NOTE: Availability Zones are generally available, but data transfer billing will start on July 1, 2018. Usage prior to July 1, 2018 will not be billed.

Thank You!

A simple Azure Portal guide and a step-by-step code sample in PowerShell are available at the links below:

Create a Windows virtual machine in an availability zone with PowerShell

/en-us/azure/virtual-machines/windows/create-powershell-availability-zone

Create a Windows virtual machine in an availability zone with the Azure portal

/en-us/azure/virtual-machines/windows/create-portal-availability-zone

I hope this content has been useful and interesting for you; let me know your feedback. You can always follow me on Twitter (@igorpag). Enjoy the new Azure Availability Zones (AZ) feature!