Note

Access to this page requires authorization. You can try signing in or changing directories.

Access to this page requires authorization. You can try changing directories.

When SQL Server creates or updates statistics and a sampling rate is not manually specified, SQL Server calculates a default sampling rate. Depending on the real distribution of data in the underlying table, the default sampling rate may not accurately represent the data distribution and then cause degradation of query plan efficiency.

To improve this scenario, a database administrator can choose to manually update statistics with a specific sampling rate that can better represent the distribution of data. However, a subsequent automatic update statistics operation will reset back to the default sampling rate, possibly reintroducing degradation of query plan efficiency.

With the most recent SQL Server 2016 SP1 CU4 and SQL Server 2017 CU1, we released an enhancement for the CREATE and UPDATE STATISTICS command – the ability to persist sampling rates between updates with a PERSIST_SAMPLE_PERCENT keyword.

Let’s use an example. I’m using the following query:

SELECT [SalesOrderID],[ShipDate],[DueDate],[OrderDate]

FROM [Sales].[SalesOrderHeaderBulk]

WHERE OrderDate BETWEEN '8/30/2012 00:00:00.000' AND '9/30/2012 00:00:00.000'

GO

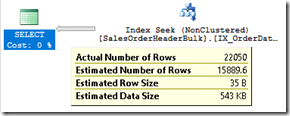

Looking at the actual plan, we see a skew between estimated and actual rows in the scan over the orders table:

Let’s check stats on this table then. I’m searching for stats on table orders with column col2 (part of the join argument):

SELECT ss.stats_id, ss.name, filter_definition, last_updated, rows,

rows_sampled, steps, unfiltered_rows, modification_counter, persisted_sample_percent,

(rows_sampled * 100)/rows AS sample_percent

FROM sys.stats ss

INNER JOIN sys.stats_columns sc

ON ss.stats_id = sc.stats_id AND ss.object_id = sc.object_id

INNER JOIN sys.all_columns ac

ON ac.column_id = sc.column_id AND ac.object_id = sc.object_id

CROSS APPLY sys.dm_db_stats_properties(ss.object_id, ss.stats_id) shr

WHERE ss.[object_id] = OBJECT_ID('[Sales].[SalesOrderHeaderBulk]')

AND ac.name = 'OrderDate';

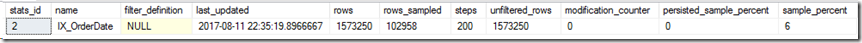

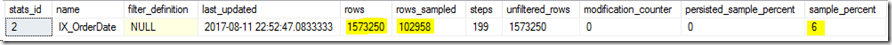

We see a statistic for the index used above, with a sampling rate of 6 percent. Let’s say I really need to improve estimations, and that having a higher sampling rate could just do that.

So I’ll just update this statistic, and while I could try to find a better (and higher) sampling rate, for this demo I’ll just update with full scan:

UPDATE STATISTICS [Sales].[SalesOrderHeaderBulk] [IX_OrderDate] WITH FULLSCAN

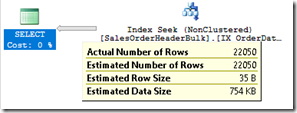

Let’s see the estimations now:

Great, so what happens when auto update statistics is triggered? Or I have some stats maintenance job that does not explicitly sets the sampling rate?

UPDATE STATISTICS [Sales].[SalesOrderHeaderBulk] [IX_OrderDate]

It’s back at default sampling (in this case 6 percent).

I really want to always update this statistic with 100 percent sampling rate, so I can now use the following PERSIST_SAMPLE_PERCENT keyword:

UPDATE STATISTICS [Sales].[SalesOrderHeaderBulk] [IX_OrderDate]

WITH FULLSCAN, PERSIST_SAMPLE_PERCENT = ON

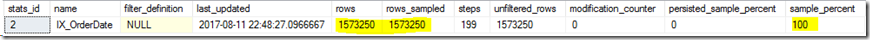

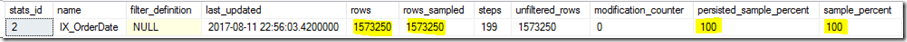

Notice the new persisted_sample_percent column is now showing 100 percent (because I used FULLSCAN). Both DBCC SHOW_STATISTICS and sys.dm_db_stats_properties have this new column.

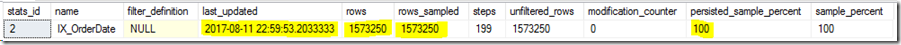

What happens when auto update statistics is triggered or my stats are manually updated again?

UPDATE STATISTICS [Sales].[SalesOrderHeaderBulk] [IX_OrderDate]

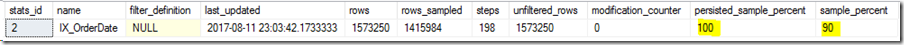

My choice for sampling percentage is now persisted. It will remain persisted until I set this option to OFF. When set to OFF (which is the default), the statistics sampling percentage will get reset to default sampling in subsequent updates that do not explicitly specify a sampling percentage.

Note that if a manual update statistics is executed with a different sampling percentage, but not using PERSIST_SAMPLE_PERCENT keyword to set it as the new persisted value, the previously set fixed sampling will still be in effect for subsequent auto update statistics, or any other manual update that does not set a specific sampling rate.

UPDATE STATISTICS [Sales].[SalesOrderHeaderBulk] [IX_OrderDate] WITH SAMPLE 90 PERCENT

Pedro Lopes (@sqlpto) – Senior Program Manager

Comments

- Anonymous

August 13, 2017

This is a great addition, thanks! - Anonymous

August 14, 2017

a true life saver to avoid overriding sample rate by automatic update statistics for a given object. - Anonymous

August 14, 2017

This is a cool feature indeed. Thanks for the update. - Anonymous

August 16, 2017

Wow, very nice! - Anonymous

August 22, 2017

This is amazing... I have been wanting this for quite a while. - Anonymous

August 28, 2017

Great feature....is there any way to set this on database level or table level (for all statistics) ?- Anonymous

August 28, 2017

Hi Venkat. You can update all stats in a given table. Here's an example with full scan: UPDATE STATISTICS tablename WITH ALL, FULLSCAN, PERSIST_SAMPLE_PERCENT = ON.You can use our own AdaptiveIndexDefrag, which already includes support for this new feature.

- Anonymous

- Anonymous

September 08, 2017

Thanks! That's a great improvement and I was hoping to get something like this since ages.BR Gerald - Anonymous

October 04, 2017

This new column in sys.dm_db_stats_properties does not seem to be present in SQL Server 2017 RTM- Anonymous

October 04, 2017

Hi Glenn,True, we had closed SQL 2017 RTM by then. Persisting stats sampling rate will be enabled for SQL 2017 in an upcoming CU1.

- Anonymous

- Anonymous

October 17, 2017

Is there any chance to back-port in lower versions, such as 2014?- Anonymous

November 09, 2017

Hello Berke, no plans to back port to SQL 2014.

- Anonymous