Using customvision.ai to build an offline image identification app for Android

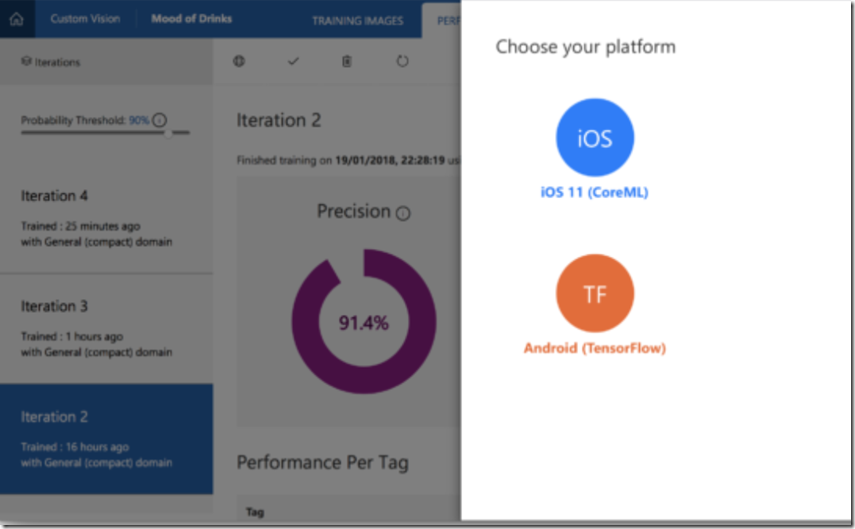

Following the recent release of offline Custom Vision for iOS and CoreML (https://blogs.msdn.microsoft.com/uk_faculty_connection/2017/09/14/microsoft-custom-vision-api-and-intelligent-edge-with-ios-11/), you can now also build offline models for Android devices: https://github.com/Azure-Samples/cognitive-services-android-customvision-sample

The following sample application demonstrates how to take a model exported from the Custom Vision Service in the TensorFlow format and add it to an application for real-time image classification.

Prerequisites

- Android Studio (latest)

- Android device

- An account at Custom Vision Service

Quickstart

- Clone the repository and open the project in Android Studio: https://github.com/Azure-Samples/cognitive-services-android-customvision-sample

- Build and run the sample on your Android device

Replacing the sample model with your own classifier

The model provided with the sample recognizes some fruits. To replace it with your own model exported from the Custom Vision Service, do the following, and then build and launch the application:

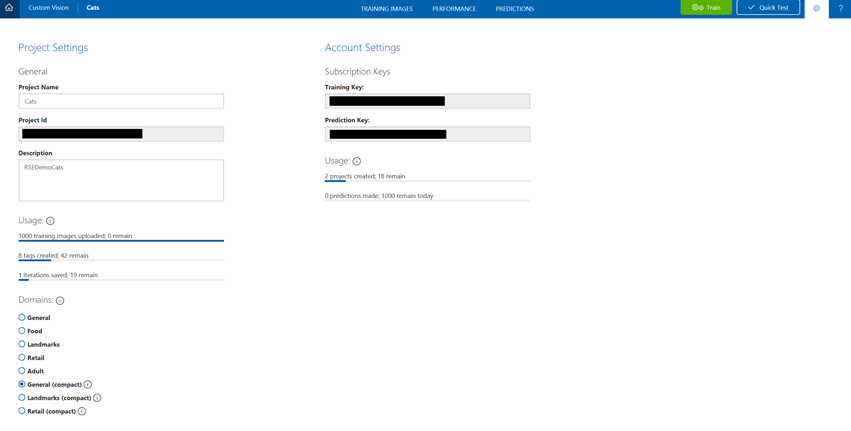

- Create and train a classifier with the Custom Vision Service. You must choose a "compact" domain such as General (compact) to be able to export your classifier. If you have an existing classifier you want to export instead, convert the domain in "settings" by clicking the gear icon at the top right. In settings, choose a "compact" model, save, and train your project.

- Export your model by going to the Performance tab. Select an iteration trained with a compact domain, and an "Export" button will appear. Click Export, then TensorFlow, then Export. Click the Download button when it appears. A .zip file will download that contains the TensorFlow model (.pb) and the labels (.txt).

- Drop your model.pb and labels.txt files into your Android project's Assets folder.

- Build and run.
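To make the role of labels.txt concrete, here is a minimal sketch of how a list of labels can be paired with the scores a classifier returns. It is not code from the sample: the class `LabelLookup` and its methods are hypothetical, and it assumes labels.txt holds one label per line, in the same order as the model's output scores.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration; not part of the sample project.
final class LabelLookup {

    // Reads one label per line and skips blank lines (assumed labels.txt layout).
    static List<String> readLabels(Reader source) {
        List<String> labels = new ArrayList<>();
        try (BufferedReader reader = new BufferedReader(source)) {
            String line;
            while ((line = reader.readLine()) != null) {
                String trimmed = line.trim();
                if (!trimmed.isEmpty()) {
                    labels.add(trimmed);
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return labels;
    }

    // Returns the label with the highest score; scores[i] is assumed to belong to labels.get(i).
    static String topLabel(List<String> labels, float[] scores) {
        if (labels.isEmpty() || labels.size() != scores.length) {
            throw new IllegalArgumentException("labels and scores must be non-empty and the same length");
        }
        int best = 0;
        for (int i = 1; i < scores.length; i++) {
            if (scores[i] > scores[best]) {
                best = i;
            }
        }
        return labels.get(best);
    }
}
```

On a device, the reader would typically wrap the stream returned by `getAssets().open("labels.txt")`. If the number of labels does not match the number of scores, the exported model and label file are probably from different iterations.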

Make sure the mean values (IMAGE_MEAN_R, IMAGE_MEAN_G, IMAGE_MEAN_B in MSCognitiveServicesClassifier.java) are correct based on your project's domain in Custom Vision:

| Project's Domain | Mean Values (RGB) |
| --- | --- |
| General (compact) | (123, 117, 104) |
| Landmark (compact) | (123, 117, 104) |
| Retail (compact) | (0, 0, 0) |
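The following sketch shows why these values matter: each pixel's channels have the domain's mean subtracted before they are fed to the model, so a wrong mean shifts every input value. This is an illustration, not the sample's implementation. The class `MeanSubtraction` is hypothetical, the input is assumed to be packed ARGB integers (the layout Android's `Bitmap.getPixels` produces), and the RGB output order is an assumption; check MSCognitiveServicesClassifier.java for the channel order the exported model actually expects.

```java
// Hypothetical helper for illustration; not part of the sample project.
final class MeanSubtraction {

    // Mean values (R, G, B) for the domains listed above.
    static final float[] GENERAL_COMPACT_MEAN_RGB = {123f, 117f, 104f};
    static final float[] RETAIL_COMPACT_MEAN_RGB = {0f, 0f, 0f};

    // Converts packed ARGB pixels to interleaved floats with the per-channel mean subtracted.
    // The output order R, G, B is an assumption; a model may expect a different order.
    static float[] subtractMean(int[] argbPixels, float[] meanRgb) {
        float[] out = new float[argbPixels.length * 3];
        for (int i = 0; i < argbPixels.length; i++) {
            int pixel = argbPixels[i];
            out[i * 3]     = ((pixel >> 16) & 0xFF) - meanRgb[0]; // red
            out[i * 3 + 1] = ((pixel >> 8) & 0xFF) - meanRgb[1];  // green
            out[i * 3 + 2] = (pixel & 0xFF) - meanRgb[2];         // blue
        }
        return out;
    }
}
```

With the General (compact) means, a pixel whose color is exactly (123, 117, 104) maps to zeros, while the Retail (compact) means leave the raw channel values unchanged.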

Resources

- Link to TensorFlow documentation

- Link to Custom Vision Service Documentation

- Building an offline model on iOS: https://blogs.msdn.microsoft.com/uk_faculty_connection/2018/01/20/getting-started-building-a-ios-offline-app-using-customvision-ai/