After you successfully deploy a model, you can query the deployment to classify text based on the model you assigned to the deployment.

You can query the deployment programmatically through the Prediction API or through the client libraries (Azure SDK).

Test deployed model

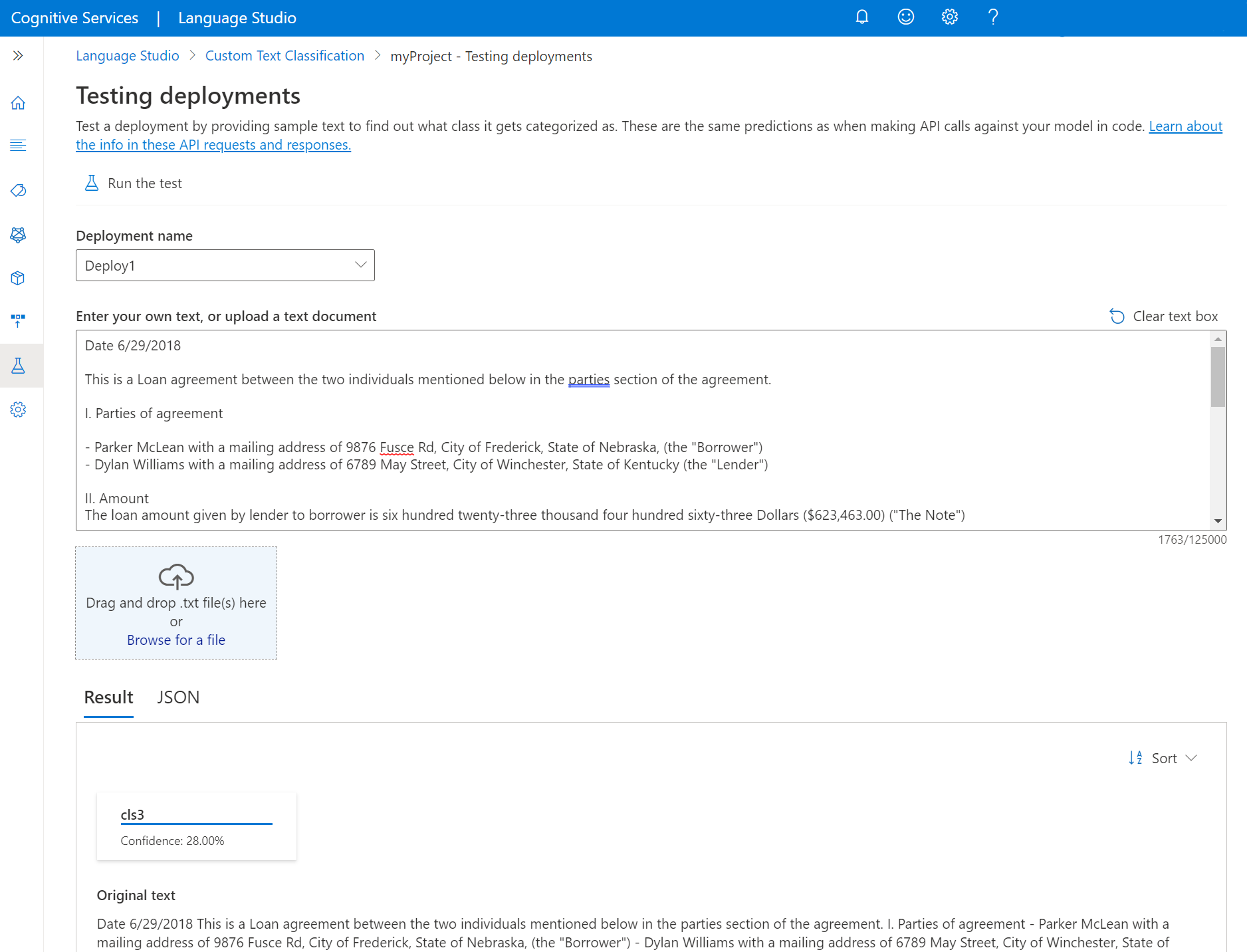

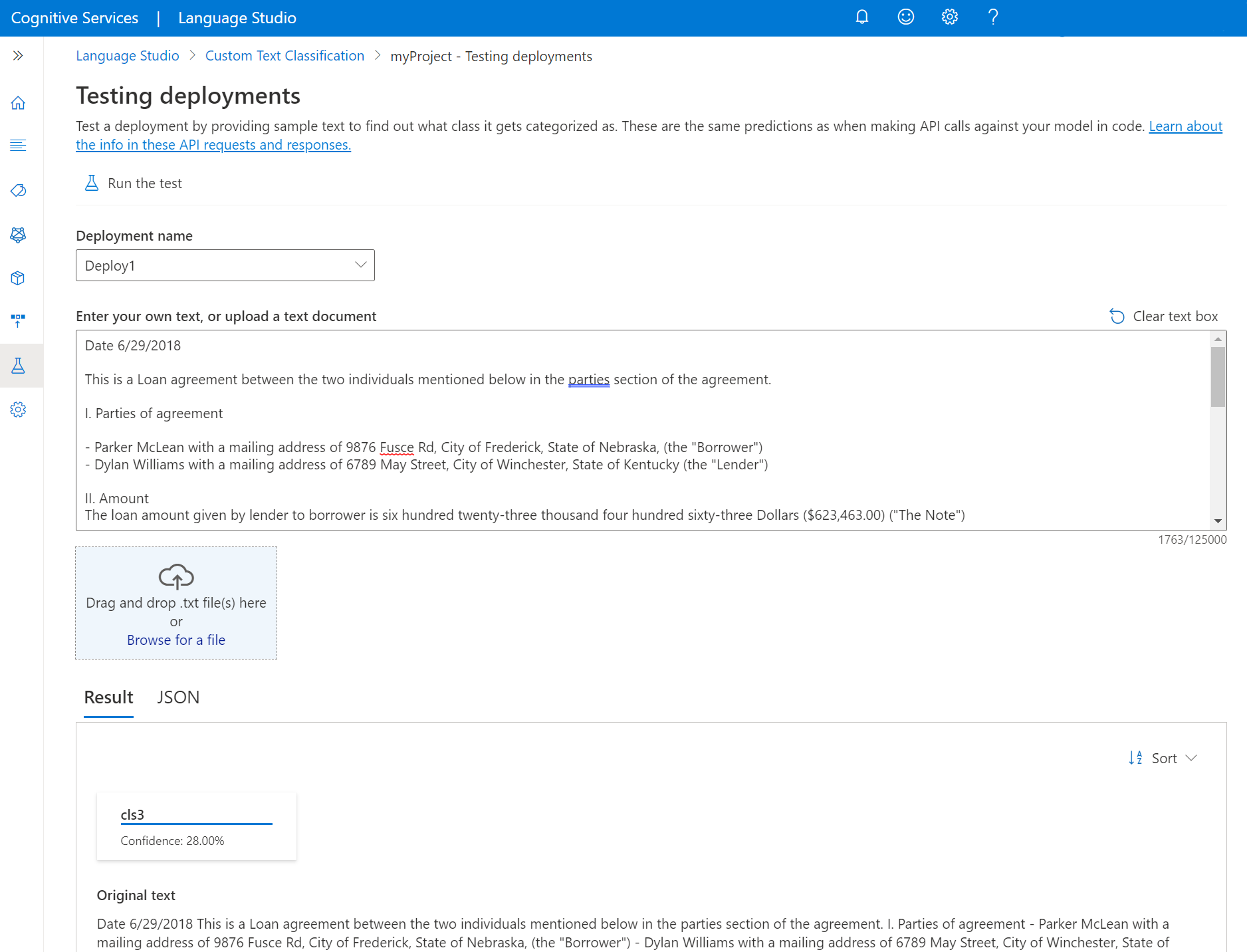

You can use Language Studio to submit the custom text classification task and visualize the results.

To test your deployed models from within the Language Studio:

Select Testing deployments from the left side menu.

Select the deployment you want to test. You can only test models that are assigned to deployments.

For multilingual projects, from the language dropdown, select the language of the text you're testing.

Select the deployment you want to query/test from the dropdown.

Enter the text you want to submit in the request, or upload a .txt file to use.

Select Run the test from the top menu.

In the Result tab, you can see the predicted classes for your text. You can also view the JSON response under the JSON tab.

Send a text classification request to your model

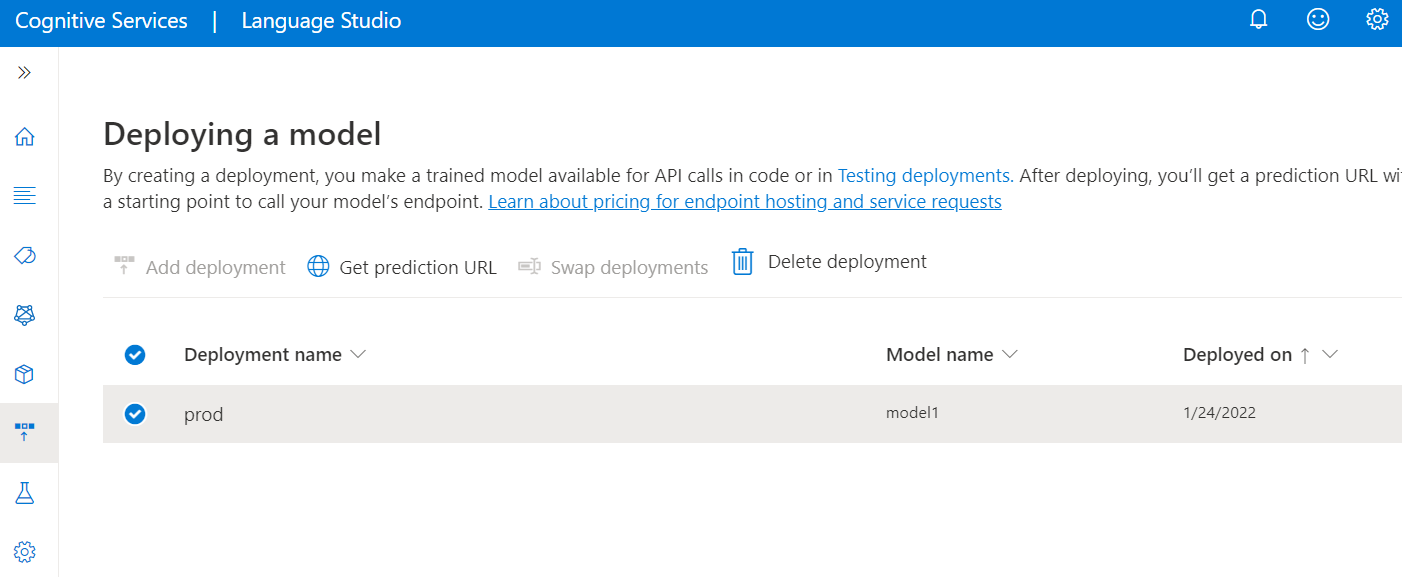

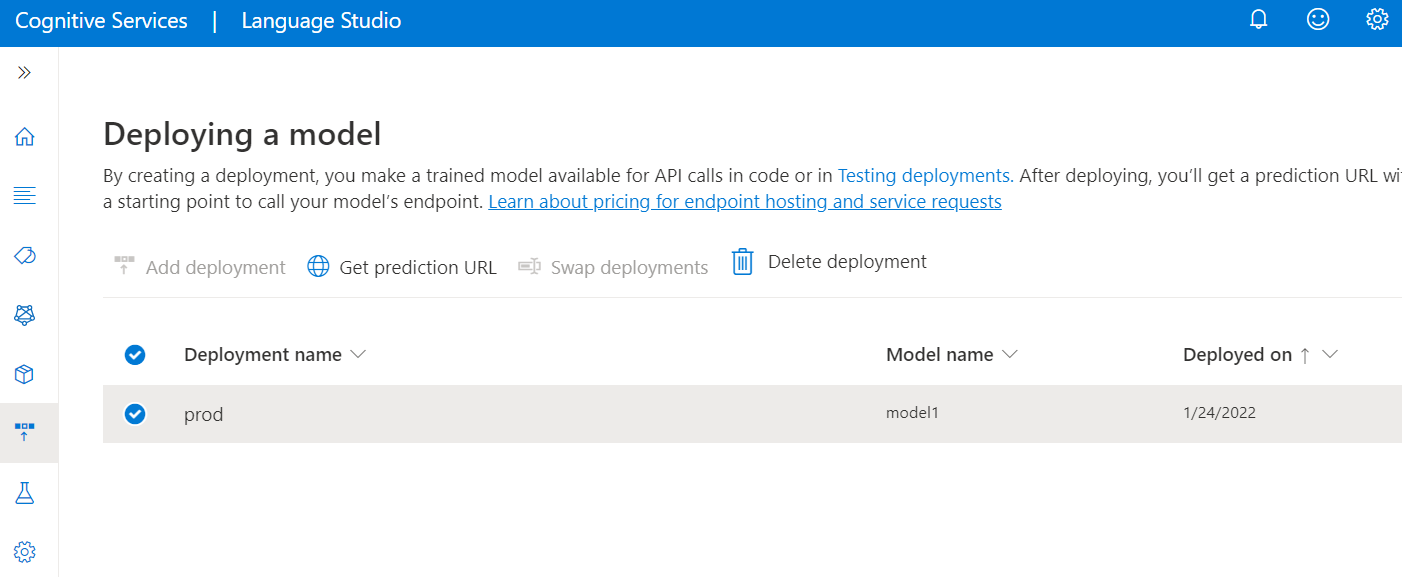

After the deployment job is completed successfully, select the deployment you want to use and from the top menu select Get prediction URL.

In the window that appears, under the Submit pivot, copy the sample request URL and body. Replace the placeholder values such as YOUR_DOCUMENT_HERE and YOUR_DOCUMENT_LANGUAGE_HERE with the actual text and language you want to process.

Submit the POST cURL request in your terminal or command prompt. You receive a 202 response if the request was successful; the results themselves are retrieved in a later step.

In the response header you receive, extract {JOB-ID} from operation-location, which has the format: {ENDPOINT}/language/analyze-text/jobs/{JOB-ID}

Back in Language Studio, select the Retrieve pivot from the same window where you got the example request earlier, and copy the sample request into a text editor.

Add your job ID after /jobs/ to the URL, using the ID you extracted from the previous step.

Submit the GET cURL request in your terminal or command prompt.
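As a rough illustration of the job ID step above, the following Python sketch pulls the ID out of an operation-location value. The function name `extract_job_id` and the example URL are hypothetical and only serve the illustration; the URL shape follows the format described in this article.

```python
from urllib.parse import urlparse


def extract_job_id(operation_location: str) -> str:
    """Return the path segment that follows /jobs/ in an operation-location URL."""
    # urlparse drops the query string (?api-version=...) from .path for us.
    segments = [s for s in urlparse(operation_location).path.split("/") if s]
    if "jobs" not in segments:
        raise ValueError("operation-location has no /jobs/ segment")
    index = segments.index("jobs")
    if index + 1 >= len(segments):
        raise ValueError("operation-location has no job ID after /jobs/")
    return segments[index + 1]


# Hypothetical header value, shaped like the format shown in this article.
example = (
    "https://example.cognitiveservices.azure.com"
    "/language/analyze-text/jobs/1234-abcd?api-version=2022-05-01"
)
print(extract_job_id(example))
```

The extracted value is what you append after /jobs/ in the GET request.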

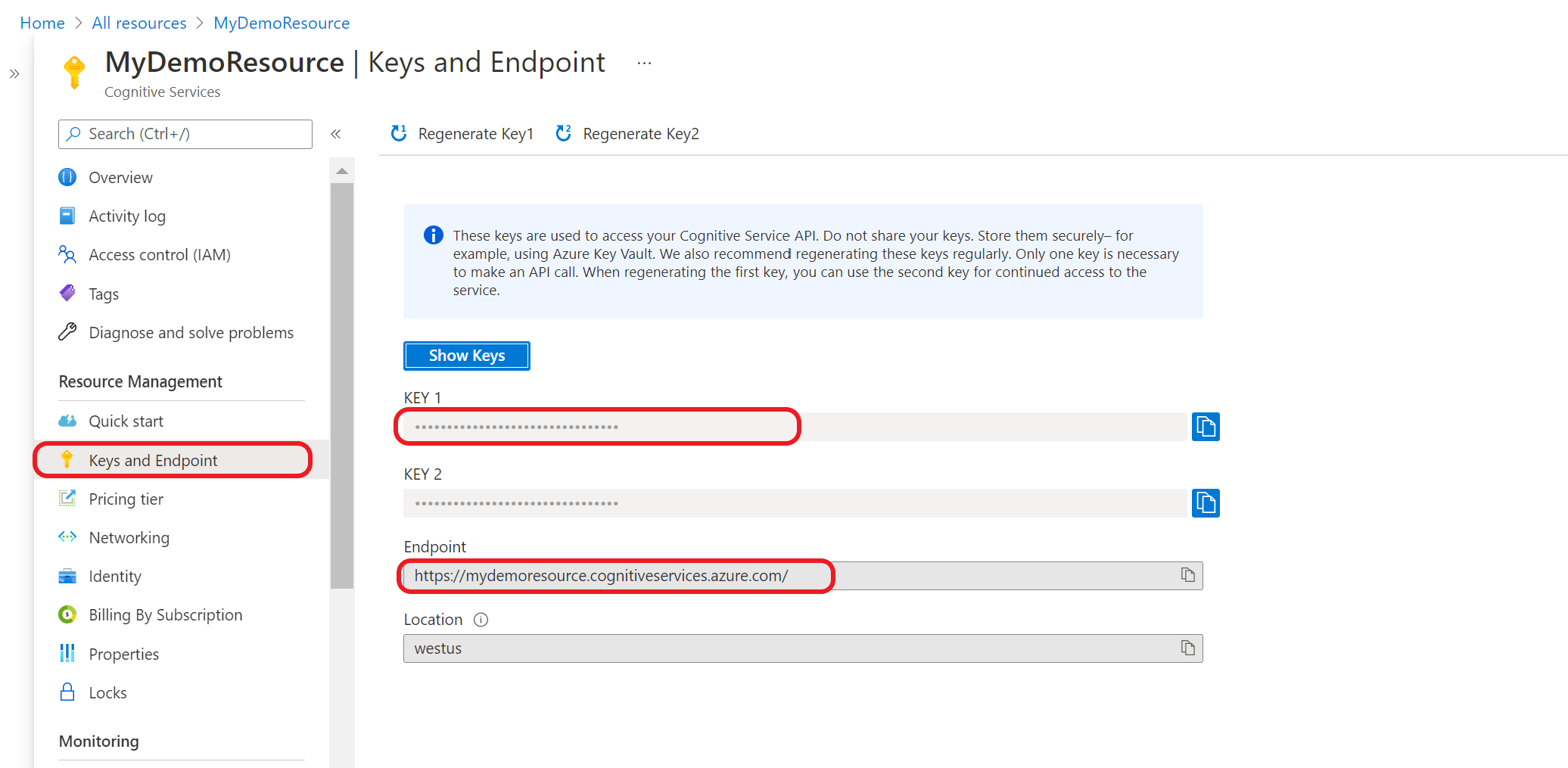

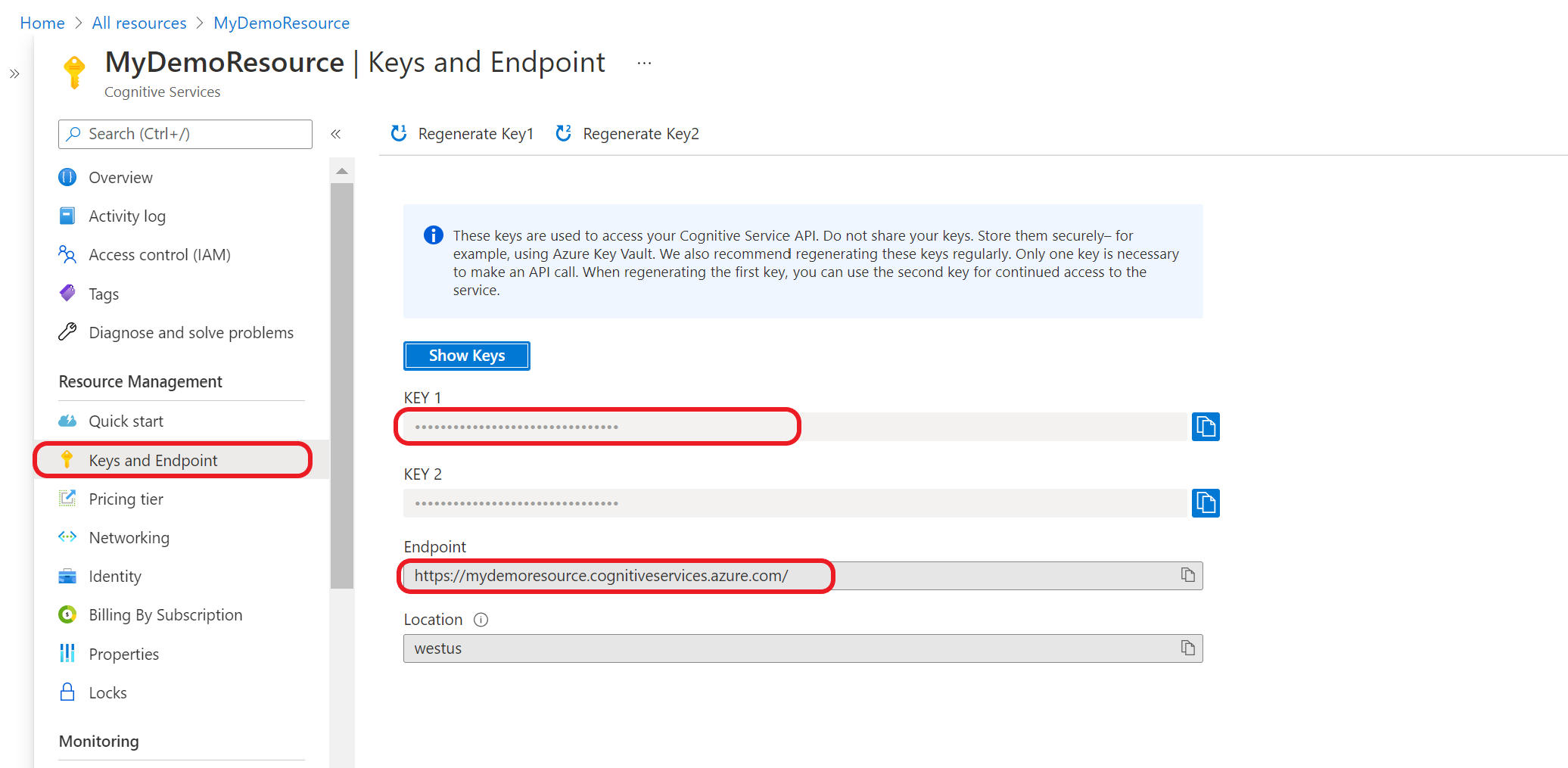

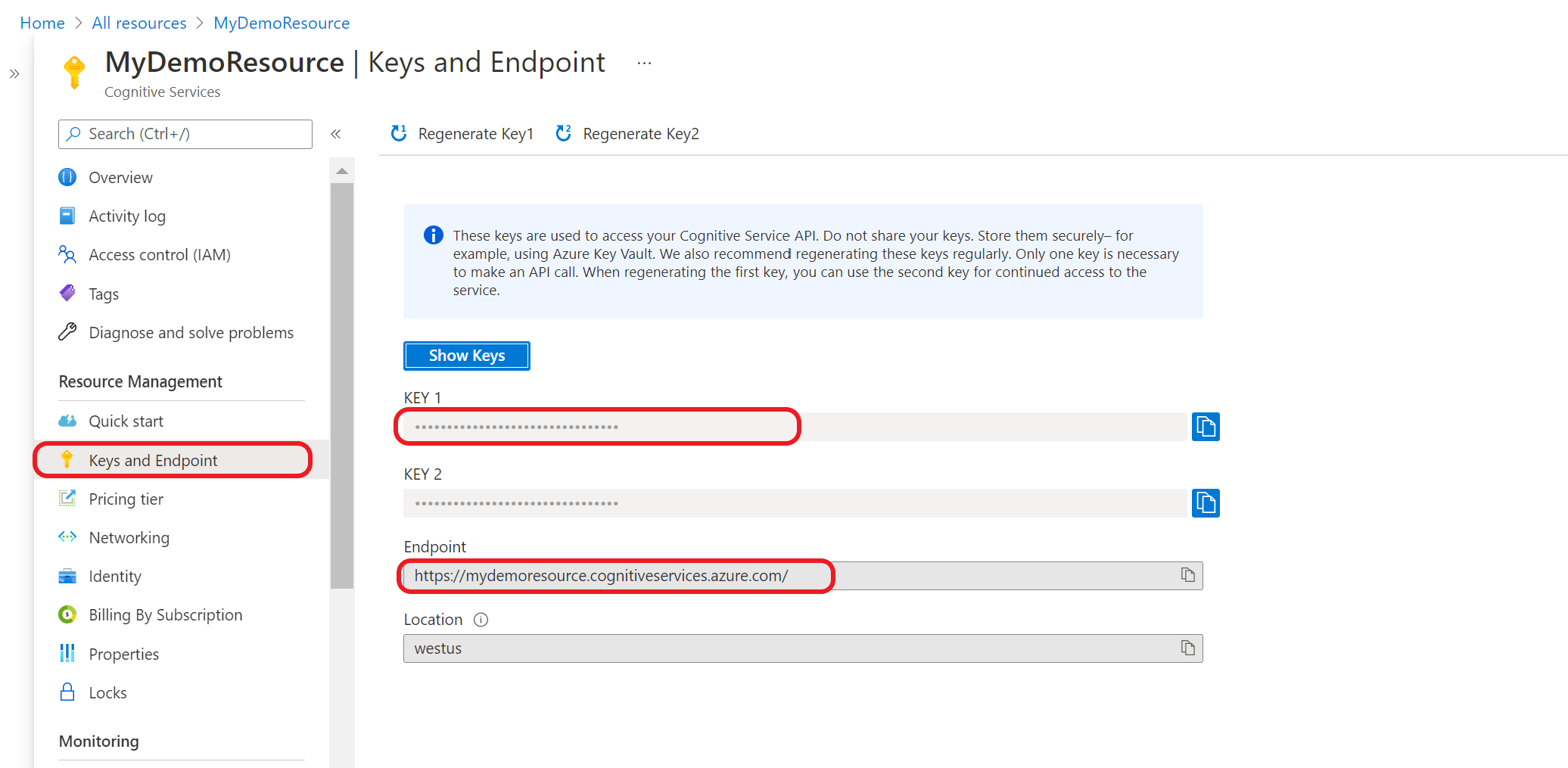

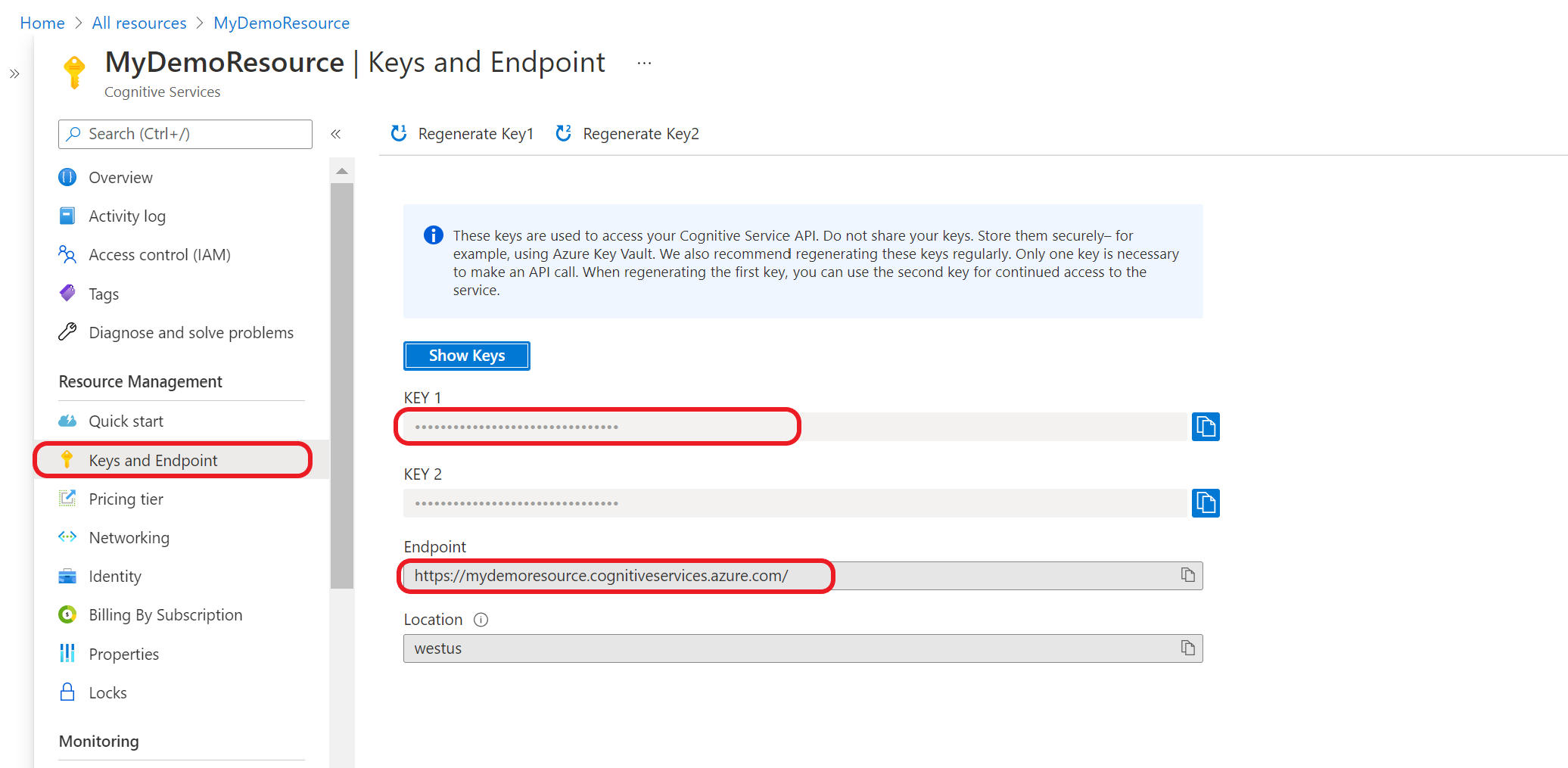

First you need to get your resource key and endpoint:

Go to your resource overview page in the Azure portal.

From the menu on the left side, select Keys and Endpoint. You use the endpoint and key for the API requests.
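One possible way to keep the key and endpoint out of your source code is to read them from environment variables. The sketch below assumes two hypothetical variable names, `LANGUAGE_ENDPOINT` and `LANGUAGE_KEY`; they are not defined by the service, so use whatever names suit your setup.

```python
import os


def load_credentials(env=os.environ):
    """Read the endpoint and key, and return (endpoint, headers).

    LANGUAGE_ENDPOINT and LANGUAGE_KEY are hypothetical variable names
    chosen for this sketch.
    """
    # Strip a trailing slash so later URL joins do not produce "//".
    endpoint = env["LANGUAGE_ENDPOINT"].rstrip("/")
    # The key travels in the Ocp-Apim-Subscription-Key request header.
    headers = {"Ocp-Apim-Subscription-Key": env["LANGUAGE_KEY"]}
    return endpoint, headers
```

Calling `load_credentials()` with no arguments reads from the process environment and raises `KeyError` if either variable is missing.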

Submit a custom text classification task

Use this POST request to start a text classification task.

{ENDPOINT}/language/analyze-text/jobs?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.com |
| {API-VERSION} | The version of the API you're calling. The value referenced is for the latest version released. For more information, see Model lifecycle. | 2022-05-01 |

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | Your key that provides access to this API. |

Body

Multi label classification:

{
  "displayName": "Classifying documents",
  "analysisInput": {
    "documents": [
      {
        "id": "1",
        "language": "{LANGUAGE-CODE}",
        "text": "Text1"
      },
      {
        "id": "2",
        "language": "{LANGUAGE-CODE}",
        "text": "Text2"
      }
    ]
  },
  "tasks": [
    {
      "kind": "CustomMultiLabelClassification",
      "taskName": "Multi Label Classification",
      "parameters": {
        "projectName": "{PROJECT-NAME}",
        "deploymentName": "{DEPLOYMENT-NAME}"
      }
    }
  ]
}

| Key | Placeholder | Value | Example |
|---|---|---|---|
| displayName | {JOB-NAME} | Your job name. | MyJobName |
| documents | [{},{}] | List of documents to run tasks on. | [{},{}] |
| id | {DOC-ID} | Document name or ID. | doc1 |
| language | {LANGUAGE-CODE} | A string specifying the language code for the document. If this key isn't specified, the service assumes the default language of the project that was selected during project creation. See language support for a list of supported language codes. | en-us |
| text | {DOC-TEXT} | Document text to run the tasks on. | Lorem ipsum dolor sit amet |
| tasks | | List of tasks we want to perform. | [] |
| taskName | CustomMultiLabelClassification | The task name. | CustomMultiLabelClassification |
| parameters | | List of parameters to pass to the task. | |
| projectName | {PROJECT-NAME} | The name for your project. This value is case-sensitive. | myProject |
| deploymentName | {DEPLOYMENT-NAME} | The name of your deployment. This value is case-sensitive. | prod |

Single label classification:

{
  "displayName": "Classifying documents",
  "analysisInput": {
    "documents": [
      {
        "id": "1",
        "language": "{LANGUAGE-CODE}",
        "text": "Text1"
      },
      {
        "id": "2",
        "language": "{LANGUAGE-CODE}",
        "text": "Text2"
      }
    ]
  },
  "tasks": [
    {
      "kind": "CustomSingleLabelClassification",
      "taskName": "Single Classification Label",
      "parameters": {
        "projectName": "{PROJECT-NAME}",
        "deploymentName": "{DEPLOYMENT-NAME}"
      }
    }
  ]
}

| Key | Placeholder | Value | Example |
|---|---|---|---|
| displayName | {JOB-NAME} | Your job name. | MyJobName |
| documents | | List of documents to run tasks on. | |
| id | {DOC-ID} | Document name or ID. | doc1 |
| language | {LANGUAGE-CODE} | A string specifying the language code for the document. If this key isn't specified, the service assumes the default language of the project that was selected during project creation. See language support for a list of supported language codes. | en-us |
| text | {DOC-TEXT} | Document text to run the tasks on. | Lorem ipsum dolor sit amet |
| taskName | CustomSingleLabelClassification | The task name. | CustomSingleLabelClassification |
| tasks | [] | Array of tasks we want to perform. | [] |
| parameters | | List of parameters to pass to the task. | |
| projectName | {PROJECT-NAME} | The name for your project. This value is case-sensitive. | myProject |
| deploymentName | {DEPLOYMENT-NAME} | The name of your deployment. This value is case-sensitive. | prod |
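The request body described in the tables above could be assembled in code along these lines. This is a minimal sketch: the helper name `build_body`, its parameters, and the default task name are illustrative choices, while the JSON keys and the two `kind` values are taken from the sample bodies in this article.

```python
import json

# The two task kinds shown in the sample request bodies.
VALID_KINDS = {"CustomSingleLabelClassification", "CustomMultiLabelClassification"}


def build_body(texts, project_name, deployment_name, kind,
               language="en-us", display_name="Classifying documents"):
    """Build a request body shaped like the samples in this article."""
    if kind not in VALID_KINDS:
        raise ValueError(f"unsupported task kind: {kind}")
    # Number the documents "1", "2", ... as the sample bodies do.
    documents = [
        {"id": str(i), "language": language, "text": text}
        for i, text in enumerate(texts, start=1)
    ]
    return {
        "displayName": display_name,
        "analysisInput": {"documents": documents},
        "tasks": [
            {
                "kind": kind,
                "taskName": "Classify documents",  # free-form label, chosen for this sketch
                "parameters": {
                    "projectName": project_name,        # case-sensitive
                    "deploymentName": deployment_name,  # case-sensitive
                },
            }
        ],
    }


body = build_body(["Text1", "Text2"], "myProject", "prod",
                  "CustomSingleLabelClassification")
print(json.dumps(body, indent=2))
```

Switching between single and multi label classification only changes the `kind` argument.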

Response

You receive a 202 response indicating success. In the response headers, extract operation-location.

operation-location is formatted like this:

{ENDPOINT}/language/analyze-text/jobs/{JOB-ID}?api-version={API-VERSION}

You can use this URL to query the task completion status and get the results when the task is completed.
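To make the submit step concrete, here is a hedged sketch that sends the POST request with Python's standard library and reads operation-location from the response headers. The function names are hypothetical, the default API version is the example value from this article, and the `Content-Type` header is an assumption not stated in the source.

```python
import json
import urllib.request


def build_submit_request(endpoint, key, body, api_version="2022-05-01"):
    """Prepare the POST request that starts a text classification task."""
    url = f"{endpoint.rstrip('/')}/language/analyze-text/jobs?api-version={api_version}"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",  # assumed; the body is JSON
        },
        method="POST",
    )


def submit(request):
    """Send the request and return (status code, operation-location header)."""
    with urllib.request.urlopen(request) as response:
        # A 202 status means the job was accepted; results come from a later GET.
        return response.status, response.headers["operation-location"]
```

`submit` performs a real network call, so it needs a valid endpoint and key; `build_submit_request` can be inspected offline.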

Get task results

Use the following GET request to query the status/results of the text classification task.

{ENDPOINT}/language/analyze-text/jobs/{JOB-ID}?api-version={API-VERSION}

| Placeholder | Value | Example |
|---|---|---|
| {ENDPOINT} | The endpoint for authenticating your API request. | https://<your-custom-subdomain>.cognitiveservices.azure.com |
| {API-VERSION} | The version of the API you're calling. The value referenced is for the latest released model version. | 2022-05-01 |

| Key | Value |
|---|---|
| Ocp-Apim-Subscription-Key | Your key that provides access to this API. |
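Because the task runs asynchronously, a client typically repeats the GET request until the job reaches a final state. The sketch below shows one way to do that. The names `fetch_job` and `poll_job`, the polling interval, and the attempt limit are arbitrary choices for illustration, and only the "succeeded" status appears in this article; the other terminal statuses are assumptions.

```python
import json
import time
import urllib.request

# Only "succeeded" is shown in this article; "failed" and "cancelled" are assumed.
TERMINAL_STATUSES = {"succeeded", "failed", "cancelled"}


def fetch_job(job_url, key):
    """GET the job document from the operation-location URL."""
    request = urllib.request.Request(
        job_url, headers={"Ocp-Apim-Subscription-Key": key}
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def poll_job(fetch, interval_seconds=2.0, max_attempts=30):
    """Call fetch() until the job status is terminal, then return the job."""
    for _ in range(max_attempts):
        job = fetch()
        if job.get("status") in TERMINAL_STATUSES:
            return job
        time.sleep(interval_seconds)
    raise TimeoutError("job did not finish within the polling budget")
```

A typical call would look like `poll_job(lambda: fetch_job(job_url, key))`, where `job_url` is the operation-location value returned by the POST request.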

Response body

The response will be a JSON document with the following parameters.

Multi label classification:

{
  "createdDateTime": "2021-05-19T14:32:25.578Z",
  "displayName": "MyJobName",
  "expirationDateTime": "2021-05-19T14:32:25.578Z",
  "jobId": "xxxx-xxxxxx-xxxxx-xxxx",
  "lastUpdateDateTime": "2021-05-19T14:32:25.578Z",
  "status": "succeeded",
  "tasks": {
    "completed": 1,
    "failed": 0,
    "inProgress": 0,
    "total": 1,
    "items": [
      {
        "kind": "customMultiClassificationTasks",
        "taskName": "Classify documents",
        "lastUpdateDateTime": "2020-10-01T15:01:03Z",
        "status": "succeeded",
        "results": {
          "documents": [
            {
              "id": "{DOC-ID}",
              "classes": [
                {
                  "category": "Class_1",
                  "confidenceScore": 0.0551877357
                }
              ],
              "warnings": []
            }
          ],
          "errors": [],
          "modelVersion": "2020-04-01"
        }
      }
    ]
  }
}

Single label classification:

{
  "createdDateTime": "2021-05-19T14:32:25.578Z",
  "displayName": "MyJobName",
  "expirationDateTime": "2021-05-19T14:32:25.578Z",
  "jobId": "xxxx-xxxxxx-xxxxx-xxxx",
  "lastUpdateDateTime": "2021-05-19T14:32:25.578Z",
  "status": "succeeded",
  "tasks": {
    "completed": 1,
    "failed": 0,
    "inProgress": 0,
    "total": 1,
    "items": [
      {
        "kind": "customSingleClassificationTasks",
        "taskName": "Classify documents",
        "lastUpdateDateTime": "2020-10-01T15:01:03Z",
        "status": "succeeded",
        "results": {
          "documents": [
            {
              "id": "{DOC-ID}",
              "class": [
                {
                  "category": "Class_1",
                  "confidenceScore": 0.0551877357
                }
              ],
              "warnings": []
            }
          ],
          "errors": [],
          "modelVersion": "2020-04-01"
        }
      }
    ]
  }
}
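Once the job has succeeded, the predicted classes sit several levels deep in the response. The following sketch flattens them into a mapping from document ID to (category, confidence score) pairs. The helper name `extract_classes` is hypothetical; it accepts both the "classes" key of the multi label sample and the "class" key of the single label sample, and tolerating a single object instead of a list is a defensive assumption.

```python
def extract_classes(job):
    """Map each document ID to a list of (category, confidenceScore) pairs."""
    predictions = {}
    for task in job.get("tasks", {}).get("items", []):
        for doc in task.get("results", {}).get("documents", []):
            # Multi label samples use "classes"; single label samples use "class".
            classes = doc.get("classes") or doc.get("class") or []
            if isinstance(classes, dict):
                classes = [classes]  # tolerate a single object instead of a list
            predictions.setdefault(doc["id"], []).extend(
                (c["category"], c["confidenceScore"]) for c in classes
            )
    return predictions


# Trimmed-down response, shaped like the samples in this article.
sample = {
    "status": "succeeded",
    "tasks": {
        "items": [
            {
                "results": {
                    "documents": [
                        {
                            "id": "1",
                            "classes": [
                                {"category": "Class_1", "confidenceScore": 0.0551877357}
                            ],
                            "warnings": [],
                        }
                    ]
                }
            }
        ]
    },
}
print(extract_classes(sample))
```

For multi label projects, a document can carry several pairs; for single label projects, expect one.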

First you need to get your resource key and endpoint:

Go to your resource overview page in the Azure portal.

From the menu on the left side, select Keys and Endpoint. The endpoint and key are used for API requests.

Download and install the client library package for your language of choice:

After you install the client library, use the following samples on GitHub to start calling the API.

Single label classification:

Multi label classification:

See the following reference documentation on the client, and return object:

Next steps