Use QnA Maker to answer questions

APPLIES TO: SDK v4

Note

Azure AI QnA Maker will be retired on 31 March 2025. Beginning 1 October 2022, you won't be able to create new QnA Maker resources or knowledge bases. A newer version of the question and answering capability is now available as part of Azure AI Language.

Custom question answering, a feature of Azure AI Language, is the updated version of the QnA Maker service. For more information about question-and-answer support in the Bot Framework SDK, see Natural language understanding.

QnA Maker provides a conversational question-and-answer layer over your data. This allows your bot to send a question to QnA Maker and receive an answer without needing to parse and interpret the intent of the question.

One of the basic requirements in creating your own QnA Maker service is to populate it with questions and answers. In many cases, the questions and answers already exist in content like FAQs or other documentation; other times, you may want to customize your answers to questions in a more natural, conversational way.

This article describes how to use an existing QnA Maker knowledge base from your bot.

For new bots, consider using the question answering feature of Azure Cognitive Service for Language. For information, see Use question answering to answer questions.

Note

The Bot Framework JavaScript, C#, and Python SDKs will continue to be supported; however, the Java SDK is being retired, with final long-term support ending in November 2023.

Existing bots built with the Java SDK will continue to function.

For new bot building, consider using Microsoft Copilot Studio and read about choosing the right copilot solution.

For more information, see The future of bot building.

Prerequisites

- A QnA Maker account and an existing QnA Maker knowledge base.

- Knowledge of bot basics and QnA Maker.

- A copy of the QnA Maker (simple) sample in C# (archived), JavaScript (archived), Java (archived), or Python (archived).

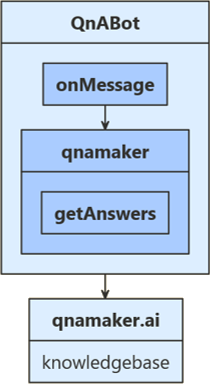

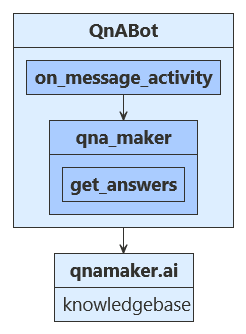

About this sample

To use QnA Maker in your bot, you need an existing knowledge base in the QnA Maker portal. Your bot can then use the knowledge base to answer the user's questions.

For new bot development, consider using Copilot Studio. If you need to create a new knowledge base for a Bot Framework SDK bot, see the following Azure AI services articles:

- What is question answering?

- Create an FAQ bot

- Azure Cognitive Language Services Question Answering client library for .NET

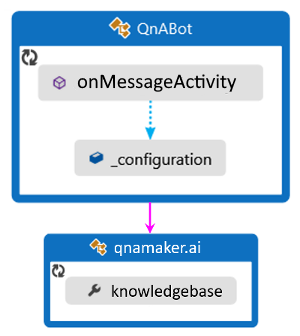

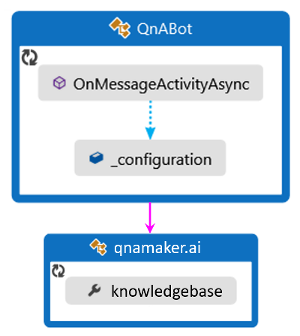

OnMessageActivityAsync is called for each user input received. When called, it reads configuration settings from the sample code's appsettings.json file to find the values needed to connect to your pre-configured QnA Maker knowledge base.

The user's input is sent to your knowledge base and the best returned answer is displayed back to your user.

Obtain values to connect your bot to the knowledge base

Tip

The QnA Maker documentation has instructions on how to create, train, and publish your knowledge base.

- In the QnA Maker site, select your knowledge base.

- With your knowledge base open, select the SETTINGS tab. Record the value shown for service name. This value is useful for finding your knowledge base of interest when using the QnA Maker portal interface. It's not used to connect your bot app to this knowledge base.

- Scroll down to find Deployment details and record the following values from the Postman sample HTTP request:

  - POST /knowledgebases/<knowledge-base-id>/generateAnswer

  - Host: <your-host-url>

  - Authorization: EndpointKey <your-endpoint-key>

Your host URL will start with https:// and end with /qnamaker, such as https://<hostname>.azure.net/qnamaker. Your bot needs the knowledge base ID, host URL, and endpoint key to connect to your QnA Maker knowledge base.
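To make the role of the three recorded values concrete, the following Python sketch assembles the same HTTP request that the Postman sample describes, without sending it. It's a minimal illustration using only the standard library, not the SDK's own client. The function name `build_generate_answer_request`, the `question` field in the request body, and all placeholder values are assumptions made for this example.

```python
import json
import urllib.request


def build_generate_answer_request(host_url, knowledge_base_id, endpoint_key, question):
    """Build (but do not send) a generateAnswer request for a knowledge base."""
    # The host URL already ends with /qnamaker; strip a trailing slash to avoid "//".
    url = f"{host_url.rstrip('/')}/knowledgebases/{knowledge_base_id}/generateAnswer"
    # Assumed request body shape: a JSON object carrying the user's question.
    body = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"EndpointKey {endpoint_key}",
            "Content-Type": "application/json",
        },
    )


# Placeholder values only; substitute the ones recorded from Deployment details.
request = build_generate_answer_request(
    "https://example-host.azure.net/qnamaker",
    "00000000-0000-0000-0000-000000000000",
    "example-endpoint-key",
    "How do I reset my password?",
)
print(request.get_method(), request.full_url)
```

In a bot, the SDK's QnA Maker client performs this call for you; the sketch only shows where the knowledge base ID, host URL, and endpoint key end up in the request.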

Update the settings file

First, add the information required to access your knowledge base to the settings file: the host name, endpoint key, and knowledge base ID (kbId). These are the values you saved from the SETTINGS tab of your knowledge base in QnA Maker.

If you aren't deploying this for production, you can leave your bot's app ID and password fields blank.

Note

To add a QnA Maker knowledge base into an existing bot application, be sure to add informative titles for your QnA entries. The "name" value within this section provides the key required to access this information from within your app.

appsettings.json
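As a rough illustration of the shape such a settings file might take, the Python sketch below parses a JSON document holding the bot's app ID and password plus the three QnA Maker values, and checks that the QnA Maker values are present. The key names (`QnAKnowledgebaseId`, `QnAEndpointKey`, `QnAEndpointHostName`) are assumptions modeled on the C# sample, and `load_qna_settings` is a hypothetical helper, so adjust both to match your own settings file.

```python
import json

# Assumed key names, modeled on the C# sample's settings file.
REQUIRED_QNA_KEYS = ("QnAKnowledgebaseId", "QnAEndpointKey", "QnAEndpointHostName")


def load_qna_settings(settings_text):
    """Parse settings JSON and return the three QnA Maker connection values."""
    settings = json.loads(settings_text)
    # An empty string counts as missing, because the bot can't connect without it.
    missing = [key for key in REQUIRED_QNA_KEYS if not settings.get(key)]
    if missing:
        raise ValueError(f"Missing QnA Maker settings: {', '.join(missing)}")
    return {key: settings[key] for key in REQUIRED_QNA_KEYS}


# App ID and password are left blank, as allowed for non-production use.
example_settings = """
{
  "MicrosoftAppId": "",
  "MicrosoftAppPassword": "",
  "QnAKnowledgebaseId": "<knowledge-base-id>",
  "QnAEndpointKey": "<your-endpoint-key>",
  "QnAEndpointHostName": "<your-host-url>"
}
"""

qna_settings = load_qna_settings(example_settings)
```

Checking for missing values at startup gives a clearer error than a failed request later in the conversation.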

Set up the QnA Maker instance

First, we create an object for accessing our QnA Maker knowledge base.

Be sure that the Microsoft.Bot.Builder.AI.QnA NuGet package is installed for your project.

In QnABot.cs, in the OnMessageActivityAsync method, create a QnAMaker instance. The QnABot class is also where the names of the connection information, saved in appsettings.json above, are pulled in. If you chose different names for your knowledge base connection information in your settings file, be sure to update the names here to reflect your chosen name.

Bots/QnABot.cs

Calling QnA Maker from your bot

When your bot needs an answer from QnA Maker, call the GetAnswersAsync method from your bot code to get the appropriate answer based on the current context. If you're accessing your own knowledge base, change the "no answers found" message below to provide useful instructions for your users.
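The answer-or-fallback logic described here can be sketched in Python as follows. This is an illustration under stated assumptions, not the sample's code: it assumes a response dictionary with an `answers` list whose items carry `answer` and `score` fields, with scores on a 0 to 100 scale. The function `pick_best_answer`, the threshold of 30, and the fallback text are all hypothetical choices for this example.

```python
# Placeholder fallback text; replace it with instructions that help your users.
NO_ANSWER_MESSAGE = "No QnA Maker answers were found."


def pick_best_answer(response, score_threshold=30.0):
    """Return the highest-scoring answer text, or the fallback message."""
    answers = response.get("answers", [])
    # Keep only answers that clear the (assumed) confidence threshold.
    candidates = [a for a in answers if a.get("score", 0) >= score_threshold]
    if not candidates:
        return NO_ANSWER_MESSAGE
    return max(candidates, key=lambda a: a["score"])["answer"]


# Hypothetical response with two candidate answers.
sample_response = {
    "answers": [
        {"answer": "Select Forgot password on the sign-in page.", "score": 87.5},
        {"answer": "Contact your administrator.", "score": 42.0},
    ]
}
reply_text = pick_best_answer(sample_response)
```

A fallback message that suggests what the user can ask next is usually more helpful than a bare "not found" reply.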

Bots/QnABot.cs

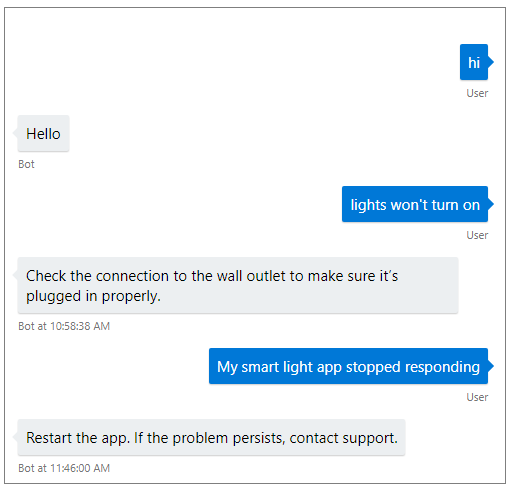

Test the bot

Run the sample locally on your machine. If you haven't done so already, install the Bot Framework Emulator. For further instructions, refer to the sample's README (C# (archived), JavaScript (archived), Java (archived), or Python (archived)).

Start the Emulator, connect to your bot, and send messages to your bot. The responses to your questions will vary, based on the information in your knowledge base.

Additional information

The QnA Maker multi-turn sample (C# multi-turn sample (archived), JavaScript multi-turn sample (archived), Java multi-turn sample (archived), Python multi-turn sample (archived)) shows how to use a QnA Maker dialog to support QnA Maker's follow-up prompt and active learning features.

QnA Maker supports follow-up prompts, also known as multi-turn prompts. If the QnA Maker knowledge base requires more information from the user, QnA Maker sends context information that you can use to prompt the user. This information is also used to make any follow-up calls to the QnA Maker service. In version 4.6, the Bot Framework SDK added support for this feature.
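The round trip described above might look like the following Python sketch. It assumes that an answer carries its prompts under `context.prompts` (each with `displayOrder`, `qnaId`, and `displayText`) and that the follow-up request passes `previousQnAId` and `previousUserQuery` in its own `context`. These field names, along with the helpers `extract_prompts` and `build_follow_up_body`, are assumptions for illustration; check them against the QnA Maker multi-turn documentation before relying on them.

```python
def extract_prompts(answer):
    """Return the follow-up prompts attached to one answer, in display order."""
    prompts = (answer.get("context") or {}).get("prompts") or []
    return sorted(prompts, key=lambda p: p.get("displayOrder", 0))


def build_follow_up_body(selected_prompt, previous_answer, previous_question):
    """Build the request body for the call made after the user picks a prompt."""
    return {
        "question": selected_prompt["displayText"],
        "qnaId": selected_prompt["qnaId"],
        # Context from the previous turn lets the service resolve the follow-up.
        "context": {
            "previousQnAId": previous_answer["id"],
            "previousUserQuery": previous_question,
        },
    }


# Hypothetical first-turn answer that offers two follow-up prompts.
first_answer = {
    "id": 27,
    "answer": "Which sign-in topic do you need help with?",
    "context": {
        "prompts": [
            {"displayOrder": 1, "qnaId": 17, "displayText": "Reset a password"},
            {"displayOrder": 0, "qnaId": 16, "displayText": "Use the sign-in screen"},
        ]
    },
}

prompts = extract_prompts(first_answer)
follow_up = build_follow_up_body(prompts[0], first_answer, "accounts and signing in")
```

The QnA Maker dialog in the SDK tracks this context for you; the sketch only shows which pieces of information move between turns.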

To construct such a knowledge base, see the QnA Maker documentation on how to Use follow-up prompts to create multiple turns of a conversation.

QnA Maker also supports active learning suggestions, allowing the knowledge base to improve over time. The QnA Maker dialog supports explicit feedback for the active learning feature.

To enable this feature on a knowledge base, see the QnA Maker documentation on Active learning suggestions.

Next steps

QnA Maker can be combined with other Azure AI services to make your bot even more powerful. Bot Framework Orchestrator provides a way to combine QnA Maker with Language Understanding (LUIS) in your bot.