
APPLIES TO: Azure Data Factory, Azure Synapse Analytics

Tip

Data Factory in Microsoft Fabric is the next generation of Azure Data Factory, with a simpler architecture, built-in AI, and new features. If you're new to data integration, start with Fabric Data Factory. Existing ADF workloads can upgrade to Fabric to access new capabilities across data science, real-time analytics, and reporting.

This article outlines how to use the Copy Activity in Azure Data Factory and Synapse Analytics pipelines to copy data from Amazon Redshift. It builds on the copy activity overview article, which presents a general overview of the copy activity.

Important

The Amazon Redshift connector version 2.0 provides improved native Amazon Redshift support. If you are using Amazon Redshift connector version 1.0 in your solution, upgrade the connector, because version 1.0 is at the End of Support stage. Your pipeline will fail after April 30, 2026. For the differences between version 2.0 and version 1.0, see the Amazon Redshift connector lifecycle and upgrade section.

Supported capabilities

This Amazon Redshift connector is supported for the following capabilities:

| Supported capabilities | IR |
|---|---|
| Copy activity (source/-) | ① ② |
| Lookup activity | ① ② |

① Azure integration runtime ② Self-hosted integration runtime

For a list of data stores that are supported as sources or sinks by the copy activity, see the Supported data stores table.

The service provides a built-in driver to enable connectivity, so you don't need to manually install any driver.

The Amazon Redshift connector supports retrieving data from Redshift using query or built-in Redshift UNLOAD support.

The connector supports the Windows versions in this article.

Tip

To achieve the best performance when copying large amounts of data from Redshift, consider using the built-in Redshift UNLOAD through Amazon S3. See the Use UNLOAD to copy data from Amazon Redshift section for details.

Prerequisites

If you are copying data to an on-premises data store using a Self-hosted Integration Runtime, grant the Integration Runtime (use the IP address of its machine) access to the Amazon Redshift cluster. See Authorize access to the cluster for instructions. For version 2.0, your self-hosted integration runtime must be version 5.60 or above.

If you are copying data to an Azure data store, see Azure Data Center IP Ranges for the Compute IP address and SQL ranges used by the Azure data centers.

If your data store is a managed cloud data service, you can use the Azure Integration Runtime. If the access is restricted to IPs that are approved in the firewall rules, you can add Azure Integration Runtime IPs to the allow list.

You can also use the managed virtual network integration runtime feature in Azure Data Factory to access the on-premises network without installing and configuring a self-hosted integration runtime.

Getting started

To perform the copy activity with a pipeline, you can use one of the following tools or SDKs:

- Copy Data tool

- Azure portal

- .NET SDK

- Python SDK

- Azure PowerShell

- REST API

- Azure Resource Manager template

Create a linked service to Amazon Redshift using UI

Use the following steps to create a linked service to Amazon Redshift in the Azure portal UI.

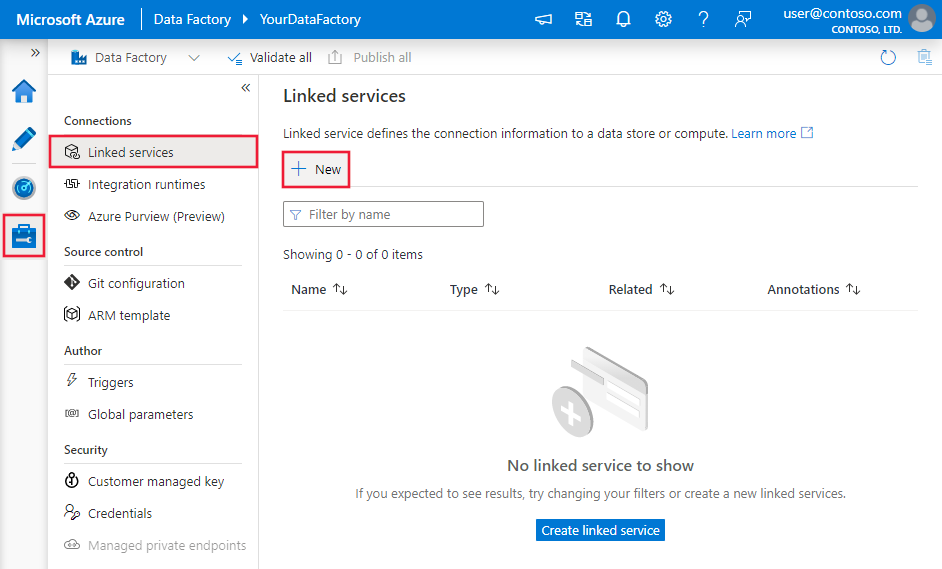

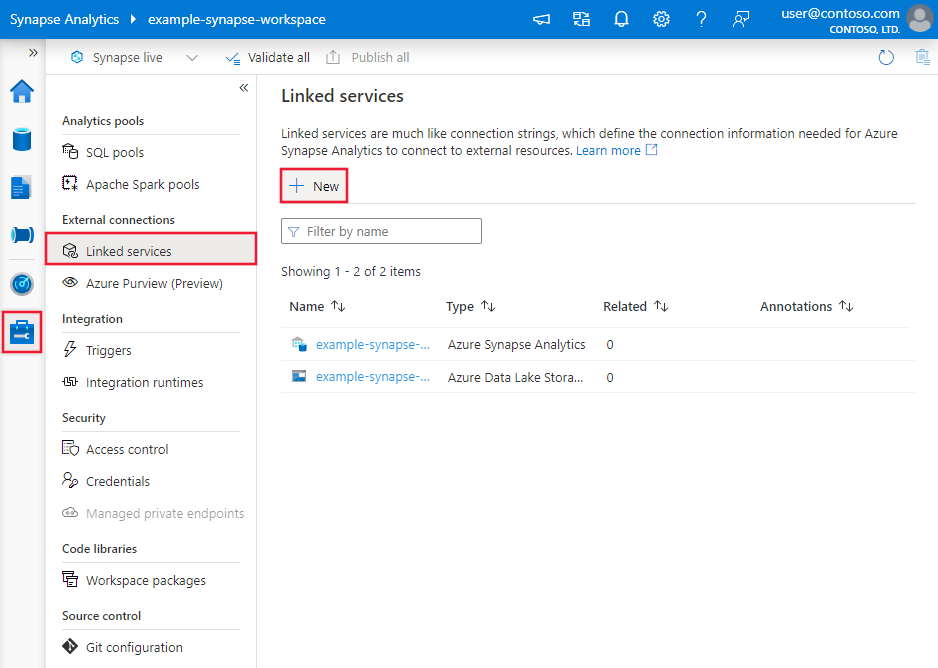

Browse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then click New:

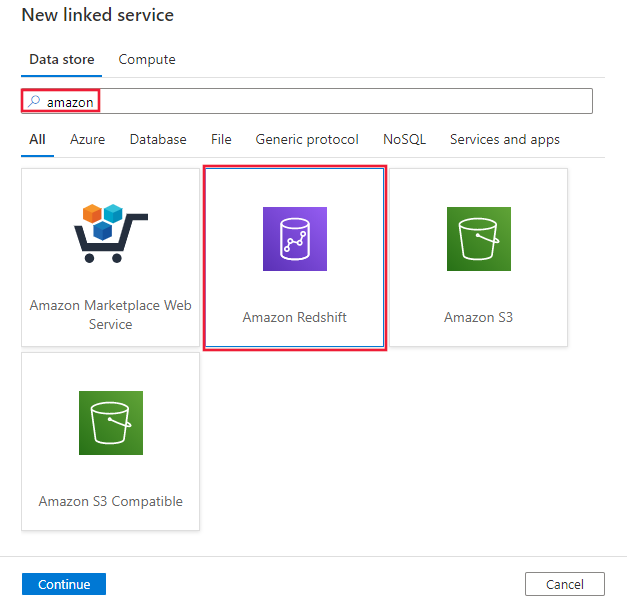

Search for Amazon and select the Amazon Redshift connector.

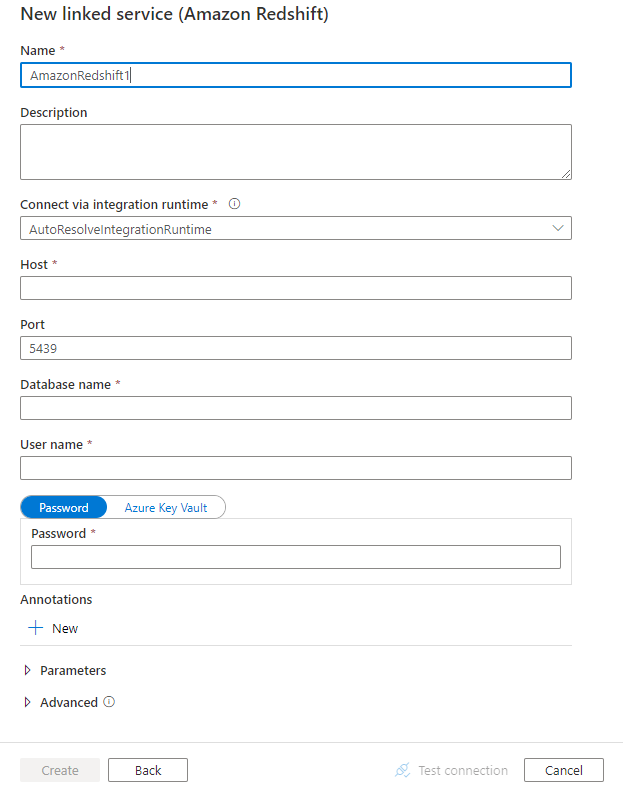

Configure the service details, test the connection, and create the new linked service.

Connector configuration details

The following sections provide details about properties that are used to define Data Factory entities specific to the Amazon Redshift connector.

Linked service properties

The following properties are supported for Amazon Redshift linked service:

| Property | Description | Required |
|---|---|---|
| type | The type property must be set to: AmazonRedshift | Yes |
| version | The version that you specify. Set it to 2.0 to use version 2.0. | Yes for version 2.0. |
| server | IP address or host name of the Amazon Redshift server. | Yes |
| port | The number of the TCP port that the Amazon Redshift server uses to listen for client connections. | No, default is 5439 |
| database | Name of the Amazon Redshift database. | Yes |
| username | Name of the user who has access to the database. | Yes |
| password | Password for the user account. Mark this field as a SecureString to store it securely, or reference a secret stored in Azure Key Vault. | Yes |
| sslmode | The SSL certificate verification mode to use when connecting to Amazon Redshift. This property is supported only in version 2.0. Options: verify-full (default): connect only using SSL, a trusted certificate authority, and a server name that matches the certificate; verify-ca: connect only using SSL and a trusted certificate authority; require: connect only using SSL; prefer: connect using SSL if available, otherwise connect without SSL; allow: connect without SSL by default, but use SSL if the server requires it; disable: connect without SSL. | No, default is verify-full |
| connectVia | The Integration Runtime to be used to connect to the data store. You can use Azure Integration Runtime or Self-hosted Integration Runtime (if your data store is located in a private network). If not specified, it uses the default Azure Integration Runtime. | No |
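If you generate linked service definitions from code instead of authoring JSON by hand, a small builder can enforce the table's rules. The following is a minimal sketch, assuming plain Python dictionaries serialized to JSON; the function `build_redshift_linked_service` is hypothetical and does not call any Azure API. It applies the documented defaults (port 5439, sslmode verify-full) and rejects sslmode values not listed in the table.

```python
import json

# sslmode values accepted by version 2.0, per the linked service properties table.
SSL_MODES = {"verify-full", "verify-ca", "require", "prefer", "allow", "disable"}


def build_redshift_linked_service(name, server, database, username, password,
                                  port=5439, sslmode="verify-full", ir_name=None):
    """Return a version 2.0 Amazon Redshift linked service definition as a dict.

    Hypothetical helper: it only assembles JSON and does not deploy anything.
    """
    if sslmode not in SSL_MODES:
        raise ValueError(f"unsupported sslmode: {sslmode!r}")
    type_properties = {
        "server": server,
        "database": database,
        "username": username,
        # Store the password as a SecureString, as the table recommends.
        "password": {"type": "SecureString", "value": password},
    }
    # Emit optional properties only when they differ from the service defaults.
    if port != 5439:
        type_properties["port"] = port
    if sslmode != "verify-full":
        type_properties["sslmode"] = sslmode
    properties = {"type": "AmazonRedshift", "version": "2.0",
                  "typeProperties": type_properties}
    if ir_name is not None:
        # Omitting connectVia means the default Azure Integration Runtime is used.
        properties["connectVia"] = {"referenceName": ir_name,
                                    "type": "IntegrationRuntimeReference"}
    return {"name": name, "properties": properties}


if __name__ == "__main__":
    ls = build_redshift_linked_service("AmazonRedshiftLinkedService",
                                       "<server name>", "<database name>",
                                       "<username>", "<password>",
                                       sslmode="require")
    print(json.dumps(ls, indent=4))
```

Leaving defaults out of the payload keeps the generated JSON close to the hand-written examples in this article.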

Note

Version 2.0 supports Azure Integration Runtime and Self-hosted Integration Runtime version 5.60 or above. Driver installation is no longer needed with Self-hosted Integration Runtime version 5.60 or above.

Example: version 2.0

```json
{
    "name": "AmazonRedshiftLinkedService",
    "properties": {
        "type": "AmazonRedshift",
        "version": "2.0",
        "typeProperties": {
            "server": "<server name>",
            "database": "<database name>",
            "username": "<username>",
            "password": {
                "type": "SecureString",
                "value": "<password>"
            }
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```

Example: version 1.0

```json
{
    "name": "AmazonRedshiftLinkedService",
    "properties": {
        "type": "AmazonRedshift",
        "typeProperties": {
            "server": "<server name>",
            "database": "<database name>",
            "username": "<username>",
            "password": {
                "type": "SecureString",
                "value": "<password>"
            }
        },
        "connectVia": {
            "referenceName": "<name of Integration Runtime>",
            "type": "IntegrationRuntimeReference"
        }
    }
}
```

Dataset properties

For a full list of sections and properties available for defining datasets, see the datasets article. This section provides a list of properties supported by Amazon Redshift dataset.

To copy data from Amazon Redshift, the following properties are supported:

| Property | Description | Required |
|---|---|---|
| type | The type property of the dataset must be set to: AmazonRedshiftTable | Yes |
| schema | Name of the schema. | No (if "query" in activity source is specified) |
| table | Name of the table. | No (if "query" in activity source is specified) |
| tableName | Name of the table with schema. This property is supported for backward compatibility. Use schema and table for new workloads. | No (if "query" in activity source is specified) |
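The Required column means that a table reference in the dataset and a query in the copy source are alternatives. A pre-flight check for that rule could look like the following minimal sketch. It assumes the dataset's schema, table, and tableName values sit under its typeProperties and that both objects are plain dictionaries shaped like this article's JSON; the function `has_read_target` is hypothetical.

```python
def has_read_target(dataset_type_properties, source):
    """Return True if a copy from Amazon Redshift has something to read.

    Hypothetical pre-flight check: a query in the AmazonRedshiftSource makes
    the dataset's table properties optional; otherwise the dataset must name
    a table, either as schema + table or as the legacy tableName.
    """
    if source.get("query"):
        return True
    if dataset_type_properties.get("schema") and dataset_type_properties.get("table"):
        return True
    # tableName (schema-qualified) is kept only for backward compatibility.
    return bool(dataset_type_properties.get("tableName"))


# Usage: the dataset example below has empty typeProperties, so it relies on a query.
print(has_read_target({}, {"type": "AmazonRedshiftSource",
                           "query": "select * from MyTable"}))
```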

Example

```json
{
    "name": "AmazonRedshiftDataset",
    "properties": {
        "type": "AmazonRedshiftTable",
        "typeProperties": {},
        "schema": [],
        "linkedServiceName": {
            "referenceName": "<Amazon Redshift linked service name>",
            "type": "LinkedServiceReference"
        }
    }
}
```

If you were using a RelationalTable typed dataset, it's still supported as-is, but we recommend that you use the new AmazonRedshiftTable dataset going forward.

Copy activity properties

For a full list of sections and properties available for defining activities, see the Pipelines article. This section provides a list of properties supported by Amazon Redshift source.

Amazon Redshift as source

To copy data from Amazon Redshift, set the source type in the copy activity to AmazonRedshiftSource. The following properties are supported in the copy activity source section:

| Property | Description | Required |
|---|---|---|
| type | The type property of the copy activity source must be set to: AmazonRedshiftSource | Yes |
| query | Use the custom query to read data. For example: select * from MyTable. | No (if the table is specified in the dataset) |
| redshiftUnloadSettings | Property group when using Amazon Redshift UNLOAD. | No |
| s3LinkedServiceName | Under redshiftUnloadSettings: the Amazon S3 linked service (of type "AmazonS3") to use as an interim store. | Yes if using UNLOAD |
| bucketName | Under redshiftUnloadSettings: the S3 bucket that stores the interim data. If not provided, the service generates it automatically. | Yes if using UNLOAD |
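The nesting in this table (two S3 properties inside redshiftUnloadSettings) is easy to get wrong by hand. The following minimal sketch builds the source block as a Python dictionary; `build_redshift_source` is a hypothetical helper, and it follows the Required column by treating bucketName as mandatory whenever UNLOAD is enabled.

```python
import json


def build_redshift_source(query, s3_linked_service=None, bucket_name=None):
    """Return an AmazonRedshiftSource block for a copy activity.

    Hypothetical helper. Passing an S3 linked service name turns on UNLOAD by
    adding redshiftUnloadSettings; bucketName is then required as well.
    """
    source = {"type": "AmazonRedshiftSource", "query": query}
    if s3_linked_service is not None:
        if not bucket_name:
            raise ValueError("bucketName is required when using UNLOAD")
        source["redshiftUnloadSettings"] = {
            # The S3 linked service is referenced by name, not embedded.
            "s3LinkedServiceName": {"referenceName": s3_linked_service,
                                    "type": "LinkedServiceReference"},
            "bucketName": bucket_name,
        }
    return source


if __name__ == "__main__":
    print(json.dumps({"source": build_redshift_source(
        "select * from MyTable", "AmazonS3LinkedService", "bucketForUnload")},
        indent=4))
```

Without the S3 arguments the helper returns a plain query source, which matches the non-UNLOAD path.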

Example: Amazon Redshift source in copy activity using UNLOAD

```json
"source": {
    "type": "AmazonRedshiftSource",
    "query": "<SQL query>",
    "redshiftUnloadSettings": {
        "s3LinkedServiceName": {
            "referenceName": "<Amazon S3 linked service>",
            "type": "LinkedServiceReference"
        },
        "bucketName": "bucketForUnload"
    }
}
```

To learn how to use UNLOAD to copy data from Amazon Redshift efficiently, see the next section.

Use UNLOAD to copy data from Amazon Redshift

UNLOAD is a mechanism provided by Amazon Redshift that unloads the results of a query to one or more files on Amazon Simple Storage Service (Amazon S3). Amazon recommends it for copying large data sets from Redshift.

Example: copy data from Amazon Redshift to Azure Synapse Analytics using UNLOAD, staged copy and PolyBase

In this sample use case, the copy activity unloads data from Amazon Redshift to Amazon S3 as configured in "redshiftUnloadSettings", copies the data from Amazon S3 to Azure Blob storage as specified in "stagingSettings", and finally uses PolyBase to load the data into Azure Synapse Analytics. The copy activity handles all interim formats.

```json
"activities": [
    {
        "name": "CopyFromAmazonRedshiftToSQLDW",
        "type": "Copy",
        "inputs": [
            {
                "referenceName": "AmazonRedshiftDataset",
                "type": "DatasetReference"
            }
        ],
        "outputs": [
            {
                "referenceName": "AzureSQLDWDataset",
                "type": "DatasetReference"
            }
        ],
        "typeProperties": {
            "source": {
                "type": "AmazonRedshiftSource",
                "query": "select * from MyTable",
                "redshiftUnloadSettings": {
                    "s3LinkedServiceName": {
                        "referenceName": "AmazonS3LinkedService",
                        "type": "LinkedServiceReference"
                    },
                    "bucketName": "bucketForUnload"
                }
            },
            "sink": {
                "type": "SqlDWSink",
                "allowPolyBase": true
            },
            "enableStaging": true,
            "stagingSettings": {
                "linkedServiceName": {
                    "referenceName": "AzureStorageLinkedService",
                    "type": "LinkedServiceReference"
                },
                "path": "adfstagingcopydata"
            },
            "dataIntegrationUnits": 32
        }
    }
]
```
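When the same Redshift to Azure Synapse pattern is repeated for many tables, generating the activity from parameters can reduce copy-paste errors. The following is a minimal sketch, assuming you assemble pipeline JSON in Python; `build_staged_copy_activity` is a hypothetical helper, and it writes stagingSettings.linkedServiceName as a LinkedServiceReference object, the same shape used for the other linked service references in this article.

```python
import json


def build_staged_copy_activity(name, input_dataset, output_dataset, query,
                               s3_linked_service, unload_bucket,
                               staging_linked_service, staging_path, diu=32):
    """Assemble a Redshift -> S3 -> Blob -> Azure Synapse copy activity.

    Hypothetical helper; names mirror the JSON example and nothing is deployed.
    """
    def ref(ref_name, kind):
        return {"referenceName": ref_name, "type": kind}

    return {
        "name": name,
        "type": "Copy",
        "inputs": [ref(input_dataset, "DatasetReference")],
        "outputs": [ref(output_dataset, "DatasetReference")],
        "typeProperties": {
            # Hop 1: Redshift UNLOAD writes the query result to the S3 bucket.
            "source": {
                "type": "AmazonRedshiftSource",
                "query": query,
                "redshiftUnloadSettings": {
                    "s3LinkedServiceName": ref(s3_linked_service, "LinkedServiceReference"),
                    "bucketName": unload_bucket,
                },
            },
            # Hop 3: PolyBase loads the staged data into Azure Synapse Analytics.
            "sink": {"type": "SqlDWSink", "allowPolyBase": True},
            # Hop 2: the copy activity stages the data in Azure Blob storage.
            "enableStaging": True,
            "stagingSettings": {
                "linkedServiceName": ref(staging_linked_service, "LinkedServiceReference"),
                "path": staging_path,
            },
            "dataIntegrationUnits": diu,
        },
    }


if __name__ == "__main__":
    activity = build_staged_copy_activity(
        "CopyFromAmazonRedshiftToSQLDW", "AmazonRedshiftDataset",
        "AzureSQLDWDataset", "select * from MyTable", "AmazonS3LinkedService",
        "bucketForUnload", "AzureStorageLinkedService", "adfstagingcopydata")
    print(json.dumps({"activities": [activity]}, indent=4))
```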

Data type mapping for Amazon Redshift

When you copy data from Amazon Redshift, the following mappings apply from Amazon Redshift's data types to the internal data types used by the service. To learn about how the copy activity maps the source schema and data type to the sink, see Schema and data type mappings.

| Amazon Redshift data type | Interim service data type (for version 2.0) | Interim service data type (for version 1.0) |
|---|---|---|
| BIGINT | Int64 | Int64 |
| BOOLEAN | Boolean | String |
| CHAR | String | String |
| DATE | DateTime | DateTime |
| DECIMAL (Precision <= 28) | Decimal | Decimal |
| DECIMAL (Precision > 28) | String | String |
| DOUBLE PRECISION | Double | Double |
| INTEGER | Int32 | Int32 |
| REAL | Single | Single |
| SMALLINT | Int16 | Int16 |
| TEXT | String | String |
| TIMESTAMP | DateTime | DateTime |
| VARCHAR | String | String |

Lookup activity properties

To learn details about the properties, check Lookup activity.

Amazon Redshift connector lifecycle and upgrade

The following table shows the release stage and change logs for different versions of the Amazon Redshift connector:

| Version | Release stage | Change log |
|---|---|---|
| Version 1.0 | End of support announced | / |
| Version 2.0 | GA version available | • Supports Azure Integration Runtime and Self-hosted Integration Runtime version 5.60 or above. Driver installation is no longer needed with Self-hosted Integration Runtime version 5.60 or above. • BOOLEAN is read as Boolean data type. • Supports sslmode in the linked service. |

Upgrade the Amazon Redshift connector from version 1.0 to version 2.0

On the Edit linked service page, select version 2.0 and configure the linked service by referring to Linked service properties.

The data type mapping for the Amazon Redshift linked service version 2.0 is different from that of version 1.0. To learn the latest data type mapping, see Data type mapping for Amazon Redshift.

Use a self-hosted integration runtime version 5.60 or above. Driver installation is no longer needed with Self-hosted Integration Runtime version 5.60 or above.
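If you manage linked service definitions as JSON files, the upgrade steps can be partly scripted. The following is a minimal sketch, assuming self-hosted integration runtime versions are dotted numeric strings such as 5.60.9123.1 and that linked services are loaded as Python dictionaries; `shir_supports_v2` and `upgrade_linked_service` are hypothetical helpers, and you still need to review the data type mapping changes yourself.

```python
import copy


def parse_version(v):
    """Turn "5.60.9123.1" into (5, 60, 9123, 1) so versions compare numerically."""
    return tuple(int(part) for part in v.split("."))


def shir_supports_v2(shir_version):
    """Version 2.0 needs a self-hosted integration runtime at 5.60 or above."""
    # Compare numerically: a plain string comparison would rank "5.9" above "5.60".
    return parse_version(shir_version)[:2] >= (5, 60)


def upgrade_linked_service(ls, sslmode=None):
    """Return a copy of a version 1.0 Redshift linked service set to version 2.0."""
    upgraded = copy.deepcopy(ls)  # leave the original definition untouched
    props = upgraded["properties"]
    props["version"] = "2.0"
    if sslmode is not None:
        # Optional; version 2.0 defaults to verify-full when sslmode is omitted.
        props["typeProperties"]["sslmode"] = sslmode
    return upgraded


if __name__ == "__main__":
    v1 = {"name": "AmazonRedshiftLinkedService",
          "properties": {"type": "AmazonRedshift",
                         "typeProperties": {"server": "<server name>"}}}
    print(shir_supports_v2("5.60.9123.1"), upgrade_linked_service(v1))
```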

Related content

For a list of data stores supported as sources and sinks by the copy activity, see supported data stores.