APPLIES TO: Azure Data Factory, Azure Synapse Analytics

Tip

Data Factory in Microsoft Fabric is the next generation of Azure Data Factory, with a simpler architecture, built-in AI, and new features. If you're new to data integration, start with Fabric Data Factory. Existing ADF workloads can upgrade to Fabric to access new capabilities across data science, real-time analytics, and reporting.

The If Condition activity works like an if statement in a programming language. It runs one set of activities when the condition evaluates to true and another set of activities when the condition evaluates to false.

Create an If Condition activity with UI

To use an If Condition activity in a pipeline, complete the following steps:

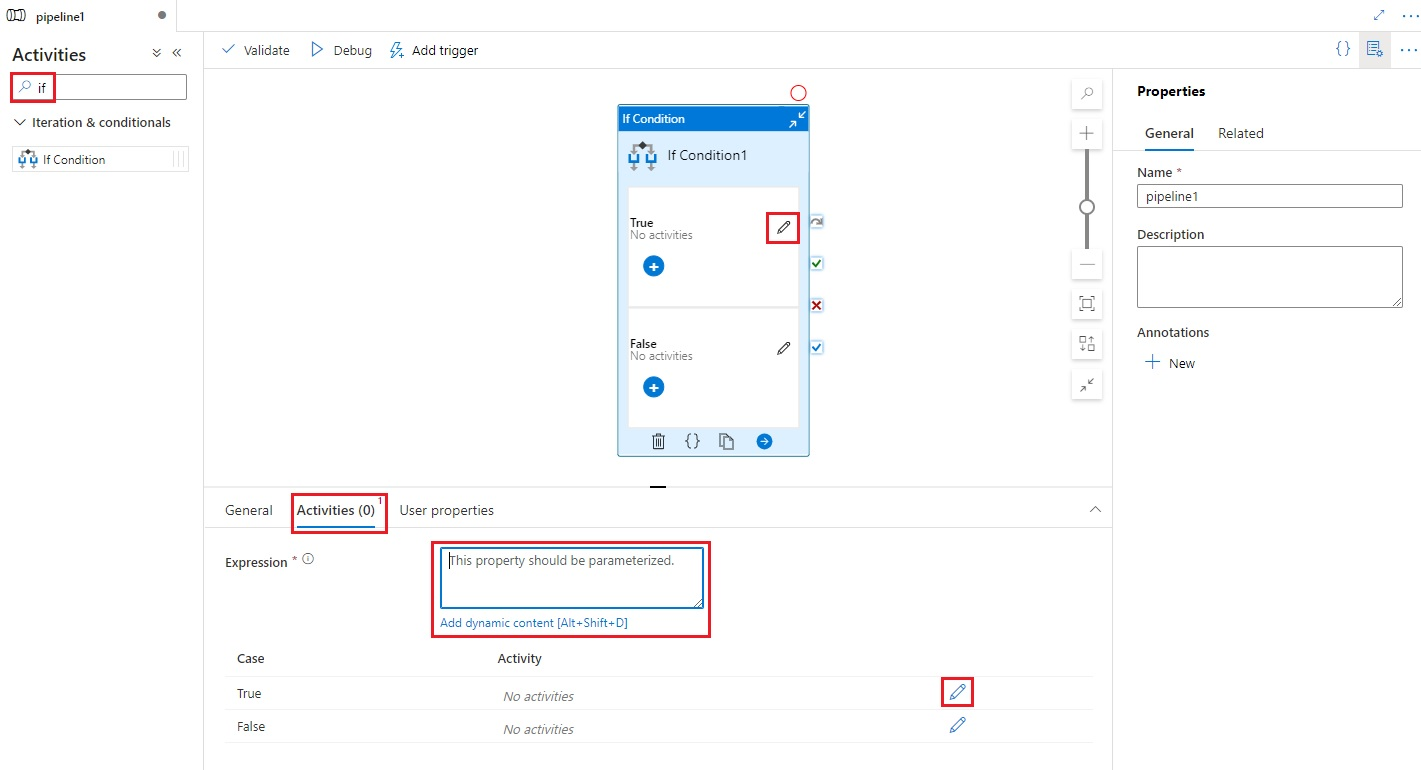

1. Search for If in the pipeline Activities pane, and drag an If Condition activity to the pipeline canvas.

2. If it is not already selected, select the new If Condition activity on the canvas, and then select its Activities tab to edit its details.

3. Enter an expression that returns a Boolean true or false value. The expression can be any combination of dynamic expressions, functions, system variables, or outputs from other activities.

4. Select the Edit Activities buttons on the Activities tab for the If Condition, or directly from the If Condition on the pipeline canvas, to add the activities that run when the expression evaluates to true or false.
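The expression entered in the steps above can combine functions with pipeline parameters and the outputs of earlier activities. The fragment below is a minimal sketch of such an expression, not taken from the original sample. It assumes a hypothetical Get Metadata activity named `CheckInputFolder` that runs before the If Condition and requests the `exists` field, and a pipeline parameter named `inputPath`.

```json
{
    "expression": {
        "value": "@and(equals(activity('CheckInputFolder').output.exists, true), not(empty(pipeline().parameters.inputPath)))",
        "type": "Expression"
    }
}
```

Under these assumptions, the condition is true only when the folder exists and the `inputPath` parameter is not empty.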

Syntax

{
    "name": "<Name of the activity>",
    "type": "IfCondition",
    "typeProperties": {
        "expression": {
            "value": "<expression that evaluates to true or false>",
            "type": "Expression"
        },
        "ifTrueActivities": [
            {
                "<Activity 1 definition>"
            },
            {
                "<Activity 2 definition>"
            },
            {
                "<Activity N definition>"
            }
        ],
        "ifFalseActivities": [
            {
                "<Activity 1 definition>"
            },
            {
                "<Activity 2 definition>"
            },
            {
                "<Activity N definition>"
            }
        ]
    }
}
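As a concrete instance of the syntax above, the following sketch fills in one activity per branch. The activity names, the `runFullLoad` pipeline parameter, and the use of Wait activities as stand-ins for real work are illustrative assumptions, not part of the original sample.

```json
{
    "name": "CheckRunMode",
    "type": "IfCondition",
    "typeProperties": {
        "expression": {
            "value": "@bool(pipeline().parameters.runFullLoad)",
            "type": "Expression"
        },
        "ifTrueActivities": [
            {
                "name": "WaitBeforeFullLoad",
                "type": "Wait",
                "typeProperties": {
                    "waitTimeInSeconds": 5
                }
            }
        ],
        "ifFalseActivities": [
            {
                "name": "WaitBeforeIncrementalLoad",
                "type": "Wait",
                "typeProperties": {
                    "waitTimeInSeconds": 1
                }
            }
        ]
    }
}
```

Only one of the two branches runs for a given evaluation of the expression.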

Type properties

| Property | Description | Allowed values | Required |
|---|---|---|---|
| name | Name of the if-condition activity. | String | Yes |
| type | Must be set to IfCondition. | String | Yes |
| expression | Expression that must evaluate to true or false. | Expression with result type boolean | Yes |
| ifTrueActivities | Set of activities that are executed when the expression evaluates to true. | Array | Yes |
| ifFalseActivities | Set of activities that are executed when the expression evaluates to false. | Array | Yes |

Example

The pipeline in this example copies data from an input folder to an output folder. The pipeline parameter routeSelection determines the output folder: if the value of routeSelection is true, the data is copied to outputPath1; if the value is false, the data is copied to outputPath2.

Note

This section provides JSON definitions and sample PowerShell commands to run the pipeline. For a walkthrough with step-by-step instructions to create a pipeline by using Azure PowerShell and JSON definitions, see tutorial: create a data factory by using Azure PowerShell.

Pipeline with IF-Condition activity (Adfv2QuickStartPipeline.json)

{
    "name": "Adfv2QuickStartPipeline",
    "properties": {
        "activities": [
            {
                "name": "MyIfCondition",
                "type": "IfCondition",
                "typeProperties": {
                    "expression": {
                        "value": "@bool(pipeline().parameters.routeSelection)",
                        "type": "Expression"
                    },
                    "ifTrueActivities": [
                        {
                            "name": "CopyFromBlobToBlob1",
                            "type": "Copy",
                            "inputs": [
                                {
                                    "referenceName": "BlobDataset",
                                    "parameters": {
                                        "path": "@pipeline().parameters.inputPath"
                                    },
                                    "type": "DatasetReference"
                                }
                            ],
                            "outputs": [
                                {
                                    "referenceName": "BlobDataset",
                                    "parameters": {
                                        "path": "@pipeline().parameters.outputPath1"
                                    },
                                    "type": "DatasetReference"
                                }
                            ],
                            "typeProperties": {
                                "source": {
                                    "type": "BlobSource"
                                },
                                "sink": {
                                    "type": "BlobSink"
                                }
                            }
                        }
                    ],
                    "ifFalseActivities": [
                        {
                            "name": "CopyFromBlobToBlob2",
                            "type": "Copy",
                            "inputs": [
                                {
                                    "referenceName": "BlobDataset",
                                    "parameters": {
                                        "path": "@pipeline().parameters.inputPath"
                                    },
                                    "type": "DatasetReference"
                                }
                            ],
                            "outputs": [
                                {
                                    "referenceName": "BlobDataset",
                                    "parameters": {
                                        "path": "@pipeline().parameters.outputPath2"
                                    },
                                    "type": "DatasetReference"
                                }
                            ],
                            "typeProperties": {
                                "source": {
                                    "type": "BlobSource"
                                },
                                "sink": {
                                    "type": "BlobSink"
                                }
                            }
                        }
                    ]
                }
            }
        ],
        "parameters": {
            "inputPath": {
                "type": "String"
            },
            "outputPath1": {
                "type": "String"
            },
            "outputPath2": {
                "type": "String"
            },
            "routeSelection": {
                "type": "String"
            }
        }
    }
}

Another example of an expression:

"expression": {
    "value": "@equals(pipeline().parameters.routeSelection,1)",
    "type": "Expression"
}
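The comparison above uses the numeric literal 1, while the sample pipeline declares routeSelection as a String. The fragment below is a sketch of a variant that compares against a string literal instead, so that both arguments to `equals` have the same type. It is an illustration under that assumption, not part of the original sample.

```json
{
    "expression": {
        "value": "@equals(pipeline().parameters.routeSelection, '1')",
        "type": "Expression"
    }
}
```

With this variant, passing "1" as routeSelection would select the ifTrueActivities branch, and any other string would select ifFalseActivities.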

Azure Storage linked service (AzureStorageLinkedService.json)

{
    "name": "AzureStorageLinkedService",
    "properties": {
        "type": "AzureStorage",
        "typeProperties": {
            "connectionString": "DefaultEndpointsProtocol=https;AccountName=<Azure Storage account name>;AccountKey=<Azure Storage account key>"
        }
    }
}

Parameterized Azure Blob dataset (BlobDataset.json)

The dataset takes its folderPath from the path parameter. The pipeline passes inputPath for the copy source, and either outputPath1 or outputPath2 for the copy sink.

{
    "name": "BlobDataset",
    "properties": {
        "type": "AzureBlob",
        "typeProperties": {
            "folderPath": {
                "value": "@{dataset().path}",
                "type": "Expression"
            }
        },
        "linkedServiceName": {
            "referenceName": "AzureStorageLinkedService",
            "type": "LinkedServiceReference"
        },
        "parameters": {
            "path": {
                "type": "String"
            }
        }
    }
}

Pipeline parameter JSON (PipelineParameters.json)

{
    "inputPath": "adftutorial/input",
    "outputPath1": "adftutorial/outputIf",
    "outputPath2": "adftutorial/outputElse",
    "routeSelection": "false"
}
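With the parameter file above, routeSelection is "false", so the pipeline's `@bool(...)` expression evaluates to false and CopyFromBlobToBlob2 copies the data to adftutorial/outputElse. To exercise the other branch, a variant parameter file could set routeSelection to "true". The sketch below shows such a file; any file name used for it (for example, PipelineParametersTrue.json) is hypothetical.

```json
{
    "inputPath": "adftutorial/input",
    "outputPath1": "adftutorial/outputIf",
    "outputPath2": "adftutorial/outputElse",
    "routeSelection": "true"
}
```

With this file, CopyFromBlobToBlob1 would run and write to adftutorial/outputIf.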

PowerShell commands

Note

We recommend that you use the Azure Az PowerShell module to interact with Azure. To get started, see Install Azure PowerShell. To learn how to migrate to the Az PowerShell module, see Migrate Azure PowerShell from AzureRM to Az.

These commands assume that you have saved the JSON files into the folder: C:\ADF.

# Sign in and select the subscription that contains the data factory.
Connect-AzAccount
Select-AzSubscription "<Your subscription name>"

$resourceGroupName = "<Resource Group Name>"
$dataFactoryName = "<Data Factory Name. Must be globally unique>"

# Remove any existing data factory with this name, and then create it again.
Remove-AzDataFactoryV2 $dataFactoryName -ResourceGroupName $resourceGroupName -Force
Set-AzDataFactoryV2 -ResourceGroupName $resourceGroupName -Location "East US" -Name $dataFactoryName

# Deploy the linked service, dataset, and pipeline from the JSON files.
Set-AzDataFactoryV2LinkedService -DataFactoryName $dataFactoryName -ResourceGroupName $resourceGroupName -Name "AzureStorageLinkedService" -DefinitionFile "C:\ADF\AzureStorageLinkedService.json"
Set-AzDataFactoryV2Dataset -DataFactoryName $dataFactoryName -ResourceGroupName $resourceGroupName -Name "BlobDataset" -DefinitionFile "C:\ADF\BlobDataset.json"
Set-AzDataFactoryV2Pipeline -DataFactoryName $dataFactoryName -ResourceGroupName $resourceGroupName -Name "Adfv2QuickStartPipeline" -DefinitionFile "C:\ADF\Adfv2QuickStartPipeline.json"

# Start a pipeline run with the values from the parameter file.
$runId = Invoke-AzDataFactoryV2Pipeline -DataFactoryName $dataFactoryName -ResourceGroupName $resourceGroupName -PipelineName "Adfv2QuickStartPipeline" -ParameterFile "C:\ADF\PipelineParameters.json"

# Poll every 30 seconds until the run is no longer in progress.
while ($True) {
    $run = Get-AzDataFactoryV2PipelineRun -ResourceGroupName $resourceGroupName -DataFactoryName $dataFactoryName -PipelineRunId $runId

    if ($run) {
        if ($run.Status -ne 'InProgress') {
            Write-Host "Pipeline run finished. The status is: " $run.Status -ForegroundColor "Yellow"
            $run
            break
        }
        Write-Host "Pipeline is running...status: InProgress" -ForegroundColor "Yellow"
    }

    Start-Sleep -Seconds 30
}

# List the activity runs that belong to this pipeline run.
Write-Host "Activity run details:" -ForegroundColor "Yellow"
$result = Get-AzDataFactoryV2ActivityRun -DataFactoryName $dataFactoryName -ResourceGroupName $resourceGroupName -PipelineRunId $runId -RunStartedAfter (Get-Date).AddMinutes(-30) -RunStartedBefore (Get-Date).AddMinutes(30)
$result

Write-Host "Activity 'Output' section:" -ForegroundColor "Yellow"
$result.Output -join "`r`n"

# PowerShell uses the backtick escape for a newline; "\n" would be printed literally.
Write-Host "`nActivity 'Error' section:" -ForegroundColor "Yellow"
$result.Error -join "`r`n"

Related content

See other supported control flow activities: